Retail Strategy Optimization AI Agent

Transform Retail Margins with Intelligent Promotion Management through AI-Agents - Built on Snowflake Cortex

Retail Strategy Optimization AI Agent Overview

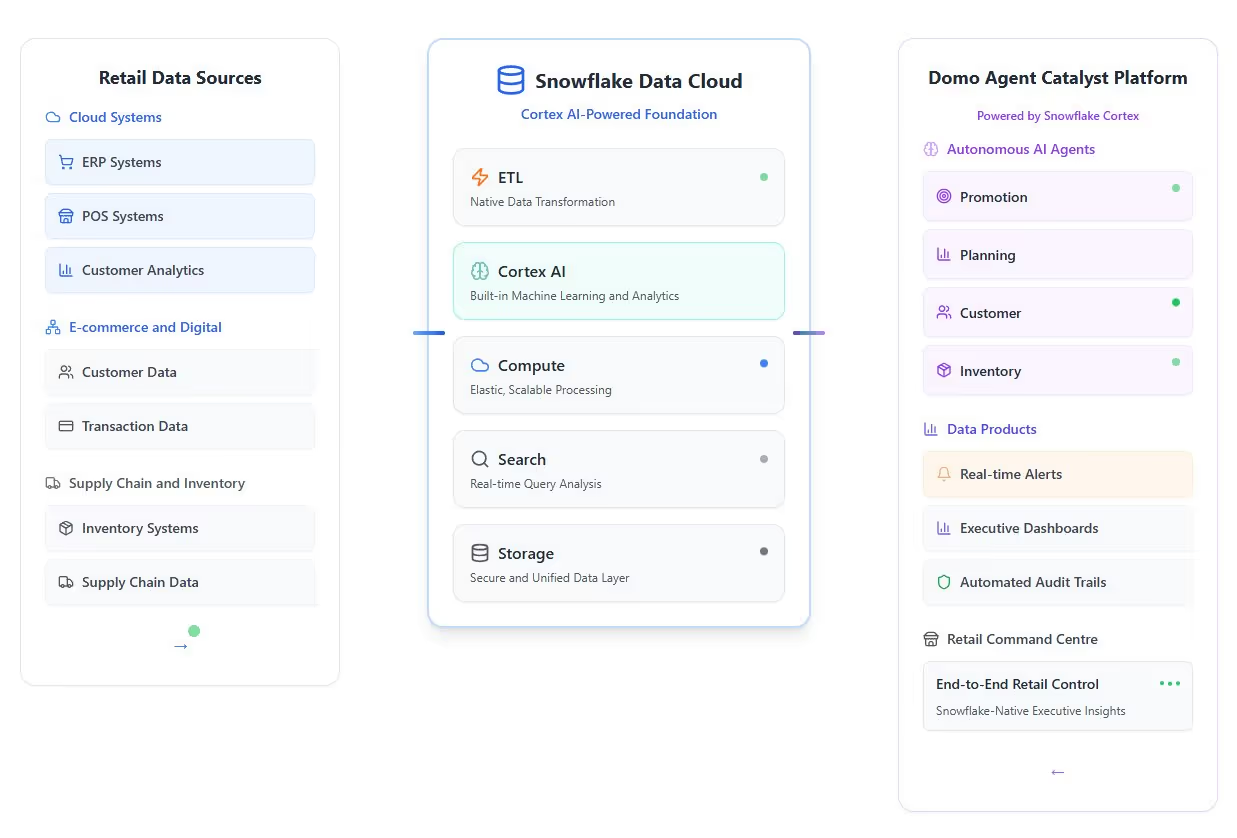

The Retail Strategy Optimization AI Agent (also known as PromoGenie) transforms retail promotion management by providing autonomous decision-making and real-time optimization. Powered by advanced analytics, the agent monitors customer interactions to optimize pricing and maximize margins. This intelligent solution allows marketing leaders to move from reactive to proactive planning, ensuring measurable results across every campaign.

Problem Addressed

Traditional retail promotion management is often fragmented and too slow to keep pace with dynamic market conditions:

- Manual Bottlenecks: Managing promotions across spreadsheets is time-consuming and prone to administrative errors.

- Inaccurate Forecasting: Relying on intuition for budget allocation often leads to overruns or missed revenue opportunities.

- Fragmented Data: Disconnected customer signals make it difficult to unify marketing efforts across different channels.

- Static Pricing: Delayed responses to competitor pricing or shifts in consumer behavior result in lost sales and eroded margins.

What the Agent Does

The agent acts as an autonomous retail strategist that handles end-to-end promotion workflows:

- Identifies Opportunities: Uses machine learning to micro-segment customers and identify high-value cross-selling opportunities.

- Optimizes Pricing: Adjusts prices automatically based on real-time demand, inventory levels, and competitor activity.

- Targets Customers Intelligently: Groups subscribers based on behavior to deliver personalized offers and journey-based triggers.

- Simulates Scenarios: Performs "what-if" modeling for price adjustments and promotional displays to predict outcomes before execution.

- Triggers Automated Actions: Directly executes replenishment tasks or promotional pivots across e-commerce and physical channels.

Benefits

- Maximized Campaign ROI: Boost profitability through data-driven lead scoring and optimized investment efficiency.

- Agility in Dynamic Markets: Detect shifts in consumer behavior and pivot campaigns in seconds rather than days.

- Improved Customer Engagement: Drive loyalty by delivering personalized experiences that match individual intent and behavior.

- Reduced Operational Overhead: Automate repetitive administrative tasks, allowing teams to focus on high-level innovation.

Standout Features

- Autonomous Decision-Making: Moves beyond predefined rules to act intelligently based on real-time environmental cues.

- Real-Time Margin Protection: Monitors margin thresholds to ensure discounts never compromise overall profitability.

- Extensible AI Architecture: Seamlessly integrates with existing CRM, ERP, and Snowflake infrastructure for rapid deployment.

- Dynamic Scenario Modeling: Allows users to query supply chain and loyalty data in natural language to test new strategies.

- Cross-Channel Coordination: Orchestrates frictionless journeys across web, mobile, and in-store touchpoints.

Who This Agent Is For

This agent is built for retail executives, marketing directors, and store operations leads who need to scale their decision-making.

Ideal for:

- Marketing Leaders: Professionals who need real-time visibility into customer behavior to justify campaign spend.

- Merchandisers: Teams focused on shelf optimization and reducing surplus inventory through targeted markdowns.

- E-commerce Managers: Leaders looking to unify fragmented data and automate high-frequency price adjustments.

- Retail Operations Leads: Staff responsible for managing multi-channel promotions and workforce allocation in a fast-paced environment.

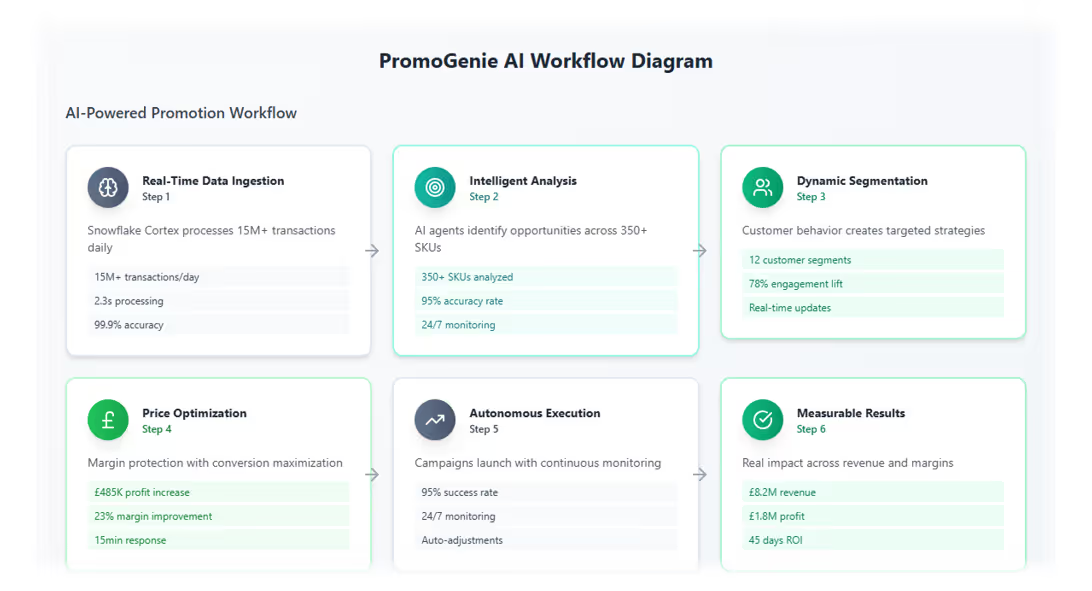

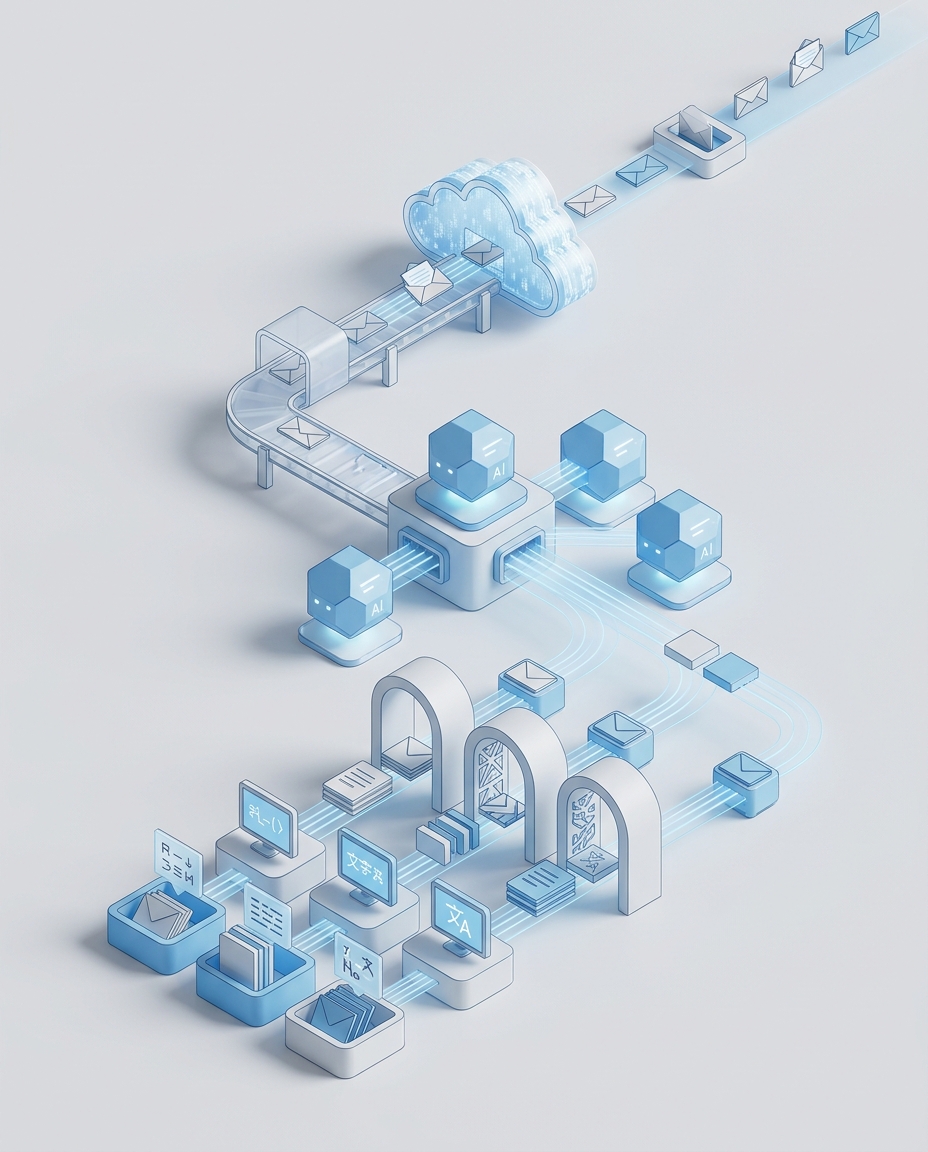

How it works

Manufacturing Process Transformation AI Agent

Empowering Manufacturing with Proactive Manufacturing Decision-Making - Built on Snowflake Cortex

Intelligent AI for Modern Manufacturing Operations

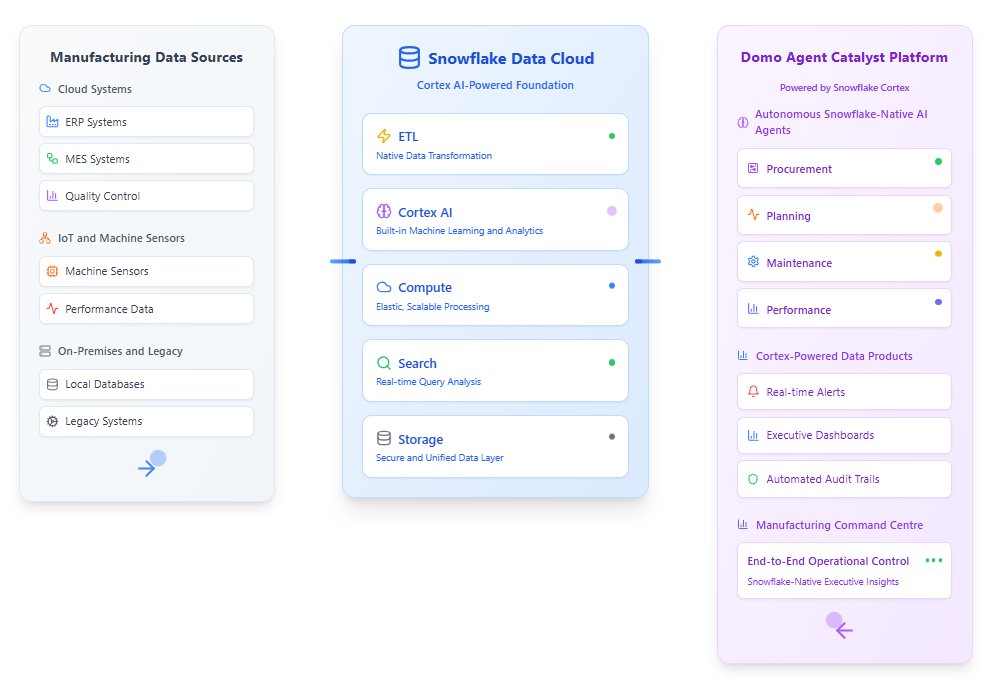

The Manufacturing Process Transformation AI Agent helps manufacturers modernize and optimize production by turning operational data into real time, actionable intelligence. Built on Domo’s AI Agent Catalyst Platform with secure Snowflake integration, this agent continuously monitors production environments, predicts issues before they occur, and recommends targeted actions to improve efficiency, quality, and profitability.

Instead of relying on periodic reports or manual analysis, the agent acts as a centralized decision engine across your production ecosystem. It connects data from machines, sensors, maintenance systems, and supply chain tools to help teams reduce downtime, improve margins, and drive continuous operational improvement.

Benefits

- Reduced downtime

Predict equipment failures before they happen using continuous monitoring and AI driven maintenance recommendations. - Improved operational efficiency

Optimize production schedules, labor allocation, and energy usage based on real time conditions. - Stronger quality control

Detect process deviations earlier by identifying patterns linked to defects or inconsistencies. - Higher margins

Surface cost saving opportunities and efficiency gains that directly impact profitability. - Smarter supply chain alignment

Forecast material needs and adjust production plans based on supply availability and constraints. - Transparent decision making

Every AI recommendation includes a clear explanation and expected business impact. - Continuous improvement over time

The agent learns from outcomes and adapts as your operations evolve.

Why Use AI for Manufacturing Transformation?

Traditional manufacturing optimization depends on delayed analysis and manual interpretation of complex operational data. That approach makes it difficult to respond quickly to emerging issues or simulate the impact of changes before acting.

AI excels at processing large volumes of production data across multiple systems at once. This agent continuously evaluates machine performance, process signals, and historical trends to detect subtle patterns that human teams often miss. Over time, it builds a more accurate operational model that improves predictions and recommendations.

Unlike static dashboards, the Manufacturing Process Transformation AI Agent proactively identifies improvement opportunities, recommends specific actions, and quantifies expected outcomes while maintaining full auditability and governance.

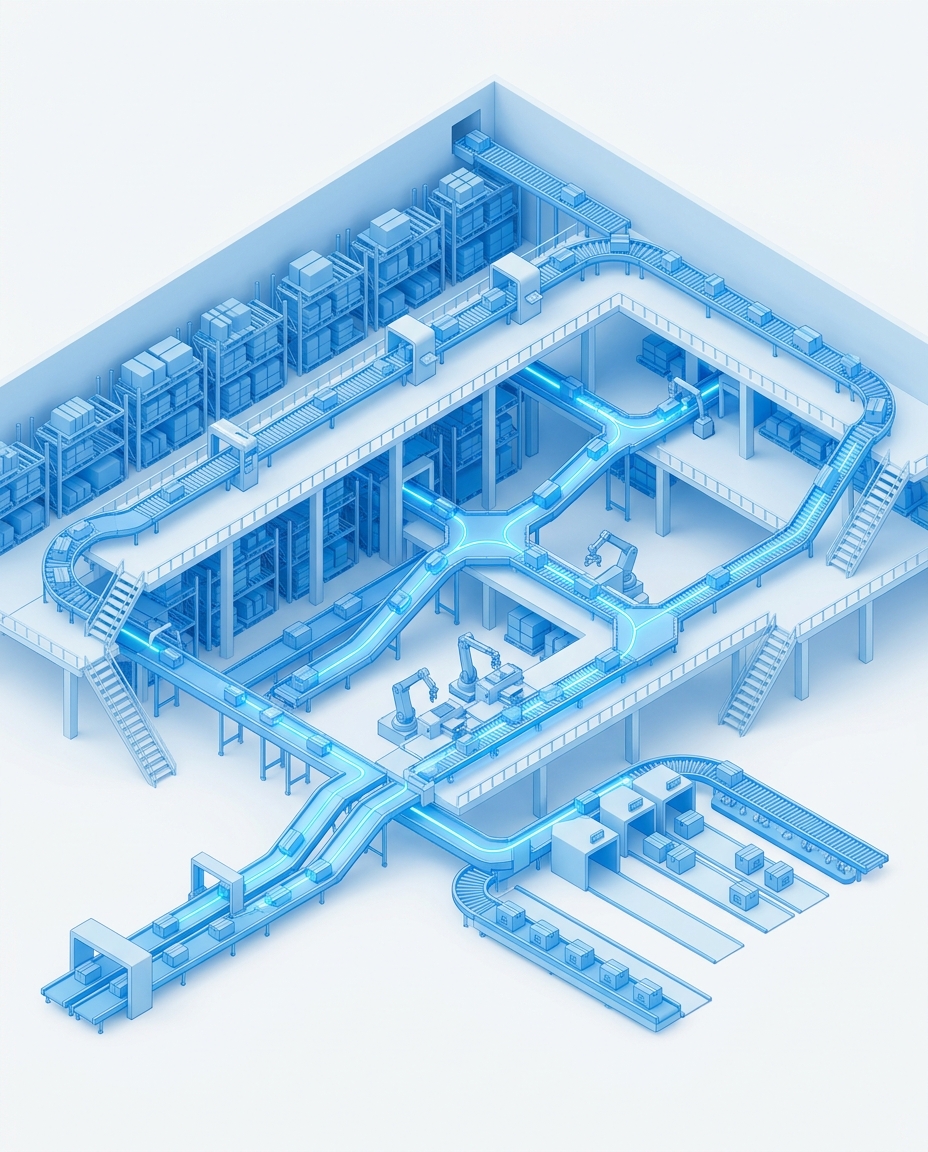

How It Works

Who This Agent Is For

This agent is designed for teams who want to modernize manufacturing operations with intelligent, data driven decision making.

It is ideal for organizations looking to:

- Reduce unplanned downtime and maintenance costs

- Improve production efficiency across multiple lines or facilities

- Detect quality issues earlier in the manufacturing process

- Align production planning with real world supply chain conditions

- Move from reactive reporting to proactive operational optimization

Ideal for: manufacturing operations leaders, plant managers, industrial engineers, maintenance teams, supply chain managers, and continuous improvement teams.

Competitive Content Intelligence AI Agent

Accelerate internal and external research, content strategy, and asset deployment through your marketing channels

Streamline Competitive Content Creation

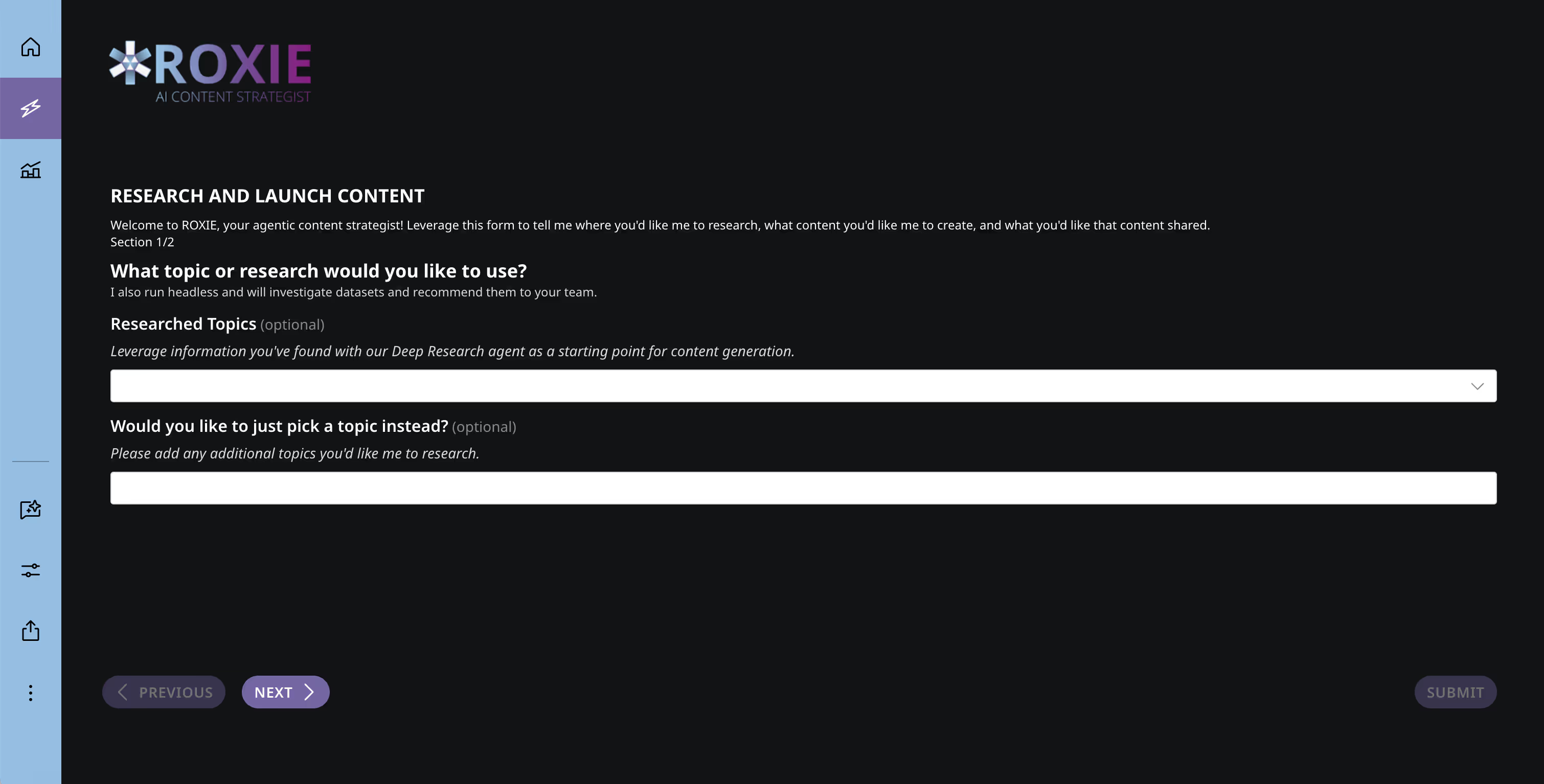

Competitive intelligence is hard to scale and even harder to keep consistent in fast-moving markets. Roxie, your AI Content Strategist, continuously monitors internal CRM data alongside external sources like competitor websites and analyst reports to surface actionable insights.

Roxie helps teams understand where you’re winning or losing deals, why it’s happening, and how to improve your go-to-market messaging—so content stays relevant, differentiated, and aligned to real market signals.

How Roxie Works

Roxie uses purpose-built AI agents to handle every stage of the content lifecycle—from research to publication—while keeping humans in control.

It combines:

- Internal data such as CRM insights, deal notes, and performance signals

- External intelligence including competitor content, analyst coverage, and market trends

The result is content that reflects both what the market is saying and what your data proves.

Key Benefits

- End-to-end content intelligence

Purpose-built agents support deep research, strategy development, SEO optimization, content authoring, tagging, and categorization. - Data-driven topic discovery

Leverages proprietary internal data and publicly available external sources to identify high-impact content opportunities. - Human-in-the-loop quality control

Built-in approvals and integrations ensure tone, accuracy, and brand voice stay consistent. - Seamless publishing

Automatically pushes finalized copy, metadata, and assets to your preferred content management system (CMS).

Powered by Deep Research

Roxie can leverage Domo’s Deep Research agent or focus on a specific topic of your choice to generate competitive insights and content recommendations tailored to your goals.

Built for Scale

Roxie can automatically translate content and be extended to publish across multiple channels, helping teams scale global content efforts without sacrificing quality or consistency.

Who This Agent Is For

The Competitive Content Intelligence AI Agent is designed for teams that need faster, more consistent competitive insights to power better content and messaging.

- Product Marketing Teams

Turn win-loss data and competitive signals into sharper positioning, clearer differentiation, and stronger go-to-market narratives. - Content and Editorial Teams

Identify high-impact topics, create content aligned to real buyer questions, and maintain consistency across formats and channels. - SEO and Growth Teams

Discover competitor gaps, prioritize keywords and topics, and optimize content based on performance data and market demand. - Sales Enablement Teams

Create competitive content, battlecards, and supporting assets that reflect what prospects are actually responding to in deals. - Enterprise Marketing Organizations

Scale competitive intelligence and content creation across products, regions, and teams without sacrificing accuracy or quality.

Sales Incentive & Gamification AI Agent

Real-time sales incentive platform tracking multiple parallel programs with live leaderboards, tier progression, sweep entries, and manager-created bonus contests.

Three programs. Twenty-six reps. One leaderboard that everyone actually looks at.

Sales incentive programs only work when reps can see exactly where they stand. A marketing company managing a wireless carrier’s incentive program had the opposite problem: 26 sales representatives across 11 offices, 8 regions, and 4 skill groups were participating in three parallel incentive programs, and nobody could tell you in real time how many points they had, what tier they were in, or which bonus contests were currently active. The compensation data lived in spreadsheets that were updated weekly — sometimes biweekly — and distributed via email. Reps disputed their numbers. Managers couldn’t tell who was close to a tier threshold. And the promotional contests that were supposed to drive short-term performance spikes went largely unnoticed because they were buried in the same email thread as everything else.

The Sales Incentive & Gamification platform replaces all of that with a real-time, competitive experience that reps actually want to open every morning. Built as a custom ProCode application on Domo, it tracks individual sales history across three parallel programs, calculates points accumulation and sweep entries in real time, visualizes tier progression (Bronze, Silver, Gold) for every rep, and surfaces a live promotions ticker where managers can create and push bonus contests without any development cycle. The result is a transparent, gamified system that motivates reps through competition and visibility rather than opaque spreadsheets and delayed updates.

Benefits

This platform transforms sales incentive management from an administrative burden into a competitive advantage that drives measurable behavior change.

- Real-time transparency: Every rep sees their exact point total, tier status, sweep entries, and ranking the moment a qualifying sale is recorded — no more waiting for weekly spreadsheet updates

- Comp dispute elimination: When every calculation is visible and traceable in real time, the disputes that consumed manager hours every pay period disappear entirely

- Manager-created promotions: Office and regional managers create bonus contests through an AppDB-backed interface and push them to the live promotions ticker instantly — no development cycle, no IT ticket

- Multi-program tracking: Three parallel incentive programs run simultaneously with independent point calculations, tier progressions, and leaderboards — all visible in one interface

- Competitive motivation: Leaderboards by office, region, and skill group create the kind of visible competition that drives incremental effort from reps who can see they are two sales away from the next tier

- Regional leadership visibility: Office-level and region-level roll-ups show exactly which teams are driving program results and which need coaching or support

Problem Addressed

Sales incentive programs fail silently when the people they are designed to motivate cannot see the scoreboard. A rep who does not know they are 50 points from Gold tier has no reason to push for one more sale before Friday. A manager who cannot see which reps are close to bonus thresholds cannot coach them toward the finish line. An office that does not know it is trailing another office by a narrow margin has no competitive spark to close the gap.

The operational burden is equally damaging. Calculating points across three programs with different rules, tracking tier progression for 26 reps, managing sweep entry eligibility, and coordinating promotional contests manually is a full-time administrative job. When it is done in spreadsheets, errors compound, updates lag, and the incentive program — which exists to drive behavior — becomes just another email attachment that reps check once and forget.

What the Agent Does

The platform operates as a real-time incentive calculation and gamification engine:

- Sales data ingestion: Connects to the sales transaction system and attributes each qualifying sale to the correct rep, office, region, and skill group with program-specific point calculations

- Multi-program point engine: Calculates points independently for each of three parallel programs using program-specific rules, multipliers, and qualification criteria

- Tier progression tracking: Maps each rep’s cumulative points against Bronze, Silver, and Gold thresholds, visualizing progress and distance to the next tier in real time

- Sweep entry calculation: Determines sweep entry eligibility based on program rules, calculating and displaying total entries earned per rep per program

- Live promotions ticker: Surfaces active bonus contests — such as gift cards for top earners or extra PTO for reaching specific thresholds — with countdown timers and current standings

- Leaderboard generation: Ranks reps by points, tier, and program across multiple dimensions — by office, by region, by skill group — creating competitive visibility at every organizational level

Standout Features

- No-code promotion creation: Managers create bonus contests through a simple AppDB-backed form — name the promotion, set the rules, pick the date range, and it appears on every rep’s promotions ticker immediately

- Four-dimensional leaderboards: View rankings by individual, by office, by region, or by skill group — each dimension tells a different performance story and creates a different competitive dynamic

- Tier threshold alerts: Reps within striking distance of a tier upgrade receive automated notifications showing exactly how many points or sales they need to advance

- Historical program analytics: Track program performance over time — which program drives the most incremental sales, which promotions generated the biggest spikes, which offices consistently outperform

- Skill group benchmarking: Compare performance across four skill groups to identify training opportunities and ensure incentive structures are calibrated appropriately for different role levels

Who This Agent Is For

This platform is built for organizations running sales incentive programs that are complex enough to need real-time calculation but are currently managed in spreadsheets or legacy comp systems.

- Sales reps who want to see their exact standings, points, and tier progress without waiting for a manager to send an update

- Office managers who need visibility into their team’s incentive performance and the ability to create local promotions that drive short-term results

- Regional leaders managing multiple offices who need comparative performance data to allocate coaching and support resources

- Program administrators calculating points, tiers, and eligibility across multiple parallel incentive structures

- HR and finance teams who need clean, auditable incentive calculation records for compensation processing

Ideal for: telecommunications retailers, insurance sales organizations, automotive dealership groups, staffing agencies, mortgage brokerages, and any organization where sales compensation includes points, tiers, contests, or gamification elements across distributed teams.

Department Sales Recap AI Agent

Weekly department-level sales tracking app that captures daily revenue by department across retail locations with cross-location analysis and benchmarking.

Every department. Every store. Every day of the week.

For a small retail chain, the gap between gut instinct and governed data can be surprisingly expensive. A regional thrift retailer operating three locations was tracking department-level sales in spreadsheets that each store manager maintained independently. The Tampa manager had one format. The St. Petersburg manager had another. The Bradenton manager sometimes forgot to update theirs until the following week. When the regional manager wanted to compare weekend performance across stores or identify which departments were trending up, the answer was always the same: give me a few days to pull the numbers together.

The Department Sales Recap app eliminates that lag entirely. Store managers enter daily revenue by department directly in a ProCode application that handles inline editing, auto-save, and even offline entry when the store’s Wi-Fi drops. Every submission flows into a sync-enabled AppDB collection that automatically pushes structured data into a Domo dataset. Within seconds of entry, the regional manager can see cross-location comparisons, day-of-week patterns, department trends, and store-versus-store benchmarks — no spreadsheet consolidation, no format normalization, no waiting.

Benefits

This app transforms department-level sales tracking from a manual reporting chore into an always-current intelligence layer that makes cross-location retail analysis effortless.

- Instant cross-location analysis: The moment a store manager saves their numbers, the regional view updates — compare Tampa versus St. Petersburg versus Bradenton without any manual consolidation

- Day-of-week pattern detection: See exactly how Saturday revenue compares to Tuesday across every department and location, surfacing weekend traffic patterns and weekday opportunities

- Department benchmarking: Which store’s Women’s department outperforms the others? Is Household trending up in one location and down in another? The data answers these questions instantly

- Offline resilience: Store managers can enter sales data even when Wi-Fi drops — localStorage fallback preserves entries and syncs automatically when connectivity returns

- Zero spreadsheet overhead: No more emailing Excel files, no more version conflicts, no more format normalization — everyone enters data in the same app and it flows into one governed dataset

- Historical trend analysis: Weeks and months of daily department data accumulate into a rich dataset for seasonal analysis, promotional impact measurement, and year-over-year comparison

Problem Addressed

Small and mid-size retail chains often outgrow spreadsheets long before they realize it. When you have three stores, each with five departments, tracking daily revenue across seven days, you are managing 105 data points per week per store. Across three stores, that is 315 weekly entries that someone needs to collect, normalize, and analyze. It sounds manageable until you consider that the analysis — comparing stores, identifying trends, spotting anomalies — requires all 315 entries to be in the same format, in the same place, at the same time. Spreadsheets almost never achieve that.

The downstream cost is invisible but real. A department that is underperforming at one location goes unnoticed for weeks because nobody had time to compare the numbers. A weekend promotion that drove significant traffic at one store is not replicated at others because the data was buried in a spreadsheet that arrived late. The regional manager makes staffing and inventory decisions based on impressions rather than data because the data takes too long to assemble.

What the Agent Does

The app operates as a lightweight but governed sales data capture and cross-location analysis system:

- Department-level entry: Store managers enter daily revenue for each department — Women’s, Men’s, Children’s, Shoes, and Household — through a simple, purpose-built interface

- Inline editing and auto-save: Corrections and updates happen in-place with automatic saving, eliminating the risk of lost entries or version conflicts

- Offline fallback: When store connectivity drops, entries are cached in localStorage and automatically sync to AppDB when the connection is restored

- AppDB-to-Dataset sync: Every submission flows from the app into an AppDB collection that automatically pushes structured records into a Domo dataset for analytics

- Cross-location dashboards: Pre-built views compare department revenue across all locations by day, week, and trend, with store-versus-store benchmarking

- Day-of-week analysis: Visualizations break down revenue patterns by day of the week, surfacing which days drive traffic to which departments at which locations

Standout Features

- Purpose-built simplicity: The entry interface shows only what the store manager needs — their store, today’s date, and five department fields. No training required, no complex navigation, no confusion

- Governed from entry to analysis: Data flows from the store manager’s screen through AppDB into a Domo dataset without any manual export, email, or file transfer — the chain of custody is clean

- Works on any device: Store managers can enter data from a tablet at the register, a phone in the back office, or a desktop — the responsive interface adapts to any screen

- Automatic data quality: The app validates entries at submission — no negative numbers, no missing departments, no duplicate days — ensuring the dataset stays clean without manual review

- Scalable to more locations: Adding a fourth or fifth store requires only adding the location to the app configuration — the cross-location analysis automatically incorporates the new data

Who This Agent Is For

This app is built for retail operators who have outgrown spreadsheets but don’t need (or can’t afford) an enterprise POS analytics platform.

- Store managers who need a fast, simple way to record daily department sales without spreadsheet overhead

- Regional managers who need cross-location performance comparisons without waiting for manual data consolidation

- Operations teams identifying top-performing departments, underperforming locations, and day-of-week patterns

- Finance teams tracking actual department revenue against budgets with daily granularity

- Franchise or chain operators scaling from a few locations who need consistent data capture from day one

Ideal for: thrift stores, specialty retail chains, consignment shops, small grocery chains, boutique retailers, and any multi-location retail operation where department-level sales tracking happens today in spreadsheets.

Studio Performance Dashboard AI Agent

Real-time franchise performance dashboard tracking the complete member funnel from leads through retention across multiple studio locations with churn prediction and LTV modeling.

Every location. Every metric. Every stage of the member journey.

Running a multi-location fitness franchise means managing dozens of variables that compound across sites. One studio might be converting leads at 40% but hemorrhaging members at 90 days. Another might have low lead volume but exceptional retention. A third might look healthy on revenue but is propped up by a promotional pricing wave that expires next month. Without a unified view that connects the full member funnel — from first lead to long-term retention — corporate leadership discovers these patterns too late to intervene effectively.

The Studio Performance Dashboard gives franchise operators that unified view. Built as a custom ProCode application on Domo, it tracks every location across the complete membership lifecycle: leads generated, intro appointments booked, sessions completed, memberships closed, monthly churn rates, frozen account volumes, active member counts, and projected versus actual revenue. Each location is tiered against a performance baseline so managers see not just raw numbers but context — is this studio above or below where it should be? — and corporate leadership gets a roll-up view that instantly surfaces which locations need attention and which are driving growth.

Benefits

This dashboard transforms franchise management from reactive monthly reviews into proactive daily intervention based on real-time funnel performance across every location.

- Complete funnel visibility: Track every stage from lead generation through long-term retention in one view — no more piecing together booking data from one system, membership data from another, and revenue from a third

- Early revenue risk detection: 30-day cancellation notice tracking and churn trend analysis surface revenue risk weeks before it hits the P&L, giving operators time to intervene

- Performance tiering: Each location is benchmarked against its expected performance baseline, so a studio converting at 35% is flagged if its baseline is 45% but celebrated if its baseline is 25%

- Lifetime value modeling: LTV calculations per location and membership type show which studios are acquiring high-value members versus cycling through promotional sign-ups

- Corporate roll-up: Every location-level metric aggregates into a single corporate view showing portfolio health, top performers, and at-risk studios in one dashboard

- Frozen account intelligence: Tracks frozen membership volumes separately from active churn, distinguishing between members who paused temporarily and those who left permanently

Problem Addressed

Franchise operators typically have access to pieces of member data but not the connected picture. The booking system shows appointment volume. The membership system shows active counts. The billing system shows revenue. But none of these systems connect the full journey from lead to long-term member, and none of them provide the cross-location comparison that franchise leadership needs to allocate resources and attention effectively.

The result is that corporate leadership operates on lagging indicators. They see last month’s revenue after the month closes. They discover a studio’s churn problem when quarterly reviews reveal the trend. They learn about a lead generation collapse when a studio manager mentions it in a call. By the time these signals reach the decision-makers, the operational window to course-correct has often passed.

What the Agent Does

The dashboard operates as a real-time franchise intelligence system tracking the complete member lifecycle across all locations:

- Lead tracking: Ingests lead data from marketing platforms and CRM, attributing lead volume and source by location with conversion rate calculations at each funnel stage

- Funnel analysis: Maps every member through leads → intro appointments → bookings → completions → closes, calculating conversion rates and drop-off points at each stage per location

- Retention monitoring: Tracks monthly churn, 30-day cancellation notices, frozen account rates, and active member counts with trend lines showing directional movement per studio

- Revenue projection: Compares projected revenue (based on active membership and pricing) against actual collections, flagging variances and identifying the underlying causes

- Performance tiering: Each location is assigned a performance tier based on its baseline and current trajectory, with automated escalation when a studio drops below threshold

- Lifetime value calculation: Computes member LTV by studio, membership type, and acquisition channel, showing which lead sources produce the highest long-term value

Standout Features

- 30-day cancellation pipeline: Members who have submitted cancellation notices are tracked separately as upcoming churn, giving studios a window to engage retention efforts before the member leaves

- Manager-specific views: Each studio manager sees their location’s performance with their specific targets, while corporate sees the full portfolio — same data, different context

- Seasonal adjustment: Performance baselines adjust for known seasonal patterns (January surge, summer dip) so managers are evaluated against realistic expectations, not flat annual targets

- Drill-through from corporate to studio: Corporate leaders can click from the portfolio view directly into any studio’s detailed metrics, seeing the specific funnel stage or retention metric driving the overall score

- Automated risk alerts: When a studio’s churn rate exceeds its baseline or lead conversion drops below threshold for three consecutive weeks, automated alerts notify regional and corporate leadership

Who This Agent Is For

This dashboard is built for franchise operators who need to manage member lifecycle performance across multiple locations without waiting for month-end reports to discover problems.

- Studio managers tracking their daily funnel performance and retention metrics against targets

- Regional directors overseeing multiple locations who need to quickly identify which studios need attention

- Franchise leadership making resource allocation decisions based on real-time portfolio performance

- Marketing teams measuring lead quality and conversion rates by source and location

- Finance teams projecting revenue based on active membership trends and cancellation pipeline

Ideal for: fitness franchises, wellness studios, healthcare clinics, tutoring centers, salon chains, and any multi-location service business where member acquisition and retention drive recurring revenue.

Lab Compliance & Data Collection AI Agent

Digital lab compliance system with ten interconnected forms, immutable audit records, and real-time regulatory visibility for GMP environments.

Ten forms. One compliance posture. Zero paper.

In a GMP production environment, documentation is not a suggestion — it is the difference between passing an FDA audit and receiving a warning letter. A regulated biotechnology research facility was managing this documentation across paper binders, standalone spreadsheets, and email chains. Sample intake logs lived in one binder. Equipment calibration records lived in another. Deviation reports were handwritten and filed in folders that only one person knew how to navigate. When an inspection was announced, the team spent days assembling documentation that should have been instantly accessible. Worse, calibration due dates sometimes slipped because nobody was systematically tracking them — the reminder lived in someone’s head or on a sticky note on a lab bench.

The Lab Compliance system replaces all of that with a unified digital platform built on Domo. Ten interconnected forms cover every documentation requirement in the GMP production workflow, each backed by a dedicated AppDB collection that syncs in real time to Domo datasets. Every submission is timestamped, immutable, and immediately available for audit review. Calibration due dates surface automatically. Non-conformance events trigger resolution workflows. And the QA team has a live dashboard showing the compliance status of every piece of equipment, every active batch, and every training certification in the facility.

Benefits

This system transforms lab compliance from a paper-based retrospective exercise into a continuously updated, audit-ready digital operation.

- Inspection-ready at all times: Every piece of compliance documentation is digital, timestamped, and instantly accessible — no more multi-day scrambles to assemble binders before an FDA visit

- Immutable audit trail: Every form submission creates a permanent, timestamped record in AppDB that cannot be altered after the fact, giving auditors exactly the traceability they require

- Automated calibration tracking: Equipment calibration due dates are computed and surfaced automatically — no more missed recalibrations because the reminder was on a sticky note that fell off the bench

- Non-conformance visibility: Deviation reports flow into a structured workflow that tracks resolution status, assigned investigators, and corrective actions through completion

- Real-time training status: Every employee’s training certifications, expiration dates, and completion records are visible in a single view, ensuring the facility never operates with uncertified personnel

- Connected data model: Sample intake connects to experiment logs which connect to batch records which connect to equipment use — the entire production history is linked rather than scattered across independent documents

Problem Addressed

Regulated laboratories operate under documentation requirements that assume every action is recorded, every record is retrievable, and every piece of equipment is within its calibration window at the time of use. Paper-based systems technically satisfy these requirements — if you can find the right binder, if the handwriting is legible, if the form was actually filled out at the time of the event rather than reconstructed later, and if the calibration sticker on the equipment matches the record in the log.

In practice, paper systems create gaps that compound silently. A reagent lot gets used without being logged. An environmental monitoring reading is taken but not transcribed until the next day. A training certification expires and nobody notices for two weeks because the tracking spreadsheet was not updated. Each gap on its own is minor. Accumulated across months of production cycles, they create the kind of systemic documentation weakness that regulators are specifically trained to identify.

What the Agent Does

The system operates as a unified digital documentation platform covering the complete GMP production workflow:

- Sample intake forms: Digital capture of incoming sample metadata, chain-of-custody records, and storage condition documentation with barcode or manual entry

- Experiment logging: Structured forms for recording experimental procedures, observations, results, and deviations in real time as work is performed

- Equipment use tracking: Logs which equipment was used for which procedures, automatically verifying that calibration status was current at time of use

- Environmental monitoring: Records temperature, humidity, particulate counts, and other environmental parameters with automated out-of-range alerting

- Calibration management: Tracks calibration schedules, records calibration results, computes next-due dates, and surfaces overdue instruments before they are used

- Deviation and CAPA workflow: Structured reporting for non-conformance events with assigned investigation, root cause analysis, corrective action tracking, and closure verification

Standout Features

- Ten interconnected form types: Sample intake, experiment logging, equipment use, environmental monitoring, reagent tracking, calibration records, deviation reports, batch records, audit checklists, and training certifications — all linked through a common data model

- AppDB-to-Dataset sync: Every form submission writes to a dedicated AppDB collection that automatically syncs into Domo datasets, enabling cross-form analytics without manual data movement

- Proactive compliance alerts: Calibrations approaching due date, training certifications nearing expiration, and unresolved deviations older than their SLA all trigger automated notifications to the responsible party

- Audit-ready reporting: Pre-built compliance reports generate the exact documentation packages that FDA inspectors and GMP auditors request, organized by date range, equipment, batch, or personnel

- Offline capability: Lab personnel can submit forms from tablets in areas with intermittent connectivity, with data syncing automatically when connection is restored

Who This Agent Is For

This system is built for regulated laboratories and production facilities where compliance documentation is not optional and paper-based systems are creating risk.

- QA managers responsible for maintaining audit-ready documentation across all GMP operations

- Lab supervisors who need their teams documenting work in real time rather than reconstructing records after the fact

- Compliance officers preparing for FDA inspections who need instant access to any documentation an auditor might request

- Equipment managers tracking calibration schedules across dozens or hundreds of instruments

- Training coordinators ensuring every employee operating in the GMP environment has current certifications

Ideal for: biotechnology companies, pharmaceutical manufacturers, contract research organizations, medical device manufacturers, food production facilities, and any organization operating under FDA, GMP, GLP, or ISO quality management requirements.

Retail Intelligence Hub AI Agent

Omni-channel intelligence hub consolidating performance data across eight retail channels into a unified view with sales trends, financial tracking, marketing attribution, product health, and competitive share.

Eight channels. Five data domains. One truth.

A consumer products brand selling through Amazon, Walmart, Target, Wayfair, and four additional marketplace platforms was running each channel’s reporting in isolation. The Amazon team had their dashboards. The Walmart team had theirs. Marketing had a separate set of spreadsheets tracking ROAS by platform. Finance pulled actuals from yet another system. Nobody had a unified view of where revenue was actually coming from, which marketing spend was driving sell-through, or which products were at risk of stocking out across channels. Every Monday’s leadership meeting started with 45 minutes of reconciling numbers before anyone could discuss strategy.

The Retail Intelligence Hub eliminates that entirely. Built as a custom ProCode application on Domo, it consolidates all eight retail channels into a single governed dataset model and surfaces five unified data domains: a 52-week rolling sales history with seasonal trend modeling, actual-versus-budget financial performance by product category, marketing spend efficiency including ROAS and conversion attribution by platform and campaign, product health indicators such as weeks-of-supply and buy-box percentage, and competitive market share trending by brand. Category managers open one dashboard and see everything.

Benefits

This agent transforms fragmented, channel-specific retail reporting into a unified intelligence layer that gives every stakeholder the same numbers, the same context, and the same early warnings.

- Unified cross-channel visibility: Eight retail channels consolidated into one governed view — no more reconciling Amazon numbers against Walmart numbers against Shopify numbers before every meeting

- 52-week seasonal intelligence: Rolling sales history with trend modeling that surfaces seasonal patterns, year-over-year shifts, and emerging category dynamics across all channels simultaneously

- Marketing attribution clarity: ROAS and conversion attribution broken down by platform, campaign, and product category so marketing knows exactly which spend is driving measurable sell-through and which is not

- Product health early warning: Weeks-of-supply calculations, buy-box percentage tracking, and inventory risk indicators that flag problems before they become stockouts or lost listings

- Competitive share trending: Brand-level market share data showing how your products are performing relative to competitors across every tracked category and channel

- Daily operational cadence: Data refreshes daily so category managers and channel directors operate on current numbers rather than last week’s exports

Problem Addressed

Omni-channel retail creates an omni-channel data problem. Each marketplace platform provides its own reporting portal with its own metrics, its own export formats, and its own update cadence. Amazon Seller Central shows one version of reality. Walmart Retail Link shows another. Target’s portal has its own structure. Wayfair, Shopify, and marketplace aggregators each add their own layer. The result is that nobody in the organization can answer a simple question like “what was our total revenue last week across all channels?” without pulling exports from eight systems and spending hours normalizing them into a comparison.

The problem compounds when you add marketing, inventory, and competitive data to the picture. Marketing spend flows through different platforms than sales data. Inventory feeds come from warehouse and 3PL systems. Competitive intelligence comes from syndicated data providers. Without a unified model that connects all of these, the organization makes decisions in silos — the marketing team optimizes spend without seeing inventory constraints, the sales team pushes volume without seeing margin impact, and finance discovers the gaps after the quarter closes.

What the Agent Does

The hub operates as a unified retail intelligence engine connecting every data source into a single analytical model:

- Multi-channel data ingestion: Connectors pull daily data from eight retail platform APIs — Amazon, Walmart, Target, Wayfair, Babylist, Shopify, and two additional marketplaces — normalizing different schemas into a unified product-channel-date model

- Rolling sales analysis: Maintains a 52-week rolling sales history with automated seasonal trend detection, year-over-year comparison, and category-level growth rate calculations across all channels

- Financial performance tracking: Compares actual revenue and margin against budget targets by product category and channel, surfacing variances with root-cause indicators

- Marketing attribution engine: Maps marketing spend to conversion outcomes by platform and campaign, calculating ROAS, cost-per-acquisition, and attributed revenue at the channel and product level

- Product health monitoring: Calculates weeks-of-supply from current inventory and sales velocity, tracks buy-box ownership percentage on Amazon, and flags products at risk of stockout or delisting

- Competitive share analysis: Integrates syndicated market data to show brand-level share trending within tracked categories, identifying where share is growing or eroding relative to competitors

Standout Features

- Channel-agnostic data model: Adding a ninth or tenth retail channel requires only a new connector configuration — the unified schema, dashboards, and calculations automatically incorporate the new data without rebuilding anything

- Revenue mix decomposition: Instantly see how total revenue breaks down by channel, product category, and time period — identifying which channels are growing share of the portfolio and which are declining

- Inventory risk scoring: Products are scored by stockout risk based on current velocity, weeks-of-supply, and replenishment lead times — giving operations a prioritized action list rather than a wall of SKU data

- Campaign-level drill-through: From a ROAS summary, drill directly into individual campaign performance by platform, seeing creative-level metrics alongside attributed conversions

- Governed single source of truth: Every number in every dashboard traces back to the same unified dataset — eliminating the “my numbers don’t match your numbers” problem that derails most cross-functional retail meetings

Who This Agent Is For

This hub is built for consumer products organizations selling through multiple retail channels who need a single, governed view of performance across their entire distribution footprint.

- Category managers responsible for product performance across multiple retail partners who currently reconcile data from separate portals

- Channel directors managing marketplace relationships who need daily visibility into sales velocity, inventory health, and competitive positioning

- Marketing teams allocating spend across platforms who need attribution data that connects campaigns to actual sell-through by channel

- Finance teams tracking actual-versus-budget performance who need consistent, timely revenue data without waiting for month-end reconciliation

- Executive leadership seeking a single dashboard view of the omni-channel business rather than a slide deck assembled from eight different exports

Ideal for: consumer products companies, CPG brands, DTC brands expanding into retail, marketplace sellers operating across multiple platforms, and any organization where retail revenue flows through more channels than any single team can manually track.

Development Due Diligence AI Agent

Enter an address or parcel ID and receive a comprehensive due diligence report covering zoning, environmental, title, tax, demographics, and comparable sales.

One address. One click. Complete site intelligence.

You know the drill. A new site comes across your desk — maybe a broker sent it over, maybe your land team flagged it, maybe an owner reached out directly. Before anyone can make an informed decision about whether to pursue it, someone needs to pull zoning records, check environmental history, review title, look up tax assessments, analyze demographics, and run comparable sales. That someone is usually a junior analyst who spends two to five days assembling a report from ten different county websites, GIS systems, environmental databases, and MLS platforms. And even after all that work, the report format varies depending on who compiled it, important details get missed depending on which databases they remembered to check, and the development team still has follow-up questions that require going back to the same sources.

The Development Due Diligence Agent eliminates all of that. You enter an address or parcel ID. The agent queries every connected data source simultaneously, compiles the results into a standardized report with risk scoring and development feasibility analysis, and delivers it in minutes. Every site gets the same depth of analysis. Every report follows the same structure. Every data source is checked every time.

Benefits

This agent transforms site due diligence from a multi-day research project into an on-demand intelligence service that delivers consistent, comprehensive reports from a single input.

- Minutes instead of days: A complete due diligence report that previously required two to five days of analyst research generates in minutes from a single address or parcel ID entry

- Consistent depth every time: Every site evaluation checks the same data sources, applies the same risk criteria, and follows the same report structure — no more variability based on who compiled it or which databases they remembered to check

- Comprehensive data coverage: Zoning, environmental history, title records, tax assessments, demographic profiles, comparable sales, flood zone status, and utility availability — all pulled automatically from connected sources

- Risk scoring: Each report includes a composite risk score based on environmental flags, zoning restrictions, title encumbrances, and development feasibility factors — giving deal teams a quick read on site viability before investing further resources

- Standardized output: Reports follow a consistent template that development teams, lenders, and investors expect, eliminating the reformatting and restructuring that ad-hoc research requires

- Portfolio-scale screening: Evaluate dozens of potential sites in a single day instead of committing analyst weeks to sequential deep dives, enabling a much wider initial screening funnel

Problem Addressed

Real estate development due diligence is fundamentally a data aggregation problem. The information exists — zoning codes are public, environmental records are searchable, tax assessments are filed, title history is recorded, demographics are published, and comparable sales are tracked. The problem is that this information lives in ten or more disconnected systems, each with its own interface, search methodology, and output format. An analyst performing due diligence on a single site might visit the county assessor website, the zoning department portal, the state environmental database, a title search service, the Census Bureau, a GIS platform, and an MLS system before having enough data to write the first section of the report.

The time cost is significant, but the quality cost is worse. When an analyst is checking ten sources under a deadline, some sources get thorough searches and others get cursory checks. Environmental history might get a deep review while flood zone status gets a quick glance. The report that reaches the development committee reflects not just the site’s characteristics but also the analyst’s time constraints, source familiarity, and individual judgment about what to prioritize. Two analysts evaluating the same site will produce reports with different depth, different emphasis, and potentially different conclusions.

What the Agent Does

The agent operates as an automated site intelligence engine that converts a single address or parcel ID into a comprehensive, standardized due diligence report:

- Address/parcel resolution: Accepts a street address, APN, or parcel ID and resolves it to precise geographic coordinates and jurisdictional boundaries for targeted data queries

- Multi-source data retrieval: Simultaneously queries connected data sources for zoning classification, permitted uses, environmental records, title history, tax assessment, demographic profile, comparable sales, flood zone designation, and utility availability

- Data normalization: Converts disparate source formats into a unified data model — zoning codes from different jurisdictions are mapped to standardized use categories, tax values are normalized to per-square-foot metrics, and demographic data is structured for development feasibility analysis

- Risk scoring: Applies a composite scoring model that evaluates environmental contamination risk, zoning compatibility with intended use, title encumbrance severity, flood exposure, and infrastructure adequacy

- Report assembly: Generates a structured due diligence report with executive summary, property profile, zoning analysis, environmental assessment, title summary, financial analysis (tax + comps), demographic context, and risk matrix

- Feasibility analysis: Based on the assembled data and intended development type, provides a preliminary feasibility assessment including estimated development costs, comparable project performance, and key risk factors to investigate further

Standout Features

- Single-input simplicity: The entire workflow starts from one field — enter an address or parcel ID and the agent handles everything else. No forms to fill out, no sources to select, no parameters to configure

- Jurisdiction-aware queries: Automatically identifies the county, municipality, and special district jurisdictions for the parcel and routes queries to the correct data sources for each — critical when operating across multiple markets

- Comparable sales analysis: Pulls recent sales of similar properties within configurable radius and property type criteria, calculating price-per-square-foot benchmarks and market trend indicators

- Environmental red flag detection: Scans environmental databases for brownfield designations, underground storage tanks, hazardous waste sites, and remediation history within the parcel and surrounding area

- Report versioning: Maintains a history of all reports generated for each parcel, enabling teams to see how site conditions and market data have changed between evaluation periods

Who This Agent Is For

This agent is built for real estate professionals who evaluate potential development sites and need comprehensive, consistent due diligence without the multi-day research timeline.

- Development analysts who currently spend days compiling site research from disconnected public and commercial data sources

- Acquisitions teams screening multiple potential sites who need to evaluate more opportunities in less time

- Development directors who want every site evaluation to meet the same depth and quality standard regardless of which analyst performs it

- Lenders and investors who require standardized due diligence documentation before committing capital to a project

- Land brokers who want to provide clients with comprehensive site intelligence as a value-added service

Ideal for: real estate developers, land acquisition firms, commercial real estate brokerages, REITs, construction companies evaluating new projects, municipal planning departments, and any organization where site selection decisions depend on aggregating data from multiple disconnected public and commercial sources.

Legal Review AI Agent

Reviews contracts for risk clauses, compliance issues, and non-standard terms, then routes severity-scored findings through structured review workflows.

Every clause checked. Every risk scored. Every finding routed.

Contract review at scale is a pattern recognition problem that most legal departments solve with the most expensive pattern recognizer available: attorney hours. An associate reads through a 40-page vendor agreement, mentally checking each clause against the organization’s risk policies, flagging non-standard terms, and noting compliance requirements. It works. It is also slow, inconsistent across reviewers, and economically unsustainable when contract volume exceeds the team’s capacity. The Legal Review Agent applies the same analytical framework systematically to every document. Built natively in Domo using Agent Catalyst, Workflows, Filesets, and Task Center, the agent ingests contracts from a governed document repository, scans every clause against configurable risk patterns and compliance requirements, assigns severity scores to each finding, and routes results through a structured review workflow where attorneys focus on the items that need judgment rather than reading every page of every agreement.

Benefits

This agent restructures legal review from a sequential, read-everything process into a risk-prioritized workflow where AI handles the scanning and humans handle the judgment calls.

- Systematic risk detection: Every contract is scanned against the full library of risk patterns and compliance requirements — no clause is skipped because the reviewer was tired at page 37

- Severity-scored findings: Each flagged item includes a severity classification (critical, high, medium, low) with the specific clause text, the rule it triggered, and a recommended action — reviewers know exactly where to focus

- Consistent standards: The same risk rules apply to every contract regardless of which attorney reviews it, eliminating the reviewer-dependent variability that creates compliance gaps

- Attorney time optimization: Associates spend their time on high-severity findings that require legal judgment rather than reading routine clauses that match standard templates

- Compliance traceability: Every scan, finding, severity score, and review decision is logged with timestamps, creating an auditable record that demonstrates systematic due diligence

- Faster contract cycle: Contracts that would sit in the review queue for days get their initial risk assessment in minutes, accelerating the entire procurement and vendor onboarding timeline

Problem Addressed

Legal departments face an impossible scaling equation: contract volume grows with business complexity, but adding attorneys is expensive and slow. The result is a review bottleneck where contracts wait in queue for days or weeks, procurement timelines slip, and business teams work around the process by accepting terms without proper review. When everything is urgent, nothing gets the attention it deserves.

The quality risk is equally concerning. Manual review is inherently variable. One attorney might flag an indemnification clause that another considers standard. A non-standard liability cap might be caught in a focused morning review session but missed during an exhausted late-afternoon pass through the same type of agreement. Without systematic scanning, the organization’s risk exposure depends on which attorney reviewed which contract on which day — a compliance lottery that no general counsel would accept if they quantified it.

What the Agent Does

The agent operates as a systematic contract scanning and risk routing engine:

- Document ingestion: Contracts uploaded to the governed FileSet repository are automatically queued for review, supporting PDF, Word, and structured text formats with OCR for scanned documents

- Clause extraction: The agent parses each document into individual clauses, identifying section boundaries, defined terms, and cross-references to build a structured representation of the agreement

- Risk pattern matching: Each clause is evaluated against a configurable library of risk patterns: non-standard indemnification, unlimited liability, auto-renewal terms, unilateral amendment rights, restrictive IP assignment, inadequate termination provisions, and more

- Severity scoring: Findings are classified by severity based on the risk type, the deviation from standard terms, and the financial exposure implied — critical findings surface immediately while informational items are logged for reference

- Review routing: Task Center assignments deliver findings to the appropriate reviewer based on contract type, risk category, and severity level — senior counsel gets critical items, associates handle standard deviations

- Resolution tracking: Reviewers document their decision (accept risk, negotiate change, reject term) directly in the workflow, creating a complete record of how each finding was addressed

Standout Features

- Customizable risk library: Organizations define their own risk patterns and severity thresholds — what constitutes a critical finding for a healthcare company differs from a technology vendor, and the rule library reflects those differences

- Clause comparison: When a non-standard term is detected, the agent shows the standard language side-by-side with the contract language, making it immediately clear what the deviation is and how significant it is

- Contract type classification: The agent automatically identifies the contract type (NDA, MSA, SOW, vendor agreement, lease) and applies the appropriate risk rules for that category rather than using a one-size-fits-all scan

- Precedent tracking: Records how similar findings were resolved in past contracts, giving current reviewers visibility into organizational precedent — if the same clause was accepted three times before, that context informs the current decision

- Volume analytics: Dashboards show contract review throughput, average findings per contract, severity distribution, and resolution time trends — giving legal operations data to optimize staffing and process

Who This Agent Is For

This agent is built for legal departments where contract volume has outpaced the team’s ability to review every agreement with consistent depth and speed.

- General counsel seeking systematic risk management across all contracts rather than reviewer-dependent quality

- Legal operations managers responsible for review throughput and cycle time without proportional headcount growth

- Procurement teams waiting on legal review before vendor agreements can be executed

- Compliance officers who need documented evidence that every contract was reviewed against the organization’s risk policies

- Associates who would rather spend their time on complex negotiations than reading boilerplate clauses

Ideal for: enterprises with high contract volume, regulated industries requiring compliance documentation, organizations with distributed procurement where legal cannot review every agreement manually, and any company where contract review bottlenecks delay business operations.

Employee Time Management AI Agent

Monitors time entries across the workforce, flags anomalies like missed punches and overtime, routes exceptions through approval workflows, and generates compliance-ready reports.

Zero payroll surprises. Every exception caught before the pay cycle closes.

The results speak for themselves: payroll errors from time entry anomalies drop to near zero, HR teams reclaim hours previously spent on manual exception review, and managers receive real-time alerts instead of discovering problems during payroll processing when it is too late to fix them cleanly. The Employee Time Management Agent monitors every time entry across your workforce in real time, applying configurable rules to detect missed clock punches, unapproved overtime, scheduling conflicts, and pattern anomalies before they become payroll errors. Exceptions route automatically through manager approval workflows with full context attached, so the reviewer sees exactly what the anomaly is, why it was flagged, and what the recommended resolution is. Compliance reports generate automatically at the end of every pay period, giving HR and finance a clean, auditable record without manual compilation.

Benefits

This agent eliminates the reactive cycle of discovering time entry problems during payroll processing and replaces it with continuous, proactive detection that catches every exception before it becomes a payroll error.

- Near-zero payroll errors: Anomalies are detected and resolved in real time rather than discovered during payroll processing, eliminating the corrections, adjustments, and employee complaints that follow every pay cycle

- HR time reclaimed: Manual exception review that consumed hours every pay period is replaced by automated detection and routing — HR reviews only the items that need judgment, not every time entry

- Manager accountability: Exceptions route directly to the responsible manager with full context and recommended actions, creating clear ownership rather than a centralized HR bottleneck

- Compliance confidence: Automated reports meet labor law documentation requirements for overtime tracking, break compliance, and work hour limits — generated automatically without manual assembly

- Pattern detection: The agent identifies trends that individual reviews miss — a department consistently approaching overtime thresholds, a shift pattern creating scheduling conflicts, or a location with above-average missed punches

- Real-time visibility: Instead of a weekly or bi-weekly payroll surprise, supervisors see time entry status continuously with alerts on exceptions as they occur

Problem Addressed

In most organizations, time entry problems are discovered at the worst possible moment: during payroll processing. A missed clock punch means an employee shows zero hours for a day they worked. Unapproved overtime means a budget variance that nobody accounted for. A scheduling conflict means two employees were both clocked into the same restricted-capacity role. By the time these issues surface in payroll, the pay cycle is closing, corrections are rushed, and someone ends up with an incorrect paycheck that creates an HR ticket.

The manual prevention approach — HR staff reviewing time entries before payroll — does not scale. With hundreds or thousands of employees generating time entries daily, human review catches obvious errors but misses subtle patterns. A single missed punch is easy to spot; a department trending toward overtime thresholds over three weeks is not. And the review itself consumes HR hours that should be spent on strategic workforce management rather than data auditing.

What the Agent Does

The agent operates as a continuous time entry monitoring and exception management system:

- Real-time entry monitoring: Magic ETL ingests time entries from all clock-in systems and normalizes data across locations, departments, and employee types into a unified monitoring view

- Configurable rule engine: Agent Catalyst applies organization-specific rules — overtime thresholds, mandatory break windows, maximum consecutive hours, scheduling conflict detection, missed punch identification — to every entry as it arrives

- Anomaly classification: Each detected exception is classified by type and severity — informational (approaching overtime), warning (missed punch), or critical (labor law compliance risk) — with recommended resolution actions

- Automated approval routing: Exceptions route through Workflow-driven approval chains to the appropriate manager with full context: the employee, the anomaly, the rule violated, and the recommended action

- Notification escalation: If a manager does not act within a configurable window, the exception escalates to the next level with an urgency flag, ensuring nothing falls through the cracks before payroll closes

- Compliance report generation: At the end of each pay period, the agent automatically generates compliance documentation covering overtime hours, break adherence, work hour limits, and exception resolution history

Standout Features

- Department-level trend detection: Goes beyond individual exceptions to identify team-level patterns — a department consistently approaching overtime limits, a location with rising missed punch rates, or a shift pattern creating recurring conflicts

- Pre-payroll audit: A dedicated pre-payroll checkpoint runs all time entries through the full rule set 48 hours before processing, generating a clean/exception report that gives HR confidence before the cycle closes

- Manager self-service: Managers approve or resolve exceptions directly from notification links without logging into a separate system — one click to approve overtime, one click to request employee correction

- Multi-jurisdiction compliance: Rule sets can be configured per state, country, or labor agreement, ensuring overtime thresholds, break requirements, and work hour limits reflect local regulations

- Historical benchmarking: Compares current period exception rates against historical baselines, flagging when a location or department deviates significantly from its normal pattern

Who This Agent Is For

This agent is built for organizations where time entry accuracy directly impacts payroll costs, labor compliance, and HR operational efficiency.

- HR teams spending disproportionate time reviewing and correcting time entries before every pay cycle

- Payroll administrators who need clean, exception-free time data to process payroll without last-minute corrections

- Operations managers responsible for overtime budgets who need real-time visibility into hours worked across their teams

- Compliance officers ensuring labor law adherence across multiple jurisdictions with different overtime and break rules

- Finance teams who need accurate labor cost data without waiting for payroll adjustments to settle

Ideal for: retailers, healthcare systems, manufacturers, hospitality companies, staffing firms, and any organization with an hourly workforce large enough that manual time entry review cannot catch every exception before payroll processes.

RFP/RFI Response AI Agent

Automates RFP/RFI responses by matching questions against a governed knowledge base of past responses, product docs, and pricing, then generating consistent draft answers.

Weeks of cross-functional scrambling. Replaced by a first draft in minutes.

An RFP lands in the inbox on a Tuesday. It is 47 pages long, contains 312 questions, and the deadline is two weeks away. What follows is predictable and painful: the proposal lead creates a spreadsheet, assigns sections to product, engineering, legal, and pricing teams, sends reminder emails that go unanswered for days, collects inconsistent answers written in different voices and at different levels of detail, then spends the final weekend stitching it all together into something that looks coherent. Two weeks of elapsed time. Dozens of hours of distributed effort. And the worst part is that 80% of the questions have been answered before — in last quarter’s RFP, in the one before that, in product documentation that already exists. The knowledge is there. It is just scattered, unindexed, and inaccessible under deadline pressure.

Benefits

This agent transforms RFP/RFI response from a cross-functional scramble into a governed, knowledge-driven workflow where AI handles the first draft and humans focus on strategy and differentiation.

- Response time collapsed: What took two weeks of distributed effort now produces a complete first draft within hours, giving teams time to refine and differentiate rather than scramble to assemble

- Governed knowledge base: Every past response, product document, pricing table, and compliance statement lives in a searchable FileSet that the agent queries for every new question — no more reinventing answers from memory

- Voice consistency: Every generated response follows the same professional tone and structural standards, eliminating the patchwork quality that comes from six different people writing six different sections

- Compliance accuracy: Legal and regulatory responses are pulled from approved language in the knowledge base rather than paraphrased from memory, reducing the risk of inadvertent misstatements

- Continuous improvement: Winning responses are fed back into the knowledge base, making every future RFP draft stronger than the last based on what actually resonated with evaluators

- Cross-functional time savings: Product, engineering, and legal teams spend minutes reviewing and approving AI-generated drafts instead of hours writing from scratch for every submission

Problem Addressed

RFP and RFI responses are one of the highest-effort, most repetitive workflows in enterprise sales. Every submission requires assembling answers from multiple subject matter experts who are already busy with their primary responsibilities. The proposal lead becomes a project manager, chasing down contributors, reconciling conflicting answers, standardizing formatting, and meeting a deadline that never has enough buffer.

The deeper inefficiency is that most questions are not new. Across a year of RFP responses, the same questions about security posture, integration capabilities, pricing models, SLA commitments, and compliance certifications appear over and over with slight variations. But without a systematic way to retrieve and reuse past answers, each submission starts nearly from scratch. Teams write the same answers differently each time, creating inconsistency that evaluators notice and penalize. Meanwhile, the institutional knowledge that should make each response faster and better remains locked in previous submissions that nobody has time to search through.

What the Agent Does

The agent operates as an intelligent proposal drafting engine that matches incoming questions to the best available answers in your governed knowledge base:

- Document ingestion: Imports the RFP/RFI document and extracts individual questions, requirements, and evaluation criteria into a structured format for processing

- Knowledge base matching: For each question, searches the governed FileSet containing past responses, product documentation, pricing tables, compliance statements, and approved messaging to find the most relevant existing content

- Draft response generation: Synthesizes matched content into a coherent draft answer that addresses the specific question while maintaining consistent voice, appropriate detail level, and compliance-safe language

- Confidence scoring: Each generated response includes a confidence score indicating how well the knowledge base covered the question — high-confidence answers are ready for review, low-confidence items are flagged for subject matter expert input

- Review routing: Responses are organized by section and routed to the appropriate reviewer (product, legal, pricing, technical) with the AI draft and source citations attached for efficient approval

- Knowledge base enrichment: After submission, winning responses and reviewer edits are ingested back into the knowledge base, improving match quality for future RFPs

Standout Features

- Question deduplication: The agent recognizes when an RFP asks the same question multiple ways or splits a single topic across several questions, generating consistent answers that cross-reference rather than contradict each other

- Source citation: Every generated response includes links to the specific documents, past submissions, or product pages that informed the answer, giving reviewers full traceability

- Multi-format output: Generates responses in the format the RFP requires — spreadsheet cells, narrative paragraphs, or structured compliance matrices — without manual reformatting

- Pricing table integration: Pulls current pricing directly from governed datasets rather than requiring manual lookup, ensuring quotes reflect the latest approved rates and discount structures

- Win/loss learning: Tracks which proposals won and which lost, identifying response patterns that correlate with success so future drafts can emphasize proven approaches

Who This Agent Is For

This agent is designed for organizations that respond to formal procurement requests regularly enough that the manual process has become a bottleneck on revenue capacity.

- Proposal managers coordinating multi-team RFP responses who need a faster path from questions to first draft

- Sales teams that lose deals to competitors who simply respond faster with more polished submissions

- Product and engineering teams tired of writing the same capability descriptions for every new RFP

- Legal and compliance teams who need approved language used consistently rather than paraphrased differently each time

- Revenue operations leaders who see RFP response capacity as a constraint on pipeline throughput

Ideal for: enterprise software companies, professional services firms, government contractors, healthcare providers, financial institutions, and any organization where RFP volume exceeds the team’s ability to respond manually without sacrificing quality or missing deadlines.

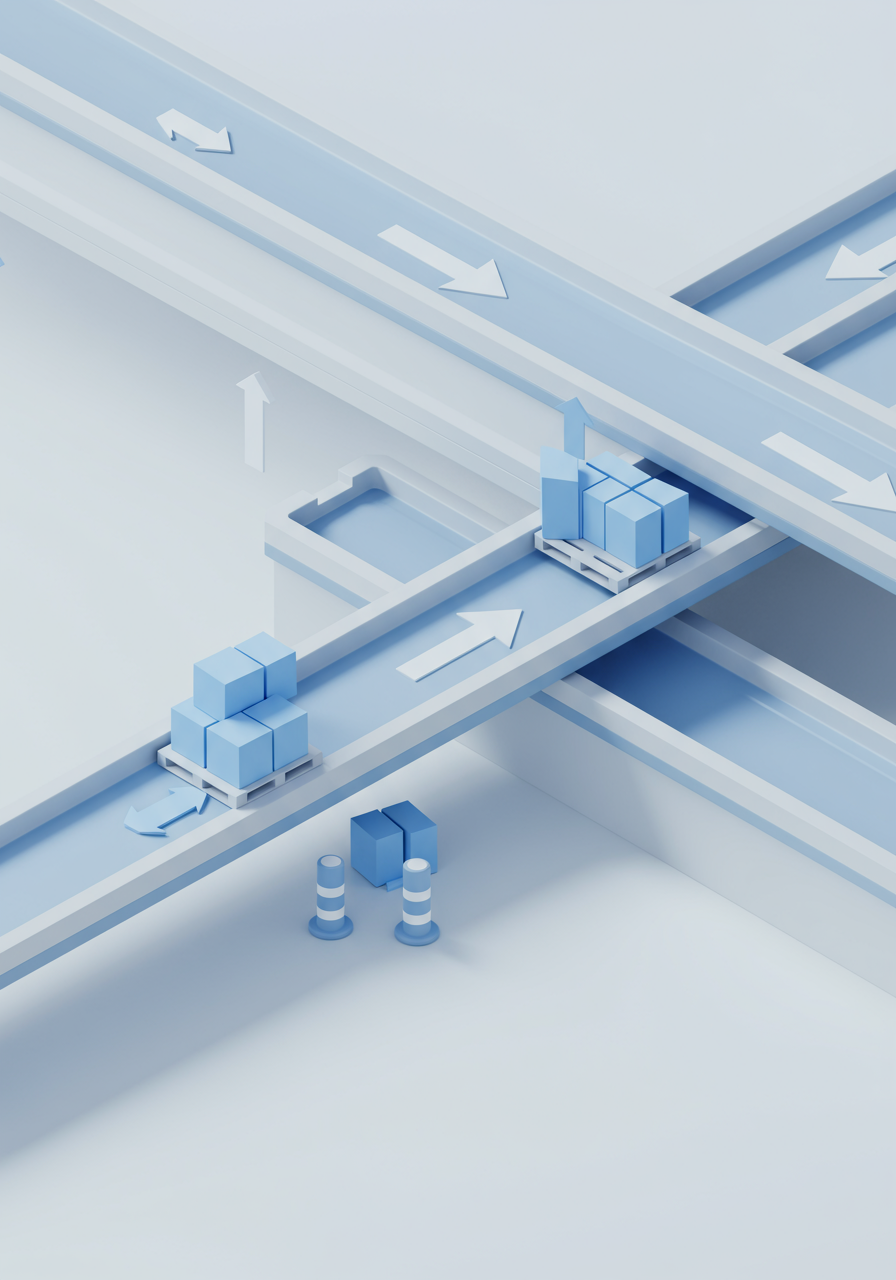

Inventory Reorder AI Agent

Monitors real-time stock levels, applies demand forecasting to predict depletion timelines, and auto-generates purchase orders before stockouts occur.

Never stockout. Never overorder. Always right-sized.

If you have ever walked into a Monday morning planning meeting and learned that a key SKU ran out over the weekend, you know the feeling. The scramble to expedite, the lost sales, the customer apologies. And the worst part is that the data was there — the depletion trend was visible weeks ago if anyone had been watching. The Inventory Reorder Agent watches for you. Built natively in Domo with Magic ETL, Workflows, Agent Catalyst, and AppDB, it connects to your real-time inventory data, applies demand forecasting models to every SKU and location, and generates purchase orders or reorder alerts before stock levels hit critical thresholds. The reorder points are not static numbers set once a year — they adapt continuously based on actual consumption patterns, seasonal trends, and lead time variability.

Benefits

This agent replaces reactive inventory management with a predictive system that keeps stock levels optimized across every location without manual monitoring.

- Predictive reorder timing: Purchase orders trigger based on forecasted depletion curves rather than static min/max thresholds, accounting for demand velocity changes that fixed reorder points miss entirely

- Stockout prevention: Continuous monitoring across all SKUs and locations means depletion warnings surface days or weeks before a stockout, giving procurement enough lead time to act without expediting

- Dynamic safety stock: Safety stock levels adjust automatically based on demand variability and supplier lead time reliability — tighter for stable items, wider for volatile ones

- Auto-generated purchase orders: When reorder thresholds are crossed, the agent generates purchase orders with calculated optimal quantities factoring in MOQs, price breaks, and warehouse capacity

- Multi-location visibility: A single view across all warehouses and stores shows which locations need replenishment, enabling transfer recommendations between overstocked and understocked sites