Saved hundreds of hours of manual processes when predicting game viewership by using Domo's automated dataflow engine.

Raw data doesn't magically turn into decisions. A data science pipeline gets you there, usually through five stages: collection, cleaning, exploration, modeling, and deployment.

This guide breaks down how data science pipelines differ from close cousins like extract, transform, load (ETL) and machine learning operations (MLOps) pipelines, how to build and monitor your own, and what to do to keep governance and reproducibility from becoming afterthoughts. If you're a data engineer keeping the lights on or an analytic engineer shaping datasets for analysis, you'll find practical moves for each stage.

Here are the main points to keep in mind as you build (or fix) your data science pipeline:

Think of it as the assembly line for insights. A data science pipeline is an end-to-end framework that transforms raw data into predictive insights through automated stages such as ingestion, cleaning, feature engineering, modeling, evaluation, and deployment.

Companies use this process to answer specific business questions and create actionable insights based on real data, analyzing datasets from both external and internal sources. And here's something most overviews gloss past: "end-to-end" does not mean "pull every dataset you can find." Start with what your question actually needs, or you'll spend your time maintaining noise.

Say your sales team wants realistic goals for next quarter. The pipeline lets you gather inputs like customer surveys or feedback, historical purchase orders, and industry trends, then analyze that mix for patterns. From there, teams can set specific, data-driven goals that have a real shot at increasing sales.

A data science pipeline should not be confused with related but distinct concepts:

Understanding these distinctions helps you pick the right approach for your needs, and it saves a lot of painful "we thought you meant..." meetings.

Data piles up quickly. Most of it is only valuable if you can turn it into something people can act on.

The data science pipeline does the unglamorous work: it gathers data across teams, cleans it, and presents it in a way that supports decisions. The payoff is speed and consistency. Fewer handoffs, fewer one-off fixes, and fewer surprises when someone asks, "Where did that number come from?"

If you've ever inherited a hand-stitched pipeline (you know the kind), you've seen how fast "just one more data source" turns into a maintenance trap. Data engineers, analytic engineers, and IT leaders all feel that pain differently, but the fix is usually the same: end-to-end automation with governed data at every stage.

Data science pipelines help you move past manual collection. With intelligent data science tools, you can keep access to clean, reliable, updated data, the kind you can actually build decisions on.

The measurable impact shows up in several ways. Automation can reduce the time between raw ingestion and model-ready datasets from days to hours, which matters when your stakeholders expect answers before the next meeting. Automated validation checks cut down data quality incidents that stall reporting. Reproducibility means you can trace an insight back to its source data and transformations when someone challenges it, and someone will.

Data science pipelines deliver specific advantages that map to different organizational needs:

Before you dive into data science pipelines, it helps to zoom out. "Pipeline" is a broad label, and the right type depends on latency, volume, and what you're trying to do.

Batch pipelines run in chunks: hourly, daily, weekly. They're a solid fit when freshness can be "good enough" and cost control matters.

Pick batch processing when you need overnight model retraining, monthly reporting aggregations, or any workflow where data freshness is measured in hours rather than seconds. Typical examples include customer segmentation models that update weekly or sales forecasting that runs each morning before business hours.

Streaming pipelines don't wait. They process data continuously as it arrives, which is exactly what you want when a signal gets stale fast.

Choose streaming ingestion when you're building fraud detection systems that need sub-second response times, real-time personalization engines, or live inventory updates. The tradeoff in complexity and cost compared to batch processing is real, so reserve streaming for cases where latency truly matters.

These terms get used interchangeably in the wild, but they're not the same thing in practice:

A data science pipeline covers the full journey from raw data to deployed insights. An ML pipeline is usually a component inside it, focused on training. An MLOps pipeline picks up after training to manage production reality: deployment, monitoring, retraining, and rollback.

ETL is a type of pipeline, but it is not the same as a data science pipeline. Both move data between systems, but they end in different places, and that difference changes how you design them.

The ETL pipeline stops when data is loaded into a data warehouse or database. The data science pipeline continues from there and often triggers more work, including model training, evaluation, and deployment.

Data transformation is always part of an ETL pipeline. In a data science pipeline, some stages may pass data through with little or no transformation. And while ETL traditionally transfers data in scheduled chunks, data science pipelines increasingly run in near-real-time for use cases like fraud detection or dynamic pricing.

Start with the question. Seriously.

Before you move raw data through anything, get specific about what you want the data to answer. This helps people focus on the right inputs and prevents a pipeline from becoming an expensive "maybe we'll use it later" project.

The data science pipeline has several stages, and each one produces an output that feeds the next.

First, you pull in data from internal, external, and third-party sources and land it in a usable format, such as Extensible Markup Language (XML), JavaScript Object Notation (JSON), or comma-separated values (CSV).

Ingestion is also where reliability tends to crack. Connector coverage and schema stability determine how quickly a pipeline can be stood up and how often it breaks later. Common sources include transactional databases, application programming interface (API) endpoints, cloud storage buckets, streaming platforms, and third-party providers.

Just because you can ingest a source does not mean you should. High-volume sources with unclear ownership (or shifting schemas) can turn your staging area into a junk drawer before you've had a chance to notice.

The output of this stage is raw data consolidated in a staging area, ready for cleaning.

This is where time goes. A lot of it.

Data may include anomalies such as duplicate parameters, missing values, or irrelevant information, so your team should clean it before it feeds any analysis, visualization, or model.

You can divide data cleansing into two categories:

Modern pipelines treat data cleaning as more than a manual grind. This is the stage where automated validation checks belong. Data contracts (definitions of the expected schema and quality thresholds between stages) are an emerging best practice that reduces downstream errors. When data fails validation, the pipeline should halt rather than quietly pushing bad inputs forward.
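To make the idea of a data contract concrete, here is a minimal sketch in plain Python. The `DataContract` class, the `enforce_contract` function, and the `orders_contract` example are all hypothetical names invented for illustration; real contract tooling will look different. The point it shows is the halting behavior: a batch that breaks the agreed schema or quality threshold raises an error instead of moving on.

```python
from dataclasses import dataclass


class ContractViolation(Exception):
    """Raised to halt the pipeline when a batch breaks its contract."""


@dataclass(frozen=True)
class DataContract:
    # Hypothetical contract: expected column types plus one quality threshold.
    schema: dict              # column name -> expected Python type
    max_null_fraction: float  # share of None values tolerated per column


def enforce_contract(rows, contract):
    """Return rows unchanged if they satisfy the contract, else raise."""
    if not rows:
        raise ContractViolation("batch is empty")
    for column, expected_type in contract.schema.items():
        nulls = 0
        for row in rows:
            if column not in row:
                raise ContractViolation(f"missing column: {column}")
            value = row[column]
            if value is None:
                nulls += 1
            elif not isinstance(value, expected_type):
                raise ContractViolation(
                    f"{column} expected {expected_type.__name__}, "
                    f"got {type(value).__name__}"
                )
        if nulls / len(rows) > contract.max_null_fraction:
            raise ContractViolation(f"too many nulls in {column}")
    return rows


# Illustrative contract and batches (made-up data).
orders_contract = DataContract(
    schema={"order_id": int, "amount": float},
    max_null_fraction=0.1,
)
good_batch = [{"order_id": 1, "amount": 19.99}, {"order_id": 2, "amount": 5.0}]
bad_batch = [{"order_id": 1, "amount": "19.99"}]  # amount arrived as a string

enforce_contract(good_batch, orders_contract)  # passes through unchanged
```

Calling `enforce_contract(bad_batch, orders_contract)` raises `ContractViolation`, which is the behavior you want: the cleaning stage stops rather than handing a mistyped column to feature engineering.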

Teams sometimes "clean" by overwriting raw data. Don't. Keep an immutable raw layer so you can reprocess when definitions change, because they will change, and probably sooner than you expect.

This is also where analytic engineers often step in to turn raw inputs into pipeline-ready data. Tools like Domo's Magic Transform (with Structured Query Language, or SQL, and no-code options) can standardize transformation logic and reduce the "did we clean this the same way last time?" problem.

You may need to recruit a domain expert during this stage to help understand data and the impact of specific features or values. In our experience, this is the fastest way to catch "technically correct, practically wrong" transformations.

After the data is cleaned, you explore it for patterns, then turn it into features suitable for modeling. This is where data science pipelines diverge most clearly from general data pipelines. You're not just shaping data for storage; you're creating variables that capture the signals your models need. For churn prediction, that might mean days since last purchase, average monthly spend over six months, support ticket count in the past quarter, and product category diversity.
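As a rough illustration of those churn features, the sketch below computes them for a single customer using only the standard library. The function name `churn_features`, the tuple layout, and the sample data are assumptions made for this example, and "six months" and "a quarter" are approximated as 182 and 90 days.

```python
from datetime import date


def churn_features(purchases, ticket_dates, as_of):
    """Build churn features for one customer as of a given date.

    purchases:    list of (purchase_date, amount, category) tuples
    ticket_dates: list of support ticket dates
    """
    # Ignore anything dated after the as-of date.
    past = [p for p in purchases if p[0] <= as_of]
    last_purchase = max((p[0] for p in past), default=None)
    # Purchases within roughly the last six months (182 days).
    recent = [p for p in past if (as_of - p[0]).days <= 182]
    return {
        "days_since_last_purchase": (
            (as_of - last_purchase).days if last_purchase else None
        ),
        "avg_monthly_spend_6m": sum(p[1] for p in recent) / 6,
        "tickets_last_quarter": sum(
            1 for t in ticket_dates if 0 <= (as_of - t).days <= 90
        ),
        "category_diversity": len({p[2] for p in past}),
    }


# Made-up history for one customer.
purchases = [
    (date(2023, 3, 1), 40.0, "books"),
    (date(2024, 1, 15), 60.0, "books"),
    (date(2024, 5, 1), 120.0, "games"),
]
ticket_dates = [date(2023, 12, 1), date(2024, 4, 20)]

features = churn_features(purchases, ticket_dates, as_of=date(2024, 6, 1))
# features["days_since_last_purchase"] is 31 and features["avg_monthly_spend_6m"] is 30.0
```

Passing `as_of` explicitly, rather than reading today's date inside the function, keeps the same logic usable for both historical training rows and fresh predictions.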

Two important concepts to understand:

Teams often stumble by defining these twice, once for training and once for serving, with slightly different logic. That's training-serving skew waiting to happen. Treat feature definitions as shared assets, not "whatever worked in this notebook."

Point-in-time correctness matters here. When training a model to predict churn on January 1, you should only use data available through December 31. Using future data, even accidentally, creates data leakage that inflates model performance during training but fails in production.
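The January 1 example can be sketched as a simple cutoff filter. The function name `training_snapshot`, the event fields, and the year 2024 are illustrative assumptions, not part of any particular tool.

```python
from datetime import date


def training_snapshot(events, prediction_date):
    """Keep only events recorded strictly before the prediction date."""
    return [e for e in events if e["event_date"] < prediction_date]


# Made-up event log for one customer.
events = [
    {"customer_id": 1, "event_date": date(2023, 12, 30), "kind": "purchase"},
    {"customer_id": 1, "event_date": date(2023, 12, 31), "kind": "support_ticket"},
    {"customer_id": 1, "event_date": date(2024, 1, 3), "kind": "cancellation"},
]

# Predicting churn on January 1: only data through December 31 is allowed.
snapshot = training_snapshot(events, prediction_date=date(2024, 1, 1))
```

Here the January 3 cancellation is excluded. If it leaked into the features, the model would effectively be told the answer during training.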

As feature logic grows, governance starts to matter as much as math. If multiple teams define "active customer" or "monthly revenue" differently, models and dashboards drift apart. A governed semantic layer in your BI environment can help keep feature definitions and business metrics aligned with what stakeholders see and trust.

This is where the algorithms earn their keep. Using approaches like classification, regression, and clustering, you can find patterns and apply rules to the data or data models.

You can then test those rules on sample data to estimate how they might affect performance, revenue, or growth. It's tempting to chase one metric (accuracy, area under the curve (AUC), whatever is easiest to report), but production success usually depends on the tradeoffs: false positives, latency, interpretability, and cost.

Model selection is rarely a one-time decision. Pipelines should support iterative retraining as new data becomes available. Experiment tracking tools help you compare model versions and hyperparameter configurations, keeping a record of what was tried and what actually improved outcomes.

The output of this stage is a trained model artifact, along with metadata about training data, hyperparameters, and performance metrics.

Here's the reality check: a model that can't be evaluated, deployed, and monitored isn't finished.

The objective of this stage is to first evaluate model performance, then deploy to production, and finally establish ongoing monitoring.

Evaluation requires more than a single accuracy number. Use metrics appropriate to your problem type: precision and recall for classification, mean absolute error for regression, and slice metrics that show how the model performs across different segments of your data. "Great average performance" is not the same as "safe to ship." The worst-performing segments matter, and they're the ones most likely to surface in a post-launch review.
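To see why averages can mislead, the following sketch computes precision, recall, mean absolute error, and recall per segment by hand. The helper names and the tiny datasets are made up for illustration; in practice you would likely use a metrics library.

```python
from collections import defaultdict


def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between actual and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def recall_by_slice(y_true, y_pred, segments):
    """Recall computed separately for each segment label."""
    grouped = defaultdict(lambda: ([], []))
    for t, p, s in zip(y_true, y_pred, segments):
        grouped[s][0].append(t)
        grouped[s][1].append(p)
    return {s: precision_recall(ts, ps)[1] for s, (ts, ps) in grouped.items()}


# Made-up classification results: six "web" rows, then two "mobile" rows.
y_true = [1, 1, 1, 1, 0, 0, 1, 1]
y_pred = [1, 1, 1, 1, 0, 0, 0, 0]
segments = ["web"] * 6 + ["mobile"] * 2

overall_precision, overall_recall = precision_recall(y_true, y_pred)
slices = recall_by_slice(y_true, y_pred, segments)
# slices == {"web": 1.0, "mobile": 0.0}

# Made-up regression results.
mae = mean_absolute_error([10.0, 20.0, 30.0], [12.0, 18.0, 33.0])
```

Overall recall here is about 0.67 with perfect precision, which might look acceptable, yet the model misses every positive case in the `mobile` slice. That is the gap between "great average performance" and "safe to ship."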

Deployment patterns vary by use case. Batch scoring runs predictions on a schedule and writes results to a database. Online inference serves predictions via API in real time. Many organizations use canary deployments, gradually shifting traffic to new model versions while monitoring for problems.
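One way to picture a canary rollout is a router that sends a fixed percentage of requests to the new model. The sketch below is an assumption-laden illustration: `route_request` is a hypothetical function, and hashing the request ID is just one common way to make the split deterministic.

```python
import hashlib


def route_request(request_id, canary_percent):
    """Return 'canary' or 'stable' deterministically for a request ID."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "canary" if bucket < canary_percent else "stable"


# Send roughly 10% of traffic to the new model version.
decisions = [route_request(f"req-{i}", canary_percent=10) for i in range(10000)]
canary_share = decisions.count("canary") / len(decisions)
```

Because the bucket comes from a hash rather than a random draw, the same request ID always lands on the same version, which makes problems easier to reproduce. Raising `canary_percent` step by step shifts traffic gradually, and setting it to 0 sends everything back to the stable model.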

After deployment, model monitoring becomes critical. Data drift happens when input data distributions change over time. Concept drift shows up when the relationship between features and outcomes shifts. Performance decay looks like declining accuracy on recent data. Alerts help, but only if someone owns the response. Otherwise, you've built a very polite system that watches itself fail.
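As one possible way to quantify data drift, the sketch below computes a population stability index (PSI) between a training-time baseline and recent inputs. PSI is a technique chosen here for illustration, not something the pipeline stages above prescribe, and the binning scheme, the `1e-6` floor, and the sample data are all assumptions.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two samples of one numeric feature; 0.0 means identical bins."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate baseline: everything falls into one bin

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Made-up feature values: the "current" sample is shifted upward by 3.
baseline = [i / 100 for i in range(1000)]
shifted = [x + 3 for x in baseline]

no_drift = population_stability_index(baseline, baseline)
drift = population_stability_index(baseline, shifted)
```

`no_drift` is exactly 0.0 and `drift` is clearly positive. The alert threshold is something each team tunes for its own features, and as noted above, the alert only helps if someone owns the response.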

If you're deploying AI agents as part of this stage (not just models), you'll also want centralized management and human-in-the-loop controls so the agent behavior stays compliant with your policies and data access rules.

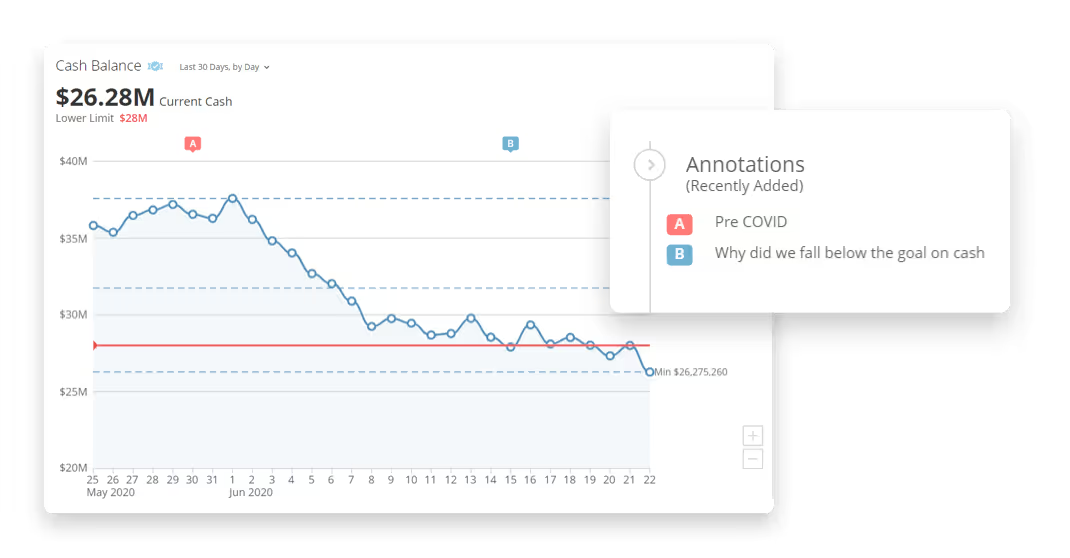

You can then communicate your findings to business leaders or colleagues using charts, dashboards, or reports.

Building a data science pipeline means treating each stage like a checkpoint. Data quality, schema validity, and access controls should be verified before anything moves forward.

Here's a practical approach.

What decisions will this pipeline support? What predictions or insights do you need? How fresh does the data need to be? Start there, before selecting tools or writing a single line of code.

This step supports both strategic alignment and governance. Pipelines built without clear objectives often produce outputs that look impressive but can't be trusted or acted on. Document requirements like data sources, acceptable latency, and success metrics, and define what "success" means in production, not just in a prototype.

It also helps to clarify who you're building for. Data engineers want reliable ingestion and fewer integration headaches. Analytic engineers want reusable transformation logic. BI leaders want consistent metrics and timely dashboards. IT leaders want pipeline-wide compliance and auditability. These are not the same set of requirements, and pretending they are is how you end up with a pipeline that technically works and practically satisfies no one.

Tool choice can make your pipeline feel effortless, or feel like a monthly subscription to chaos.

The data science ecosystem includes tools for each stage. Before committing to a platform, be clear about what you need at each layer:

A common selection mistake: teams pick orchestration first and assume everything else will "plug in." In practice, governance, identity, and metric definitions are the parts you'll regret skipping, so factor those in early, not as a follow-up conversation.

When evaluating platforms, consider connector coverage for your data sources, transformation capabilities, governed model deployment, and real-time access. Some organizations prefer assembling best-of-breed tools; others prefer consolidated platforms that handle multiple stages under unified governance.

If AI agents are part of your pipeline plan, add one more question: how will the agent get governed access to your data? For example, Domo's Agent Catalyst can link AI agents directly to governed Domo datasets using retrieval-augmented generation (RAG), which reduces the need for custom integrations just to get an agent working with trusted data.

Monitoring is the final implementation step, and skipping it is how "it worked in testing" becomes your team's least favorite phrase.

Set up monitoring for several key signals:

Establish alert thresholds and response procedures. When precision drops below a defined threshold, what happens next? Automated rollback to a previous model version can prevent bad predictions from reaching people while your team investigates.
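A threshold-plus-rollback procedure might look like the sketch below. `ModelRegistry` and `check_precision` are hypothetical stand-ins for whatever model registry and alerting system you use; the version names and numbers are made up.

```python
class ModelRegistry:
    """Hypothetical in-memory registry tracking which version serves traffic."""

    def __init__(self, versions):
        self.versions = list(versions)  # ordered oldest to newest
        self.active = self.versions[-1]

    def rollback(self):
        """Activate the version immediately before the current one."""
        idx = self.versions.index(self.active)
        if idx == 0:
            raise RuntimeError("no earlier version to roll back to")
        self.active = self.versions[idx - 1]
        return self.active


def check_precision(registry, precision, threshold, notify):
    """Roll back and notify an owner when precision falls below threshold."""
    if precision >= threshold:
        return "ok"
    failing = registry.active
    restored = registry.rollback()
    notify(
        f"precision {precision:.2f} < {threshold:.2f}: "
        f"rolled back {failing} -> {restored}"
    )
    return "rolled_back"


registry = ModelRegistry(["v1", "v2"])
alerts = []  # stand-in for a paging or chat integration

first = check_precision(registry, precision=0.91, threshold=0.85, notify=alerts.append)
second = check_precision(registry, precision=0.72, threshold=0.85, notify=alerts.append)
# After the second check, registry.active is "v1" and alerts holds one message.
```

The rollback buys time; it does not replace the investigation. The notification exists so a named owner knows the previous version is serving traffic and why.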

Even well-designed pipelines hit potholes. Knowing the common failure modes helps you build systems that survive contact with reality.

Data leakage remains one of the most costly mistakes. It happens when information from the future influences model training, like using the target variable in feature creation or including data that wouldn't be available at prediction time. The result is a model that performs brilliantly in testing but fails in production.

Training-serving skew happens when the features computed during training differ from those computed during inference. This often shows up when training uses batch-computed features but serving requires real-time computation with slightly different logic. It's a subtle failure mode, and by the time you notice it, you've usually already shipped something that's quietly underperforming.
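One common guard against this skew is to route both paths through a single feature function. The sketch below assumes hypothetical names (`spend_features`, `build_training_rows`, `build_serving_row`) and made-up data; the pattern, not the specifics, is the point.

```python
def spend_features(amounts):
    """Single source of truth for spend features, shared by both paths."""
    total = sum(amounts)
    return {
        "order_count": len(amounts),
        "total_spend": round(total, 2),
        "avg_order_value": round(total / len(amounts), 2) if amounts else 0.0,
    }


def build_training_rows(history):
    """Batch path: compute features for every customer in a history table."""
    return {customer: spend_features(amounts) for customer, amounts in history.items()}


def build_serving_row(recent_amounts):
    """Online path: compute features for one customer at request time."""
    return spend_features(recent_amounts)


# Made-up order history.
history = {"c1": [20.0, 40.0], "c2": []}

training_rows = build_training_rows(history)
serving_row = build_serving_row([20.0, 40.0])
# training_rows["c1"] == serving_row, because both call the same function
```

If the serving team reimplemented `avg_order_value` with, say, different rounding or different handling of empty histories, the model would see inputs at inference that it never saw in training.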

Fragile pipelines break when upstream sources change schemas, go offline, or deliver data in unexpected formats. Validation checks and graceful failure handling at each stage reduce the blast radius.

Governance gaps create compliance and auditability issues. Without lineage, you may not be able to explain how a prediction was generated or trace a data quality issue back to its source.

Tool fragmentation is another common culprit. When ingestion, transformation, model delivery, and BI live in disconnected systems, teams lose end-to-end visibility. IT and data leaders often see this as risk; data teams feel it as slowdowns and one-off fixes.

A few habits make pipelines easier to trust, and easier to maintain when requirements shift.

Start with modular, testable components. Each stage should be independently testable with clear inputs and outputs. That makes debugging easier and lets you update one component without rebuilding the whole pipeline.

Implement validation gates between stages. Rather than letting bad data flow through and corrupt downstream outputs, stop the pipeline when data fails quality checks. Fail fast. Fix early.
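Modular stages and validation gates fit together naturally. In the minimal sketch below, each stage is a `(name, transform, gate)` tuple, and the runner stops at the first gate that fails. `run_pipeline`, `GateFailed`, and the two example stages are hypothetical and deliberately simple.

```python
class GateFailed(Exception):
    """Raised when a stage's output does not pass its quality gate."""


def run_pipeline(data, stages):
    """Run (name, transform, gate) stages in order; stop at the first failed gate."""
    for name, transform, gate in stages:
        data = transform(data)
        if not gate(data):
            raise GateFailed(f"stage '{name}' failed its quality gate")
    return data


def dedupe(values):
    """Remove duplicates and return values in sorted order."""
    return sorted(set(values))


def passthrough(values):
    """No transformation; this stage exists only to apply a check."""
    return values


stages = [
    ("dedupe", dedupe, lambda values: len(values) > 0),
    ("non_negative", passthrough, lambda values: all(v >= 0 for v in values)),
]

clean = run_pipeline([3, 1, 3, 2], stages)  # [1, 2, 3]
```

Running `run_pipeline([3, -1], stages)` raises `GateFailed` at the `non_negative` stage, so nothing downstream sees the bad value. Because each transform and gate is a plain function, each can be unit-tested on its own.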

Version everything. Data, code, model artifacts, and configuration should all be versioned and traceable. When something goes wrong, you need to know exactly what version of each component was running.

Document as you build. Capture schemas, feature definitions, model assumptions, and deployment procedures. This documentation becomes essential when onboarding new team members or debugging issues months later.

Governance in data science pipelines means embedding controls into the workflow rather than relying on manual reviews. That includes access controls that limit who can modify components, audit trails that log transformations and model updates, and approval workflows for promoting models to production.

Reproducibility requires versioning at multiple levels. Track dataset versions so you can recreate the exact data used for any training run. Lock environment dependencies so code runs the same way months later. Log random seeds and hyperparameters so experiments can be replicated.
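The sketch below shows the kind of record that makes a run repeatable: a fingerprint of the dataset, the seed, and the hyperparameters, stored alongside the result. The function names and the `train_fraction` parameter are assumptions for illustration; dedicated experiment trackers capture far more.

```python
import hashlib
import json
import random


def dataset_fingerprint(rows):
    """Hash the dataset contents so a run can name the exact data it used."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_experiment(rows, hyperparameters, seed):
    """Split the data reproducibly and return a manifest describing the run."""
    rng = random.Random(seed)  # local generator, so the seed fully controls the split
    shuffled = rows[:]
    rng.shuffle(shuffled)
    split = int(len(shuffled) * hyperparameters["train_fraction"])
    return {
        "dataset_sha256": dataset_fingerprint(rows),
        "seed": seed,
        "hyperparameters": hyperparameters,
        "train_ids": [row["id"] for row in shuffled[:split]],
    }


# Made-up dataset and settings.
rows = [{"id": i, "value": i * 1.5} for i in range(10)]
params = {"train_fraction": 0.8}

first_run = run_experiment(rows, params, seed=42)
second_run = run_experiment(rows, params, seed=42)
# first_run == second_run: same data, same seed, same split
```

Changing a single value in `rows` changes `dataset_sha256`, which is how you would notice that two "identical" runs were actually trained on different data.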

Lineage tracking connects outputs back to their sources. When a dashboard shows an unexpected number, lineage lets you trace that value through each transformation to the original source data. This is essential for debugging, compliance, and building trust.
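At its simplest, lineage is a graph in which every dataset records its parents and the transformation that produced it. The sketch below uses a plain dictionary and hypothetical dataset names; production lineage tools capture this metadata automatically.

```python
lineage = {}  # dataset name -> {"transform": description, "parents": [names]}


def register(name, transform, parents=()):
    """Record how a dataset was produced and what it was produced from."""
    lineage[name] = {"transform": transform, "parents": list(parents)}


def trace_sources(name):
    """Walk parent links until reaching datasets that have no parents."""
    parents = lineage[name]["parents"]
    if not parents:
        return {name}
    sources = set()
    for parent in parents:
        sources |= trace_sources(parent)
    return sources


# Made-up pipeline: two raw sources feed one dashboard dataset.
register("raw_orders", "ingest from orders database")
register("raw_customers", "ingest from CRM export")
register("clean_orders", "drop duplicates", parents=["raw_orders"])
register(
    "revenue_dashboard",
    "join and aggregate by month",
    parents=["clean_orders", "raw_customers"],
)

sources = trace_sources("revenue_dashboard")
# sources == {"raw_orders", "raw_customers"}
```

When the dashboard number looks wrong, this kind of lookup tells you which source systems and which transformations to inspect first.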

For enterprise teams, governance also means pipeline-wide compliance: centralized control and visibility from ingestion through transformation to AI model inputs (and, if you're using them, agent workflows). There's a real difference between "we think it's right" and "we can prove it's right," and most organizations don't close that gap until something goes wrong.

A practical reproducibility checklist includes dataset versioning with tools like Data Version Control (DVC) or Delta Lake, code versioning with Git, environment locking with Docker or Conda, seed control for random operations, experiment tracking with MLflow or similar tools, and lineage metadata captured at each transformation.

Tools matter, but fit matters more. The goal is to combine machine learning, data analysis, and statistics in a way that people can actually use, often through visualizations and reporting.

When evaluating tools, map your needs to each pipeline stage:

Selection criteria should include latency requirements, team skills, governance needs, and cost constraints. Organizations with strong Python expertise may prefer Prefect or Dagster for orchestration. Teams focused on SQL-based transformations often choose dbt. Enterprises with strict compliance requirements may need managed services with built-in audit capabilities.

Domo's data visualization tools feature easy-to-use, customizable dashboards that enable people to create rich stories using real-time data. From pie charts and graphs to interactive maps and other visualizations, Domo helps you create detailed models with just a few clicks. Plus, Domo's Analyzer tool makes it easy to get started by suggesting potential visualizations based on your data.

Powered by machine learning and artificial intelligence, Domo's data science suite includes tools that simplify data gathering and analysis. With a large connector library, data from across teams is brought into Domo's platform and kept governed as it moves through the pipeline. From there, tools like Magic Transform can automate transformation logic, and Agent Catalyst can connect AI agents to governed datasets (including through RAG) so your pipeline-native AI experiences don't depend on one-off integrations.

Pipelines show up differently by industry because constraints change: latency, regulation, risk tolerance, and what "good" looks like.

Risk analysis in financial services requires processing large, unstructured datasets to understand where potential risks from competitors, the market, or customers lie and how they can be avoided. These pipelines typically use batch processing for historical analysis combined with streaming for real-time fraud detection. Organizations have utilized Domo's data science and machine learning (DSML) tools and model insights to perform proactive planning and risk remediation.

Medical research relies on data science pipelines as well. One study uses machine learning algorithms to investigate how to improve image quality in MRIs and X-rays. These pipelines emphasize data quality and reproducibility given regulatory requirements. Companies outside the medical profession have seen success using Domo's Natural Language Processing and DSML to determine how specific actions will affect the customer experience, enabling them to address risks ahead of time and maintain a positive experience.

Demand forecasting in transportation and supply chain uses data science pipelines to predict how construction or other road projects will affect traffic, which helps professionals plan efficient responses. These pipelines often combine batch processing for training with real-time inference for operational decisions. Other business teams have seen success using Domo's DSML solutions to forecast future product demand. The platform features multivariate time series modeling at the stock keeping unit (SKU) level, enabling them to plan properly across the supply chain.

A data pipeline focuses on moving and transforming data from source systems to storage destinations like data warehouses. A data science pipeline covers data movement plus exploration, feature engineering, model training, evaluation, and deployment. The data science pipeline produces actionable insights and predictions, not just clean data.

Timeline varies significantly based on complexity. A simple pipeline with a single data source and batch processing might take a few weeks. Enterprise pipelines with multiple data sources, real-time requirements, and strict governance needs can take several months. Starting with a minimal viable pipeline and iterating is often more effective than attempting to build everything at once.

Building pipelines typically requires a combination of data engineering skills for data movement and transformation, data science skills for feature engineering and modeling, and some software engineering skills for deployment and monitoring. Many organizations distribute these responsibilities across specialized roles rather than expecting one person to handle everything.

Effective monitoring includes data quality checks at each stage, model performance metrics tracked over time, and alerts for anomalies like data drift or performance decay. If you can trace any output back to its source data and explain how it was generated, your pipeline has good observability.

The answer depends on your team's expertise, timeline, and specific requirements. Open-source tools offer flexibility and avoid vendor lock-in but require more engineering effort to integrate and maintain. Managed platforms reduce operational burden but may constrain customization. Many organizations use a hybrid approach, combining open-source components with managed services where operational simplicity matters most.