What Is Real-Time Data? Definition, Benefits, and Best Practices for 2026

Real-time data enables decisions in milliseconds rather than hours. Getting there, though, requires understanding the full pipeline from ingestion to serving, plus the tradeoffs around cost, complexity, and data quality. This article breaks down the definition of real-time data, explains how processing works across each layer, compares it to batch and near-real-time approaches, and provides a roadmap for getting started with quick wins that build momentum across your organization.

Key takeaways

Here are the core ideas to keep in your back pocket as you read:

- Real-time data is information captured, processed, and delivered within a defined latency window (typically under one second), enabling decisions in milliseconds rather than hours or days

- Unlike batch processing, real-time data flows continuously through ingestion, stream processing, and serving layers, with each stage contributing to the overall latency budget

- Key benefits include more timely decision-making, proactive alerting, fraud detection, and competitive advantage, though each benefit comes with implementation considerations around cost and data quality

- Implementation challenges include scalability, data quality management (handling late events, duplicates, and out-of-order data), and cost considerations

- Getting started requires understanding your data sources, identifying quick wins where delayed data causes visible business friction, and building a phased roadmap

- Real-time only works when people trust the data, so governance (security, compliance, and access controls) has to travel with the data, not chase it

What is real-time data?

Real-time data is information that is captured, processed, and delivered within a defined latency window (typically under one second) so it can trigger decisions or automated actions the moment conditions change. Batch data works differently. It is collected and processed at set intervals, which means you're always looking at what happened rather than what's happening. This speed gap matters enormously for time-sensitive applications like financial trading, healthcare monitoring, and logistics tracking, where quick decisions can make all the difference.

The term "real-time" exists on a spectrum. True real-time systems deliver data in sub-second timeframes, while near-real-time systems may take anywhere from a few seconds to several minutes. Understanding where your use case falls on this spectrum helps determine the right architecture and investment level.

Key characteristics of real-time data

Real-time data comes with unique qualities that make it indispensable for time-sensitive decision-making. Before diving into specific characteristics, it helps to understand the distinction between "real-time" and "live" data. Real-time refers to an end-to-end latency guarantee: data moves from source to action within a target defined by a service-level agreement (SLA), such as under one second. Live describes a user interface (UI) state in which a dashboard or display refreshes on a schedule, so what it shows may actually be minutes old. A "live" dashboard that refreshes every five minutes? Not real-time by this definition. That distinction trips up many teams when setting stakeholder expectations.

Here's why real-time data matters:

- Immediate availability: Real-time data is available almost instantly after being generated. Businesses and organizations can act quickly, making decisions based on the most up-to-date information. Whether responding to a sudden market shift or analyzing customer activity as it happens, immediate access provides a competitive edge.

- Time-sensitive value: The value of real-time data lies in its timing. Decisions made quickly based on this data can have a significant impact, while delays in action may render the information less useful. In fast-paced industries like finance, healthcare, or e-commerce, acting on real-time insights often determines success or failure.

- Dynamic updates: Real-time data is delivered as a steady stream of fresh information, ensuring you are always working with the latest insights. This is especially critical for tasks like monitoring system performance, analyzing customer behavior, or tracking market trends, where outdated information can lead to poor decisions.

- Low-latency processing: To maximize its impact, real-time data requires rapid analysis and immediate response. Efficient systems for processing this data ensure businesses can act quickly, reducing delays that might compromise success. This speed is crucial for applications such as fraud detection, live supply chain management, or personalized customer experiences.

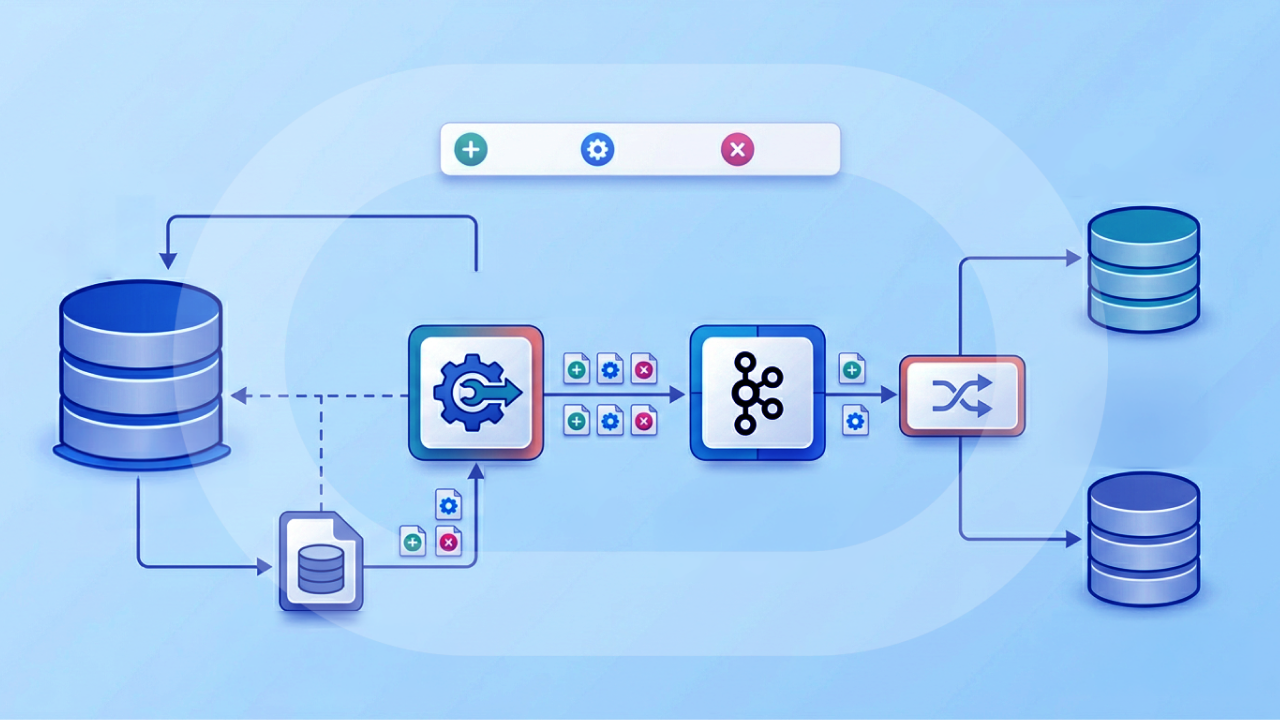

How real-time data processing works

Understanding how real-time data moves from source to action helps clarify where latency is introduced and how to optimize each stage. The end-to-end pipeline consists of several layers, each contributing to the total time between when an event occurs and when your system can respond.

Think of it as a latency budget. If your SLA requires sub-second response times, you need to allocate that budget across ingestion (perhaps 50 milliseconds), processing (200 milliseconds), and serving (100 milliseconds), leaving headroom for network variability. Knowing where delay accumulates helps you identify bottlenecks and make targeted improvements.
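To make the budget concrete, here is a minimal Python sketch that uses the illustrative 50/200/100 ms split from the paragraph above. The names `BUDGET_MS`, `headroom_ms`, and `over_budget` are hypothetical. The "measured" values are made up to show how an overrun would be flagged.

```python
# Illustrative stage allocations from the example above, in milliseconds (hypothetical).
SLA_MS = 1000
BUDGET_MS = {"ingestion": 50, "processing": 200, "serving": 100}

def headroom_ms(sla_ms: int, budget: dict) -> int:
    """Time left for network variability once every stage has its allocation."""
    return sla_ms - sum(budget.values())

def over_budget(measured_ms: dict, budget: dict) -> list:
    """Stages whose measured latency exceeds their allocation (unknown stages count as 0 ms budget)."""
    return [stage for stage, ms in measured_ms.items() if ms > budget.get(stage, 0)]

if __name__ == "__main__":
    print(headroom_ms(SLA_MS, BUDGET_MS))  # 650
    measured = {"ingestion": 42.0, "processing": 310.5, "serving": 88.0}  # made-up sample
    print(over_budget(measured, BUDGET_MS))  # ['processing']
```

Tracking each stage against its own slice makes it clear which layer to optimize. A single end-to-end number only tells you that something is slow.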

Feeling the "too many tools, too many handoffs" pain? You're not alone. Many teams end up with separate tooling for ingestion, governance, monitoring, and serving. A fancy way of saying: more things to babysit at 2:00 am. Centralized pipeline management helps reduce manual intervention while keeping security and compliance intact.

Data ingestion

Data ingestion is the entry point where information flows into your real-time system. Three primary patterns handle this stage:

- API polling and webhooks: Your system either polls an application programming interface (API) on a schedule, or the source application pushes events to your hypertext transfer protocol (HTTP) endpoint as they happen (a webhook). Webhooks provide lower latency because data arrives as soon as an event occurs rather than at the next poll.

- Change data capture (CDC): CDC tools read database transaction logs and stream changes as they happen. This approach captures inserts, updates, and deletes from online transaction processing (OLTP) databases with minimal impact on source system performance, because it reads the log instead of repeatedly querying the tables themselves.

- Event streaming via brokers: High-volume systems use message brokers like Apache Kafka or Amazon Kinesis to handle continuous data flows. These brokers provide durability, ordering guarantees (within a partition or shard, not across the whole stream), and the ability to replay events when needed.

The ingestion pattern you choose depends on your data sources, volume, and latency requirements. CDC works well for database changes. Webhooks suit application events. Streaming brokers handle high-volume continuous data.
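The CDC pattern can be sketched as applying a stream of change events to a downstream copy of a table. This minimal Python example assumes events loosely modeled on the Debezium envelope. In that format, `op` is `"c"` (insert), `"u"` (update), `"d"` (delete), or `"r"` (snapshot read), and `before`/`after` hold the row images. Other CDC tools use different formats, and `apply_change` is a hypothetical helper.

```python
from typing import Any, Dict

Row = Dict[str, Any]

def apply_change(table: Dict[Any, Row], event: Dict[str, Any], key: str = "id") -> None:
    """Apply one change event to an in-memory replica keyed by primary key.

    Events must be applied in commit order for the replica to stay correct.
    """
    op = event["op"]
    if op in ("c", "u", "r"):         # insert, update, or snapshot read: upsert the new row image
        row = event["after"]
        table[row[key]] = row
    elif op == "d":                    # delete: remove by the key in the "before" image
        table.pop(event["before"][key], None)
    else:
        raise ValueError(f"unknown op: {op!r}")

replica: Dict[Any, Row] = {}
events = [
    {"op": "c", "before": None, "after": {"id": 1, "status": "pending"}},
    {"op": "u", "before": {"id": 1, "status": "pending"}, "after": {"id": 1, "status": "shipped"}},
    {"op": "c", "before": None, "after": {"id": 2, "status": "pending"}},
    {"op": "d", "before": {"id": 2, "status": "pending"}, "after": None},
]
for e in events:
    apply_change(replica, e)
print(replica)  # {1: {'id': 1, 'status': 'shipped'}}
```

Because the replica is rebuilt purely from the log, ordering matters. This is one reason brokers' per-partition ordering guarantees are relevant for CDC streams.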

In practice, ingestion also comes down to how many sources you're wrangling and how often those connections break. For platform buyers and data leaders, that's why connector coverage and centralized governance matter: you want to ingest data from the systems you already run (think cloud platforms and business systems like Oracle NetSuite) without signing up for constant custom pipeline maintenance. Domo's Data Integration, for example, supports integration with over 1,000 data sources so teams can automate ingestion across the ecosystem and keep pipelines governed and secure as they scale.

Stream processing

Once data enters the pipeline, stream processing handles filtering, transformation, and aggregation while data is in motion. Rather than storing data first and processing later (the batch processing approach), stream processors analyze records as they flow through.

This stage introduces its own challenges around data correctness. Late-arriving events, duplicate records, and out-of-order data are common in distributed systems. Stream processors distinguish between event time (when something actually happened) and processing time (when the system received it). A sensor reading that arrives 10 seconds late should be evaluated against when it occurred, not when it was received.

Techniques like watermarking and windowing help manage these scenarios, though they require careful configuration to balance latency against accuracy. And honestly, getting this wrong is where a lot of teams stumble. Set the watermark too aggressively (or windows too tight) and valid late events get dropped or results are emitted before they are complete. Set it too leniently and results wait longer than your latency budget allows.
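The interplay between event time, windows, and watermarks is easier to see in code. Below is a minimal single-process Python sketch of event-time tumbling windows. It assumes a simplified watermark rule: the watermark is the maximum event time seen minus a fixed `max_delay`. Production stream processors handle watermarks, allowed lateness, and triggers with far more nuance. The class and parameter names are hypothetical.

```python
from collections import defaultdict

class TumblingWindowCounter:
    """Counts events per event-time window and closes windows with a watermark.

    Simplified model (assumption): watermark = max event time seen - max_delay.
    A window [start, start + size) is emitted once the watermark reaches its end.
    """

    def __init__(self, size: int, max_delay: int):
        self.size = size
        self.max_delay = max_delay
        self.max_event_time = float("-inf")
        self.open_windows = defaultdict(int)  # window start -> running count
        self.closed = {}                      # emitted results: window start -> count
        self.late = []                        # events that arrived after their window closed

    def watermark(self) -> float:
        return self.max_event_time - self.max_delay

    def add(self, event_time: int) -> None:
        start = event_time - event_time % self.size  # window is chosen by event time, not arrival
        if start + self.size <= self.watermark():
            self.late.append(event_time)  # window already emitted: route to a side output
            return
        self.open_windows[start] += 1
        self.max_event_time = max(self.max_event_time, event_time)
        self._close_ready_windows()

    def _close_ready_windows(self) -> None:
        wm = self.watermark()
        for start in sorted(self.open_windows):  # sorted() copies keys, so popping is safe
            if start + self.size <= wm:
                self.closed[start] = self.open_windows.pop(start)

counter = TumblingWindowCounter(size=10, max_delay=5)
for t in [1, 4, 12, 3, 16, 25, 7]:   # arrival order, not event-time order
    counter.add(t)
print(counter.closed)              # {0: 3, 10: 2}
print(counter.late)                # [7]
print(dict(counter.open_windows))  # {20: 1}
```

The event with time 3 arrives after the event with time 12 but is still counted in window [0, 10), because the watermark has not yet passed 10. The event with time 7 arrives after the watermark has reached 20, so it lands in the late list. Raising `max_delay` would have captured it, at the cost of emitting every window later.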

One more 2026-era twist: real-time data is not just for dashboards anymore. Data engineers increasingly need governed, current data feeding downstream systems like AI agents, where stale context can create confident-sounding answers that are simply wrong. Tools like Agent Catalyst can connect AI agents to governed Domo datasets and FileSets using retrieval-augmented generation (RAG) so agents pull from approved data instead of improvising.

Storage and serving

Processed data needs a home where downstream applications and dashboards can query it quickly. Storage selection depends on your latency requirements:

- Real-time online analytical processing (OLAP) stores: Systems like ClickHouse, Apache Druid, or Apache Pinot deliver sub-second query latency for operational dashboards and interactive analytics.

- Time-series databases: Tools like InfluxDB or TimescaleDB optimize for sensor telemetry, metrics, and other timestamped data with high write throughput.

- Data warehouses: Platforms like Snowflake or BigQuery suit workloads where data that is minutes to hours old is acceptable and the freshest data is not critical for every query.

The serving layer connects storage to visualization tools, APIs, and alerting systems. Materialized views and caching strategies help maintain fast query performance as data volumes grow.
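The idea behind a materialized view can be shown without any particular database. Aggregates are updated as each record arrives, so a query becomes a lookup instead of a scan. This is a minimal Python sketch of that incremental-maintenance idea. The "revenue by region" view and its method names are hypothetical, and real OLAP stores declare materialized views in SQL rather than application code.

```python
from collections import defaultdict

class RevenueByRegionView:
    """Hypothetical materialized view: revenue and order count per region, maintained on write."""

    def __init__(self) -> None:
        self.totals = defaultdict(float)
        self.counts = defaultdict(int)

    def on_order(self, region: str, amount: float) -> None:
        # Update the pre-aggregated totals as each order arrives.
        self.totals[region] += amount
        self.counts[region] += 1

    def query(self, region: str) -> tuple:
        # .get avoids inserting empty entries for regions never seen.
        return self.totals.get(region, 0.0), self.counts.get(region, 0)

view = RevenueByRegionView()
for region, amount in [("west", 120.0), ("east", 80.0), ("west", 30.5)]:
    view.on_order(region, amount)
print(view.query("west"))   # (150.5, 2)
print(view.query("north"))  # (0.0, 0)
```

The tradeoff is that each write does a little more work so that reads stay fast as data volume grows, which is the property dashboards need.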

This is also where a lot of value shows up for line-of-business teams. The "serve" step is not only about query latency; it's about getting current key performance indicators (KPIs) into the places people already work. Domo BI can surface up-to-date, interactive dashboards, and Domo Apps can package role-specific views so leaders and managers can take action without digging through a maze of reports.

Real-time data vs batch data vs near-real-time data

Choosing the right processing approach depends on your business requirements, not just technical preferences. The following sections provide latency thresholds and decision criteria to help you evaluate which model fits your use case.

Real-time data vs live data

These terms get used interchangeably. They shouldn't be.

Real-time refers to an end-to-end latency guarantee that applies to the full pipeline from event generation to action trigger. If a transaction occurs and your fraud model evaluates it within 200 milliseconds, that's real-time processing.

Live describes a user interface or dashboard that updates on a refresh schedule. A "live" dashboard that polls for new data every five minutes is not real-time by this definition, even though it displays current information. A live view may be sufficient for executive dashboards, while fraud detection requires true real-time processing.

When to use each approach

Not everything needs true real-time processing. Consider these decision criteria when selecting an approach:

For true real-time, ask whether a delayed decision causes measurable harm. Fraud detection, safety alerts, and algorithmic trading require sub-second response because waiting even a few seconds can result in financial loss or safety incidents.

For near-real-time, evaluate whether your teams need data that's minutes old rather than hours old. Operational dashboards, inventory tracking, and customer behavior analytics often work well with data that is a few minutes behind, and the reduced infrastructure complexity can be worth the tradeoff.

For batch processing, consider whether your use case involves periodic reporting, historical analysis, or processes that naturally run on a schedule. Payroll, monthly financial close, and data archival don't benefit from real-time infrastructure.
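The three decision criteria can be condensed into a small function. This is an illustrative Python sketch, not a sizing tool. The 15-minute cutoff for "minutes old" is a hypothetical assumption, and the function name is made up.

```python
def choose_processing_model(max_staleness_s: float, delay_causes_harm: bool,
                            near_real_time_max_s: float = 15 * 60) -> str:
    """Map the decision criteria above to a processing model.

    Thresholds are illustrative: sub-second only when delay causes measurable
    harm, up to `near_real_time_max_s` (hypothetical 15 minutes) for
    near-real-time, and anything staler for batch.
    """
    if delay_causes_harm and max_staleness_s < 1:
        return "real-time"
    if max_staleness_s <= near_real_time_max_s:
        return "near-real-time"
    return "batch"

print(choose_processing_model(0.2, True))      # real-time (e.g., fraud scoring)
print(choose_processing_model(300, False))     # near-real-time (e.g., inventory dashboard)
print(choose_processing_model(86_400, False))  # batch (e.g., monthly close)
```

Note that a sub-second freshness wish without measurable harm still maps to near-real-time, which reflects the point that not everything needs true real-time processing.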

Common sources of real-time data

Real-time data is created through a wide range of systems and technologies that capture and transmit live information. These sources generate either streaming data (a continuous series of values) or event data (discrete state changes). Here are some key sources:

- Sensors and Internet of Things (IoT) devices (streaming): These include environmental monitors, smart home devices, and industrial sensors. They gather real-time data on temperature, humidity, equipment performance, and more, enabling immediate action when needed.

- Global Positioning System (GPS) and location services (streaming): Used extensively in transportation and logistics, these systems track vehicles, shipments, or individuals in real time, ensuring efficient navigation and delivery.

- Websites and mobile apps (event): These platforms continuously monitor user behavior, such as clicks, purchases, and time spent, providing valuable insights into customer interactions as they happen.

- Cameras and video systems (streaming): Security cameras, facial recognition systems, and visual analytics tools deliver live video feeds and analyze data in real time for enhanced surveillance and decision-making.

- Social media platforms (event): Networks like X (formerly Twitter), Instagram, and Facebook offer immediate insights into emerging trends, public sentiment, and breaking news as people post and engage.

- Point-of-sale systems (event): Retail and hospitality businesses rely on these systems to record live transaction data, enabling instant inventory updates, sales tracking, and performance analysis.

Benefits of real-time data

Whether you like it or not, your organization needs timely access to its data to run efficiently. Delays in getting that data cause real problems: late reporting, slow decision-making, and poor choices. Businesses of all sizes can benefit from real-time data, though each benefit comes with implementation considerations.

Timely alerting and exception management

Exceptions that can harm the business usually show up in the data first. Businesses use many different software systems to keep track of their customers, employees, and partners. When these systems feed real-time data to a real-time business intelligence (BI) tool, you can build exception reporting that flags these irregularities as they occur.

BI tools can use email, text message, or push notifications to notify a person when action needs to be taken. For example, say your business operates a call center to answer customer questions. By feeding real-time data from your call center software into a BI tool, you could create an alert that notifies a manager whenever the average call wait time exceeds 10 minutes. The manager can then intervene to reduce the wait time before customers abandon calls or escalate complaints.

Organizations that implement real-time alerting often see measurable improvements in mean time to detect (MTTD) issues, sometimes reducing detection from hours to minutes. Without proper thresholds and data quality, though, teams can experience alert fatigue from false positives.
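The call-center alert above pairs naturally with one common guard against alert fatigue: a cooldown that suppresses repeat notifications for the same sustained incident. This Python sketch makes several assumptions. The rolling average covers the last `window` calls, the cooldown is 15 minutes by default, and the class and parameter names are hypothetical rather than any BI tool's API.

```python
from collections import deque
from typing import Optional

class WaitTimeAlert:
    """Alert when the rolling average call wait time exceeds a threshold.

    Assumptions (hypothetical): the average covers the last `window` calls,
    and `cooldown_s` suppresses repeat alerts during one sustained incident.
    """

    def __init__(self, threshold_min: float = 10.0, window: int = 20, cooldown_s: float = 900.0):
        self.threshold_min = threshold_min
        self.waits = deque(maxlen=window)  # oldest wait times drop off automatically
        self.cooldown_s = cooldown_s
        self.last_alert_at: Optional[float] = None

    def observe(self, now_s: float, wait_min: float) -> bool:
        """Record one call's wait time; return True if an alert should fire."""
        self.waits.append(wait_min)
        avg = sum(self.waits) / len(self.waits)
        if avg <= self.threshold_min:
            return False
        if self.last_alert_at is not None and now_s - self.last_alert_at < self.cooldown_s:
            return False  # still in cooldown: suppress the duplicate alert
        self.last_alert_at = now_s
        return True

alert = WaitTimeAlert(threshold_min=10, window=3, cooldown_s=900)
calls = [(0, 4), (60, 12), (120, 16), (180, 14), (1200, 13)]  # (seconds, wait minutes), made up
print([alert.observe(t, w) for t, w in calls])  # [False, False, True, False, True]
```

Averaging over several calls keeps one unusually long wait from paging a manager. The cooldown keeps a single ongoing backlog from generating a stream of identical alerts.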

Empowered employees and informed decisions

Employees with better access to data tend to do their jobs more effectively. We know this. The challenge many organizations face is the dependency problem: managers and frontline workers wait on weekly reports or analyst availability before they can act on information that is already outdated.

With real-time data, employees can make informed decisions, help more customers, and work more efficiently day to day.

Consider a sales rep who wants to keep closer track of prospects and current customers. With real-time phone call, email, and customer relationship management (CRM) data, the rep can make informed decisions that help each customer. Purchasing decisions can be complicated, so making sure the rep has all the data they need is critical.

For a lot of teams, the win is not just "we have data." It is "I can answer my own question right now." Tools like Domo BI are designed for that self-service moment with intuitive dashboards, and features like AI chat can help managers explore current performance without waiting on an analyst to run a query. Domo Apps can take it a step further by embedding role-specific insights and actions into day-to-day workflows.

Companies with real-time data are well equipped to make informed business decisions and execute on company strategy. Traditional reporting is often produced only quarterly, and sometimes only annually. With access to real-time data and reporting, executives can make more informed decisions for the business.

One example is using real-time data to generate financial reports such as a profit and loss (P&L) statement or balance sheet. When executives can see these reports in real time, they understand the organization's financial health right now, rather than as it stood last quarter.

For finance teams running on an enterprise resource planning (ERP) system like Oracle NetSuite, the gap can be especially painful: getting up-to-date numbers too often means manual exports or an IT ticket. Integrations that bring NetSuite financial and operational data into governed dashboards can give finance leaders a live view of P&L and cash flow without depending on scheduled report runs.

Competitive advantage and fraud prevention

Real-time data systems help businesses stay ahead of the competition by enabling shorter product development cycles, better customer experiences, and faster adaptation to changing market demands. Organizations can innovate more effectively and capture emerging opportunities before competitors, strengthening their position as industry leaders.

Real-time analytics also play a critical role in detecting and preventing fraudulent activity. By analyzing patterns and identifying anomalies as events arrive, these systems can flag suspicious transactions or behaviors immediately, helping to protect sensitive data and prevent breaches.

Consider the cost of delayed data in fraud detection: a transaction approved before a fraud signal is processed becomes a loss that's difficult to recover. Financial institutions using real-time fraud detection can evaluate transactions within 200 milliseconds, blocking suspicious activity before it completes. This sub-second response window is the difference between preventing fraud and documenting it after the fact.

Reduced downtime

Proactive monitoring systems help identify and address operational issues before they escalate into major disruptions. By pinpointing potential problems in advance, businesses can minimize downtime, maintain operations, and ensure productivity. This is especially critical in industries where even brief interruptions can lead to significant losses.

Challenges of real-time data

Real-time data capabilities come with implementation challenges that organizations should anticipate. Understanding these obstacles upfront helps teams plan appropriately and set realistic expectations.

Scalability and infrastructure requirements

Real-time systems must handle variable data volumes while maintaining consistent latency. Unlike batch systems that can queue work for later processing, streaming infrastructure needs capacity for peak loads at all times.

Infrastructure complexity grows not just with data volume, but with the number of sources and downstream consumers. Each new data source requires connectors, schema management, and monitoring. Each new consumer adds query load and potential contention. Organizations often underestimate this operational overhead when planning real-time initiatives.

Mitigation strategies include horizontal scaling through partitioning, auto-scaling policies based on throughput metrics, and careful capacity planning that accounts for growth projections.
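Two of these mitigations, partitioning and throughput-based scaling, can be sketched together. The example assumes key-hash partitioning, where records with the same key always go to the same partition, preserving per-key order. CRC32 is used purely for illustration; brokers use their own hash functions. The scaling rule, the 30 percent headroom, and the function names are hypothetical. The cap at the partition count reflects how partition-based brokers such as Kafka assign partitions to consumers: extra consumers in a group beyond the partition count sit idle.

```python
import math
import zlib

def partition_for(key: str, num_partitions: int) -> int:
    """Stable key-to-partition mapping (CRC32 for illustration only).

    Same key -> same partition, so per-key ordering is preserved while
    different keys spread across partitions.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions

def consumers_needed(events_per_s: float, per_consumer_capacity: float,
                     num_partitions: int, headroom: float = 0.3) -> int:
    """Hypothetical scaling rule: observed rate plus a safety margin,
    capped at the partition count (consumers beyond it would be idle)."""
    needed = math.ceil(events_per_s * (1 + headroom) / per_consumer_capacity)
    return max(1, min(needed, num_partitions))

print(partition_for("customer-42", 12) == partition_for("customer-42", 12))  # True
print(consumers_needed(12_000, 2_000, 12))  # 8
print(consumers_needed(30_000, 2_000, 12))  # 12 (capped: add partitions to scale further)
```

The cap is why capacity planning for growth usually means choosing a partition count with room to spare. Raising it later can change which partition each key maps to.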

Data quality and governance

Real-time systems introduce unique data quality challenges that batch systems handle more gracefully. Late-arriving events. Duplicate records. Out-of-order data. All common in distributed environments.

A sensor reading that arrives after its processing window has closed requires decisions about whether to drop it, reprocess, or accept inaccuracy.
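Duplicates, another of the issues listed above, are often handled by making processing idempotent on a unique event ID. This Python sketch assumes every event carries such an ID and keeps only a bounded set of recent IDs. A duplicate arriving after its ID has been evicted would slip through, a tradeoff this sketch accepts deliberately. The `Deduplicator` class is hypothetical.

```python
from collections import OrderedDict

class Deduplicator:
    """Drop events whose ID was seen recently, with memory bounded by `max_ids`."""

    def __init__(self, max_ids: int = 100_000):
        self.max_ids = max_ids
        self.seen = OrderedDict()  # event ID -> None, ordered by recency

    def is_new(self, event_id: str) -> bool:
        if event_id in self.seen:
            self.seen.move_to_end(event_id)  # refresh recency of a repeated ID
            return False
        self.seen[event_id] = None
        if len(self.seen) > self.max_ids:
            self.seen.popitem(last=False)    # evict the least recently seen ID
        return True

dedup = Deduplicator(max_ids=2)
print([dedup.is_new(i) for i in ["a", "b", "a", "c", "a", "b"]])
# [True, True, False, True, False, True]  <- the final "b" was evicted, so it passes again
```

In practice the bound is sized to cover the broker's redelivery window, so that retries are caught while memory stays flat.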

Data governance requirements do not disappear just because data moves quickly. Organizations still need to handle personally identifiable information (PII) appropriately, maintain access controls, and preserve audit trails. The challenge is implementing these controls without adding latency that defeats the purpose of real-time processing.

Effective approaches include schema registries that enforce data contracts, in-stream PII tokenization, role-based access controls at the serving layer, and retention policies that balance compliance with storage costs.
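Two of these controls, contract enforcement and in-stream PII tokenization, can run as a single lightweight step. This Python sketch makes three simplifying assumptions. A plain dictionary stands in for a schema registry. Keyed hashing (HMAC-SHA256) serves as one way to pseudonymize PII; vault-based tokenization is a common alternative. Key storage and rotation are out of scope. `CONTRACT`, `PII_FIELDS`, `validate`, and `tokenize` are hypothetical names.

```python
import hashlib
import hmac

# Hypothetical data contract: required fields and their expected types.
CONTRACT = {"event_id": str, "email": str, "amount": float}
PII_FIELDS = {"email"}

def validate(record: dict) -> list:
    """Return contract violations (an empty list means the record conforms)."""
    errors = []
    for field, expected in CONTRACT.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            errors.append(f"{field}: expected {expected.__name__}")
    return errors

def tokenize(record: dict, key: bytes) -> dict:
    """Replace PII values with a keyed hash so raw values never leave this stage.

    The same input and key always give the same token, so downstream joins
    and distinct counts still work.
    """
    out = dict(record)
    for field in PII_FIELDS:
        if field in out:
            digest = hmac.new(key, str(out[field]).encode("utf-8"), hashlib.sha256)
            out[field] = "tok_" + digest.hexdigest()[:16]
    return out

rec = {"event_id": "e1", "email": "a@example.com", "amount": 12.5}
print(validate(rec))                                     # []
print(validate({"event_id": "e2", "amount": "12"}))      # ['missing field: email', 'amount: expected float']
print(tokenize(rec, b"demo-key")["email"].startswith("tok_"))  # True
```

Both checks are simple per-record operations, which is what keeps governance from eating into the latency budget.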

Cost considerations

Real-time infrastructure typically costs more than batch alternatives. Streaming platforms require always-on compute resources, specialized storage systems, and often premium pricing tiers from cloud providers.

However, the return on investment (ROI) calculation should include the cost of delayed decisions. If a 30-minute delay in fraud detection costs more than the infrastructure needed to prevent it, real-time processing pays for itself.
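The break-even logic is simple arithmetic. In this Python sketch, every figure is made up for illustration: 40 fraud incidents a month, an average loss of $900 per incident with 30-minute detection versus $150 with real-time blocking, and $20,000 a month in extra infrastructure. Substitute your own numbers.

```python
def monthly_net_benefit(incidents_per_month: float, loss_per_incident_delayed: float,
                        loss_per_incident_realtime: float, extra_infra_cost: float) -> float:
    """Avoided losses from faster detection minus the added infrastructure cost."""
    avoided = incidents_per_month * (loss_per_incident_delayed - loss_per_incident_realtime)
    return avoided - extra_infra_cost

def break_even_incidents(extra_infra_cost: float, loss_per_incident_delayed: float,
                         loss_per_incident_realtime: float) -> float:
    """Monthly incident count at which real-time processing exactly pays for itself."""
    return extra_infra_cost / (loss_per_incident_delayed - loss_per_incident_realtime)

print(monthly_net_benefit(40, 900, 150, 20_000))          # 10000
print(f"{break_even_incidents(20_000, 900, 150):.1f}")    # 26.7
```

With these illustrative inputs, real-time processing pays off once monthly incidents exceed about 27. Below that, the extra infrastructure costs more than the losses it avoids.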

How to get started with real-time data

Getting started with real-time data can seem daunting, but many tools are available to help businesses of all sizes. Real-time data can benefit your organization in many ways, and the most important thing is to start. Here are a few recommendations for getting started with real-time data:

Understand your data sources

Understanding what data is most important to your business is a critical first step. Take some time to understand the different software and systems your company uses on a daily basis, and which ones would benefit from having real-time data streams. Common systems can include customer relationship management (CRM) software, email, marketing tools, HR systems, or accounting software.

If your sources are spread across departments, aim for a single source of truth that stays current. That's what helps finance, sales, and operations compare KPIs with confidence instead of arguing about whose spreadsheet export is "the right one."

Evaluate vendors and technology

Choosing a vendor that supports real-time data streams matters. If you don't have dedicated IT or development teams, you can partner with a BI vendor that makes it easy to integrate your source systems. Many newer tools reduce data integration to a few clicks, so you can set up real-time data streams to power your business quickly.

When evaluating vendors, consider connector breadth (does the platform support your existing data sources?), governance controls (can you maintain compliance while moving data in real time?), latency service-level objective (SLO) support (does the vendor guarantee the freshness levels you need?), and ease of use for non-technical users (can business teams access insights without engineering support?).
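One practical way to test a vendor's freshness claims during a proof of concept is to measure lag yourself. For each record, take the time it became queryable minus its event timestamp, then compare a percentile of those lags against your SLO. This Python sketch uses the nearest-rank percentile method. The sample lags are made up, and the function names are hypothetical.

```python
import math

def percentile(values: list, pct: float) -> float:
    """Nearest-rank percentile, with pct in (0, 100]."""
    if not values:
        raise ValueError("no observations")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # integer-friendly form avoids float drift
    return ordered[max(rank, 1) - 1]

def meets_freshness_slo(lags_s: list, target_s: float, pct: float = 95.0) -> bool:
    """True if at least pct% of observed lags are within target_s."""
    return percentile(lags_s, pct) <= target_s

# Made-up freshness lags in seconds (queryable time minus event time).
lags = [0.4, 0.6, 0.5, 0.7, 2.5, 0.8, 0.5, 0.6, 0.9, 0.4]
print(meets_freshness_slo(lags, target_s=1.0, pct=90))  # True
print(meets_freshness_slo(lags, target_s=1.0, pct=95))  # False
```

A single slow record passes a p90 target but fails p95. That is why it matters to ask vendors which percentile their freshness commitment refers to.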

If you're trying to avoid a "point solution pileup," it also helps to evaluate the full loop: ingestion to governed datasets to dashboards to action. Platforms like the Domo Platform are built around that loop, and partner integrations with Snowflake, Databricks, Google BigQuery, and Oracle NetSuite can extend real-time access to cloud and financial data in a single governed environment.

Identify quick wins and build a roadmap

Most organizations have a few places where real-time data can pay off quickly. Start with the one or two data sources where delayed access is causing the most visible business friction, often for teams that are already eager to get the data. Give those teams real-time data first. As they see the benefits, they will become more motivated and share their excitement with other teams, building momentum for the project.

A good "quick win" often looks like this: a leader is about to make a high-stakes call, but the numbers are a week old. Making a $10M decision on stale numbers is a risk you don't have to take. For many finance teams, starting with an ERP data stream (like NetSuite) into a live P&L or cash flow dashboard creates immediate trust and momentum.

Connecting to real-time data is often the easier part; understanding how your organization will use that data takes time. A roadmap that outlines how different teams will start using real-time data helps drive broader adoption and application. A data steering committee can help here by guiding the organization through creating and using its real-time data.

Real-time data use cases and examples

Real-time data is essential in industries and applications where speed and accuracy make all the difference. The following examples illustrate the full loop from data generation to decision to action.

Financial services and fraud detection

Financial institutions rely on real-time data to analyze transactions as they happen. The pattern follows a clear sequence: a card swipe generates a transaction event, the fraud model evaluates it against behavioral patterns and risk signals, a risk score is calculated within milliseconds, and the system either approves the transaction, blocks it, or triggers step-up authentication.

This end-to-end process typically completes within 200 milliseconds. The alternative (processing transactions in batches and reviewing fraud signals hours later) means approving fraudulent transactions that become difficult to recover.
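The approve, block, or step-up sequence can be sketched with a hard deadline on the scoring step. This Python example uses `asyncio.wait_for` to enforce the 200-millisecond budget from the text. Several details are hypothetical: the scoring rule is a stand-in for a real fraud model, the 0.45 and 0.75 thresholds are illustrative, and so is the choice to fall back to step-up authentication when scoring times out. Real systems set that fallback according to their own risk policy.

```python
import asyncio

DEADLINE_S = 0.200  # end-to-end budget from the example above

async def score_transaction(txn: dict) -> float:
    """Stand-in for a real fraud model; returns a risk score in [0, 1] (hypothetical rule)."""
    await asyncio.sleep(txn.get("simulated_latency_s", 0.01))  # simulated model/feature lookup time
    score = 0.1
    if txn["amount"] > 1_000:
        score += 0.4
    if txn["new_device"]:
        score += 0.4
    return score

async def decide(txn: dict) -> str:
    try:
        score = await asyncio.wait_for(score_transaction(txn), timeout=DEADLINE_S)
    except asyncio.TimeoutError:
        return "step_up"  # assumption: ask for extra verification rather than approve blindly
    if score >= 0.75:
        return "block"
    if score >= 0.45:
        return "step_up"
    return "approve"

async def main() -> list:
    txns = [
        {"amount": 25, "new_device": False},
        {"amount": 5_000, "new_device": False},
        {"amount": 5_000, "new_device": True},
        {"amount": 25, "new_device": False, "simulated_latency_s": 0.5},  # model too slow
    ]
    return [await decide(t) for t in txns]

print(asyncio.run(main()))  # ['approve', 'step_up', 'block', 'step_up']
```

The deadline is the important design choice. Without it, a slow model would silently stretch the response past the point where the transaction can still be stopped.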

Operations and supply chain

A vibration sensor detects an anomaly in a motor. The stream processor flags the reading as outside normal parameters. A maintenance alert is generated with severity and location details. A technician is dispatched before the equipment fails.
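The sensor-to-alert step can be sketched with a rolling z-score, one simple anomaly-detection technique chosen here for illustration. Production predictive-maintenance systems often use more sophisticated models. This Python example assumes a few things. A reading is anomalous when it falls more than `z_limit` standard deviations from the mean of recent readings. Severity doubles to "critical" beyond twice that limit. Readings are in mm/s. The class, field names, and motor and location IDs are hypothetical.

```python
from collections import deque
from statistics import mean, stdev
from typing import Optional

class VibrationMonitor:
    """Flag readings that deviate sharply from the recent baseline (rolling z-score)."""

    def __init__(self, motor_id: str, location: str, window: int = 50, z_limit: float = 3.0):
        self.motor_id = motor_id
        self.location = location
        self.readings = deque(maxlen=window)
        self.z_limit = z_limit

    def check(self, value_mm_s: float) -> Optional[dict]:
        alert = None
        if len(self.readings) >= 10:  # need a baseline before judging anything
            data = list(self.readings)
            mu, sigma = mean(data), stdev(data)
            if sigma > 0:
                z = abs(value_mm_s - mu) / sigma
                if z > self.z_limit:
                    alert = {
                        "motor_id": self.motor_id,
                        "location": self.location,
                        "value_mm_s": value_mm_s,
                        "severity": "critical" if z > 2 * self.z_limit else "warning",
                    }
        # Every reading joins the baseline, so a lasting level shift eventually stops alerting.
        self.readings.append(value_mm_s)
        return alert

monitor = VibrationMonitor("motor-7", "line-2")
for i in range(10):
    monitor.check(2.0 if i % 2 == 0 else 2.2)  # build a normal baseline
print(monitor.check(2.3))                       # None: within normal variation
print(monitor.check(4.0)["severity"])           # critical
```

The returned dictionary carries the severity and location details that a dispatch system would need to route a technician.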

This proactive approach reduces unplanned downtime and extends equipment life. Organizations implementing predictive maintenance often report 25 to 30 percent reductions in maintenance costs and significant improvements in equipment availability. You'll notice these savings compound over time as teams shift from reactive repairs to scheduled interventions based on actual equipment condition.

Additional use cases

Real-time data drives value across many other applications:

- Stock trading: Constantly updated market prices allow investors to make quick, informed decisions. The ability to act in real time can mean the difference between a profit and a loss in the fast-paced world of trading.

- Navigation systems: Live traffic updates ensure directions are always optimized. Navigation apps dynamically adjust routes to avoid delays, helping drivers save time and reduce stress.

- Weather monitoring: Real-time data provides highly accurate, up-to-the-minute information about changing weather conditions. This is crucial for industries like aviation, agriculture, and disaster management where precision is key.

- Customer experience: Digital platforms use real-time insights to instantly respond to user behavior. This creates highly personalized experiences, such as tailored product recommendations or targeted offers that enhance customer satisfaction.

- Healthcare: Continuous monitoring of patient vitals allows for rapid intervention in critical situations.

- E-commerce: Tracking inventory and order fulfillment to improve delivery speed and customer satisfaction.

- Executive KPI visibility: Interactive dashboards help leaders spot risks early, stay accountable to stakeholders, and keep teams aligned on what's happening right now.