What Are ETL Tools? How They Work, Benefits, and Future Trends

Application sprawl has become a serious challenge for companies in 2026. According to a recent report from MuleSoft, the average organization manages more than 950 applications.

If that number alone doesn’t make your head spin, imagine being asked to work with nearly 1,000 potential streams of data. And only about a quarter of these applications are integrated or connected, so at best you’re still operating with rampant data silos across your business.

You may not be able to stop the sprawl, but you don’t have to surrender to it either. What you need are tools to consolidate, clean, and organize that data from whichever application it’s housed in, so that you can actually start to make meaning from the mass quantity of information you’re collecting. And one of the most useful weapons in your arsenal may be ETL tools.

How can they help? ETL tools handle three essential tasks: pulling data from multiple sources, transforming it into a standardized format, and loading it into a destination like a data warehouse or analytics platform. So if you’re struggling to keep your data sources straight, let’s explore how different ETL tools on the market could streamline your organization’s data management.

Key takeaways

Here are the big ideas to keep in mind as you evaluate ETL tools.

- ETL tools are software platforms that automate the extraction of data from multiple sources, transform it into a standardized format, and load it into a destination like a data warehouse, analytics platform, or operational system.

- Four main types exist: legacy, open-source, cloud-based, and real-time ETL tools, each suited to different organizational needs and technical maturity levels.

- Key benefits include automation of manual data work, unified historical context for decision-making, metadata collection for governance (lineage and audit logs), and self-service capabilities for people who don't write code.

- Choosing the right ETL tool depends on connector coverage (and how many sources you need to support), transformation flexibility (no-code plus SQL/Python/R), governance needs, and whether you need batch or real-time processing.

- AI and machine learning are changing ETL by automating data validation, suggesting transformations, and enabling ML-based enrichment (like classification or forecasting) directly inside data pipelines.

What are ETL tools?

ETL stands for Extract, Transform, Load. ETL tools are software platforms that automate the movement and preparation of data across your organization. They extract data from different sources, transform it by cleaning and standardizing it into a more usable format, and then load the data into a target destination. This destination could be a solution like Domo, a data warehouse, data lake, or database.

So what actually qualifies something as an ETL tool? Not just a script. Not a manual process cobbled together over time. The distinction matters because ETL tools provide capabilities that manual approaches simply cannot deliver at scale.

A product qualifies as an ETL tool when it includes these core capabilities:

- A connector library for extracting data from multiple source systems

- A transformation engine for cleaning, standardizing, and reshaping data

- Scheduling and orchestration to automate pipeline runs

- Monitoring and alerting to catch failures before they impact downstream reports

- Data lineage tracking to understand where data came from and how it changed

- Security controls including access management and encryption

Raw data becomes actionable information. That is the point.

Why ETL tools matter for modern data teams

Business intelligence is impossible without data integration. And data integration? Nearly impossible without ETL tools. First designed in the late 1980s and early 1990s to work with on-premise data storage infrastructure, these tools remain essential in the age of cloud storage and cloud technologies.

The cost of fragmented, manual ETL processes is real. Dashboards go stale. Compliance gaps emerge. Data engineers spend more time maintaining scripts than building new capabilities. When pipelines are hand-coded and scattered across teams, you get tool sprawl: multiple teams building redundant pipelines, inconsistent transformation logic, and no single source of truth for how data moves through the organization. I've seen organizations burn entire quarters trying to untangle this mess.

Modern ETL tools address these challenges by centralizing pipeline management, standardizing transformation logic, and providing visibility into data flows.

Different roles feel the pain in different ways:

- Data engineers want fewer brittle scripts and more time for data architecture and reliability.

- Analytics engineers want a "build once, reuse everywhere" approach so clean datasets do not turn into one-off projects.

- Business analysts want analysis-ready data without waiting on IT for every change.

- IT and data leaders want governance, security, and compliance confidence without managing a maze of point tools.

- Architectural engineers want hybrid connectivity that fits the existing stack, not a forced rebuild.

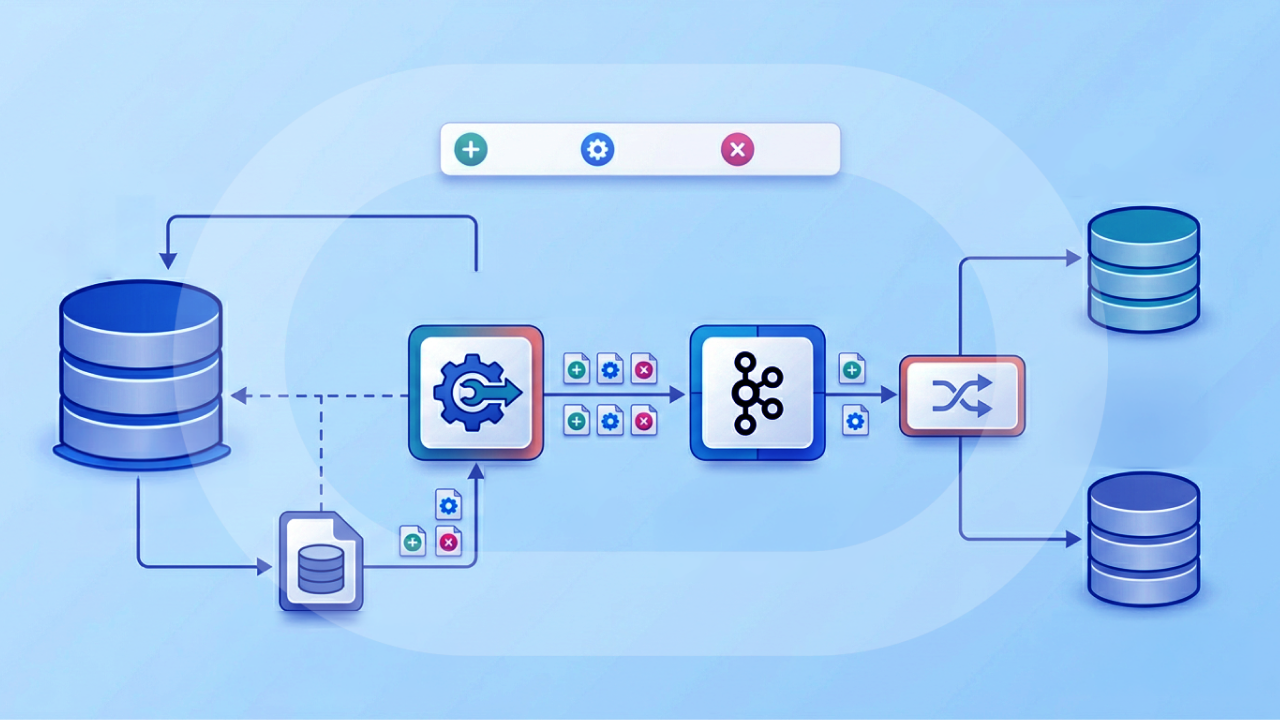

How do ETL tools work?

ETL tools follow a three-step process. It's all in the name: extract, transform, load.
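As a rough illustration of that three-step shape, here is a minimal Python sketch. The `run_pipeline` function, the in-memory `source` list, and the `warehouse` list are hypothetical stand-ins, not part of any particular ETL product.

```python
from typing import Callable, Iterable

Row = dict  # one record, keyed by column name


def run_pipeline(
    extract: Callable[[], Iterable[Row]],
    transform: Callable[[Row], Row],
    load: Callable[[list], None],
) -> int:
    """Run extract -> transform -> load once and return the number of rows loaded."""
    raw_rows = extract()                                # 1. pull rows from the source
    clean_rows = [transform(row) for row in raw_rows]   # 2. standardize each row
    load(clean_rows)                                    # 3. write to the destination
    return len(clean_rows)


# Hypothetical in-memory source and destination, for illustration only.
source = [{"email": " Jane@Example.com "}, {"email": "sam@example.com"}]
warehouse: list = []

loaded = run_pipeline(
    extract=lambda: source,
    transform=lambda row: {"email": row["email"].strip().lower()},
    load=warehouse.extend,
)
```

A real tool wraps each of these three calls in connectors, scheduling, and monitoring, which is what the sections below describe.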

Extraction

Finding a modern organization that only uses one source of data? Good luck. Many organizations use multiple data analysis tools too. The first step in the process is extracting data from its source.

Common extraction sources include:

- Customer relationship management (CRM) platforms like Salesforce and HubSpot

- Marketing automation tools like Marketo and Mailchimp

- Support ticketing systems like Zendesk and Intercom

- Billing and payment platforms like Stripe and NetSuite

- Product analytics tools like Amplitude and Mixpanel

- Data warehouses and databases

- Cloud and on-premise data storage

- Mobile apps and Internet of Things (IoT) devices

ETL tools gather raw data (structured and unstructured) into a single location and consolidate it. Most modern tools connect to sources through application programming interfaces (APIs), pulling new or updated records on a scheduled basis or in real time.
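Pulling only new or updated records is often done with a "watermark": the pipeline remembers the latest modification time it has seen and asks only for rows changed after it. The sketch below assumes each record carries an `updated_at` field; that field name and the `extract_changed` helper are illustrative, not a specific connector's API.

```python
from datetime import datetime, timezone


def extract_changed(records, last_sync):
    """Return rows modified after last_sync, plus the watermark for the next run."""
    changed = [r for r in records if r["updated_at"] > last_sync]
    # If nothing changed, keep the old watermark so no time window is skipped.
    next_sync = max((r["updated_at"] for r in changed), default=last_sync)
    return changed, next_sync


# Hypothetical source rows; a real connector would page through an API instead.
records = [
    {"id": 1, "updated_at": datetime(2026, 1, 1, tzinfo=timezone.utc)},
    {"id": 2, "updated_at": datetime(2026, 1, 3, tzinfo=timezone.utc)},
]
changed, next_sync = extract_changed(
    records, datetime(2026, 1, 2, tzinfo=timezone.utc)
)
```

Storing `next_sync` after each successful run is what lets the next scheduled run pick up where this one left off.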

If you're dealing with dozens of systems, or hundreds, connector coverage stops being a nice-to-have. It becomes the whole game. Some platforms, like Domo Integration, include 1,000+ prebuilt connectors across cloud apps, databases, flat files, and on-premises systems, which removes a lot of custom ingestion work.

Transformation

The second step applies the organization's business rules and data standards to the extracted data so that it meets quality requirements and is easy to query.

Common transformation patterns include:

- Deduplication: Merging duplicate records based on key fields like email or customer ID

- Type casting: Converting data types such as strings to dates or integers to decimals

- Null handling: Replacing missing values, flagging incomplete records, or dropping unusable rows

- Referential integrity checks: Validating that foreign keys match existing records

- Personally identifiable information (PII) masking: Tokenizing or hashing sensitive fields like social security numbers or email addresses

- Schema standardization: Renaming columns and aligning formats across sources

This step is arguably the most important part of the ETL process because it improves the quality and integrity of the data an organization collects. And honestly, this is where things go sideways for a lot of teams: applying transformations inconsistently across sources, which creates downstream reconciliation headaches when the same field means different things depending on where it originated.
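To make a few of these patterns concrete, here is a small Python sketch that combines deduplication, type casting, null handling, and PII masking in one pass. The column names (`email`, `signup_date`, `plan`) and the `clean_rows` helper are assumptions made for the example. The unsalted SHA-256 hash is only a placeholder for masking; a production pipeline would more likely use a keyed hash or a tokenization service.

```python
import hashlib
from datetime import date


def clean_rows(rows):
    """Deduplicate by email, cast dates, fill missing plans, and mask the email."""
    seen = set()
    cleaned = []
    for row in rows:
        email = (row.get("email") or "").strip().lower()
        # Null handling: drop rows with no usable key. Dedup: skip repeated emails.
        if not email or email in seen:
            continue
        seen.add(email)
        cleaned.append({
            # PII masking: store a hash instead of the raw address.
            "email_hash": hashlib.sha256(email.encode("utf-8")).hexdigest(),
            # Type casting: ISO date string -> date object.
            "signup_date": date.fromisoformat(row["signup_date"]),
            # Null handling: replace a missing plan with a default label.
            "plan": row.get("plan") or "unknown",
        })
    return cleaned


rows = [
    {"email": "Jane@Example.com ", "signup_date": "2024-01-15", "plan": "pro"},
    {"email": "jane@example.com", "signup_date": "2024-02-01", "plan": "pro"},
    {"email": None, "signup_date": "2024-03-01", "plan": "free"},
    {"email": "sam@example.com", "signup_date": "2024-04-10", "plan": None},
]
cleaned = clean_rows(rows)
```

Keeping rules like these in one shared function, rather than re-implementing them per source, is one way to avoid the inconsistency problem described above.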

Modern transformation layers also tend to split into two lanes (and you may want both):

- No-code transformations: Visual, drag-and-drop workflows that help teams move quickly.

- Code-based transformations: SQL and scripting (often Python or R) for advanced logic.

Tools like Domo's Magic Transform support both approaches in the same environment. That matters when a business analyst needs to prep a dashboard dataset without writing SQL, and a data engineer needs to add custom logic right next to it.

Loading

Transformed data gets loaded into a new destination. This could be a solution like Domo or a standard data warehouse.

Depending on the ETL tool and use case, data may be loaded in different ways:

- Full loads replace the entire target table with fresh data, useful for smaller datasets or complete refreshes

- Incremental loads add only new or changed records, reducing processing time and warehouse costs
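The difference between the two load styles can be sketched with Python's built-in `sqlite3` module standing in for a warehouse. The `customers` table and both helper functions are hypothetical, and the upsert syntax shown is SQLite's (version 3.24 or later); other warehouses typically express the same idea with a `MERGE` statement.

```python
import sqlite3


def full_load(conn, rows):
    """Replace the whole target table with a fresh copy of the data."""
    with conn:  # commit on success, roll back on error
        conn.execute("DELETE FROM customers")
        conn.executemany("INSERT INTO customers (id, status) VALUES (?, ?)", rows)


def incremental_load(conn, rows):
    """Insert new rows and update existing ones, leaving the rest untouched."""
    with conn:
        conn.executemany(
            "INSERT INTO customers (id, status) VALUES (?, ?) "
            "ON CONFLICT(id) DO UPDATE SET status = excluded.status",
            rows,
        )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, status TEXT)")

full_load(conn, [(1, "active"), (2, "active")])
incremental_load(conn, [(2, "canceled"), (3, "active")])  # one change, one new row
result = conn.execute("SELECT id, status FROM customers ORDER BY id").fetchall()
```

After both calls, row 1 is untouched, row 2 has been updated, and row 3 has been added, without rewriting the full table.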

Schema drift is another consideration during loading. When source systems add new columns or change data types, ETL tools need to detect and handle these changes gracefully rather than failing silently or corrupting downstream tables.
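One simple way to detect drift is to compare the schema a pipeline expects with the schema it actually observes before loading. The sketch below assumes schemas are available as plain column-to-type dictionaries; the `detect_schema_drift` helper and the type labels are illustrative.

```python
def detect_schema_drift(expected, observed):
    """Compare two {column: type} mappings and report what changed."""
    expected_cols, observed_cols = set(expected), set(observed)
    return {
        "added": sorted(observed_cols - expected_cols),
        "removed": sorted(expected_cols - observed_cols),
        "retyped": sorted(
            col for col in expected_cols & observed_cols
            if expected[col] != observed[col]
        ),
    }


expected = {"id": "integer", "email": "text", "amount": "integer"}
observed = {"id": "integer", "email": "text", "amount": "decimal", "region": "text"}
drift = detect_schema_drift(expected, observed)
```

A pipeline could then decide per category what to do: for instance, add new columns automatically but pause and alert when a type changes.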

Loading is not always one-way, either. Some ETL platforms can write transformed data back into operational systems (think CRM or support tools), turning pipelines into workflow drivers.

ETL pipeline example: from source to destination

Seeing ETL in action makes the process concrete. Consider a common scenario: combining Salesforce CRM data with Stripe billing data in Snowflake to analyze customer churn.

The extraction phase pulls customer records from Salesforce (name, email, account status, last activity date) and subscription data from Stripe (customer ID, plan type, payment status, subscription start date). Both sources update incrementally, so the ETL tool only pulls records changed since the last sync.

During transformation, the pipeline applies several rules:

- Deduplicate customers by matching on email address

- Cast Stripe's Unix timestamp to a standard date format

- Handle null values in last activity date by defaulting to account creation date

- Standardize account status values (Salesforce uses "Active/Inactive" while Stripe uses "active/canceled")

After these rules run, each customer is represented by a single standardized record that combines CRM and billing fields.

The loading phase upserts this combined record into Snowflake's customers table, using email as the merge key. If a customer's record (say, Jane's) already exists, it updates; if not, it inserts. This idempotent approach prevents duplicate rows even if the pipeline runs multiple times.
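The scenario can be sketched in a few lines of Python. Everything in the example is made up for illustration: the record for Jane, the field names, the `created_date` fallback column, and the `combine` and `upsert` helpers. A dictionary keyed by email stands in for the Snowflake table.

```python
from datetime import datetime, timezone

# Map each source's status vocabulary onto one shared set of values.
STATUS_MAP = {"Active": "active", "Inactive": "inactive",
              "active": "active", "canceled": "canceled"}


def combine(crm_row, billing_row):
    """Merge one CRM row and one billing row into a standardized customer record."""
    started = datetime.fromtimestamp(billing_row["subscription_start"], tz=timezone.utc)
    return {
        "email": crm_row["email"].strip().lower(),
        "name": crm_row["name"],
        "account_status": STATUS_MAP[crm_row["account_status"]],
        "payment_status": STATUS_MAP[billing_row["payment_status"]],
        "plan_type": billing_row["plan_type"],
        # Type casting: Unix timestamp -> ISO date string.
        "subscription_start": started.date().isoformat(),
        # Null handling: fall back to the creation date when activity is missing.
        "last_activity_date": crm_row["last_activity_date"] or crm_row["created_date"],
    }


def upsert(table, record):
    """Insert or overwrite by email, so reruns never create duplicate rows."""
    table[record["email"]] = record


crm_row = {"name": "Jane Doe", "email": "Jane@Example.com", "account_status": "Active",
           "last_activity_date": None, "created_date": "2023-11-05"}
billing_row = {"customer_id": "cus_hypothetical", "plan_type": "pro",
               "payment_status": "active", "subscription_start": 1704067200}

customers = {}
for _ in range(2):  # running the pipeline twice still yields one row
    upsert(customers, combine(crm_row, billing_row))
```

This covers only the happy path; it has none of the error handling, retries, or monitoring a production pipeline needs.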

Tools like Domo automate this entire flow with visual drag-and-drop interfaces. Manual scripts would require hundreds of lines of Python plus custom error handling, retry logic, and monitoring.

How ETL tools work in modern platforms

ETL looks simple on a whiteboard. In production, the "simple" part usually ends around week two.

Most teams don't just need a pipeline. They need a system for running pipelines repeatedly, safely, and at scale. That typically includes:

- Centralized workflows: Visual DataFlows you can standardize and reuse across teams, instead of re-implementing the same logic in five different places.

- Scheduling and failure alerts: Automated runs plus notifications when a job fails, runs late, or produces unexpected output.

- Performance at scale: Execution engines designed to process large datasets efficiently (for example, Magic Transform runs on Domo's Adrenaline engine).

- Governed transformation: Consistent definitions and transformation history so people stop arguing about what "active customer" means.

- Validation and enrichment: Automated checks that help ensure the data is ready for analytics and AI, not just technically "loaded."
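The scheduling-and-alerting item above usually implies some retry logic around each job. Here is a minimal sketch, assuming a job is any Python callable and an alert is any function that accepts a message; `run_with_retries` and its parameters are hypothetical names, not a specific platform's API.

```python
import time


def run_with_retries(job, max_attempts=3, base_delay=1.0, alert=print):
    """Run job(), retrying with exponential backoff; alert and re-raise on final failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return job()
        except Exception as exc:
            if attempt == max_attempts:
                alert(f"job failed after {attempt} attempts: {exc}")
                raise
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            time.sleep(base_delay * 2 ** (attempt - 1))


# Hypothetical flaky job that succeeds on its third attempt.
calls = {"count": 0}


def flaky_job():
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("temporary outage")
    return "loaded"


alerts = []
outcome = run_with_retries(flaky_job, max_attempts=3, base_delay=0.0, alert=alerts.append)
```

In practice the `alert` callable would post to email, chat, or a paging system rather than append to a list.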

ETL vs ELT: understanding the difference

Extract, load, transform (ELT) tools have the same purpose as ETL tools, but the process order differs. ELT loads data into the central repository immediately after extraction, then transforms it using the destination's compute power. This approach has become popular with the rise of cloud data warehouses like Snowflake and BigQuery, which can handle transformation workloads efficiently.
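The ELT ordering can be illustrated with Python's built-in `sqlite3` module standing in for a cloud warehouse. The raw data lands untouched first, and the cleanup then runs as SQL inside the destination. The table and column names are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Load: land the raw data as-is, including messy types and inconsistent casing.
conn.execute("CREATE TABLE raw_orders (id INTEGER, amount_cents TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, "1250", "PAID"), (2, "900", "paid"), (3, "400", "refunded")],
)

# Transform: use the destination's own SQL engine to build the clean table.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT id,
           CAST(amount_cents AS INTEGER) / 100.0 AS amount,
           LOWER(status) AS status
    FROM raw_orders
    """
)

paid_total = conn.execute(
    "SELECT SUM(amount) FROM orders WHERE status = 'paid'"
).fetchone()[0]
```

In an ETL flow, the casting and lowercasing would happen before the insert, and only the clean `orders` table would ever reach the destination.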

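The choice between ETL and ELT depends on your specific constraints, such as how much transformation compute your destination can take on, your compliance requirements, and your team's capabilities.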

Beyond ETL and ELT, several related patterns address different needs:

- Reverse ETL moves transformed data from your warehouse back into operational systems like CRMs, marketing platforms, or support tools

- Change Data Capture (CDC) tracks incremental changes at the database level, enabling near-real-time replication

- iPaaS (Integration Platform as a Service) focuses on application-to-application integration rather than analytics-focused data movement
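Of these patterns, CDC is the easiest to show in code. The sketch below assumes change events arrive as dictionaries with an `op`, a `key`, and (for inserts and updates) a `row`; that event shape and the `apply_changes` helper are simplifications, since real CDC tools read the database's transaction log and use their own formats.

```python
def apply_changes(replica, events):
    """Apply an ordered stream of change events to an in-memory replica."""
    for event in events:
        if event["op"] in ("insert", "update"):
            replica[event["key"]] = event["row"]
        elif event["op"] == "delete":
            replica.pop(event["key"], None)  # ignore deletes for unknown keys
    return replica


replica = {1: {"status": "trial"}}
events = [
    {"op": "update", "key": 1, "row": {"status": "active"}},
    {"op": "insert", "key": 2, "row": {"status": "trial"}},
    {"op": "delete", "key": 1},
]
apply_changes(replica, events)
```

Because only the changes travel, the replica stays close to the source without repeated full extracts.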

Some organizations need multiple patterns. A company might use ELT to load raw data into Snowflake, transform it with dbt, then use reverse ETL to push customer scores back into Salesforce for the sales team. The mistake I see most often? Choosing a pattern based on what's trendy rather than what fits your compliance requirements and team capabilities.

Some platforms also support data federation, which lets you query data in a warehouse or data lake without physically moving it.

Benefits of ETL tools

Historical context and unified data views

With ETL tools, organizations can bring together legacy data and new data coming in from many sources. The result is deep historical context for the numbers in front of you: a long-term view of your data that supports better-informed decisions.

Bringing data sets together and standardizing the format provides a single view of data that eliminates delays and inefficiencies. With one point of view, it's easier to visualize data sets and analyze results.

Automation and accuracy

Without ETL tools, the alternative is hand-coded data migration. Time intensive. Open to human error. ETL automation frees teams to devote their time to analysis and innovation instead. Automation means greater accuracy and better compliance with data regulations and standards.

For data engineers and analytics engineers, automation also means fewer late-night "why did this break?" fire drills. Central scheduling, retries, and failure alerts shift pipeline work from constant babysitting to predictable operations.

Metadata collection and governance

Knowing where a data set comes from can provide valuable insights as well. When an ETL tool extracts data, it also collects metadata that supports business intelligence tasks like business process modeling.

Modern ETL tools capture several types of metadata that matter for governance:

- Data lineage: Where data originated and every transformation it passed through

- Audit logs: Who accessed or modified data, and when

- Transformation history: What rules were applied and what values changed

This metadata is stored in its own repository so it can easily be queried, manipulated, and retrieved. For IT leaders managing pipelines across the organization, this centralized oversight provides compliance confidence and reduces the risk of data governance gaps.
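As a simplified picture of what such a metadata repository holds, the sketch below records one lineage entry per transformation step. The `LineageLog` class, its fields, and the `drop_duplicates` step are hypothetical; commercial tools capture far more detail, such as column-level lineage and user identity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageLog:
    """Minimal metadata store: one entry per transformation step that ran."""
    entries: list = field(default_factory=list)

    def run_step(self, name, source, func, rows):
        """Run func(rows) and record where the data came from and how it changed."""
        result = func(rows)
        self.entries.append({
            "step": name,
            "source": source,
            "rows_in": len(rows),
            "rows_out": len(result),
            "ran_at": datetime.now(timezone.utc).isoformat(),
        })
        return result


def drop_duplicates(rows):
    """Keep the first occurrence of each row, preserving order."""
    return list(dict.fromkeys(rows))


log = LineageLog()
emails = ["a@example.com", "b@example.com", "a@example.com"]
unique_emails = log.run_step("deduplicate", "crm_export", drop_duplicates, emails)
```

Because every step writes an entry, the log can later answer questions like "which steps touched data from `crm_export`, and how many rows did each one drop?"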

Self-service capabilities

Beyond breaking down silos, ETL tools give self-service capabilities to team members who typically would not have the background to dive into data analytics.

For business analysts, self-service ETL means getting analysis-ready data without filing a ticket or waiting on the data engineering team. Drag-and-drop interfaces replace custom coding for faster, more scalable solutions, which increases your return on investment (ROI). Building your own data prep workflow without writing SQL removes the dependency that traditionally bottlenecked analytics projects.

Types of ETL tools

There are many different ETL tools on the market today. Some tools may work better for one organization than another. An ETL tool's effectiveness depends on a variety of factors including data governance practices and an organization's current data technology solutions. In general, most modern ETL tools fall into these broad categories:

Legacy ETL tools

These ETL tools are the most traditional in their approach. They provide the essential function of ETL for data integration but tend to be more difficult to scale, slower to deploy, and more code intensive with less automation than other tools on the market. Examples include Informatica PowerCenter and IBM DataStage, which remain common in large enterprises with established on-premise infrastructure, but they often require heavier setup and maintenance than Domo.

Open-source ETL tools

While legacy ETL tools typically work exclusively with structured data, open-source ETL tools can process data in a wider variety of structures and formats. They're also more flexible, scalable, and quicker to deploy. Popular options include Apache Airflow for orchestration and Airbyte for connector-based extraction, though these often require engineering resources to configure and maintain, which can make Domo a simpler fit for teams that want self-service ETL in the same platform.

Cloud-based ETL tools

Cloud-based ETL tools are the most agile option on the market. The nature of the cloud means data is more readily available and that tools can scale with increased flexibility and speed. As more data sources move to the cloud or become a hybrid of on-premise and cloud data, cloud-based ETL tools are becoming essential for data integration. Examples include AWS Glue, Azure Data Factory, and Domo, though the first two often work best for teams already committed to one cloud ecosystem while Domo supports broader end-to-end analytics.

For architectural engineers working in hybrid environments, cloud-based tools often win when they can connect to both legacy and cloud-native systems without forcing an overhaul of your current stack.

Real-time ETL tools

Even if an ETL tool is cloud-based, it may still be processing data in batches. Real-time ETL tools capture data constantly, delivering results and reports in real time. With real-time ETL tools, organizations can query streaming data sources like social media searches or Internet of Things (IoT) sensors and provide immediate responses. Striim and AWS Glue Streaming are examples of tools built for these use cases, though teams that also want built-in BI and governed self-service may prefer Domo.

Top ETL tools to consider in 2026

The ETL tool landscape includes options for every team size and technical maturity level. Rather than ranking tools, it is more useful to understand which category fits your needs.

When evaluating tools, consider whether your team is analyst-led (prioritize no-code interfaces and pre-built connectors) or engineering-led (prioritize API access, custom transformations, and extensibility).

How to choose the right ETL tool

Selecting an ETL tool requires matching capabilities to your organization's specific needs. The right criteria depend on who owns your data pipelines: an analyst-led team with limited engineering resources needs different features than an engineering-led team building complex, custom pipelines.

Consider these evaluation criteria:

- Connector coverage: Does the tool support your current sources? Check for the specific CRM, marketing, finance, and product systems you use today, plus room for future additions.

- Transformation style: Do you need no-code visual transformations, SQL-based logic, or Python/R scripting? Match this to your team's skills and the complexity of your transformation requirements.

- Orchestration and scheduling: Can you schedule pipelines, set dependencies between jobs, and trigger runs based on events? This matters more as your pipeline count grows.

- Observability and alerting: How does the tool surface failures? Look for row count monitoring, latency alerts, and integration with your existing notification systems.

- Data lineage: Can you trace data from source to destination and understand what downstream dashboards are affected by changes?

- Deployment model: Do you need cloud-native, on-premise, or hybrid deployment? Regulated industries often have specific requirements here.

- Cost model: Understand whether pricing is consumption-based (rows, credits, compute hours), seat-based, or flat-fee. Model your expected data volumes to avoid surprises.

A helpful gut-check question: can one tool satisfy both the "I want drag-and-drop" crowd and the "I need SQL/Python/R" crowd? Tools that support both (in one governed environment) reduce handoffs, duplicate logic, and the classic "who owns this pipeline?" debate.

For analyst-led teams, prioritize ease of use, pre-built connectors, and visual interfaces. Engineering-led teams should prioritize API access, custom transformation support, and integration with existing orchestration tools.

How different industries use ETL tools

Across industries, ETL tools can help manage data and offer more complete views of customers, transactions, and key performance indicators (KPIs).

Retailers can combine customer information like name, location, and purchase history with transactional data like sales. Healthcare providers can bring together patient information, healthcare history, and ongoing insurance claims.

ETL tools also consolidate data from different organizations, as in the case of a business merger or data sharing between an organization and its partners or vendors.

Cross-team reporting is one of the most common use cases. Organizations pull data from CRM systems, marketing automation platforms, finance tools, and support ticketing systems into a single warehouse. From there, teams can calculate unified metrics like customer acquisition cost, churn rate, and revenue by region without reconciling conflicting numbers from different source systems.

Other common use cases for ETL include migrating current data stores to the cloud, incorporating machine learning and AI into an organization's data strategy, and collecting customer data from multiple platforms to offer better personalization and deliver improved experiences.

In many cases, the end goal is not just a dashboard. It is action. That's why bidirectional flows (where transformed data can be written back into systems like a CRM) matter for teams that want ETL outputs to trigger operational workflows.

The future of ETL: AI and automation

ETL tools will continue to be essential as the volume of data organizations collect keeps growing. IoT devices will add to that volume with continuous streams of data.

Organizations will rely more heavily on ETL tools as they prepare to deploy machine learning and AI processes that require extensive data stores.

AI is already changing how ETL tools work. Modern platforms include capabilities that would have required custom development just a few years ago:

- Built-in classification and forecasting actions that apply ML models directly within ETL flows

- Automated data validation that checks pipeline output for anomalies before it reaches downstream systems

- AI-suggested transformations based on data patterns and historical pipeline behavior

- Integration points for externally hosted ML models, allowing predictions to be generated as part of the transformation step
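Automated validation does not have to start with a trained model. A plain statistical check on row counts already catches many broken runs, and the sketch below shows that simpler stand-in rather than the ML-based checks a commercial platform might ship. The `is_row_count_anomalous` helper and the threshold of three standard deviations are assumptions chosen for the example.

```python
from statistics import mean, stdev


def is_row_count_anomalous(history, latest, threshold=3.0):
    """Flag the latest run if its row count is far from the historical average."""
    if len(history) < 2:
        return False  # not enough history to judge
    average = mean(history)
    spread = stdev(history)
    if spread == 0:
        return latest != average
    # Distance from the average, measured in standard deviations.
    return abs(latest - average) / spread > threshold


history = [1000, 1020, 980, 1010, 990]  # row counts from previous runs
normal_run = is_row_count_anomalous(history, 1005)
broken_run = is_row_count_anomalous(history, 400)
```

A pipeline could run a check like this after the transform step and hold back the load, or raise an alert, when it returns `True`.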

The shift toward no-code ETL represents more than a feature trend. It's a structural change in how organizations think about data infrastructure. When people in the business can build and run their own ETL workflows without engineering support, data access becomes democratized. Bottlenecks shrink. Time to insight accelerates. Data engineers get to focus on more complex challenges.

Domo's Magic Transform is one example. Traditional ETL processes require IT professionals to manage the technical aspects of data integration and analytics. Magic Transform uses visual DataFlows plus optional SQL, Python, and R scripting, so teams can keep one workflow system even when transformation logic gets complicated. Automatic scheduling and failure alerts also help prevent the classic "everything looked fine until the 9:00 am dashboard" surprise.