AI Data Pipelines: What They Are and How to Build One

With generative AI spitting out everything from the perfect recipe for carrot cake to emotionally supportive pick-me-ups for when you’re feeling like the sound of a phone ringing is about to make you snap, it’s no wonder so many people treat AI like it’s magic. But there are no fairies, genies, or wizards working behind the scenes. All of the capabilities that people see and don’t see are the result of sophisticated algorithms trained by data. And in order to get outputs that feel like you're talking directly to Ina Garten or Oprah Winfrey, those algorithms need a lot of data.

Unfortunately, when it comes to powering their AI innovation, a lot of large businesses can miss the mark. Even with big annual data budgets, problems with their infrastructure can put significant amounts of potential business value at risk every year. And one of the main culprits behind this bad math is underperforming, fragile, and broken data pipelines.

How can they make the math work a little more in their favor? Well, oddly enough, to power better AI, you might need to rely on AI—specifically AI data pipelines.

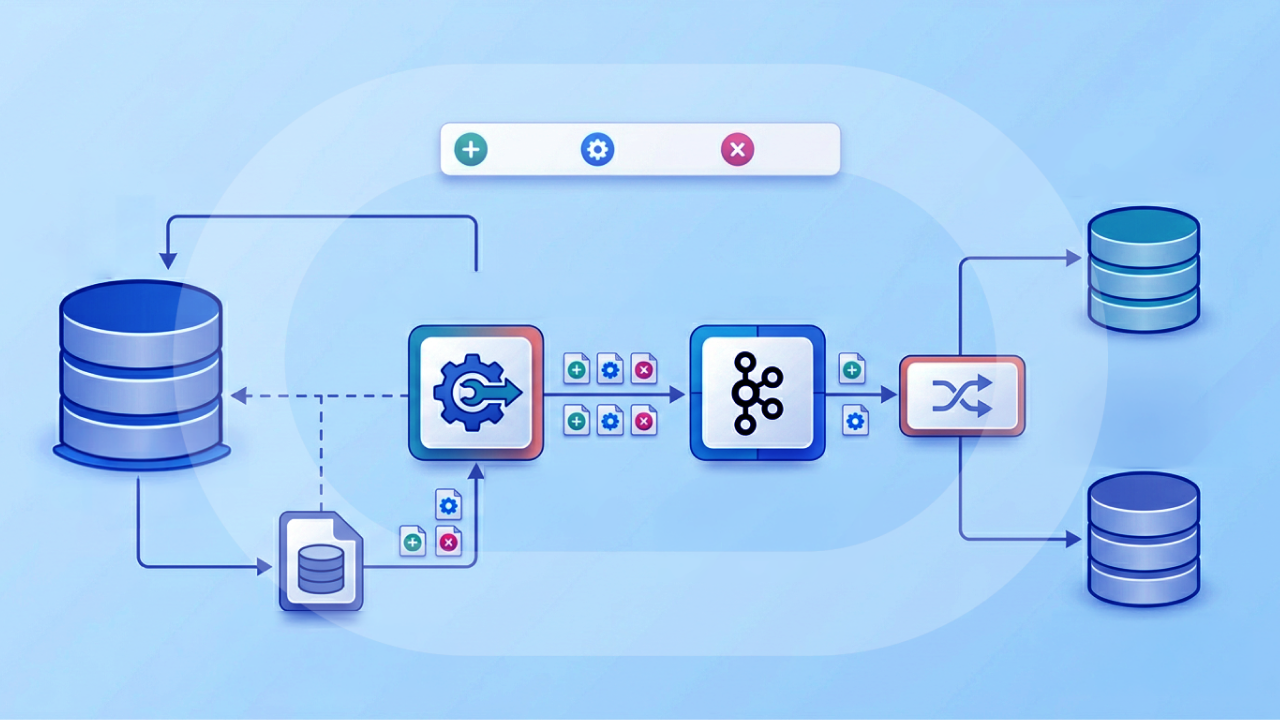

AI data pipelines automate the journey from raw data to trained models, handling ingestion, transformation, feature engineering, and monitoring in ways traditional extract, transform, load (ETL) pipelines can’t. They require specialized architecture patterns, governance from the start, and attention to challenges like training-serving skew that can silently break production systems. In this guide, you can explore the core components, common pitfalls, and practical steps for building pipelines that deliver real results so that you don’t leave money on the table.

Key takeaways

Here are the main points to remember:

- An AI data pipeline is an automated system that collects, transforms, and delivers data specifically optimized for training and running machine learning models, producing artifacts like curated datasets, feature sets, and model outputs at each stage.

- Unlike traditional data pipelines focused on reporting, AI pipelines include specialized stages like feature engineering, model training, and continuous feedback loops that enable iterative improvement.

- Common architecture patterns include ETL, extract, load, transform (ELT), Lambda, Kappa, micro-batch, and retrieval-augmented generation (RAG) pipelines, each suited to different latency, volume, and use case requirements.

- Successful AI pipelines require attention to data quality, training-serving consistency, governance, and monitoring from the start (not as afterthoughts).

- Platforms like Domo help teams build governed AI-ready data pipelines with automated ingestion from 1,000+ sources, no-code and Structured Query Language (SQL)-based transformation in Magic Transform (Magic ETL), and agent-driven orchestration with Domo Workflows and Agent Catalyst.

What is an AI data pipeline?

An AI data pipeline moves and prepares data for use in AI systems through a series of automated processes. These pipelines handle everything from collecting raw data to transforming it into clean, structured formats and feeding it into machine learning (ML) models or other AI tools.

At its core, an AI data pipeline takes raw inputs and produces a trained, deployable model along with the monitoring infrastructure to keep it performing well. The key artifacts produced along the way include raw data (owned by data engineers), curated datasets (owned by analytics teams), feature sets (owned by ML engineers), model artifacts (owned by data scientists), and monitoring metrics (owned by machine learning operations (MLOps) teams). Each handoff represents a trust boundary where quality gates should exist.

Why does this matter? AI is only as good as the data it learns from. Poor-quality data results in inaccurate predictions and missed insights. A well-designed pipeline ensures that data is accurate, timely, and ready to support decision-making or automation.

A minimum viable AI pipeline includes five core components: ingestion (pulling data from sources), transformation (cleaning and preparing data), feature engineering (creating model inputs), training and inference (building and running models), and monitoring (tracking performance over time).
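As a rough illustration of how those five components fit together, the sketch below chains them as plain Python functions. The sample rows, the function names, and the threshold-style "model" are all hypothetical stand-ins. A real pipeline would swap in actual connectors, proper feature logic, and a trained model.

```python
from statistics import mean


def ingest():
    """Stand-in for pulling rows from a source system (hypothetical data)."""
    return [
        {"customer": "a", "spend": "120.0", "churned": "0"},
        {"customer": "b", "spend": "15.5", "churned": "1"},
        {"customer": "c", "spend": None, "churned": "1"},  # incomplete row
        {"customer": "d", "spend": "98.0", "churned": "0"},
    ]


def transform(rows):
    """Drop incomplete rows and cast string fields to numeric types."""
    return [
        {"customer": r["customer"], "spend": float(r["spend"]), "churned": int(r["churned"])}
        for r in rows
        if r["spend"] is not None
    ]


def engineer_features(rows):
    """Create a model input: does this customer spend less than average?"""
    average_spend = mean(r["spend"] for r in rows)
    return [{**r, "low_spend": r["spend"] < average_spend} for r in rows]


def train(rows):
    """Toy 'model' that ignores its input and predicts churn for low spenders."""
    return lambda row: 1 if row["low_spend"] else 0


def monitor(model, rows):
    """Report accuracy so degradation can be tracked over time."""
    correct = sum(model(r) == r["churned"] for r in rows)
    return correct / len(rows)


def run_pipeline():
    rows = engineer_features(transform(ingest()))
    model = train(rows)
    # Scoring on the training rows keeps the sketch short; use held-out data in practice.
    return monitor(model, rows)
```

Each function boundary here corresponds to one of the handoffs described above, which is where a quality gate would sit in a production version.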

AI data pipelines vs traditional data pipelines

"How is this different from what I already have?" The question comes up constantly. Traditional data pipelines and AI data pipelines share DNA. Both move data from point A to point B. But they serve fundamentally different purposes and operate with different rhythms.

Traditional pipelines are designed for reporting and analytics. They follow a linear flow: extract data, transform it according to business rules, load it into a warehouse, and serve it to dashboards. The output is a report or visualization that humans interpret.

AI pipelines extend this foundation with additional stages and (critically) with iteration built into their design. They do not just move data. They prepare it for algorithms that will learn from it, make predictions, and improve over time based on outcomes.

At scale, the difference is also operational. Traditional pipelines can tolerate more tool sprawl because the output is usually a dashboard refresh. AI pipelines feed production systems, models, AI agents, and automated workflows, so gaps in governance, auditability, or access control tend to show up quickly. And loudly.

The following comparison highlights the key differences:

The ETL pipeline is not obsolete. It is foundational. AI data pipelines build on ETL principles while adding the machinery needed for machine learning: feature stores, model registries, evaluation gates, and continuous monitoring.

How AI data pipelines work

AI data pipelines typically include several stages, each designed to handle a specific part of the data journey. Understanding these stages helps you identify where bottlenecks occur and where quality gates should exist.

Data ingestion

Data ingestion pulls data from sources such as databases, application programming interfaces (APIs), Internet of Things (IoT) devices, or files. This can be done in batch mode (scheduled intervals) or real time (streaming data).

The sheer diversity of sources is what trips up most data engineers at this stage. Customer data might live in a customer relationship management (CRM) system, transaction data in a billing system, and behavioral data in web analytics. Each has different formats, update frequencies, and connectivity requirements. A well-designed ingestion layer abstracts these differences so downstream stages receive consistent inputs regardless of where the data originated.

Hybrid environments add another layer of complexity. Plenty of organizations still run critical systems in on-premises enterprise resource planning (ERP) systems and databases, while newer workloads live in cloud apps and cloud data warehouses. If your ingestion layer cannot reliably pull from both without months of custom integration work, your AI timeline gets... ambitious.

Connector breadth and governance matter here. Domo Data Integration includes 1,000+ prebuilt connectors (plus custom options) for cloud apps, databases, files, and on-prem systems, so teams spend less time maintaining connectors and more time designing a pipeline that holds up in production.

Data transformation and feature engineering

Data transformation cleans, filters, normalizes, or enriches the data so it's useful. Removing duplicates. Standardizing formats. Creating calculated fields. Applying business rules.

Feature engineering is where AI pipelines diverge most significantly from traditional ETL. This stage creates the specific inputs that machine learning models need, converting raw data into signals the algorithm can learn from. A "days since last purchase" feature, for example, might be more predictive than a raw timestamp. Creating features that look predictive in isolation but introduce subtle data leakage when combined with other signals? That happens more often than you'd think. Always validate feature logic against your prediction timeline before adding new features to production.
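Here is a minimal sketch of the "days since last purchase" feature, written so it respects the prediction timeline. The function name and the sample dates are illustrative assumptions. The point is that purchases made after the prediction date are ignored, so the feature can't leak future information.

```python
from datetime import date


def days_since_last_purchase(purchase_dates, prediction_date):
    """Days between prediction_date and the latest purchase on or before it.

    Purchases after prediction_date are excluded to avoid temporal leakage.
    Returns None when the customer has no eligible purchase.
    """
    eligible = [d for d in purchase_dates if d <= prediction_date]
    if not eligible:
        return None
    return (prediction_date - max(eligible)).days


purchases = [date(2024, 1, 5), date(2024, 2, 20), date(2024, 3, 15)]
print(days_since_last_purchase(purchases, date(2024, 3, 1)))  # 10 (the March 15 purchase is ignored)
```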

For organizations running multiple models, a feature store becomes essential. A feature store is a centralized repository that stores feature definitions and computed values, ensuring the same feature is calculated identically during training and when the model runs in production. Feature stores typically have two components: an offline store for batch training (where you need historical feature values) and an online store for real-time inference (where you need the latest feature values with low latency).
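To make the offline/online split concrete, here is a toy in-memory sketch. `TinyFeatureStore` and its methods are hypothetical and don't mirror any real feature store product. The idea it illustrates is that one registered definition feeds both the historical record used for training and the latest value used for inference.

```python
from datetime import date


class TinyFeatureStore:
    """Illustrative in-memory feature store (not a real product API)."""

    def __init__(self):
        self._definitions = {}  # feature name -> function(raw_record) -> value
        self._offline = []      # append-only history: (entity_id, as_of, name, value)
        self._online = {}       # latest value only: (entity_id, name) -> value

    def register(self, name, fn):
        self._definitions[name] = fn

    def materialize(self, entity_id, as_of, raw_record):
        """Compute each feature once and write it to both stores.

        Assumes calls arrive in chronological order, so the online store
        always holds the most recent value.
        """
        for name, fn in self._definitions.items():
            value = fn(raw_record)
            self._offline.append((entity_id, as_of, name, value))
            self._online[(entity_id, name)] = value

    def get_online(self, entity_id, name):
        """Low-latency lookup of the latest value, for inference."""
        return self._online[(entity_id, name)]

    def get_history(self, entity_id, name):
        """Historical values with their as-of dates, for batch training."""
        return [(as_of, v) for e, as_of, n, v in self._offline if e == entity_id and n == name]


store = TinyFeatureStore()
store.register("late_payment_ratio", lambda r: r["late_payments"] / r["invoices"])
store.materialize("cust-1", date(2024, 1, 31), {"late_payments": 1, "invoices": 4})
store.materialize("cust-1", date(2024, 2, 29), {"late_payments": 3, "invoices": 6})
```

Because both stores are populated from the same registered function, training and serving can't quietly drift apart in how the feature is calculated.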

Dataset versioning matters here too. When you retrain a model, you need to know exactly which versions of the data and features were used.

Data storage

Data storage places data in a system where it can be accessed by AI models, such as a cloud data warehouse or data lake. Cloud-based options are popular for their scalability and accessibility. Warehouses (e.g., Snowflake, BigQuery) support structured data and fast queries; lakes (e.g., AWS S3, Azure Data Lake) handle semi-structured or unstructured data.

In some architectures, you don't have to copy everything into a new storage layer. Data federation lets you query data in a warehouse or lake in place, which can reduce duplication and keep pipelines simpler (especially when multiple teams need governed access to the same source of truth). Domo supports data federation as part of its Data Integration layer.

Model training and inference

This stage uses the prepared data to train models or generate predictions. This may involve supervised learning (with labeled data), unsupervised learning (for pattern detection), or reinforcement learning. Outputs may flow back into dashboards or business applications.

Monitoring and feedback loops

Monitoring tracks model performance and uses outcomes to improve future predictions. But effective monitoring goes beyond checking whether your model is "working." A three-layer monitoring framework helps you catch problems before they impact business outcomes:

- Data layer monitoring tracks the health of incoming data. Key metrics include schema stability (has the structure changed?), completeness (are expected fields populated?), and distribution shift (has the statistical profile of the data changed?). Example metric: percentage of records with null values in required fields, with an alert threshold of greater than five percent.

- Feature layer monitoring ensures consistency between training and serving. Key metrics include training-serving parity (are features computed the same way?), distribution drift (have feature distributions shifted since training?), and correlation stability (do feature relationships still hold?). Example metric: Kullback-Leibler (KL) divergence between training and production feature distributions, with an alert threshold of greater than 0.1.

- Prediction layer monitoring tracks model outputs and business impact. Key metrics include calibration (do predicted probabilities match actual outcomes?), performance degradation (has accuracy dropped?), and bias signals (are predictions fair across segments?). Example metric: weekly accuracy compared to baseline, with an alert threshold of greater than five percent degradation.

This layered approach ensures the model evolves alongside your data and that you can trace problems back to their source, whether that's bad input data, drifting features, or a model that needs retraining.
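The three example metrics above can be sketched in a few lines of Python. The function names, the sample inputs, and the reading of "five percent degradation" as a relative drop are assumptions made for illustration. The thresholds are the ones quoted in the list.

```python
import math


def null_rate(records, field):
    """Data layer: share of records where a required field is missing."""
    missing = sum(1 for r in records if r.get(field) is None)
    return missing / len(records)


def kl_divergence(p, q, eps=1e-9):
    """Feature layer: KL(P || Q) for two discrete distributions over the same bins.

    eps guards against division by zero when a production bin is empty.
    """
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q) if pi > 0)


def accuracy_drop(baseline, current):
    """Prediction layer: relative accuracy degradation versus the baseline."""
    return (baseline - current) / baseline


def run_checks(records, field, train_dist, prod_dist, baseline_acc, current_acc):
    """Return one alert message per breached threshold."""
    alerts = []
    if null_rate(records, field) > 0.05:
        alerts.append("data: null rate above 5%")
    if kl_divergence(train_dist, prod_dist) > 0.1:
        alerts.append("feature: KL divergence above 0.1")
    if accuracy_drop(baseline_acc, current_acc) > 0.05:
        alerts.append("prediction: accuracy degraded more than 5%")
    return alerts


sample_records = [{"email": "a@x.com"}, {"email": None}, {"email": "b@x.com"}, {"email": "c@x.com"}]
alerts = run_checks(sample_records, "email", [0.5, 0.3, 0.2], [0.2, 0.3, 0.5], 0.90, 0.84)
```

With these sample inputs all three layers raise an alert, which is the kind of signal that tells you whether to look at the source data, the feature logic, or the model first.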

A retail business might ingest sales and customer behavior data daily, transform and enrich it to include customer segments, store it in a warehouse, and use it to forecast inventory needs via predictive models.

How AI enhances and automates data pipelines

Will AI replace ETL? No. But AI is transforming how ETL works.

AI-powered automation handles many of the tedious, error-prone tasks that used to require manual effort. Intelligent systems can now detect schema changes and adapt mappings automatically. They identify anomalies and data quality issues before they corrupt downstream analytics. They generate transformation code for repetitive patterns. They optimize query performance based on usage patterns.

AI can also change what the end of a pipeline looks like. Instead of stopping at a dashboard or a weekly model run, some teams now use AI agents to watch pipeline outputs, spot exceptions, and kick off the next step automatically (think: open a ticket, enrich a dataset, or trigger an approval workflow). That's the idea behind Agent Catalyst: pipelines that act, not just move data.

There's a clear boundary between what AI can automate reliably and what still requires human judgment.

AI can automate:

- Schema mapping and field matching across sources

- Anomaly detection and data quality flagging

- Code generation for standard transformations

- Performance optimization and resource allocation

- Pattern recognition in data flows

Still requires human judgment:

- Domain modeling and business rule definition

- Governance decisions about data access and retention

- Accountability for data quality and compliance

- Edge case handling and exception logic

- Strategic decisions about what data matters

The practical implication: AI automation frees data engineers to focus on architecture, optimization, and model outcomes rather than maintenance work.

Why AI data pipelines matter

AI data pipelines bring several advantages to businesses and teams. The value looks different depending on your role, but the underlying benefits apply across the organization:

- Time savings: Automate repetitive tasks like data cleaning and formatting

- Scalability: Handle large volumes of data without overwhelming analysts or systems

- Improved data quality: Reduce errors by applying consistent rules and validations

- Quicker insights: Get results from AI models in real time or near real time

- Confident decisions: Use accurate data to power more confident choices

Consider a sales team trying to track campaign performance. Without a pipeline, they would pull reports manually, clean spreadsheets, and hope for accuracy. With a pipeline, all of this is done automatically. Data flows in, gets cleaned, and is visualized in a dashboard. The team spends less time wrangling data and more time using it.

Here's how different roles benefit:

- HR: Use pipelines to monitor engagement metrics and forecast attrition

- Marketing: Automatically segment audiences and measure campaign impact

- Finance: Track spending in real time and flag anomalies instantly

- Operations: Monitor logistics and flag disruptions as they happen

Types of AI data pipelines

No one-size-fits-all approach exists for AI data pipelines. Your data strategy, infrastructure, and real-time needs will influence which architectural pattern best supports your goals.

Here are the most widely used AI data pipeline models, their characteristics, and when to use each:

ETL (extract, transform, load)

This traditional pattern involves extracting data from source systems, transforming it into a usable format, and then loading it into a centralized storage system. It's ideal when you want strict data quality checks before loading or when the transformation process is computationally intensive.

Best for:

- Compliance-heavy environments

- Historical analysis

- Pre-aggregated, clean data sets

Example: A healthcare provider consolidates patient records from multiple clinics, cleanses and anonymizes the data, and then loads it into a secure data warehouse for reporting.

ELT (extract, load, transform)

A modern approach for cloud-native systems, ELT loads raw data first and performs transformations within the data warehouse. This makes the most of the power and scale of modern databases and allows more flexibility for downstream use.

Best for:

- High-volume, schema-diverse data

- Organizations using cloud warehouses like Snowflake or BigQuery

- Teams who want quick ingestion with later transformation

Example: A retail chain loads all raw sales transactions into BigQuery, then uses SQL to model different views for finance, operations, and marketing.

Lambda architecture

This hybrid model combines batch and real-time data processing. The batch layer processes historical data for accuracy, while the real-time layer provides immediate insights on fresh data. Results are merged to deliver comprehensive views.

Best for:

- Companies that want both real-time monitoring and deep historical analysis

- Use cases like fraud detection and personalized recommendations

Example: A financial services company monitors credit card transactions in real time while analyzing a week's worth of historical data every night to improve fraud models.

Kappa architecture

Designed to simplify Lambda, Kappa architecture processes all data as a real-time stream. It discards the batch layer and assumes that incoming data flows continuously and can be replayed as needed.

Best for:

- Organizations prioritizing real-time applications

- Teams with advanced stream-processing capabilities

Example: A logistics company monitors GPS signals from its delivery fleet to update routes dynamically and estimate delivery times in real time.

Micro-batch pipelines

A hybrid between batch and real-time. Micro-batching collects small amounts of data over short intervals (every minute, say) before processing it. This balances the lower complexity of batch processing with the timeliness of streaming.
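A small sketch of the grouping step, assuming events arrive as `(timestamp_in_seconds, payload)` pairs. The function name and sample events are hypothetical, and a real micro-batch engine would process each window as it closes rather than grouping a finished list.

```python
from collections import defaultdict


def micro_batches(events, window_seconds=60):
    """Group (timestamp_seconds, payload) events into fixed-size time windows.

    Returns a dict mapping each window's start time to its payloads,
    ordered by window start.
    """
    batches = defaultdict(list)
    for timestamp, payload in events:
        window_start = (timestamp // window_seconds) * window_seconds
        batches[window_start].append(payload)
    return dict(sorted(batches.items()))


events = [(5, "order-1"), (42, "order-2"), (61, "order-3"), (130, "order-4")]
print(micro_batches(events))
# {0: ['order-1', 'order-2'], 60: ['order-3'], 120: ['order-4']}
```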

Best for:

- Moderate latency tolerance (seconds to minutes)

- Companies that want near-real-time insights without managing stream infrastructure

Example: An e-commerce platform updates product inventory every two minutes based on online purchases.

RAG pipelines

Retrieval-Augmented Generation (RAG) pipelines represent an emerging pattern for organizations building applications with large language models. Unlike traditional ML pipelines that work with structured, tabular data, RAG pipelines process unstructured content (documents, PDFs, support tickets, knowledge bases) and make it searchable for AI systems.

A RAG pipeline follows a distinct workflow:

- Ingestion: Collect documents from file systems, APIs, or content management systems

- Parsing: Extract text from PDFs, Word documents, HTML, or other formats (often using optical character recognition (OCR) for scanned documents)

- Chunking: Split long documents into smaller segments that fit within model context windows

- Embedding generation: Convert text chunks into vector representations using embedding models

- Vector store indexing: Store embeddings in a vector database (like Pinecone, Weaviate, or Chroma) for similarity search

- Retrieval evaluation: Test that relevant content is actually retrieved for sample queries
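The chunking, embedding, indexing, and retrieval evaluation steps above can be sketched end to end in plain Python. This is a toy: the word-count "embedding" stands in for a real embedding model, a list stands in for a vector database, and every function name and sample document is an assumption for illustration.

```python
import math
from collections import Counter


def chunk(text, max_words=8, overlap=2):
    """Split text into word windows that overlap slightly at the edges."""
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def embed(text):
    """Toy embedding: lowercase word counts (stand-in for an embedding model)."""
    return Counter(word.strip(".,?!").lower() for word in text.split())


def cosine(a, b):
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents, max_words=8, overlap=2):
    """Stand-in for a vector store: a list of (chunk_text, vector) pairs."""
    return [(c, embed(c)) for doc in documents for c in chunk(doc, max_words, overlap)]


def retrieve(index, query, k=1):
    query_vector = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def hit_rate(index, labelled_queries, k=1):
    """Retrieval evaluation: share of queries whose expected snippet is in the top k."""
    hits = sum(
        any(expected in text for text in retrieve(index, query, k))
        for query, expected in labelled_queries
    )
    return hits / len(labelled_queries)


documents = [
    "Refunds are processed within five business days.",
    "Password resets require a verified email address.",
]
index = build_index(documents)
labelled = [
    ("how do I reset my password", "Password resets"),
    ("when are refunds processed", "Refunds are processed"),
]
```

Tracking something like `hit_rate` over a fixed set of sample queries is one simple way to notice when a re-embedding or chunking change makes retrieval worse.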

Best for:

- Customer support automation using internal knowledge bases

- Document Q&A applications

- Search and discovery over unstructured content

RAG pipelines don't train a model on your data. They make your data retrievable so a pre-trained language model can reference it. This means refresh strategies matter differently. You need to decide when to re-embed content (after updates? on a schedule?), how to handle document deduplication, and how to evaluate retrieval quality over time.

In practice, RAG also benefits from governance the same way BI does. If your AI agent can retrieve a document, that document needs access controls, audit logging, and clear ownership, especially when you're pulling from shared drives and internal knowledge bases. Agent Catalyst is designed around this pattern by connecting AI agents to governed Domo datasets and FileSets using RAG capabilities, so teams can keep the "what can this agent see?" conversation grounded in policy instead of guesswork.

Common challenges and how to overcome them

Building AI data pipelines isn't just about connecting tools. It's about anticipating where things go wrong. The following challenges appear consistently across organizations, regardless of industry or scale.

Challenge: Data silos and fragmented sources

Detection: Teams maintain separate spreadsheets or databases for the same entities; reconciliation requires manual effort; no single source of truth exists for key metrics.

Prevention: Implement a centralized data layer that connects to all sources. Establish data ownership and define which system is authoritative for each entity. Use data contracts between teams to specify schema, freshness, and quality expectations.
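A data contract can start as something very small. The sketch below checks only the schema part, assuming a contract expressed as a mapping from field name to expected Python type. The `CONTRACT` fields and the function name are hypothetical examples, not a standard format.

```python
# Hypothetical contract: field name -> expected Python type
CONTRACT = {"customer_id": str, "signup_date": str, "monthly_spend": float}


def contract_violations(record, contract):
    """Return a list of human-readable schema problems for one record."""
    problems = []
    for field, expected_type in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


bad_record = {"customer_id": "c-1", "monthly_spend": "42"}
print(contract_violations(bad_record, CONTRACT))
# ['missing field: signup_date', 'monthly_spend: expected float, got str']
```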

Challenge: Hybrid legacy and cloud complexity

Detection: A pipeline works for cloud sources but breaks on on-prem data; teams maintain separate patterns for legacy systems vs modern platforms; adding one more legacy table turns into a quarter-long project.

Prevention: Standardize on hybrid connectivity that can ingest from on-prem and cloud systems through the same governance and monitoring model. The goal is one pipeline architecture that spans both environments, not two parallel stacks that drift apart over time.

Challenge: Tool sprawl and governance gaps

Detection: Different teams build pipelines in different tools; access policies vary by system; audit requests turn into a scavenger hunt across logs, warehouses, and workflow engines.

Prevention: Consolidate where it makes sense. Centralized AI pipeline governance (access control, audit trails, and consistent policies from ingestion to model output) helps IT and data leaders scale AI without turning compliance into a full-time job.

Challenge: Data quality degradation

Detection: Model performance drops without code changes; downstream reports show unexpected nulls or outliers; business users report "the numbers don't look right."

Prevention: Implement automated validation gates at each pipeline stage. Define quality service level objectives (SLOs) (e.g., completeness greater than 99 percent, freshness within four hours) and alert when thresholds are breached. Use tools like Great Expectations or dbt tests to codify quality rules.
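As a hand-rolled illustration of a validation gate (not the Great Expectations or dbt API), the sketch below checks the two example SLOs from the paragraph above. The function name, parameters, and sample rows are assumptions.

```python
from datetime import datetime, timedelta


def quality_gate(rows, required_fields, last_loaded_at, now,
                 min_completeness=0.99, max_age=timedelta(hours=4)):
    """Return SLO violations; an empty list means the gate passes."""
    violations = []
    complete_rows = sum(
        all(row.get(field) is not None for field in required_fields) for row in rows
    )
    completeness = complete_rows / len(rows) if rows else 0.0
    # The SLO is "greater than 99 percent", so exactly 99 percent still fails.
    if completeness <= min_completeness:
        violations.append(f"completeness {completeness:.1%} is not above {min_completeness:.0%}")
    age = now - last_loaded_at
    if age > max_age:
        violations.append(f"data is {age} old, limit is {max_age}")
    return violations


now = datetime(2024, 1, 1, 12, 0)
rows = [{"id": i, "amount": 1.0} for i in range(98)] + [
    {"id": 98, "amount": None},
    {"id": 99, "amount": None},
]
problems = quality_gate(rows, ["id", "amount"], datetime(2024, 1, 1, 7, 0), now)
```

Here both checks fail: completeness is 98 percent and the data is five hours old. In a pipeline, a non-empty result would stop the stage and fire an alert rather than let the data flow downstream.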

Challenge: Scaling bottlenecks

Detection: Pipeline run times increase as data volume grows; jobs fail due to memory or timeout limits; teams wait hours for data refreshes.

Prevention: Design for horizontal scaling from the start. Use partitioning strategies to process data in parallel. Consider streaming or micro-batch patterns for high-volume sources. Monitor resource utilization and set up auto-scaling where infrastructure supports it.

Challenge: MLOps and data engineering silos

Detection: Data scientists build models that can't be deployed; production models use different feature logic than training; no clear handoff process exists between teams.

Prevention: Establish shared ownership of the feature engineering layer. Use feature stores to ensure training and serving consistency. Create deployment checklists that require sign-off from both data engineering and ML teams.

Training-serving skew and data leakage

Two challenges deserve special attention because they're often invisible until models fail in production.

Training-serving skew occurs when features are computed differently during model training than during inference. The model performs well in testing but degrades in production because it's seeing inputs it wasn't trained on.

Watch for these detection signals:

- Model accuracy in production is significantly lower than in validation

- Feature distributions differ between training logs and production monitoring

- The same feature has different values for the same entity at the same timestamp

Use these steps to prevent training-serving skew:

- Use shared feature definitions stored in a feature store

- Version transformation code alongside model code

- Implement continuous integration (CI) tests that compare feature outputs between training and serving paths

- Run shadow deployments that compute features both ways and compare results

- Monitor feature distributions in production against training baselines
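A CI-style parity test can be as simple as running both code paths over the same sample entities and listing any disagreements. In this sketch the two feature functions are hypothetical and deliberately inconsistent (one excludes refunds, the other doesn't) to show what a caught skew looks like.

```python
import math


def feature_parity_mismatches(entities, training_fn, serving_fn, tolerance=1e-9):
    """Return the IDs of entities whose feature value differs between the two paths."""
    return [
        entity_id
        for entity_id, raw in entities.items()
        if not math.isclose(training_fn(raw), serving_fn(raw), rel_tol=0.0, abs_tol=tolerance)
    ]


def training_avg_order(orders):
    """Training path: refunds (negative amounts) are excluded."""
    paid = [amount for amount in orders if amount > 0]
    return sum(paid) / len(paid) if paid else 0.0


def serving_avg_order(orders):
    """Serving path: refunds are accidentally included, which causes skew."""
    return sum(orders) / len(orders) if orders else 0.0


sample_entities = {"cust-1": [20.0, 40.0], "cust-2": [30.0, -30.0, 60.0]}
print(feature_parity_mismatches(sample_entities, training_avg_order, serving_avg_order))
# ['cust-2']
```

A test like this passes only when the list is empty, so a divergence in feature logic fails the build instead of surfacing later as degraded production accuracy.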

Data leakage occurs when information that would not be available at prediction time (including the outcome itself or data from after the prediction point) contaminates the feature set, producing artificially inflated model performance that doesn't hold in production.

Here are the most common types of data leakage:

- Target leakage: Using the outcome (or a proxy for it) as a feature

- Temporal leakage: Using data from after the prediction point

- Preprocessing leakage: Fitting scalers or encoders on the full dataset before train/test split

These signs often point to data leakage:

- Model performance is "too good to be true" (e.g., 99 percent accuracy on a hard problem)

- Performance drops dramatically when deployed

- Features have suspiciously high correlation with the target

Use these steps to prevent data leakage:

- Use time-aware train/test splits that respect the prediction timeline

- Implement point-in-time joins that only use data available at prediction time

- Define labeling windows carefully. When did you know the outcome?

- Audit feature availability: for each feature, ask "would I have this value at prediction time?"

- Use holdout sets with strict time cutoffs that simulate production conditions
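Two of these steps, time-aware splits and point-in-time lookups, are easy to sketch. The function names, the `event_date` field, and the sample data below are assumptions for illustration.

```python
from datetime import date


def time_split(rows, cutoff):
    """Train on events strictly before the cutoff, test on the rest."""
    train = [r for r in rows if r["event_date"] < cutoff]
    test = [r for r in rows if r["event_date"] >= cutoff]
    return train, test


def point_in_time_value(history, as_of):
    """Latest value that was effective on or before as_of, or None.

    history is a list of (effective_date, value) pairs.
    """
    eligible = [(effective, value) for effective, value in history if effective <= as_of]
    if not eligible:
        return None
    return max(eligible, key=lambda pair: pair[0])[1]


rows = [
    {"customer": "a", "event_date": date(2024, 1, 10)},
    {"customer": "b", "event_date": date(2024, 2, 20)},
    {"customer": "c", "event_date": date(2024, 3, 5)},
    {"customer": "d", "event_date": date(2024, 4, 1)},
]
train_rows, test_rows = time_split(rows, cutoff=date(2024, 3, 1))

plan_history = [(date(2024, 1, 1), "basic"), (date(2024, 3, 1), "premium")]
print(point_in_time_value(plan_history, date(2024, 2, 15)))  # basic
```

The lookup returns "basic" because the upgrade to "premium" hadn't happened yet on February 15. Joining on the current plan instead would quietly hand the model information from the future.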

How to design an AI data pipeline

Designing a pipeline doesn't have to be overwhelming. Here's a practical framework:

1. Define your goal

Start with a clear purpose. Are you predicting customer churn? Personalizing marketing offers? Understanding employee engagement trends? Your objective determines what data you need and how it should flow.

2. Identify your data sources

Map out all the systems where your data lives, like email platforms, sales databases, or survey tools. Make sure these sources are accessible and secure.

If you're working in a hybrid environment, call that out early. Your pipeline plan should include both cloud apps and legacy on-prem systems (like ERP) so you do not end up with a "cloud-only" AI model that ignores half the business.

3. Clean and transform your data

Use tools to clean out duplicates, fix errors, and standardize formats. This is where ETL or ELT tools come in handy. You will also want to enrich data, for example, by adding customer segmentation or calculating averages.

4. Choose the right storage

Depending on how much data you have and how fast you want access to it, you may use a cloud warehouse, data lake, or a hybrid solution.

5. Integrate with AI tools

Feed your clean data into AI models. This could be through built-in tools like Domo AI or custom Python models. Make sure the models get the right data at the right time.

If you're building with large language models, this is also where your RAG pipeline choices show up: what content you ingest, how you store it (often in a vector database), and how you enforce access controls for retrieval.

6. Automate and schedule

Set up your pipeline to run on a schedule or in response to triggers (like a new file upload). Automation ensures data is always fresh and insights stay relevant.

For operational use cases, it helps to plan for bidirectional flows. Some pipelines don't just ingest data. They also send scored outputs back into business systems (for example, pushing churn risk scores into a CRM) so teams can act where they already work. Domo's Integration Suite supports these send-back patterns as part of Domo Data Integration.

7. Monitor and improve

Track how your pipeline performs and how accurate your models are. Make improvements as needed, especially when new data sources are added or business goals change.

Example: predicting customer churn

Say a telecom provider wants to reduce customer churn. Here's how the pipeline might look:

- Goal: Predict which customers are likely to cancel their service

- Sources: CRM, billing system, customer support tickets

- Transformation: Clean up missing values, create features like "number of complaints" or "late payments"

- Storage: Load into Snowflake

- Model: Train a logistic regression model in Python

- Deployment: Run weekly predictions and surface at-risk customers in Domo dashboards

- Monitoring: Track model accuracy and update features quarterly

This approach helps the customer success team proactively reach out before customers leave.
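For a sense of what the model step could look like, here is a dependency-free logistic regression trained with plain gradient descent on the two example features. The training data is invented for illustration, and in practice a team would more likely reach for an established ML library than hand-write the training loop.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(X, y, learning_rate=0.1, epochs=2000):
    """Fit weights and a bias with full-batch gradient descent on log loss."""
    n_features = len(X[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for features, label in zip(X, y):
            prediction = sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)
            error = prediction - label
            for j, x in enumerate(features):
                grad_w[j] += error * x
            grad_b += error
        weights = [w - learning_rate * g / len(X) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(X)
    return weights, bias


def churn_probability(model, features):
    weights, bias = model
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


# Hypothetical training data: (number of complaints, late payments) -> churned?
X = [(0, 0), (1, 0), (0, 1), (4, 2), (5, 3), (3, 4)]
y = [0, 0, 0, 1, 1, 1]
model = train_logistic_regression(X, y)

# Score a customer with 5 complaints and 3 late payments
risk = churn_probability(model, (5, 3))
```

The weekly deployment step would then run `churn_probability` over current customers and surface the highest scores in a dashboard.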

Example: audience segmentation for targeted marketing

A retail brand wants to improve email campaign performance. Here's how they could design a pipeline:

- Goal: Segment customers based on behavior for targeted offers

- Sources: E-commerce platform, email marketing tool, website analytics

- Transformation: Create features like "last purchase date," "email open rate," and "average cart size"

- Storage: Centralize in BigQuery

- Model: Apply clustering algorithms to create behavioral segments

- Deployment: Sync segments to the marketing platform

- Monitoring: Track open rates, conversions, and segment performance over time

Data governance and pipeline security

Governance must be part of your pipeline design from the start. Not bolted on at the end. And honestly, that's the part most guides skip over. Effective governance addresses security, privacy, and accountability at every stage from ingestion through model output.

For many organizations, governance also doubles as a tool-sprawl fix. When access policies, audits, and controls live in one place, teams spend less time reconciling "who can see what" across five different systems. IT leaders get centralized oversight, and delivery teams get fewer last-minute surprises.

Core governance requirements include:

- Data lineage: Track where data originates, how it transforms, and where it flows. Tools like Apache Atlas or dbt docs can automate lineage tracking across your pipeline.

- Access control: Implement role-based access controls (RBAC) with least-privilege principles. Use row-level and column-level security to restrict sensitive data by role. Avoid static credentials. Use workload identity or short-lived tokens with rotation.

- Encryption: Encrypt data at rest and in transit. Use customer-managed keys (CMK) through your cloud provider's key management service for sensitive workloads.

- Secrets management: Never hardcode credentials in pipeline code. Use secrets managers (HashiCorp Vault, AWS Secrets Manager, Azure Key Vault) with automatic rotation.

- Data contracts: Establish agreements between pipeline producers and consumers that specify schema, freshness SLOs, and quality requirements. When contracts are violated, alerts should fire before bad data reaches production.

- Compliance alignment: Map your controls to relevant regulations (General Data Protection Regulation (GDPR), Health Insurance Portability and Accountability Act (HIPAA), California Consumer Privacy Act (CCPA)) and maintain documentation that demonstrates compliance. Define retention schedules and deletion procedures for each data category.

- Bias mitigation: Ensure your AI outputs aren't reinforcing biased data patterns. Monitor model predictions across demographic segments and flag disparities.

- Audit logging: Maintain immutable logs of data changes, access events, and model decisions. Centralize logs in a security information and event management (SIEM) system for security monitoring and incident response.

Domo supports these requirements with governance features like user access management, row-level security, and built-in data audits.

AI pipeline use cases by department

AI data pipelines create value across the organization:

- HR: Predict turnover, monitor employee sentiment, forecast workforce needs

- Sales: Identify warm leads, score opportunities, forecast quotas

- Marketing: Create personalized content, optimize campaigns, run A/B tests

- Finance: Detect fraud, model cash flow, optimize budgets

- Customer support: Route tickets, predict resolution times, measure satisfaction

- Operations: Predict inventory needs, optimize logistics, monitor equipment

How Domo supports AI data pipelines

Domo makes it easy to build, automate, and monitor AI data pipelines, all without needing a team of engineers. With built-in tools and pre-integrated AI services, you can:

- Use Domo Data Integration to connect to 1,000+ prebuilt data sources (plus custom options) across cloud apps, databases, files, and on-prem systems. It also supports data federation when you want to query data in place, and it includes an Integration Suite for sending data back into source systems for operational workflows.

- Use Magic Transform (Magic ETL) to transform data without writing code, while still supporting SQL, Python, and R in the same pipeline environment. Magic Transform can also run externally hosted ML models inside ETL flows, so AI inference becomes a pipeline step rather than a separate system. Transform jobs run on Domo's Adrenaline engine and can be scheduled with failure alerts, so teams know quickly when something breaks.

- Orchestrate multi-step pipeline actions with Domo Workflows, including agent-triggered steps when you want an automated loop from insight to action.

- Train, deploy, and interpret models using Domo AI services, with flexibility to work with DomoGPT, third-party models, or custom models depending on your team's needs.

- Apply security, compliance, and governance with built-in policies including row-level security and audit logging.

- Use Agent Catalyst to add human-in-the-loop oversight and autonomous monitoring. Agent Catalyst gives pipelines "agency." AI agents can query governed Domo datasets and FileSets, detect anomalies in outputs, and trigger corrective actions or downstream processes using prebuilt agent templates and workflow orchestration.

- Visualize outputs in dashboards with alerts and storytelling tools.

Whether you're forecasting trends or finding anomalies, Domo helps your team go from raw data to real results, without months of custom integration cycles.

AI pipelines aren't just for data scientists. With the right platform, anyone can harness the power of AI-ready data.