What Is Machine Learning? How It Works, Types, and Applications

Machine learning enables computers to recognize patterns in data and improve their accuracy over time, all without human intervention for each new situation. This article explains the core concepts behind machine learning (ML), breaks down the differences between supervised, unsupervised, semi-supervised, and reinforcement learning, and shows how businesses apply these techniques to automate decisions and uncover insights that would be impossible to find manually.

Key takeaways

Here's the short version of what you'll learn:

- Machine learning is a subset of AI that enables computers to learn from data and improve without explicit programming

- The four main types are supervised, unsupervised, semi-supervised, and reinforcement learning

- Businesses use ML for recommendations, fraud detection, forecasting, and process automation

- Deep learning extends ML capabilities using neural networks for complex pattern recognition

- Getting started with ML requires understanding your data and choosing the right tools for your goals

- ML only creates value when teams can govern and operationalize model outputs into decisions and workflows

What is machine learning?

Machine learning is a method of teaching computers to recognize patterns in data and make decisions based on what they find, without being explicitly programmed for each scenario. Think of it like teaching a child to recognize dogs: instead of listing every possible dog characteristic, you show them hundreds of pictures until they learn to identify dogs on their own.

Here's a concrete example. In spam detection, emails go in as input, and the model returns a classification of spam or not spam as output. The algorithm learns which patterns (certain words, sender behaviors, formatting) tend to appear in spam messages by studying thousands of labeled examples.

Unlike traditional software where a programmer writes specific rules for every situation, machine learning algorithms discover the rules themselves by analyzing data. This makes ML particularly powerful for problems where the rules are too complex to write manually or where patterns change over time.

Machine learning vs artificial intelligence

All machine learning is AI, but not all AI is machine learning. That's the simplest way to cut through the confusion.

Artificial intelligence is the broader concept of machines performing tasks that typically require human intelligence. This includes everything from playing chess to understanding speech to making recommendations. Machine learning is a specific approach to achieving AI, one that relies on learning from data rather than following pre-programmed rules.

Consider two approaches to building a spam filter. A rule-based AI system (not ML) might use explicit rules like "if the email contains 'free money,' mark it as spam." A machine learning system instead learns which patterns indicate spam by studying thousands of examples, discovering rules that humans might never think to write.
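To make the contrast concrete, here is a minimal sketch of both approaches side by side. The six training emails are invented for illustration, and the learned filter uses simple naive Bayes-style word scores, which is one common technique and not the only way to build a spam model.

```python
import math
from collections import Counter

# Hypothetical toy dataset: (email text, is_spam). Invented for illustration.
TRAINING_EMAILS = [
    ("free money claim your prize now", True),
    ("win cash prize click now", True),
    ("limited offer free cash", True),
    ("meeting agenda for monday", False),
    ("lunch on monday with the team", False),
    ("project status and agenda notes", False),
]

def rule_based_is_spam(text):
    """Rule-based approach: a programmer decides the pattern up front."""
    return "free money" in text.lower()

def train_word_scores(examples):
    """Learn a log-odds score per word from labeled examples (add-one smoothing)."""
    spam_counts, ham_counts = Counter(), Counter()
    for text, is_spam in examples:
        (spam_counts if is_spam else ham_counts).update(text.lower().split())
    vocab = set(spam_counts) | set(ham_counts)
    spam_total = sum(spam_counts.values()) + len(vocab)
    ham_total = sum(ham_counts.values()) + len(vocab)
    return {
        word: math.log((spam_counts[word] + 1) / spam_total)
        - math.log((ham_counts[word] + 1) / ham_total)
        for word in vocab
    }

def learned_is_spam(text, scores):
    """Sum the learned scores; words never seen in training contribute nothing."""
    return sum(scores.get(word, 0.0) for word in text.lower().split()) > 0

WORD_SCORES = train_word_scores(TRAINING_EMAILS)
```

An email like "claim your cash prize" slips past the hand-written rule because it never says "free money," but the learned scores flag it because those words appeared mostly in the spam examples.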

The following comparison clarifies how these terms relate to each other:

| Term | Definition | Typical Approach | Best For | Examples |
| --- | --- | --- | --- | --- |
| Artificial Intelligence | Machines performing tasks requiring human-like intelligence | Rule-based systems, expert systems, or learning algorithms | Any task requiring decision-making or pattern recognition | Chess engines, virtual assistants, recommendation systems |
| Machine Learning | Subset of AI that learns patterns from data | Statistical algorithms trained on datasets | Problems with complex patterns or changing conditions | Spam filters, fraud detection, image recognition |
| Deep Learning | Subset of ML using layered neural networks | Neural networks with multiple hidden layers | Unstructured data like images, audio, and text | Voice assistants, facial recognition, language translation |
| Generative AI | Application of deep learning that creates new content | Large models trained on massive datasets | Content creation and creative tasks | ChatGPT, image generators, code assistants |

Examples of AI that is not machine learning include early chess programs that searched game trees using hand-coded evaluation rules, and expert systems from the 1980s that encoded human expertise as if-then statements. These systems could perform intelligent tasks but did not learn or improve from data.

Why machine learning matters for business

Insights from machine learning can improve business intelligence and help organizations make data-driven decisions. With machine learning, organizations can automate processes and analyze larger, more complex datasets faster than before. It can help businesses identify opportunities for growth and profit as well as previously unnoticed risks.

For finance teams, ML means more timely demand forecasting and reduced fraud losses. Marketing departments can automatically segment customers and predict which accounts are likely to churn. Operations leaders can optimize inventory levels and anticipate equipment failures before they happen.

The technology itself is not the value. Automated decision-making is. That's what helps teams move quickly and compete more effectively. As the volume and variety of data that organizations collect grows, machine learning tools offer a more efficient, more powerful, and more affordable way to learn from and apply data.

One practical detail that gets missed in a lot of ML conversations: predictions do not magically turn into business outcomes. Teams need a way to govern who can see model outputs, audit how those outputs get used, and route high-impact predictions into the next step of work (alerts, approvals, case management, and so on).

How does machine learning work?

Training and inference. Two distinct phases drive any machine learning system, and understanding this distinction matters before diving into the mechanics.

Training is when the model learns from historical data. During this phase, the algorithm adjusts its internal parameters to minimize errors on known examples. Think of it like studying for a test: the model reviews examples and refines its understanding.

Inference is when teams apply the trained model to new, unseen data to generate predictions. This is like taking the test on questions you haven't seen before. The model uses what it learned during training to make decisions on fresh inputs.

Here's a simple example: imagine training a model to predict house prices based on square footage. You start with random guesses about the relationship between size and price. The model makes predictions on houses where you already know the sale price, measures how far off those predictions are, and adjusts its parameters to reduce the error. After thousands of these adjustments, the model learns a reliable relationship it can apply to new listings.

Researchers publish new machine learning algorithms all the time, but nearly every algorithm's learning system contains the same general components:

- Decision process: The learning process begins when an algorithm makes an estimate based on a pattern it finds in labeled or unlabeled input data.

- Error function: The error function evaluates the estimate or prediction that the algorithm generated. Often, it compares the model's prediction with a known example to measure how far off the model is.

- Model optimization process: If the error function determines that the model can improve to fit the data points more closely, then the algorithm adjusts the model to reduce discrepancies.

- Repeat until accurate: As part of the learning process, the algorithm will repeat the error function and model optimization processes autonomously until it meets a predetermined level of accuracy.
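The house-price example and the four components above can be sketched in a few lines. This is a minimal illustration that assumes a straight-line model fit with gradient descent; the four data points, the learning rate, and the tolerance are made-up values, not recommendations.

```python
# Hypothetical data: (square footage in thousands, sale price in $1000s).
HOUSES = [(1.0, 200.0), (1.5, 300.0), (2.0, 400.0), (2.5, 500.0)]

def predict(sqft, weight, bias):
    """Decision process: estimate a price from the size."""
    return weight * sqft + bias

def mean_squared_error(data, weight, bias):
    """Error function: average squared gap between estimates and known prices."""
    return sum((predict(x, weight, bias) - y) ** 2 for x, y in data) / len(data)

def train(data, learning_rate=0.05, tolerance=1e-6, max_steps=100_000):
    """Training: repeat the error check and the adjustment until accurate enough."""
    weight, bias = 0.0, 0.0  # start from an uninformed guess
    n = len(data)
    for _ in range(max_steps):
        if mean_squared_error(data, weight, bias) < tolerance:
            break  # predetermined level of accuracy reached
        # Model optimization: nudge each parameter against its error gradient.
        grad_w = sum(2 * (predict(x, weight, bias) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(x, weight, bias) - y) for x, y in data) / n
        weight -= learning_rate * grad_w
        bias -= learning_rate * grad_b
    return weight, bias

WEIGHT, BIAS = train(HOUSES)

# Inference: apply the trained parameters to a listing the model has never seen.
NEW_LISTING_PRICE = predict(1.8, WEIGHT, BIAS)
```

Because the toy prices sit exactly on a line of $200 per square foot, the trained model prices the unseen 1,800-square-foot listing at roughly $360,000. Real data is noisier, so the error would level off above zero instead of shrinking to the tolerance.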

In business settings, there's usually another "hidden" component: the data pipeline that feeds training and inference. Data engineers spend a lot of time making sure the data is clean, current, and access-controlled (think row-level security) so models learn from the right signals and only see what they are allowed to see.

4 types of machine learning

While there are countless machine learning algorithms, they are often sorted into four main categories. You may notice that some sources list three types while others list four or five. The core paradigms are supervised, unsupervised, and reinforcement learning. Semi-supervised learning is a hybrid approach, and generative AI is sometimes listed separately because it relies on a technique called self-supervised learning. The differences come down to whether a source is listing foundational paradigms or including application families built on top of them.

Supervised learning

Supervised learning algorithms receive a set of inputs and the correct outputs. The algorithm learns by comparing its own output with those correct outputs to identify any errors. When the algorithm finds errors, it modifies its model. Teams use these types of algorithms when historical data is likely to predict future events. Strong performance on training data does not guarantee strong performance on new data, so always validate on a held-out test set that the model has never seen.

Common examples include detecting fraudulent financial transactions, identifying spam in your inbox, and predicting customer churn based on account activity.
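Here is a small sketch of why the held-out test set matters. It assumes a 1-nearest-neighbor classifier (chosen because it memorizes its training data perfectly) and an invented churn dataset where each row is ((logins per month, support tickets), churned).

```python
# Hypothetical labeled rows: ((logins_per_month, support_tickets), churned).
TRAIN_ROWS = [
    ((20, 0), False), ((18, 1), False), ((15, 0), False), ((12, 1), False),
    ((3, 4), True), ((2, 5), True), ((5, 3), True), ((1, 2), True),
]
# Held-out rows the model never sees during training.
TEST_ROWS = [
    ((10, 2), False), ((6, 4), True), ((14, 3), False), ((4, 1), True),
    ((11, 3), True),  # an active-looking customer who churned anyway
]

def nearest_neighbor_predict(train_rows, features):
    """Return the label of the closest training row (squared Euclidean distance)."""
    def squared_distance(row):
        return sum((a - b) ** 2 for a, b in zip(row[0], features))
    return min(train_rows, key=squared_distance)[1]

def accuracy(train_rows, eval_rows):
    """Share of eval_rows whose predicted label matches the known label."""
    correct = sum(
        nearest_neighbor_predict(train_rows, features) == label
        for features, label in eval_rows
    )
    return correct / len(eval_rows)

TRAIN_ACCURACY = accuracy(TRAIN_ROWS, TRAIN_ROWS)  # 1.0: every row matches itself
TEST_ACCURACY = accuracy(TRAIN_ROWS, TEST_ROWS)    # 0.8: one held-out row is missed
```

The training score looks perfect only because the model is being graded on answers it already memorized. The held-out score is the more honest estimate of how it would handle new customers.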

Unsupervised learning

In unsupervised learning, developers do not tell the algorithm what the correct answer is. Instead, the algorithm explores unlabeled data and finds structure and patterns, such as groups of similar records or unusual outliers, on its own.

Common examples include identifying customer segments for marketing campaigns, detecting anomalies in network traffic, and grouping similar products for recommendation systems.
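As a rough sketch of customer segmentation, the snippet below runs k-means clustering on a single feature. K-means is one common unsupervised algorithm among many, and the spend figures and starting centers are hypothetical.

```python
def k_means_1d(values, centers, max_iterations=100):
    """Group numbers around the nearest center, then move each center to its group's mean."""
    clusters = []
    for _ in range(max_iterations):
        clusters = [[] for _ in centers]
        for value in values:
            nearest = min(range(len(centers)), key=lambda i: abs(value - centers[i]))
            clusters[nearest].append(value)
        # An empty cluster keeps its old center so the mean is never undefined.
        new_centers = [
            sum(cluster) / len(cluster) if cluster else centers[i]
            for i, cluster in enumerate(clusters)
        ]
        if new_centers == centers:
            break  # assignments have stopped changing
        centers = new_centers
    return centers, clusters

# Hypothetical annual spend per customer, in dollars. No labels are provided.
ANNUAL_SPEND = [120, 150, 130, 900, 950, 1000, 5000, 5200]
SEGMENT_CENTERS, SEGMENTS = k_means_1d(ANNUAL_SPEND, centers=[100, 1000, 5000])
```

Nobody told the algorithm that "low," "mid," and "high" spenders exist. It settles on three groups centered near $133, $950, and $5,100 purely from the shape of the data.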

Semi-supervised learning

Teams can use semi-supervised learning algorithms for many of the same applications as supervised machine learning algorithms. The difference is that supervised algorithms use only labeled data, while semi-supervised algorithms can use both labeled and unlabeled data. Unlabeled data is cheaper and easier to acquire than labeled data, so these algorithms come in handy when labeling an entire training set would cost too much.

A common example is image classification where you have a small set of labeled images and a large collection of unlabeled ones.
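One simple way to combine the two kinds of data is self-training, sketched below: fit on the labeled examples, label only the unlabeled examples the model is confident about, and repeat. To keep it short, each "image" is reduced to a single made-up feature score, and the `margin` confidence rule is an assumption for illustration.

```python
def centroids(labeled):
    """Average feature value per label."""
    sums = {}
    for value, label in labeled:
        total, count = sums.get(label, (0.0, 0))
        sums[label] = (total + value, count + 1)
    return {label: total / count for label, (total, count) in sums.items()}

def self_train(labeled, unlabeled, margin=2.0, rounds=5):
    """Pseudo-label confident unlabeled points and fold them into the training set."""
    labeled = list(labeled)
    remaining = list(unlabeled)
    for _ in range(rounds):
        centers = centroids(labeled)
        confident, uncertain = [], []
        for value in remaining:
            ranked = sorted(centers, key=lambda label: abs(value - centers[label]))
            best, runner_up = ranked[0], ranked[1]
            # Confidence: how much closer the best centroid is than the second best.
            gap = abs(value - centers[runner_up]) - abs(value - centers[best])
            (confident if gap >= margin else uncertain).append((value, best))
        if not confident:
            break  # nothing new can be labeled with confidence
        labeled.extend(confident)
        remaining = [value for value, _ in uncertain]
    return centroids(labeled), remaining

# Hypothetical data: two expert-labeled images and five unlabeled ones.
LABELED = [(1.0, "cat"), (9.0, "dog")]
UNLABELED = [2.0, 3.0, 8.0, 7.5, 5.2]
FINAL_CENTERS, STILL_UNLABELED = self_train(LABELED, UNLABELED)
```

Four of the five unlabeled examples get absorbed into training. The ambiguous one (5.2) stays unlabeled, which is the behavior you want: a wrong pseudo-label would teach the model its own mistake.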

Reinforcement learning

Reinforcement learning algorithms resemble supervised algorithms in that they learn from feedback, but developers do not train them on labeled sample data. Instead, the algorithm learns through trial and error: it takes actions in an environment and receives rewards or penalties in return. Over time, the algorithm discovers which actions deliver the desired rewards.

Common examples include game-playing AI that learns winning strategies, robotics systems that learn to navigate environments, and autonomous vehicles that learn to make driving decisions.
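Trial-and-error learning can be sketched with tabular Q-learning, one classic reinforcement learning algorithm. The five-cell corridor, the reward of 1 for reaching the last cell, and every hyperparameter below are invented for this illustration.

```python
import random

N_STATES = 5        # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)  # step left or step right

def step(state, action):
    """Environment: move within the corridor, reward 1.0 only on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward

def train_q_table(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.3, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            best = max(q[(state, a)] for a in ACTIONS)
            greedy = [a for a in ACTIONS if q[(state, a)] == best]
            # Explore with probability epsilon, otherwise exploit (ties broken randomly).
            action = rng.choice(ACTIONS) if rng.random() < epsilon else rng.choice(greedy)
            next_state, reward = step(state, action)
            future = max(q[(next_state, a)] for a in ACTIONS)
            # Move the estimate toward reward plus discounted future value.
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = next_state
    return q

Q_TABLE = train_q_table()
# Learned policy: the highest-valued action in each non-goal cell.
POLICY = {s: max(ACTIONS, key=lambda a: Q_TABLE[(s, a)]) for s in range(N_STATES - 1)}
```

No one ever tells the agent that "right" is correct. It starts out wandering, stumbles onto the reward, and the value of that reward gradually propagates backward until the policy in every cell is to step right.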

Where generative AI fits in machine learning

Generative AI has become one of the most visible applications of machine learning, but its place in the taxonomy can be confusing. Generative AI builds on self-supervised learning, a technique where models learn from vast amounts of unlabeled data by predicting missing or next elements. For example, a language model learns by predicting the next word in a sentence, training on billions of text examples without human labeling.
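The "predict the next word" idea can be shown at toy scale with simple word-pair counts. This sketch is nowhere near a real language model (those use neural networks and vastly more text), and the three-sentence corpus is made up, but the key property is the same: the training signal comes from the text itself, not from human labels.

```python
from collections import Counter, defaultdict

def build_next_word_counts(corpus):
    """For each word, count which words follow it. The text supplies its own labels."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    return followers.most_common(1)[0][0] if followers else None

# Hypothetical unlabeled text.
CORPUS = [
    "the model learns from data",
    "the model predicts the next word",
    "the data improves the model",
]
NEXT_WORD_COUNTS = build_next_word_counts(CORPUS)
```

Here `predict_next(NEXT_WORD_COUNTS, "the")` returns "model" because "model" follows "the" three times in the corpus, more often than any other word.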

This is why some sources list generative AI as a type of machine learning while others treat it as an application family. Technically, deep learning and self-supervised learning techniques create this application layer rather than a fundamentally different learning paradigm.

Examples of generative AI include text generation through large language models, image generation through diffusion models, and code generation through specialized programming assistants.

Deep learning and neural networks

Deep learning uses layered neural networks to learn complex patterns in large data sets. While traditional machine learning algorithms work well for structured data with clear features, deep learning excels at unstructured data like images, audio, and text.

Neural networks are loosely modeled on biological brains. They consist of layers of interconnected nodes, each of which combines its inputs using learned weights and passes the result on to the next layer. The "deep" in deep learning refers to networks with many layers, which allows them to learn increasingly abstract representations of data.
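To show what "layers of interconnected nodes" means mechanically, here is a tiny two-layer network. One loud caveat: the weights below are set by hand for illustration, whereas a real network would learn them during training. With these particular weights the network computes XOR, a pattern a single layer cannot represent.

```python
def relu(value):
    """Common activation function: pass positives through, clamp negatives to zero."""
    return max(0.0, value)

def dense_layer(inputs, weights, biases, activation):
    """Each node takes a weighted sum of every input, adds a bias, then activates."""
    return [
        activation(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]

# Hand-picked (not learned) weights, chosen so the network computes XOR.
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]

def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = dense_layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    return dense_layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, lambda v: v)[0]
```

Calling `forward([1, 0])` or `forward([0, 1])` returns 1.0, while `forward([0, 0])` and `forward([1, 1])` return 0.0. The hidden layer builds intermediate signals that the output layer then combines, which is the small-scale version of "increasingly abstract representations."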

How deep learning differs from machine learning

The key distinction between traditional machine learning and deep learning comes down to feature engineering versus representation learning.

In traditional machine learning, humans must identify and extract relevant features from data before feeding it to an algorithm. For example, to classify images of cats and dogs, you might manually define features like ear shape, fur texture, or face proportions.

Deep learning automatically learns which features matter from raw data. You can feed it raw pixels, and the network discovers on its own that edges, shapes, and textures are useful for classification. And honestly, that's where things get interesting, because you're no longer limited by what a human thought to measure.

However, deep learning is not always the right choice. It requires large amounts of data and significant computing resources. For smaller datasets, interpretability requirements, or latency-sensitive applications, classical machine learning methods often perform more effectively and are easier to explain.

Deep learning forms the foundation for generative AI and large language models, which is why these technologies have advanced so rapidly as computing power and data availability have increased.

Common types of neural networks

Engineers design different neural network architectures for different types of problems.

Convolutional Neural Networks (CNNs) work best with image and video data. They use filters that scan across images to detect patterns like edges, textures, and shapes. CNNs power applications like facial recognition, medical image analysis, and self-driving car vision systems.
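The "filters that scan across images" idea looks like this in miniature. The 3x4 image and the edge filter are hand-made for illustration; in a trained CNN the filter values are learned. As in most deep learning libraries, the operation shown is technically cross-correlation (the filter is not flipped).

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and record the weighted sum at each position."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    return [
        [
            sum(
                image[row + i][col + j] * kernel[i][j]
                for i in range(kernel_h)
                for j in range(kernel_w)
            )
            for col in range(out_w)
        ]
        for row in range(out_h)
    ]

# Hypothetical grayscale image: dark (0) on the left, bright (1) on the right.
IMAGE = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-made filter that responds where brightness increases from left to right.
VERTICAL_EDGE = [
    [-1, 1],
    [-1, 1],
]
FEATURE_MAP = convolve2d(IMAGE, VERTICAL_EDGE)  # [[0, 2, 0], [0, 2, 0]]
```

The output is zero wherever the image is flat and peaks exactly where the dark region meets the bright one, so the filter acts as a small edge detector.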

Engineers design Recurrent Neural Networks (RNNs) for sequential data where order matters, like text or time series. They maintain a form of memory that allows them to consider previous inputs when processing current ones. Teams use RNNs in speech recognition and language translation.

Transformers are a newer architecture that has revolutionized natural language processing. Unlike RNNs, transformers can process entire sequences in parallel and use attention mechanisms to focus on relevant parts of the input. Large language models, such as the Generative Pre-trained Transformer (GPT) family, are built on the transformer architecture.
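The attention mechanism can be sketched in plain Python. This is a minimal version of scaled dot-product attention only; production transformers add learned projections, multiple attention heads, and much more. The query, key, and value vectors below are made up.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: weight each value by query-key similarity."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for query in queries:
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * value[d] for w, value in zip(weights, values))
            for d in range(len(values[0]))
        ])
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical vectors: the query points in the same direction as the first key.
QUERIES = [[5.0, 0.0]]
KEYS = [[1.0, 0.0], [0.0, 1.0]]
VALUES = [[10.0, 0.0], [0.0, 10.0]]
OUTPUTS, WEIGHTS = attention(QUERIES, KEYS, VALUES)
```

Because the query lines up with the first key, about 97 percent of the attention weight lands on the first value. Each query's computation is independent of the others, which is what makes it possible to process a whole sequence in parallel.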

Benefits and challenges of machine learning

Machine learning offers significant advantages for organizations willing to invest in it, but it also comes with challenges that require careful management.

The benefits of machine learning include:

- Automation of repetitive decisions that would otherwise require human review

- Pattern recognition at scales impossible for humans to match

- Improved accuracy over time as models learn from new data

- Cost reduction through efficiency gains and error prevention

- Ability to personalize experiences for millions of customers simultaneously

The challenges of machine learning require equal attention:

- Data quality requirements: ML is only as good as the data behind it. Poor data leads to poor predictions. Organizations often underestimate the cost and effort of acquiring clean, labeled datasets.

- Labeling costs: Supervised learning requires labeled examples, and creating accurate labels at scale is expensive and time-consuming. A model trained to detect defects needs thousands of images labeled by experts.

- Data leakage: When training data contains information that would not be available at prediction time, models produce misleadingly optimistic results during testing but fail in production. For example, using future sales data to predict past demand creates leakage. This is one of the most common reasons models that look great in development fall apart when deployed.

- Model drift: A model's accuracy degrades as conditions change after deployment. A fraud detection model trained on 2023 patterns may miss new fraud techniques that emerge in 2024. Regular monitoring and retraining are essential.

- Bias and representativeness: When training data does not reflect the population the model will serve, predictions can be systematically unfair or inaccurate for underrepresented groups.

- Interpretability: Complex models, especially deep learning, can be difficult to explain. In regulated industries or high-stakes decisions, the inability to explain why a model made a particular prediction can be a dealbreaker.

- Governance and security: Teams need to control access to model outputs, maintain audit trails, and support human-in-the-loop review for high-risk decisions so experimentation does not turn into compliance headaches.
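Of the challenges above, model drift is one of the easier ones to monitor in code. The sketch below assumes you can eventually compare each prediction with what really happened. The baseline accuracy, the monthly windows, and the 10-point alert threshold are all hypothetical.

```python
def accuracy(pairs):
    """Share of (prediction, actual) pairs that match."""
    return sum(prediction == actual for prediction, actual in pairs) / len(pairs)

def drift_alerts(baseline_accuracy, windows, max_drop=0.10):
    """Flag any time window whose accuracy fell more than max_drop below baseline."""
    alerts = []
    for name, pairs in windows:
        window_accuracy = accuracy(pairs)
        if baseline_accuracy - window_accuracy > max_drop:
            alerts.append((name, window_accuracy))
    return alerts

# Hypothetical production log: (prediction, actual outcome) pairs grouped by month.
MONTHLY_RESULTS = [
    ("2024-01", [(1, 1)] * 9 + [(1, 0)] * 1),  # 9 of 10 correct
    ("2024-02", [(1, 1)] * 7 + [(1, 0)] * 3),  # 7 of 10 correct
]
# Assumed accuracy measured on held-out data at deployment time.
ALERTS = drift_alerts(baseline_accuracy=0.92, windows=MONTHLY_RESULTS)
```

January stays within tolerance, while February's drop to 70 percent triggers an alert. In practice that alert would be the cue to investigate what changed and consider retraining.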

How businesses use machine learning

Machine learning can provide insight into complex business problems, enable predictive analytics for more informed decision-making, and automate business processes. Understanding how data drives business is essential for any organization across industries. Plus, the ability to automate processes and decisions can improve outputs and increase value. At a high level, businesses use machine learning by finding patterns and applying those patterns to make decisions and future predictions.

Individuals interact with businesses that rely on machine learning every day, even though they may not realize it. A major example is any service or system that provides recommendations. Streaming and entertainment services, music services, search engines, social media, and voice assistants are all powered by machine learning. These platforms collect data about each individual based on behaviors and content interactions. Through machine learning, they can then make recommendations based on a data-informed guess about what you might like to consume next.

There are other common examples of machine learning organized by what data goes in and what the model returns:

- Spam email filters: email content and metadata in, spam or not spam classification out

- Financial fraud detection: transaction data in, fraud risk score out

- Dictation software: audio signals in, transcribed text out

- Self-driving vehicles: camera and sensor data in, steering and braking decisions out

- Personal Internet of Things (IoT) health devices: biometric readings in, health insights and alerts out

- Mapping applications: location and traffic data in, estimated arrival times out

- Ride-sharing pricing: demand signals and driver availability in, dynamic pricing out

- Smart email replies: message content in, suggested responses out

- Mobile check deposits: check images in, verified deposit amounts out

- Plagiarism detectors: document text in, similarity scores out

- Customer service chatbots: customer questions in, relevant answers out

- Automated stock trading: market data in, buy and sell decisions out

From model output to business action

A lot of teams nail the model and still get stuck at the finish line. The prediction exists... but it lives in a notebook, a batch job, or a dashboard that nobody checks at 4:30 on a Friday.

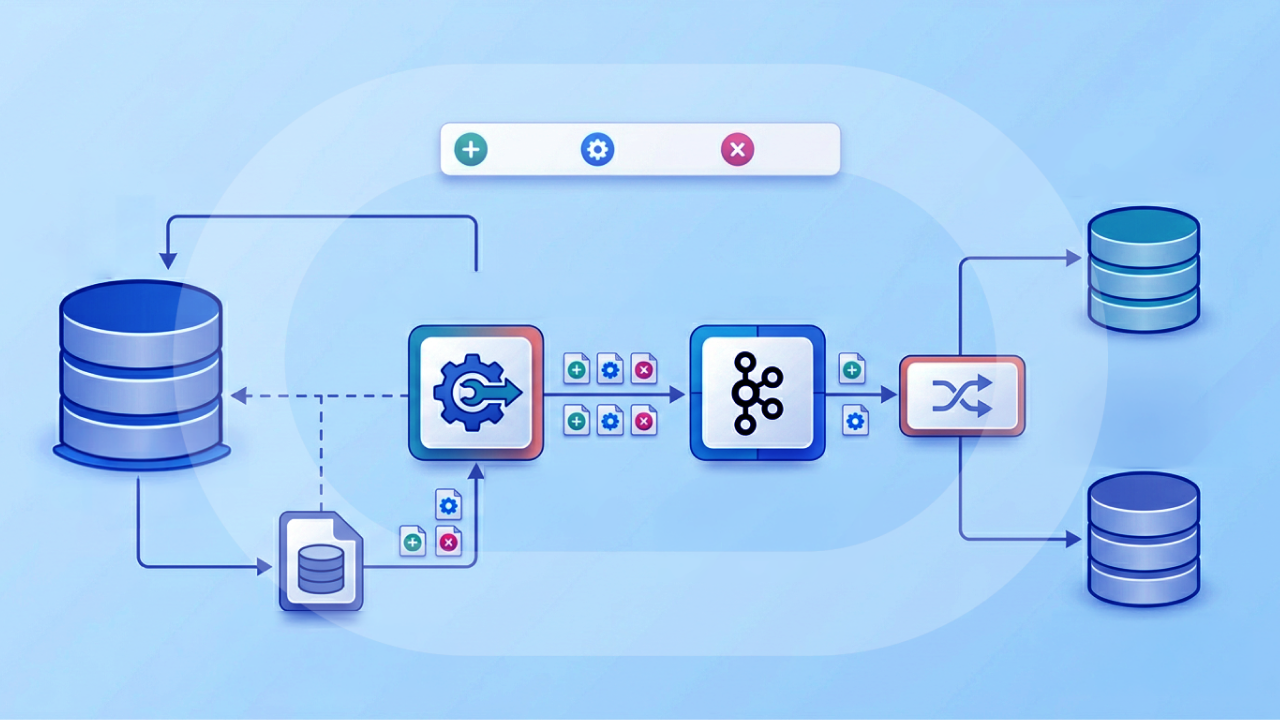

That's why many organizations treat machine learning as a full pipeline problem:

- Data preparation and governance so the model trains and runs on trusted data

- Model execution that fits into existing extract, transform, load (ETL) and extract, load, transform (ELT) pipelines and schedules

- Orchestration that routes high-impact scores into the next step of work, with human-in-the-loop review when it matters

In Domo, this is where Magic Transform (Magic ETL) and Agent Catalyst can work together: Magic Transform can run machine learning actions (like classification and forecasting) or execute bring-your-own-model predictions inside transformation flows, and Agent Catalyst can route those outputs into Domo Workflows so teams can act on them through alerts, approvals, and automated steps.
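Independent of any particular product, the routing step itself can be sketched generically. The snippet below is plain Python and does not use any Domo API. The thresholds, route names, and audit record fields are assumptions made up for this example.

```python
def route_prediction(record_id, score, review_threshold=0.90, alert_threshold=0.60):
    """Decide the next step for a model score and return an audit-friendly record."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= review_threshold:
        route = "human_review"  # high-impact: a person approves before action
    elif score >= alert_threshold:
        route = "alert"         # worth a notification, no approval needed
    else:
        route = "log_only"      # keep for the audit trail, no action
    return {"record_id": record_id, "score": score, "route": route}

# Hypothetical fraud scores coming out of a scheduled scoring job.
SCORED_TRANSACTIONS = [("txn-001", 0.97), ("txn-002", 0.72), ("txn-003", 0.10)]
AUDIT_LOG = [route_prediction(txn_id, score) for txn_id, score in SCORED_TRANSACTIONS]
```

Even a rule this simple changes what happens on a Friday afternoon: the highest-risk score lands in someone's review queue instead of waiting on a dashboard, and every decision leaves a record behind.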

How machine learning is used in business forecasting

ML forecasting models learn from historical data like sales records, demand signals, and economic indicators to predict future business outcomes such as revenue, inventory needs, customer churn, and resource requirements. Forecasting is one of the most consistently valuable applications of machine learning in business.

The advantage over traditional forecasting methods is significant. ML handles many variables simultaneously, captures nonlinear relationships that spreadsheet models miss, and adapts as new data arrives. A traditional forecast might use last year's sales plus a growth factor. An ML model can incorporate seasonality, promotional calendars, competitor activity, weather patterns, and dozens of other signals to produce more accurate predictions.

The practical workflow for ML forecasting follows a consistent pattern: define the metric you want to forecast, gather and prepare historical data, train and validate the model against held-out data, deploy the model to production, and retrain as conditions change.
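The validation step in that workflow is worth seeing in code. The sketch below implements the traditional baseline described earlier (same period last year plus a growth factor) and scores it on held-out quarters. It is not an ML model. It is the yardstick an ML forecast should beat on the same held-out data. The sales figures and the 10 percent growth rate are invented.

```python
def seasonal_growth_forecast(history, season_length, growth):
    """Baseline: same period one season ago, scaled by a growth factor."""
    last_season = history[-season_length:]
    return [value * (1 + growth) for value in last_season]

def mean_absolute_error(actual, predicted):
    """Average size of the forecast miss, in the same units as the data."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

# Hypothetical quarterly sales (in $1000s) covering three years.
QUARTERLY_SALES = [100, 120, 90, 150, 110, 132, 99, 165, 121, 145, 109, 182]

# Hold out the final year so the forecast is judged on data it never saw.
TRAIN, HOLDOUT = QUARTERLY_SALES[:8], QUARTERLY_SALES[8:]
FORECAST = seasonal_growth_forecast(TRAIN, season_length=4, growth=0.10)
BASELINE_ERROR = mean_absolute_error(HOLDOUT, FORECAST)
```

On this tidy made-up series the baseline misses by only about 0.2 (roughly $200 per quarter), so an ML model would add little. On real data with promotions, weather, and competitor effects, comparing both approaches on the same holdout tells you whether the extra complexity is paying for itself.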

Common forecasting applications include demand planning for inventory management, revenue projection for financial planning, churn prediction for customer retention, and resource allocation for workforce planning.

AutoML tools have made forecasting more accessible to teams without dedicated data science resources.

What to look for in a machine learning platform

More and more businesses are recognizing the importance of machine learning in moving forward in their analytics capabilities. But many organizations don't have either the data science expertise or the personnel to quickly create machine learning models for their data. As you select a data platform to fuel your machine learning, a solution to these challenges should be top of mind.

When evaluating ML platforms, consider these capabilities:

- Connect your data from any cloud, on-premises, or proprietary system without being locked into a single vendor's ecosystem

- Prepare and cleanse datasets so they are ready for machine learning training

- Allow you to choose the best-fitting model for your data by delivering model options for your review

- Support provider-agnostic models, including the ability to bring externally trained models into the platform

- Build machine learning models into your data pipelines so new data will use your selected model

- Write predictive data back to your source systems

- Enable you to share data insights with your organization through data visualization tools

- Employ personalized data permissions and role-based access to model outputs for data governance

- Provide audit trails for model outputs and predictions

- Support orchestration that routes model outputs into governed workflows, including human-in-the-loop review for sensitive decisions

- Offer multi-model flexibility so teams can work with models from providers like Databricks ML/AI, Amazon SageMaker, Google Vertex AI, Gemini, open-source options (including Hugging Face), and custom models in the same program

Many platforms constrain ML capabilities to their own ecosystems or a single cloud provider. This limits flexibility as your ML needs evolve. Look for platforms that let you deploy models trained elsewhere and control who sees model outputs and how they are used.

If you're trying to reduce tool sprawl, it helps to think in stages. The Domo Platform frames this as a connected journey: unify data, establish context, apply intelligence, coordinate action, and observe outcomes. That last step matters more than you'd think.

Getting started with automated machine learning

Domo's automated machine learning (AutoML) capabilities help organizations augment analytics with machine learning insights. They make AI and machine learning accessible to everyone, data novices and data scientists alike. By employing deep integration with Amazon SageMaker Autopilot, Domo enables teams to determine the best machine learning models for their data and share insights in hours instead of weeks or months.

The most effective AutoML implementations rely on clean, governed data, which is why the data preparation layer matters as much as the model selection layer.

Domo's Magic Transform supports embedded ML model execution, including bring-your-own-model capabilities, Hugging Face integrations, and Python/R scripting, directly within ETL pipeline flows. Teams can apply model outputs to their data at the transformation stage before it reaches dashboards or downstream systems, rather than treating ML as a separate post-processing step.

Magic Transform also includes native actions for common ML tasks like classification and forecasting, and it can execute externally hosted models inside pipeline flows. For teams training in Databricks ML/AI, that "train there, run here" pattern can reduce handoffs when you need predictions to show up in the same governed data pipeline your business already depends on.

Turning predictions into workflows with Agent Catalyst

AutoML is a great start. But most teams eventually ask the same question: what happens after the model scores something?

Agent Catalyst is designed for that moment. It can take model outputs (from Domo, third-party providers, or custom models) and route them into Domo Workflows orchestration so people can review, approve, escalate, or automate the next step. It also includes pre-built AI Agent Templates for ML-adjacent use cases like risk and fraud analysis, waste pattern detection, and retail promotion effectiveness.

For data teams, Agent Catalyst can also connect agents and workflows directly to governed datasets, FileSets, and unstructured documents using retrieval-augmented generation (RAG). RAG is a method where an AI system retrieves relevant source material and uses it as context when generating an answer or taking an action, which helps keep responses grounded in what your organization has approved.

For organizations just beginning their ML journey, the practical starting point is your data pipeline. Not a separate ML environment. Start with a clear business question, ensure your data is clean and accessible, and use AutoML to test which approaches work best for your specific problem.

The future of machine learning in 2026

As the need for data continues to increase, data scientists who understand machine learning will be more in demand than ever. Organizations will adopt tools that democratize machine learning and make it easy to identify business questions and use data to answer them.

Several specific trends are shaping ML in 2026:

Responsible AI and model governance are becoming first-class requirements rather than afterthoughts. Regulated industries and high-stakes decisions require the ability to explain why a model made a particular prediction. Organizations are implementing model cards, feature importance tracking, and audit trails as standard practice.

Explainability is moving from nice-to-have to essential. Stakeholders want to understand not just what a model predicts but why. Techniques like Shapley Additive Explanations (SHAP) values and feature importance scores are becoming standard parts of ML workflows, not specialized tools for data scientists.
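To see what a Shapley-style explanation actually computes, here is an exact calculation for a tiny model. It is a from-scratch sketch, not the `shap` library, and it only works for a handful of features because it tries every ordering. The risk model, the transaction, and the all-zeros baseline are hypothetical.

```python
from itertools import permutations

def shapley_values(predict, instance, baseline):
    """Average each feature's marginal contribution over every feature ordering."""
    features = list(instance)
    totals = {name: 0.0 for name in features}
    orderings = list(permutations(features))
    for ordering in orderings:
        current = dict(baseline)  # start from the reference input
        previous_output = predict(current)
        for name in ordering:
            current[name] = instance[name]  # switch this feature to its real value
            output = predict(current)
            totals[name] += output - previous_output
            previous_output = output
    return {name: total / len(orderings) for name, total in totals.items()}

def risk_model(x):
    """Hypothetical risk score with an interaction between amount and new_device."""
    return 0.5 * x["amount"] + 2.0 * x["new_device"] + 1.0 * x["amount"] * x["new_device"]

TRANSACTION = {"amount": 4, "new_device": 1}
BASELINE = {"amount": 0, "new_device": 0}
CONTRIBUTIONS = shapley_values(risk_model, TRANSACTION, BASELINE)
```

The model scores this transaction 8 points above the baseline, and the calculation attributes 4 points to each feature, splitting the interaction effect evenly. The contributions always add up to the gap between the prediction and the baseline, which is what makes this style of explanation easy to present to stakeholders.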

Operationalized ML is replacing experimental ML. The focus is shifting from building models to maintaining them in production, including monitoring for drift, managing retraining schedules, and ensuring consistent performance over time.

Data integration combined with domain knowledge tools will create even more opportunities to automate business processes and put machine learning into action. The organizations that succeed will be those that treat ML governance with the same rigor they apply to data governance.

A practical signal to watch: multi-model programs are becoming the norm. Teams might train in one place (like Databricks ML/AI), deploy through an ML service (like Amazon SageMaker or Google Vertex AI), and then need a governed layer that can share outputs in BI and coordinate workflows. That's where "machine learning without the deployment bottleneck" starts to feel less like a slogan and more like sanity.