What Is a Key Performance Indicator (KPI)? Definition, Types, Examples, and Best Practices


A KPI is only valuable when people trust it and can see it when it matters. This guide explains what a KPI is (and isn't), walks through common KPI types and how to set them, and shares examples and best practices for building measurement programs that create real accountability. You'll learn how to move from tracking numbers to driving action.

Key takeaways

Here are the key takeaways from this guide:

- A KPI is a measurable value tied directly to a business objective, not just any metric you track

- Effective KPIs follow specific, measurable, achievable, relevant, and time-bound (SMART) criteria and pair lagging outcomes with leading indicators you can influence

- The best KPI programs keep indicators few and focused, with clear ownership, targets, and review cadences

- KPI dashboards turn measurement into action by making performance visible, trusted, and easy to act on

What is a KPI?

A KPI is a measurable value tied to an outcome that's important to your company. Not "every number you track." KPIs focus attention on the few indicators that best represent progress toward a goal like growth, efficiency, customer experience, or risk reduction.

Think in a simple chain:

- Objective: the outcome you want, like "improve customer retention."

- KPI: how you'll measure progress (e.g., churn rate).

- Target: what "on track" looks like; for example, "churn ≤ 3 percent monthly."

- Initiatives: what you'll do to influence the KPI, such as onboarding improvements or support coverage changes.

KPIs can be financial (profit margin), non-financial (customer satisfaction), or even "intangible" but measurable (patents filed). The direct connection to business objectives and decision-making is what separates them from everything else.

KPI vs metric vs measure

These terms get mixed together constantly. Knowing the differences helps you avoid KPI overload.

The following table breaks down how each term differs and when to use it:

In practice, while you might monitor dozens of metrics, only a small number should be KPIs. If a number wouldn't change what you do next, it probably isn't "key."

Here's a helpful gut check: If leadership would be concerned (or excited) enough to ask "why?" when the number moves, it's a KPI candidate. If the number is mostly used for curiosity or background, keep it as a supporting metric.

OKRs (Objectives and Key Results) are a related but distinct framework. Where KPIs measure ongoing performance against a target, OKRs set ambitious objectives with measurable results for a specific time period. Many organizations use both: OKRs for goal-setting and KPIs for continuous performance monitoring. Treating OKR key results as KPIs or vice versa is a mistake I see constantly. They serve different purposes, and conflating them dilutes the value of both.

Here's how the same business goal might be expressed across all four terms:

- Measure: 847 customers canceled last month

- Metric: Churn increased 15 percent compared to the prior month

- KPI: Monthly churn rate of 4.2 percent (target: ≤ 3 percent)

- OKR: Objective: Become the retention leader in our category; Key Result: Reduce monthly churn from 4.2 percent to 2.5 percent by Q3
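To make the arithmetic behind the KPI line concrete, here's a minimal Python sketch. The starting customer count (20,167) is a hypothetical figure chosen so that 847 cancellations works out to roughly 4.2 percent; the guide doesn't state the real customer base, and `churn_rate` is an illustrative helper rather than part of any tool.

```python
def churn_rate(customers_at_start: int, customers_lost: int) -> float:
    """Share of the starting customer base that canceled in the period."""
    if customers_at_start <= 0:
        raise ValueError("customers_at_start must be positive")
    return customers_lost / customers_at_start


START_CUSTOMERS = 20_167   # hypothetical base, not from the guide
CANCELS = 847              # the raw measure from the example
TARGET = 0.03              # KPI target: churn <= 3 percent monthly

rate = churn_rate(START_CUSTOMERS, CANCELS)
on_track = rate <= TARGET

print(f"Monthly churn: {rate:.1%} (target <= {TARGET:.0%}), on track: {on_track}")
```

The raw count is the measure, the ratio is the metric, and the ratio compared against a target is what makes it a KPI.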

Why KPIs matter

KPIs are a key component of a company's business intelligence strategy and are the "metrics that matter most." Used well, they become the steering system for the business, showing what's working, what's off-track, and where to focus next.

A KPI program also has a very human job: help executives, managers, analysts, and frontline teams stop debating the number and start debating what to do about it. That only happens when you get to one version of the truth across departments.

Alignment with business goals

KPIs translate strategy into shared, measurable outcomes. They clarify what the organization is optimizing for and help teams align their day-to-day work with those priorities. Visible. Measurable. Actionable. When goals have all three qualities, coordinating cross-functional work gets a lot easier.

Clarity, focus, and accountability

Expectations become visible. When teams know what matters and what "good" looks like, it's easier to focus effort and assign ownership for outcomes. Clear KPIs also reduce "priority drift," where teams over-index on urgent tasks that don't move the business forward.

Better decisions

KPIs inform strategic, tactical, operational, and financial decisions, and those decisions get sharper when the underlying KPI data is trusted and visible.

This is where many organizations get stuck: fragmented systems can produce conflicting KPI values across departments, which erodes confidence and slows decision-making. Tight definitions, governed access, and consistent calculation logic are what keep KPI conversations productive.

Benchmarking and context

KPIs help organizations benchmark performance against industry standards or competitors. Benchmarks aren't the goal, but they add context for target-setting and can highlight where your business is outperforming (or falling behind) relative to peers.

Benchmarking also applies internally. If you can't compare a KPI across regions, teams, or business units because the definitions differ, you end up with blind spots and late course corrections.

Types and categories of KPIs

There's no single "right" taxonomy. The best KPI program uses a few simple categories so teams know how to interpret (and act on) each indicator.

Strategic vs operational vs functional KPIs

Strategic KPIs reflect company-wide outcomes, typically quarterly or annually:

- Revenue growth rate

- Net revenue retention

- Customer churn

- Profit margin

- Cash flow from operations

Operational KPIs track performance of specific processes on shorter horizons (daily/weekly/monthly):

- On-time delivery

- Support response time

- Defect rate

- Production throughput

- Website conversion rate (weekly)

Functional (departmental) KPIs are owned by teams like sales, marketing, finance, or HR and should ladder up to strategic goals:

- Sales win rate

- Marketing sourced pipeline

- Days sales outstanding (DSO)

- Employee retention

- Time to hire

A practical rule: if you can't explain how a KPI supports strategy, it's likely just a metric.

Leading vs lagging indicators

Lagging KPIs measure results after the fact (revenue, profit, churn).

Leading KPIs are drivers that tend to predict outcomes (pipeline coverage, adoption rate, preventive maintenance completion).

To determine whether a KPI is leading or lagging, ask: Does this measure an outcome that has already happened, or does it measure an activity or condition that influences future outcomes? If you can act on it before the result is locked in, it is likely a leading indicator.

Strong KPI sets pair one lagging "scoreboard" KPI with a few leading "levers" teams can influence earlier. Here's how the same business objective might be expressed as both:

- Objective: Improve customer retention

- Lagging KPI: Renewal rate (measures the outcome after contracts come up for renewal)

- Leading KPIs: Product adoption milestones, executive sponsor engagement, support resolution time (all influence whether customers renew)

This pairing gives teams both a scoreboard to track results and levers to pull before outcomes are determined.

Input, process, output, and outcome KPIs

This framework helps teams avoid focusing only on outcomes (which can be slow to change):

- Inputs: resources invested (spend, headcount, capacity)

- Processes: how work is done (cycle time, service-level agreement (SLA) adherence)

- Outputs: what gets produced (tickets resolved, units shipped)

- Outcomes: business results (customer satisfaction score (CSAT), retention, margin)

If you only track outcomes, you might not see problems until it's too late.

Common KPI categories

At a high level, KPIs often fall into categories like financial, marketing, customer-focused, operations, and pipeline metrics. You can also organize by audience (executive vs frontline), by business unit (regional KPIs), or by business model (software as a service (SaaS), retail, manufacturing, services).

How to develop and set KPIs

Well-designed KPIs come from strategy first, then data. Not the other way around.

Start with objectives and success questions

Define three to seven strategic objectives for the next 6 to 18 months, then convert each into a measurable question:

- "Are we growing efficiently?"

- "Are customers adopting and staying?"

- "Are we delivering with consistent quality?"

- "Are we managing cash well enough to fund priorities?"

This prevents "KPI shopping" based on what's easiest to measure.

Select meaningful KPIs

A KPI should be:

- Specific

- Measurable

- Achievable

- Relevant

- Time-bound

Domo recommends KPIs follow these SMART guidelines to align with overall strategy.

Practical selection filters help you evaluate candidates:

- Decision trigger: Will this KPI drive a decision or action?

- Controllability: Can a team influence it through real levers?

- Signal strength: Does it reliably indicate progress toward the objective?

- Stability: Is it resistant to "gaming" and one-off noise?

Write a clear KPI definition

KPI confusion kills trust. For each KPI, document a complete specification that includes:

- Name and purpose

- Formula (exact calculation with numerator and denominator rules)

- Data sources (systems of record with a freshness service-level agreement, or SLA)

- Segmenting rules (region, product, channel, customer type)

- Inclusions/exclusions (edge cases and business rules)

- Owner (definition owner and performance owner)

- Approval workflow (who must approve definition changes)

- Audit cadence (how often the definition is reviewed for accuracy)

- Semantic layer or metric store reference (where the KPI is defined once and reused across dashboards)

This prevents "same KPI, different number" debates and keeps reporting consistent across teams. When definitions change, document the change, the reason, and the effective date so historical comparisons remain valid. And honestly, teams consistently underestimate how quickly undocumented definition changes create confusion. What seems like a minor tweak can produce months of misaligned reporting before anyone notices.
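One way to keep a KPI specification honest is to store it as structured data instead of a slide. The sketch below is only an illustration: the `KpiSpec` and `DefinitionChange` classes, their field names, and every example value are hypothetical and don't correspond to any particular product's schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class DefinitionChange:
    effective_date: date
    description: str
    reason: str


@dataclass
class KpiSpec:
    name: str
    purpose: str
    formula: str
    data_sources: List[str]
    freshness_sla_hours: int
    segments: List[str]
    exclusions: List[str]
    definition_owner: str
    performance_owner: str
    approvers: List[str]
    audit_cadence: str
    metric_store_ref: str
    change_log: List[DefinitionChange] = field(default_factory=list)

    def record_change(self, change: DefinitionChange) -> None:
        """Append a change and keep the log ordered by effective date."""
        self.change_log.append(change)
        self.change_log.sort(key=lambda c: c.effective_date)

    def definition_as_of(self, day: date) -> Optional[DefinitionChange]:
        """Latest change in effect on `day`, or None if none applies yet."""
        applicable = [c for c in self.change_log if c.effective_date <= day]
        return applicable[-1] if applicable else None


# All values below are made up for illustration.
spec = KpiSpec(
    name="Monthly churn rate",
    purpose="Track customer retention",
    formula="customers canceled in month / customers at start of month",
    data_sources=["billing_system"],
    freshness_sla_hours=24,
    segments=["region", "product"],
    exclusions=["trial accounts"],
    definition_owner="analytics lead",
    performance_owner="VP customer success",
    approvers=["finance", "customer success"],
    audit_cadence="quarterly",
    metric_store_ref="metrics/churn_rate_monthly",
)
spec.record_change(
    DefinitionChange(date(2024, 4, 1), "Exclude trial accounts", "Trials inflated churn")
)
```

Because each change carries an effective date, anyone comparing historical periods can check which definition was in force at the time.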

Set targets, thresholds, and cadence

Targets make KPIs actionable. A target without a method is just a guess, so use a baseline-to-target approach:

- Establish a baseline using 8 to 12 weeks of historical data

- Understand variance by calculating the range or standard deviation of recent performance

- Set three target bands: threshold (minimum acceptable), goal (desired outcome), and stretch (aspirational)

- Define action triggers that specify what happens when performance crosses a threshold

For each KPI, document:

- Unit ($, percent, count, time, score)

- Timeframe (weekly/monthly/quarterly)

- Baseline (current performance)

- Thresholds (green/yellow/red)

Here's a worked example: A logistics team wants to improve on-time delivery. Their baseline over the past 12 weeks is 92 percent, with a range of 88 to 94 percent. They set their threshold at 90 percent (below this triggers escalation), their goal at 96 percent, and their stretch at 98 percent. The action trigger states: "If on-time delivery drops below 90 percent for two consecutive weeks, review carrier performance, warehouse staffing, and top delay codes; propose corrective actions within five business days."
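The worked example translates into a few lines of Python. This is a sketch under stated assumptions: the twelve weekly values are hypothetical numbers picked to match the 92 percent baseline and the 88 to 94 percent range, and mapping the bands to red/yellow/green (below threshold, below goal, at or above goal) is one reasonable reading rather than a fixed rule.

```python
from statistics import mean, pstdev

# Hypothetical weekly on-time delivery (percent), chosen to average 92
# with a range of 88 to 94, as in the worked example.
weekly = [92, 93, 91, 94, 92, 88, 93, 92, 94, 91, 92, 92]

THRESHOLD, GOAL, STRETCH = 90, 96, 98

baseline = mean(weekly)
low, high = min(weekly), max(weekly)
spread = pstdev(weekly)  # population standard deviation of the baseline window


def classify(value: float, threshold: float = THRESHOLD, goal: float = GOAL) -> str:
    """Assumed color mapping: red below threshold, yellow below goal, else green."""
    if value < threshold:
        return "red"
    if value < goal:
        return "yellow"
    return "green"


def escalation_needed(values, threshold: float = THRESHOLD, consecutive: int = 2) -> bool:
    """True when the most recent `consecutive` periods are all below threshold."""
    recent = values[-consecutive:]
    return len(recent) == consecutive and all(v < threshold for v in recent)


print(f"baseline={baseline}, range={low}-{high}, stdev={spread:.2f}")
print("current status:", classify(weekly[-1]))
print("escalate:", escalation_needed(weekly))
```

Checking for consecutive breaches, rather than a single bad week, keeps one-off noise from triggering the playbook.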

If benchmarks exist, use them as input, but tailor targets to your strategy, constraints, and maturity.

Assign owners and build the review rhythm

A KPI without an owner is just a number. Assign a responsible owner who can validate definitions, monitor performance, and coordinate follow-through.

Domo also notes KPIs should be communicated broadly and reviewed regularly so course corrections happen early.

Think of your review rhythm as a KPI operating system. Each review should answer four questions:

- What changed since the last review?

- Why did it change?

- What are we doing next (owner and timeline)?

- What decisions or support are needed?

Document decisions and action items from each review so there's a clear record of how the organization responded to KPI movements.

A typical cadence looks like this:

- Daily or weekly standups: operational KPIs and exceptions

- Weekly business review: execution drivers and near-term decisions

- Monthly/quarterly reviews: strategic outcomes and resource shifts

How to measure and monitor KPIs

Connect to reliable data sources

KPIs commonly pull from customer relationship management (CRM) systems, enterprise resource planning (ERP) and finance platforms, marketing platforms, support systems, product analytics, and HR systems. The goal is to connect KPIs to systems of record so the organization trusts the numbers and doesn't waste meeting time debating definitions.

In practice, that often means connecting sources like Salesforce for CRM, Oracle NetSuite for financial and operational ERP data, and Workday for HR, plus cloud data platforms like Google BigQuery or a Databricks Lakehouse where teams keep shared datasets.

KPI reliability depends on the pipeline feeding it, not just the dashboard displaying it. Standardized data sources and certified datasets are the foundation of trustworthy KPI reporting. When different teams pull the same KPI from different systems, conflicting numbers erode confidence and slow down decisions.

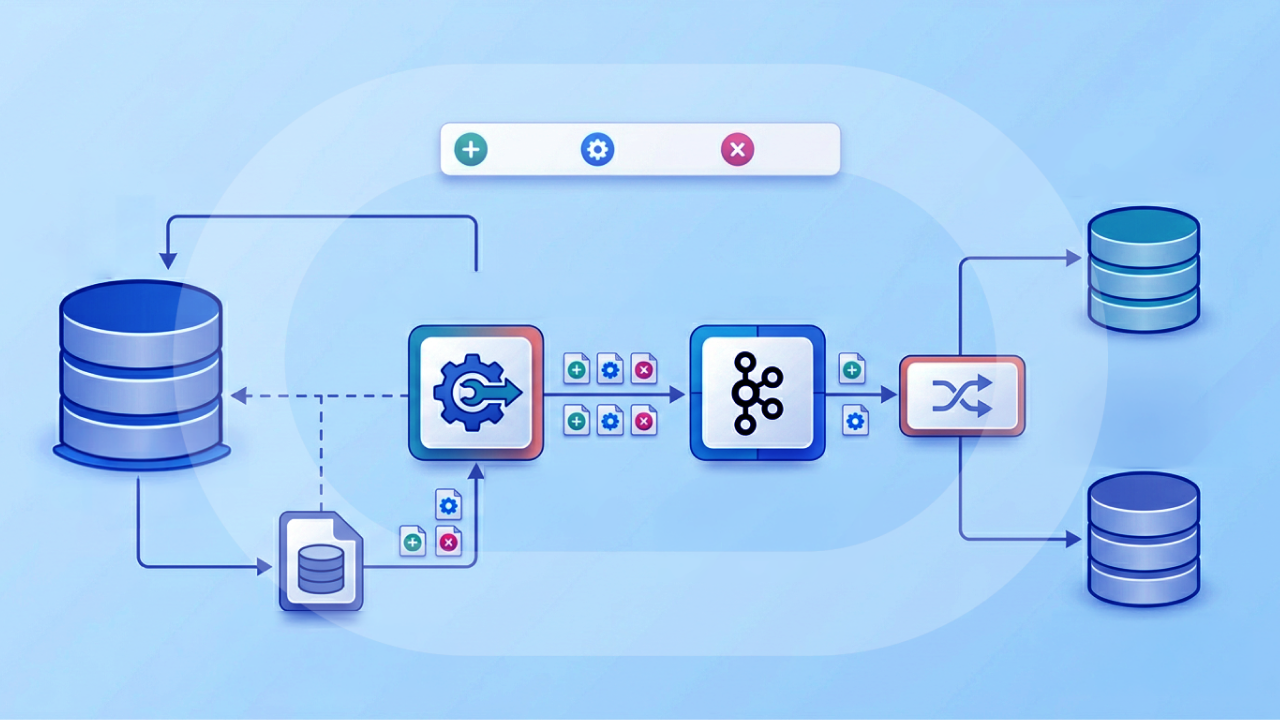

If you're dealing with a lot of disconnected systems, it helps to start with integration first. For example, Domo's Data Integration includes over 1,000 prebuilt connectors so teams can consolidate KPI source data into one governed environment instead of maintaining a patchwork of point-to-point feeds.

Match monitoring frequency to actionability

How quickly can you act? That determines monitoring frequency.

- Daily/weekly: operational KPIs (support, fulfillment, uptime)

- Weekly/monthly: pipeline and execution KPIs

- Monthly/quarterly: strategic outcomes (retention, profitability)

For slow-moving outcomes, add leading KPIs so you don't wait too long to intervene. Also consider rolling windows (for example, 28-day churn) to balance responsiveness with stability.
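A rolling window is simple to compute. The sketch below assumes one common convention: cancellations summed over the window, divided by the customer count at the start of that window. The function name, that convention, and the sample numbers are illustrative assumptions; your own churn definition may differ.

```python
from typing import List, Optional


def rolling_churn(
    daily_cancels: List[int],
    customers_at_day_start: List[int],
    window: int = 28,
) -> List[Optional[float]]:
    """Rolling churn per day; None until a full window of history exists."""
    if len(daily_cancels) != len(customers_at_day_start):
        raise ValueError("inputs must have the same length")
    rates: List[Optional[float]] = []
    window_total = 0
    for i, cancels in enumerate(daily_cancels):
        window_total += cancels
        if i >= window:
            # Drop the day that just fell out of the window.
            window_total -= daily_cancels[i - window]
        if i >= window - 1:
            base = customers_at_day_start[i - window + 1]
            rates.append(window_total / base)
        else:
            rates.append(None)
    return rates


# Tiny illustration with a 3-day window so the numbers are easy to check.
rates = rolling_churn([1, 2, 3, 4], [100, 99, 97, 94], window=3)
print(rates)
```

A longer window smooths out daily noise at the cost of reacting more slowly, which is the responsiveness-versus-stability tradeoff in practice.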

Use dashboards and alerts to operationalize KPIs

A KPI dashboard is a centralized visual display of your organization's most critical indicators, pulling data from multiple sources and turning it into interactive visualizations.

Dashboards work best when they're role-based and actionable: targets, thresholds, owners, and drill-down for diagnosis. Effective dashboards include role-specific views (not one-size-fits-all), threshold-based alerts that notify owners when a KPI breaches a defined band, and natural language query tools that allow non-technical people to ask questions about KPI data directly.

Alerts can notify owners when performance dips below target so teams don't have to "babysit" dashboards. Self-service access reduces dependence on analysts for ad hoc KPI lookups and helps teams make faster decisions.

If you want KPIs to drive real work, not just meetings, connect dashboards to actions. That can look like embedded workflows in role-specific apps (so a sales leader can follow up when quota attainment drops) or pushing KPI signals back into operational systems through reverse extract, transform, and load (ETL), like sending an "at risk" flag into Salesforce or a staffing signal into Workday.

Choose visualization formats that fit the decision

Different decisions call for different visual formats:

- KPI card: current value, variance to target, and directional change

- Trend line: whether performance is improving over time

- Bars: compare regions/teams/products

- Funnel: diagnose conversion by stage

- Tables with conditional formatting: quickly spot exceptions

More charts is not the goal. Faster understanding is: what changed, where, and what to do next.

KPI data quality and governance checklist

KPI tracking is only as reliable as the data feeding it. Before trusting a KPI for decisions, verify that the underlying data meets quality and governance standards.

Use this checklist to ensure your KPIs are built on a solid foundation:

- Designate a certified data source for each KPI and document it in the KPI spec

- Define data freshness SLAs (e.g., "sales data refreshed daily by 8 am") and monitor for compliance

- Set up pipeline health alerts that notify owners when data feeds are stale, broken, or missing

- Document inclusions and exclusions in the KPI definition to prevent calculation drift over time

- Schedule periodic quality assurance (QA) audits (monthly or quarterly) to catch data quality issues before they reach decision-makers

- Log all changes to KPI definitions, data sources, or calculation rules with effective dates

- Maintain data lineage audit trails so teams can trace a KPI from dashboard back to the source fields and transformations

- Monitor for schema changes (like renamed columns or new values) that can silently break KPI calculations

Decisions made on ungoverned metrics carry hidden risk. A KPI that looks accurate but pulls from an uncertified source or uses an outdated definition can lead teams in the wrong direction without anyone realizing it.
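Two of the checklist items, freshness SLAs and schema-change monitoring, lend themselves to small automated checks. The following is a minimal sketch, not a description of how any platform implements them; the function names, column names, and timestamps are all hypothetical.

```python
from datetime import datetime, timedelta
from typing import Dict, Iterable, List


def is_stale(last_refreshed: datetime, now: datetime, max_age: timedelta) -> bool:
    """True when the feed is older than its freshness SLA allows."""
    return now - last_refreshed > max_age


def schema_drift(expected: Iterable[str], actual: Iterable[str]) -> Dict[str, List[str]]:
    """Columns that disappeared or appeared relative to the documented schema."""
    expected_set, actual_set = set(expected), set(actual)
    return {
        "missing": sorted(expected_set - actual_set),
        "unexpected": sorted(actual_set - expected_set),
    }


# Hypothetical feed state on a Monday morning.
now = datetime(2024, 6, 3, 9, 0)
last_refreshed = datetime(2024, 6, 1, 8, 0)
stale = is_stale(last_refreshed, now, max_age=timedelta(hours=24))

drift = schema_drift(
    expected=["account_id", "close_date", "amount"],
    actual=["account_id", "closed_on", "amount"],  # a renamed column
)

if stale or drift["missing"]:
    print("Hold the KPI refresh and notify the owner:", stale, drift)
```

A renamed column shows up as one missing and one unexpected field, which is exactly the kind of silent break the checklist warns about.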

This is also where standardization pays off. In Domo, teams often use Magic Transformation (Magic ETL) to standardize KPI calculation logic with reusable DataFlow templates, certify the datasets that feed executive dashboards, and set freshness alerts so stale KPI data doesn't surprise anyone at 9:00 am on Monday.

KPI dashboards and reporting

Dashboards and reporting are how KPIs become part of the operating rhythm.

What KPI dashboards do

Done well, a KPI dashboard acts as a shared source of truth: it shows current performance, highlights exceptions, and supports fast decisions. Because dashboards are dynamic and actionable, teams can drill into drivers without relying on static reports.

A well-built KPI dashboard eliminates the need to manually reconcile conflicting numbers from different departmental systems. When executives and managers can trust that everyone is looking at the same data, meetings shift from debating numbers to deciding what to do about them.

Dashboard best practices

Effective dashboards follow a few key principles:

- Start from decisions (what must we act on?)

- Keep it scannable (KPI cards + trends)

- Make targets obvious (variance to goal)

- Design for the audience (exec vs operator)

- Enable drill-down (from summary to diagnosis)

- Use consistent definitions and governed data sets

- Add alerts for threshold breaches

Role-based KPI experiences (yes, this matters)

A dashboard isn't "one thing." It's a set of views and workflows different people rely on for different decisions.

Here's what role-based KPI visibility usually looks like when it's working:

- Executives: a unified, cross-functional view (sales, marketing, finance, operations) with always-current numbers and clear accountability

- Line-of-business (LOB) managers: tailored views for their team's goals, plus quick ways to drill into drivers without waiting on an analyst

- Analysts and BI teams: centrally managed KPI definitions that propagate across dashboards (define once, use everywhere), so they spend less time fixing calculated fields and more time explaining what changed

- Frontline teams: simple, role-specific KPI views they can trust, with conversational tools to ask questions in plain language

This is the thinking behind products like Domo Apps, which can deliver role-specific KPI experiences (for a Sales VP, a Finance Director, or a store manager) and include automations that help close the loop between insight and execution.

And sometimes the "audience" isn't even internal. If your product includes performance reporting for customers or partners, embedded analytics (like Domo Embed) can deliver branded KPI dashboards externally, complete with user-defined alerts and custom KPI logic.

Reporting rhythm

For weekly/monthly reviews, keep it simple:

- What changed?

- Why did it change?

- What are we doing next (owner + timeline)?

- What decisions or support are needed?

This keeps KPI meetings focused on action and not "spreadsheet archaeology."

If routine KPI reporting eats up analyst time, consider automating distribution for common cadences (weekly business reviews, monthly executive packs) so people get the same governed numbers without a manual scramble each cycle.

KPI examples by function

Use these as starting points, then tailor to your strategy and business model.

Sales KPIs

A sales leader focused on pipeline health might track these KPIs to monitor performance in real time:

- Annual recurring revenue (ARR) / monthly recurring revenue (MRR) growth = outcome KPI for subscription businesses

- Win rate = deals won ÷ deals closed (won + lost)

- Average deal size = revenue ÷ number of deals

- Sales cycle length = time from first touch to close

- Pipeline coverage = pipeline value ÷ quota (benchmark: 3x to 4x coverage)

- Pipeline velocity = (number of open opportunities × win rate × average deal size) ÷ sales cycle length

- Stage-to-stage conversion rates = opportunities advancing from one stage to the next

- Quota attainment = actual ÷ target

- Slippage rate = deals with close dates pushed out (forecast risk signal)
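Several of these formulas are easy to express as small functions. This sketch assumes "deals closed" means won plus lost, and that "pipeline" in the velocity formula means the number of open opportunities (using pipeline value there would count deal size twice). All input figures are hypothetical.

```python
def win_rate(deals_won: int, deals_lost: int) -> float:
    """Deals won divided by all closed deals (won + lost)."""
    closed = deals_won + deals_lost
    if closed == 0:
        raise ValueError("no closed deals in the period")
    return deals_won / closed


def pipeline_coverage(pipeline_value: float, quota: float) -> float:
    if quota <= 0:
        raise ValueError("quota must be positive")
    return pipeline_value / quota


def pipeline_velocity(
    open_opportunities: int, rate: float, avg_deal_size: float, cycle_days: float
) -> float:
    """Expected revenue per day moving through the pipeline."""
    if cycle_days <= 0:
        raise ValueError("cycle_days must be positive")
    return open_opportunities * rate * avg_deal_size / cycle_days


def quota_attainment(actual: float, target: float) -> float:
    if target <= 0:
        raise ValueError("target must be positive")
    return actual / target


# Hypothetical quarter.
rate = win_rate(deals_won=30, deals_lost=70)
coverage = pipeline_coverage(pipeline_value=3_500_000, quota=1_000_000)
velocity = pipeline_velocity(120, rate, avg_deal_size=25_000, cycle_days=60)
attainment = quota_attainment(actual=850_000, target=1_000_000)

print(f"win rate {rate:.0%}, coverage {coverage:.1f}x, "
      f"velocity ${velocity:,.0f}/day, attainment {attainment:.0%}")
```

Writing the formulas down this explicitly forces the definitional questions (what counts as closed, what counts as pipeline) that otherwise surface as conflicting numbers later.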

Marketing KPIs

A marketing leader evaluating campaign effectiveness and pipeline contribution might focus on these indicators:

- Customer acquisition cost (CAC) = (sales spend + marketing spend) ÷ new customers

- Marketing qualified leads (MQLs) / sales qualified leads (SQLs) = leading indicators (only if definitions are aligned with sales)

- Lead-to-customer conversion rate

- Website conversion rate

- Return on ad spend (ROAS) = revenue attributed to ads ÷ ad spend (be clear about attribution)

- Pipeline sourced vs influenced helps separate "created" demand from "assisted" demand

Avoid the vanity trap: traffic, impressions, or followers are not KPIs unless they connect to pipeline, revenue, or retention decisions.
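For reference, here's a short sketch of the CAC and ROAS calculations. The spend and revenue figures are hypothetical, and the helper names are illustrative; note the parentheses in CAC, since total spend is summed before dividing.

```python
def customer_acquisition_cost(
    sales_spend: float, marketing_spend: float, new_customers: int
) -> float:
    """(sales spend + marketing spend) / new customers for the same period."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return (sales_spend + marketing_spend) / new_customers


def return_on_ad_spend(attributed_revenue: float, ad_spend: float) -> float:
    """Revenue attributed to ads per unit of ad spend."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return attributed_revenue / ad_spend


# Hypothetical quarter.
cac = customer_acquisition_cost(sales_spend=200_000, marketing_spend=300_000, new_customers=250)
roas = return_on_ad_spend(attributed_revenue=120_000, ad_spend=40_000)

print(f"CAC: ${cac:,.0f} per customer, ROAS: {roas:.1f}x")
```

ROAS depends heavily on which attribution model produced the revenue figure, so record that choice alongside the number.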

Operations KPIs

Operations teams typically track these indicators:

- On-time delivery rate

- Cycle time

- Throughput

- First-pass yield / defect rate

- Downtime

- Cost per unit / cost per transaction: useful when paired with quality and timeliness metrics

Operational KPIs are often the most actionable day to day.

Customer success and support KPIs

Customer-facing teams monitor these KPIs:

- CSAT

- First response time

- Time to resolution

- Retention rate / churn rate

- Expansion revenue (for account growth)

- Product adoption milestones: leading indicator for retention in many models

Financial and HR KPIs

Finance and HR teams track these indicators:

- Gross margin

- Operating margin

- DSO

- Forecast accuracy

- Employee retention/turnover

- Time to hire

- Offer acceptance rate

- Time to productivity: how quickly new hires reach expected performance

If your finance KPIs live in an ERP like Oracle NetSuite, it's common to track profit and loss (P&L), cash flow, and revenue recognition in the same view as operational drivers (inventory, project profitability, utilization) so finance, sales, and operations aren't running three separate "truths."

KPIs should be easy to understand while still meaningful at multiple levels in the organization.

KPI best practices and common pitfalls

Keep KPIs few and focused

If everything is "key," then nothing is. As a baseline, many organizations do well with:

- Three to five strategic KPIs for leadership

- A small set of operational KPIs per function and role

Separate "KPIs to act on" from "metrics to analyze" so dashboards stay usable.

Pair outcomes with drivers

Pair a lagging outcome KPI with leading drivers you can influence:

- Churn (outcome) with drivers: activation, adoption, support response time

- Revenue (outcome) with drivers: pipeline coverage, win rate, cycle length

Here's how a KPI tree might connect operational drivers to strategic outcomes for a SaaS business:

- Outcome (strategic): ARR

- Output: New customers acquired

- Process: SQL-to-close rate, sales cycle length

- Inputs: Pipeline created, demo-to-SQL conversion

This makes KPI reviews more actionable because teams can diagnose causes and pull levers, not just report results.

Define ownership and next steps

For each KPI, document:

- Owner

- Target and thresholds

- Review cadence

- What happens when the KPI is off-track (the action playbook)

Even a lightweight playbook helps: "If on-time delivery drops below 90 percent for two consecutive weeks, review carrier performance, warehouse staffing, and top delay codes; propose corrective actions within five business days."

Review and refine

KPIs should evolve with strategy. Regular reviews help you retire outdated KPIs, update targets, and improve definitions as business rules change.

Common pitfalls to avoid

KPI programs fail in predictable ways. Understanding these failure modes helps you build safeguards:

Vanity metrics are impressive numbers that don't guide action. Symptoms include high traffic but no conversions, or lots of followers but no engagement. The fix: pair vanity metrics with action-oriented KPIs (e.g., impressions plus click-through rate plus conversion rate).

Goodhart's Law says that when a measure becomes a target, it ceases to be a good measure. Teams optimize the KPI but harm the outcome, like closing tickets fast while tanking CSAT. The fix: pair KPIs (e.g., tickets closed plus CSAT), use counter-metrics, and conduct periodic KPI audits.

Too many KPIs dilute focus. Symptoms include cluttered dashboards and teams that do not know which KPIs to prioritize. The fix: limit to three to five strategic KPIs per team and separate KPIs (act on) from metrics (analyze).

Unclear definitions lead to inconsistent formulas, filters, or sources. The symptom is the same KPI showing different numbers across teams. The fix: document KPI specs with formula, data sources, inclusions/exclusions, and owner.

No governance means no owner, no cadence, and no accountability. KPIs are tracked but never reviewed or acted upon. The fix: assign KPI owners, set review cadences, and define action triggers.

Activity inflation is a form of gaming where teams inflate activity metrics (calls made, emails sent) without improving outcomes. The fix: pair activity KPIs with outcome KPIs and periodically audit whether activity correlates with results.
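One lightweight way to audit whether activity tracks results is a simple correlation check. The sketch below uses a hand-rolled Pearson coefficient and made-up weekly figures; correlation is only a rough signal here, not proof of cause, and any cutoff you apply to it is a judgment call.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical weeks: calls keep climbing while deals won stay flat.
calls_made = [100, 120, 140, 160, 180, 200]
deals_won = [10, 9, 11, 10, 9, 11]

r = pearson(calls_made, deals_won)
print(f"activity vs outcome correlation: {r:.2f}")
```

A weak relationship like this one is a prompt to look closer at how the activity is being counted, not an automatic verdict.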

KPI maturity and evolution over time

KPI programs aren't static. As organizations grow, mature, or change strategy, the KPIs that once worked well can lose relevance. Understanding KPI maturity helps teams avoid clinging to outdated indicators and ensures measurement evolves alongside the business.

Early-stage or less mature organizations often focus on foundational KPIs, like basic financial health, pipeline creation, customer acquisition, and operational stability. At this stage, KPIs help answer simple questions: Are we growing? Can we deliver consistently? Do we have enough cash to operate?

As organizations mature, KPIs tend to become more predictive and diagnostic. Instead of only tracking outcomes, teams add leading indicators and driver metrics to understand why results are changing. Revenue remains a core KPI, but it's paired with indicators like product adoption, expansion opportunities, or customer engagement.

Advanced KPI maturity emphasizes cross-functional alignment and optimization. KPIs are shared across departments, tradeoffs are explicit, and dashboards support scenario analysis and decision-making at scale. At this stage, organizations regularly retire KPIs that no longer serve strategy and introduce new ones as priorities shift.

The key takeaway: effective KPIs are reviewed not just for performance, but for relevance.

How AI is changing KPI management

AI is reshaping how organizations create, monitor, and act on KPIs. What once required analysts and manual reporting can now happen faster and with less friction.

Natural language query tools reduce the bottleneck of analyst dependency. Instead of submitting a request and waiting for a report, managers and executives can ask questions about KPI data in plain language and receive governed answers immediately. This self-service access speeds up decision-making and frees analysts to focus on deeper analysis.

Anomaly detection surfaces unexpected KPI shifts before they escalate. AI can monitor KPIs continuously and alert owners when performance deviates from expected patterns, even when the deviation does not cross a predefined threshold. This catches emerging issues earlier than traditional threshold-based alerts.
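To illustrate the idea (not how any specific AI feature works), here's a deliberately simple statistical stand-in: flag a value when it sits more than a set number of standard deviations from recent history. The `is_anomaly` helper, the z-score limit of 3, and the sample history are assumptions for the sketch.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomaly(history: Sequence[float], latest: float, z_limit: float = 3.0) -> bool:
    """True when `latest` deviates from history by more than z_limit std devs."""
    if len(history) < 2:
        raise ValueError("need at least two historical points")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_limit


# Hypothetical, very stable on-time delivery history (percent).
history = [95.0, 95.2, 94.9, 95.1, 95.0, 94.8, 95.1, 95.0]
ALERT_THRESHOLD = 90.0  # static threshold from the KPI spec

latest = 93.0
print("below static threshold:", latest < ALERT_THRESHOLD)
print("statistical anomaly:", is_anomaly(history, latest))
```

Here 93 percent never trips the 90 percent threshold, yet it is far outside the KPI's normal behavior, which is the kind of early signal pattern-based monitoring is meant to catch.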

Predictive insights help teams understand whether a KPI is on track to hit its target. Rather than waiting until the end of a quarter to see results, AI can forecast likely outcomes based on current trends and historical patterns, giving teams time to course-correct.
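As a rough illustration of "on track or not," the sketch below fits a straight line to recent values and projects it forward. Real forecasting tools use richer models; this least-squares trend, the `linear_forecast` helper, and the monthly churn figures are all simplifying assumptions.

```python
from typing import Sequence


def linear_forecast(values: Sequence[float], periods_ahead: int) -> float:
    """Project a least-squares trend line `periods_ahead` steps past the last point."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var_x = sum((x - mean_x) ** 2 for x in range(n))
    slope = cov / var_x
    intercept = mean_y - slope * mean_x
    return intercept + slope * (n - 1 + periods_ahead)


# Hypothetical monthly churn (percent) since the objective was set.
monthly_churn = [4.2, 4.0, 3.9, 3.7]
TARGET = 2.5          # the key result from the OKR example
MONTHS_REMAINING = 3  # assumed time left before the deadline

projected = linear_forecast(monthly_churn, MONTHS_REMAINING)
on_track = projected <= TARGET

print(f"projected churn: {projected:.2f}% (target {TARGET}%), on track: {on_track}")
```

Even a crude projection like this turns "churn is improving" into "churn is improving, but not fast enough," which is the conversation you want to have before the quarter ends.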

AI also simplifies KPI creation by suggesting relevant indicators based on business objectives and available data. This helps teams move from "what can we measure?" to "what should we measure?" more quickly.

These capabilities do not replace the fundamentals. Clear definitions, ownership, and review cadences still matter. But AI makes it easier to operationalize KPIs at scale and reduces the gap between measurement and action.

Building a KPI program that drives decisions

A KPI is a metric that measures success against your most important goals. When KPIs are few, clearly defined, and consistently monitored, they create focus, accountability, and faster decision-making.

To build an effective KPI program:

- Define your objectives

- Pick a small KPI set that reflects progress

- Document definitions and assign owners

- Set targets and review cadences

- Monitor with dashboards and refine over time

Turn KPIs into action with Domo

KPIs work best when they're visible, trusted, and easy to act on. Domo helps you connect data across the business, define and govern key metrics with consistent logic, and share always-on KPI visibility through real-time dashboards, role-based apps, and alerts.

If you're ready to replace spreadsheets and static reports, explore Domo and see how quickly you can build KPI dashboards your organization will actually use.