ETL vs ELT: Key Differences, Use Cases, and Best Practices

Extract, transform, and load (ETL) transforms data before loading it into a warehouse. Extract, load, and transform (ELT) flips that sequence, loading raw data first and transforming it on demand. Which approach fits your situation? That depends on data volume, infrastructure, compliance requirements, and transformation complexity. This article covers the key differences, walks through real-world use cases, and explains how many organizations now combine both approaches based on specific pipeline needs.

Key takeaways

Here's what you need to know about ETL vs ELT:

- ETL transforms data before loading into a warehouse, making it ideal for structured data and strict quality requirements

- ELT loads raw data first and transforms it on demand, offering flexibility and scalability for cloud environments

- Your choice depends on data volume, infrastructure (cloud vs. on-premises), and transformation complexity

- Many organizations now use hybrid approaches, combining ETL and ELT based on specific pipeline needs

What sets ETL and ELT apart

Both ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) move data from source systems to a destination for analysis. The difference? When and where transformation happens. ETL transforms data in a separate staging environment before loading it into the target system. ELT loads raw data directly into the destination and transforms it there.

This ordering difference matters. It determines which system handles the computational work of transformation. ETL relies on a dedicated transformation layer (often a separate server or service) to clean, reshape, and enrich data before it reaches the warehouse. ELT pushes that work to the destination system itself, typically a cloud data warehouse with scalable compute resources.

The practical impact? ELT can reduce processing time when your destination warehouse has elastic compute capacity. Modern cloud warehouses like Snowflake, BigQuery, and Redshift can handle heavy transformation workloads through massively parallel processing. Teams often still need additional tooling around them for broader pipeline management, which Domo combines in one platform. ETL makes more sense when you need to control exactly what data lands in your warehouse, particularly when compliance requirements demand that sensitive data be masked or tokenized before it ever reaches the destination.

So how do you know which data processing method fits your needs? It depends on your data volume, infrastructure, and objectives. ETL is generally preferred for complex data transformation projects, legacy systems, and extensive data cleansing. ELT works well for large data volumes and for teams that need data available soon after extraction.

What is ETL?

ETL, or Extract, Transform, and Load, has been the standard approach to data integration for decades. Many data engineers and analytics engineers inherit ETL pipelines built for older, on-premises architectures that simply cannot push transformation workloads to a cloud warehouse. In these environments, ETL is not just a preference. It is an architectural constraint.

Here's how the process works:

- Extract: Data pulls from source systems such as databases, files, application programming interfaces (APIs), and other data repositories.

- Transform: The pipeline then transforms the data into the target format that aligns with the target data warehouse schema. The pipeline may also clean and enhance it at this stage.

- Load: In the last step, the pipeline loads the transformed data into its final system, where teams can query and analyze it.
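The three steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production pipeline: the source rows, the `orders` table, and the validation rules are all hypothetical, and an in-memory SQLite database stands in for the target warehouse.

```python
import sqlite3

# Hypothetical source rows, standing in for a database, file, or API response.
SOURCE_ROWS = [
    {"id": "1", "email": " Ada@Example.com ", "amount": "19.99"},
    {"id": "2", "email": "grace@example.com", "amount": "5.00"},
    {"id": "3", "email": "", "amount": "not-a-number"},
]


def extract():
    """Extract: pull raw records from the source."""
    return list(SOURCE_ROWS)


def transform(rows):
    """Transform: clean and reshape rows to match the target schema."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # Reject invalid amounts before they reach the warehouse.
        email = row["email"].strip().lower()
        if not email:
            continue  # Reject rows with no email address.
        cleaned.append((int(row["id"]), email, amount))
    return cleaned


def load(conn, rows):
    """Load: write only the transformed rows into the destination."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (id INTEGER PRIMARY KEY, email TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")  # stand-in for the target warehouse
load(warehouse, transform(extract()))
etl_result = warehouse.execute("SELECT id, email, amount FROM orders ORDER BY id").fetchall()
print(etl_result)
```

Note that the invalid third row never reaches the destination. In ETL, the warehouse only ever sees data that passed the transformation step.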

Popular ETL tools

Several tools are available on the market designed to support ETL processes, including enterprise software, open-source, cloud-based, and hybrid options. Here are some of the most popular picks:

- IBM InfoSphere DataStage: An ETL tool for enterprise data integration and transformation, though teams may still need separate tools around it for broader workflow visibility, which Domo keeps in one platform.

- Integrate.io: A low-code data integration platform with hundreds of connectors, though teams may still need separate transformation and monitoring tools, which Domo combines.

- Domo Magic Transform (Magic ETL): A drag-and-drop ETL builder that also supports structured query language (SQL)-based transformations, so teams can transform where it makes sense (before or after the load).

- Informatica: A data integration tool with advanced ETL capabilities and data governance, though some teams prefer Domo when they want integration, transformation, and visibility in one place.

- Microsoft's SQL Server Integration Services (SSIS): A component of Microsoft SQL Server that can handle data migration tasks, though teams often choose Domo when they want a less Microsoft-centric workflow in one platform.

Pros and cons of ETL

Wondering about the pros and cons of ETL? Here are the most significant advantages and disadvantages of the data processing method:

ETL pros:

- Prioritizes data quality before the loading phase

- Works with on-premises systems

- Flexible regarding environment

- Mature process with extensive documentation

- Delivers a structured, cleaned dataset

- Enables pre-load compliance controls like tokenization and masking before data lands in the warehouse

ETL cons:

- Preprocessing stage can slow operations

- Heavy use of computational resources for transformation

- Not as useful for changing data requirements

- Does not work well for handling large volumes of data

Overall, ETL works well in environments with strict data quality standards, such as finance or healthcare. It is also suitable for scenarios involving extensive data transformation and the use of legacy systems. If your organization's policy prohibits raw personally identifiable information (PII) from landing in the warehouse, use ETL with pre-load tokenization or masking.

What is ELT?

With ELT, unlike ETL, data transformation occurs last, and it happens on an as-needed basis. Here's how it works:

- Extract: The pipeline first extracts data from disparate sources.

- Load: The pipeline then loads the raw data into a storage system such as a data lake or cloud-based data warehouse.

- Transform: Finally, the system transforms the data within the storage system.
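For contrast with ETL, the same flow in ELT order might look like the sketch below. The table names and sample rows are hypothetical, and an in-memory SQLite database stands in for a cloud warehouse. The point is only that the raw data lands first and the transformation runs as SQL inside the destination.

```python
import sqlite3

# Hypothetical raw records; in ELT they are loaded without cleaning.
RAW_EVENTS = [
    ("1", " Ada@Example.com ", "19.99"),
    ("2", "grace@example.com", "5.00"),
    ("2", "grace@example.com", "5.00"),  # duplicate kept in the raw table
]

conn = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse or lake engine

# Extract + Load: land the data as-is, with every column stored as text.
conn.execute("CREATE TABLE raw_orders (id TEXT, email TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_EVENTS)

# Transform: run inside the destination, using its own SQL engine.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT DISTINCT
        CAST(id AS INTEGER)  AS id,
        LOWER(TRIM(email))   AS email,
        CAST(amount AS REAL) AS amount
    FROM raw_orders
    """
)

raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
elt_result = conn.execute("SELECT id, email, amount FROM orders ORDER BY id").fetchall()
print(raw_count, elt_result)
```

The raw table still holds all three rows, including the duplicate, so the transformation can be rewritten and rerun later without going back to the source.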

When might you choose ELT over ETL? If you're managing large volumes of data, the scalability of the cloud can work in your favor. This method is also more flexible because teams can transform data as needed, which works well for evolving data requirements. And because raw data is queryable as soon as it lands, ELT can shorten the time between extraction and analysis.

Popular ELT tools

ELT has grown alongside the modern data stack, and several tools have emerged to support this approach:

- Snowflake: A cloud data warehouse that handles transformation workloads through scalable compute clusters, though teams often pair it with separate tools for pipeline orchestration and monitoring, which Domo combines.

- Google BigQuery: A serverless data warehouse with built-in SQL transformation capabilities, though teams may still need separate tools for broader data workflow management, which Domo brings together.

- Amazon Redshift: A cloud warehouse that supports in-database transformations at scale, though teams may need extra tooling for end-to-end visibility, which Domo includes in one platform.

- Databricks: A lakehouse platform that combines data lake storage with warehouse-style transformations, though teams may still need additional tools for business-facing reporting and monitoring, which Domo provides together.

- Fivetran: An automated data integration tool that loads raw data into warehouses for downstream transformation, though teams may need separate tools for transformation governance and observability, which Domo combines.

- dbt (data build tool): A transformation tool that runs SQL-based transformations directly in the warehouse, though teams often pair it with other tools for ingestion and monitoring, which Domo unifies.

Pros and cons of ELT

As with ETL, ELT has its pros and cons. Review the following advantages and disadvantages carefully to determine the best method for your needs.

ELT pros:

- Useful for flexible data formats

- Transformation occurs only as needed, conserving resources

- High speed of loading in a cloud-based environment

- Quicker data availability

- Raw data retention enables historical reprocessing when business logic changes without re-extracting from source systems

ELT cons:

- Works best in a cloud environment (limiting for on-premises setups)

- Concerns over storing raw data and meeting compliance requirements

- Newer method, so some stakeholders may question its value

Big data analytics represents one of the most common scenarios where ELT makes sense. If you're working with massive volumes of disparate data, ELT lets you draw on the processing power of the destination system. The use of cloud environments also allows for scalable, flexible storage. Near-real-time analytics? That's where ELT really shines, because data is available for querying as soon as it is loaded.

A brief history of ETL and ELT

ETL began in the 1970s. Organizations dealing with data across multiple locations needed an efficient way to consolidate all of this information in one place, so ETL became the go-to method. It was originally mostly manual but evolved to include automation in the late 1980s.

ELT emerged as cloud computing advanced. By the 2010s, it had grown in popularity as this method used the scalability and processing capabilities of cloud-based tools more effectively.

ETL vs ELT: side-by-side comparison

Understanding the differences between ETL and ELT becomes clearer when you see them side by side. The following table breaks down the key factors that matter when choosing between these approaches:

Key factors that differentiate ETL and ELT

The comparison table highlights the differences, but understanding why these differences exist helps you make more informed decisions.

ELT's speed advantage comes from how modern cloud warehouses process data. These systems use massively parallel processing (MPP) to distribute transformation workloads across multiple compute nodes simultaneously. They also offer elastic compute scaling, meaning you can spin up additional processing power on demand rather than being constrained by a fixed transformation server. Columnar storage optimizes analytical queries, and query pushdown moves computation to where the data lives rather than moving data to where computation happens.

That said, ELT does not reduce processing time in every scenario. Here's when the speed advantage disappears:

- Small warehouse sizes that can't parallelize effectively

- High concurrency environments without workload isolation

- Expensive full-table backfills that scan massive datasets

- Complex transformations that don't benefit from columnar storage

ETL vs ELT in modern data architectures: lakes, lakehouses, and zones

A common question is whether data lakes use ETL or ELT. The answer requires understanding that ETL and ELT describe the order of processing steps, not the storage layer itself. You can apply ETL into a data lake or ELT into a data warehouse.

In practice, most lake and lakehouse architectures follow an ELT pattern because transformation happens in-place using engines like Spark or SQL. The bronze/silver/gold zoning pattern illustrates this:

- Raw/bronze zone: Data lands unmodified via extract and load (the EL portion). This preserves the original data for auditability and reprocessing.

- Silver zone: Cleaned and conformed data created through transformation. This is where deduplication, type casting, and standardization happen.

- Gold zone: Business-ready aggregations and models optimized for specific use cases like dashboards or reports.
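The zoning pattern above can be sketched with three layers in a single SQL engine. This is an illustration under stated assumptions: the `bronze_plays`, `silver_plays`, and `gold_daily_minutes` names and the sample rows are hypothetical, and in-memory SQLite stands in for an engine like Spark SQL.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a lakehouse SQL engine

# Bronze: raw rows land unmodified (all text, duplicates included).
conn.execute("CREATE TABLE bronze_plays (event_id TEXT, user_id TEXT, minutes TEXT, day TEXT)")
conn.executemany(
    "INSERT INTO bronze_plays VALUES (?, ?, ?, ?)",
    [
        ("e1", "u1", "30", "2024-01-01"),
        ("e1", "u1", "30", "2024-01-01"),  # duplicate delivery
        ("e2", "u2", "45", "2024-01-01"),
        ("e3", "u1", "15", "2024-01-02"),
    ],
)

# Silver: deduplicate and cast types.
conn.execute(
    """
    CREATE VIEW silver_plays AS
    SELECT DISTINCT event_id, user_id, CAST(minutes AS INTEGER) AS minutes, day
    FROM bronze_plays
    """
)

# Gold: business-ready aggregate for a dashboard.
conn.execute(
    """
    CREATE VIEW gold_daily_minutes AS
    SELECT day, SUM(minutes) AS total_minutes, COUNT(DISTINCT user_id) AS viewers
    FROM silver_plays
    GROUP BY day
    """
)

gold_rows = conn.execute("SELECT * FROM gold_daily_minutes ORDER BY day").fetchall()
print(gold_rows)
```

Because the silver and gold layers are derived from bronze, a change in business logic only requires redefining the downstream layers. The raw data stays untouched.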

ETL can also feed a lake when you require pre-load transformation. If you must mask PII before raw data lands in any storage layer, ETL handles that transformation in a staging environment first.

Choosing the right approach for your data strategy

Rather than general guidance, use these conditional rules based on your specific situation:

If your data volume exceeds several terabytes and continues growing, lean toward ELT. Cloud warehouses with elastic compute typically handle large-scale transformations more efficiently than a fixed-capacity ETL server.

If your transformation logic is complex (slowly changing dimensions, entity resolution, heavy joins across many tables), evaluate whether your warehouse can handle the compute load. ETL may be more cost-effective for extremely complex transformations that would consume significant warehouse resources.

If your warehouse uses a consumption-based pricing model (like Snowflake or BigQuery), factor in the cost of running transformations there. ELT shifts transformation costs to warehouse compute, which can add up without proper optimization. And honestly, this is the part most teams get wrong: they assume ELT is "free" because they're already paying for the warehouse, then get blindsided by compute bills after running inefficient transformation queries at scale.

If compliance requirements prohibit raw sensitive data from landing in your warehouse, use ETL with pre-load masking or tokenization. There's no workaround here.

If your team needs data available within minutes of extraction, ELT typically delivers shorter time-to-data because it skips the staging transformation step.

Legacy on-premises systems that can't connect to cloud warehouses? ETL remains the practical choice.
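One way to read the rules above is as a small decision function, run once per pipeline. The sketch below is only an illustration: the `PipelineProfile` fields are hypothetical names, and checking hard constraints (compliance, connectivity) before preferences is an assumption about priority, not a rule stated in this article.

```python
from dataclasses import dataclass


@dataclass
class PipelineProfile:
    """Hypothetical inputs; field names are illustrative, not a standard."""
    raw_pii_allowed_in_warehouse: bool
    can_reach_cloud_warehouse: bool
    needs_data_within_minutes: bool
    large_and_growing_volume: bool


def recommend_approach(p: PipelineProfile) -> str:
    """Return "ETL", "ELT", or "either" for a single pipeline."""
    # Compliance and connectivity are treated as hard constraints, so check them first.
    if not p.raw_pii_allowed_in_warehouse:
        return "ETL"
    if not p.can_reach_cloud_warehouse:
        return "ETL"
    # The remaining rules are preferences rather than constraints.
    if p.needs_data_within_minutes or p.large_and_growing_volume:
        return "ELT"
    return "either"  # Decide on transformation complexity and warehouse cost.


regulated = PipelineProfile(False, True, True, True)
clickstream = PipelineProfile(True, True, True, True)
print(recommend_approach(regulated), recommend_approach(clickstream))
```

Treating the decision per pipeline, rather than per organization, is what makes hybrid architectures practical.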

Cost and performance considerations

Cost is one of the most misunderstood aspects of the ETL vs ELT decision. Neither approach is inherently cheaper. The cost simply shifts to different parts of your architecture.

With ETL, you pay for the transformation layer. This might be a dedicated server, a managed ETL service, or compute resources in a separate environment. These costs are often more predictable because they're tied to fixed infrastructure rather than query volume.

With ELT, you pay for warehouse compute. Every transformation query consumes resources, and in consumption-based warehouses, this directly impacts your bill. The cost can spike during large backfills or when transformation logic runs inefficiently.

Several tactics help control ELT costs:

- Incremental models that process only changed records rather than full tables

- Partitioning and clustering to reduce scan volume

- Workload isolation to prevent transformation jobs from competing with user queries

- Pre-aggregation for frequently queried datasets
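The first tactic, incremental processing, is often implemented with a high-water mark: each run handles only rows modified since the previous run. The sketch below uses plain Python structures to show the idea. The `updated_at` column, the sample rows, and the dictionary standing in for the target table are all assumptions.

```python
from datetime import datetime

# Hypothetical source rows with a last-modified timestamp.
SOURCE = [
    {"id": 1, "total": 10.0, "updated_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "total": 20.0, "updated_at": datetime(2024, 1, 2, 9, 0)},
    {"id": 3, "total": 30.0, "updated_at": datetime(2024, 1, 3, 9, 0)},
]


def incremental_run(source, target, watermark):
    """Process only rows changed after the watermark; return the new watermark."""
    changed = [row for row in source if row["updated_at"] > watermark]
    for row in changed:
        target[row["id"]] = row["total"]  # upsert keyed on id
    new_watermark = max((row["updated_at"] for row in changed), default=watermark)
    return len(changed), new_watermark


target_table = {1: 10.0}  # row 1 was processed by an earlier run
processed, watermark = incremental_run(SOURCE, target_table, datetime(2024, 1, 1, 9, 0))
print(processed, watermark)

# A second run with no new changes scans the source but rewrites nothing.
processed_again, watermark = incremental_run(SOURCE, target_table, watermark)
print(processed_again)
```

In a warehouse, the filter on `updated_at` would be pushed into the query itself, ideally against a partitioned or clustered column, so unchanged data is never scanned.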

Security and compliance factors

Security and compliance considerations often determine whether ETL or ELT is even an option.

Pre-load tokenization (ETL): The staging environment replaces sensitive fields like social security numbers or credit card numbers with tokens before data reaches the warehouse. The original values never land in the destination system.
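A minimal sketch of pre-load tokenization follows. It uses a keyed hash (HMAC-SHA-256) as a stand-in for a tokenization service, which is an assumption: dedicated products often use a token vault that supports controlled detokenization instead. The key, the field names, and the `tok_` prefix are hypothetical.

```python
import hashlib
import hmac

# Assumption: in practice the key lives in a secrets manager; hard-coded here for illustration only.
TOKEN_KEY = b"example-key-do-not-use"
SENSITIVE_FIELDS = {"ssn", "card_number"}  # hypothetical field names


def tokenize(value: str) -> str:
    """Replace a sensitive value with a keyed, deterministic token."""
    digest = hmac.new(TOKEN_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


def tokenize_record(record: dict) -> dict:
    """Return a copy of the record that is safe to load into the warehouse."""
    return {
        key: tokenize(val) if key in SENSITIVE_FIELDS else val
        for key, val in record.items()
    }


staged = {"customer_id": 42, "ssn": "123-45-6789", "plan": "premium"}
safe = tokenize_record(staged)
print(safe)
```

Because the token is deterministic, the same input always maps to the same token, so tokenized columns can still be used as join keys downstream.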

Dynamic data masking (ELT): Raw data lands in a restricted zone, and masking rules apply at query time based on permissions. The underlying data remains intact, but people see masked values. Dynamic masking only protects data at query time. If someone exports the underlying table or accesses it through a different tool, those masking rules may not apply.
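The query-time behavior can be illustrated with a small sketch. Real warehouses implement this through masking policies attached to columns. The role names, the policy dictionary, and the `query_customers` function below are hypothetical and only show the principle that stored values stay intact while results vary by caller.

```python
# Raw rows as stored; the underlying values are never modified.
RAW_CUSTOMERS = [
    {"customer_id": 1, "email": "ada@example.com", "ssn": "123-45-6789"},
]

# Hypothetical policy: column -> roles allowed to see the unmasked value.
UNMASKED_ROLES = {"email": {"support", "compliance"}, "ssn": {"compliance"}}


def mask(value: str) -> str:
    """Keep the last four characters and hide the rest."""
    return "*" * max(len(value) - 4, 0) + value[-4:]


def query_customers(role: str) -> list:
    """Apply masking rules at read time based on the caller's role."""
    result = []
    for row in RAW_CUSTOMERS:
        visible = {}
        for column, value in row.items():
            allowed = UNMASKED_ROLES.get(column)
            if allowed is None or role in allowed:
                visible[column] = value  # no policy, or role is permitted
            else:
                visible[column] = mask(value)
        result.append(visible)
    return result


print(query_customers("analyst"))
print(query_customers("compliance"))
```

Anything that reads `RAW_CUSTOMERS` directly bypasses `query_customers`, which mirrors the limitation described above: masking protects only the access paths it is applied to.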

Column-level security (ELT): Access controls restrict which people can query specific columns containing sensitive data. This works well when some people need the raw data while others should only see masked versions.

Raw zone segregation (ELT): Raw data lands in access-controlled schemas with strict permissions. Only specific service accounts or roles can access the raw zone, while transformed data in downstream zones is more broadly accessible.

Audit logging: Both approaches can implement audit trails, but the requirements differ. ETL audit logs track transformations in the staging environment. ELT audit logs track warehouse queries and data access.

For organizations subject to regulations and standards such as the Health Insurance Portability and Accountability Act (HIPAA), the General Data Protection Regulation (GDPR), and the Payment Card Industry Data Security Standard (PCI-DSS), the key question is whether raw sensitive data can exist in your warehouse at all.

How teams run hybrid ETL and ELT without tool sprawl

Hybrid sounds great until you're managing one tool for extraction, another for transformation, and a third for orchestration and monitoring. That's where data governance gaps and inconsistent data quality tend to sneak in, especially when different teams own different parts of the pipeline.

A practical way to keep your sanity is to treat ETL vs ELT as a per-pipeline decision, while keeping ingestion, transformation, and monitoring consistent across both patterns.

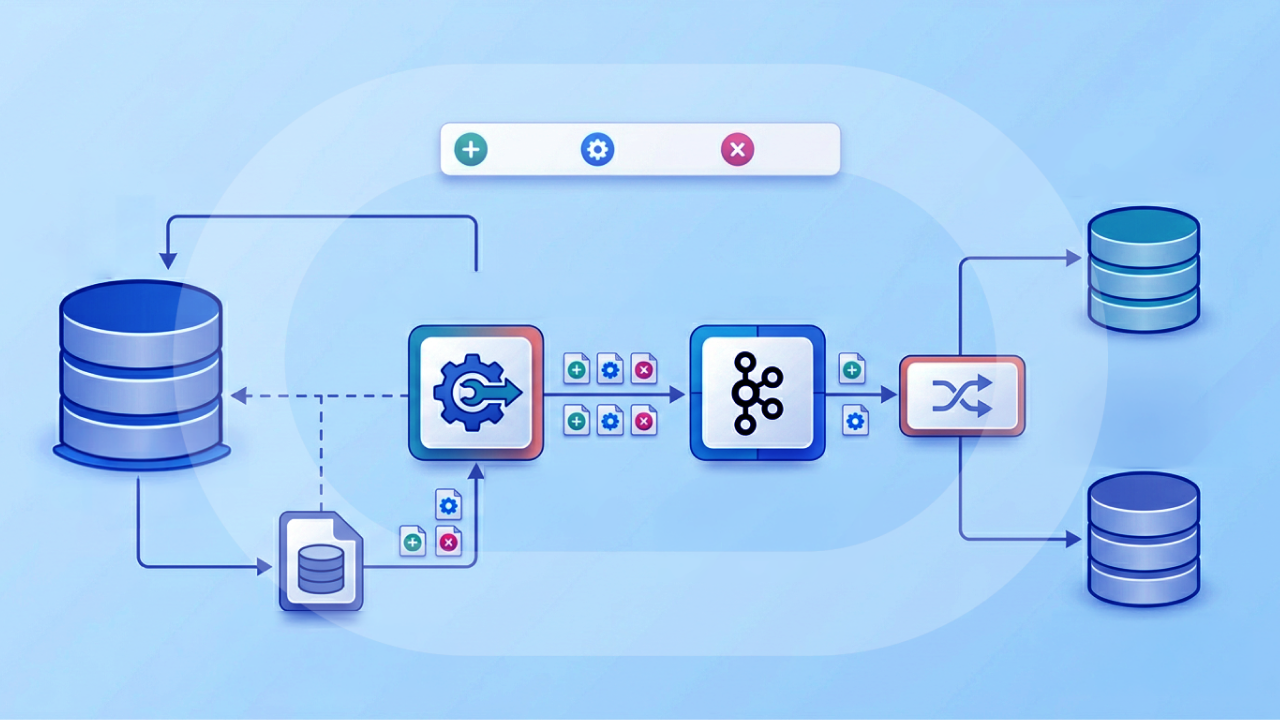

What this looks like in Domo: Data Integration + Magic Transform

If you want the "transform where it makes sense, before or after the load" flexibility without juggling a pile of tools, Domo's Data Integration and Magic Transform are designed to work together:

- Ingest reliably: Data Integration supports push and pull ingestion models, has 1,000+ connectors (cloud apps, databases, files, and on-prem systems), and supports incremental ingestion so pipelines don't have to re-pull everything on every run.

- Trigger work when data changes: Event-based pipeline triggers can kick off downstream ETL or ELT DataFlows when upstream data updates, which helps teams move toward near-real-time pipelines without babysitting schedules.

- Transform your way: Magic Transform supports drag-and-drop ETL and SQL-based ELT in the same environment, including output controls like full replace, append, upsert, and partitioning.

- Standardize logic: Reusable DataFlow templates help analytics engineers apply the same business rules across many datasets, cutting down on copy-and-paste logic and "why are these numbers different?" debates.

- See what's happening: An interactive data lineage map and pipeline health monitoring give teams end-to-end observability from source ingestion through downstream transformation and consumption.

For teams with advanced needs, Magic Transform also supports integrated Jupyter Workspaces with Python and R, so data scientists can embed more complex transformation logic directly in a pipeline without jumping between systems.

Real-world examples of ETL and ELT in action

Consider how these two data processing methods play out in the real world. Take an online streaming service collecting data across multiple sources:

- Website activity logs

- Subscription data from the mobile app

- Customer feedback surveys

- Server logs

How can the streaming service bring all of this information into a unified view?

With ETL, the pipeline gathers the raw data on a schedule. A separate staging database processes, cleanses, and enhances it, formatting the data to suit the final destination. Finally, the pipeline moves the data into a data warehouse, where teams can analyze it for business intelligence (BI) purposes. Data quality gets prioritized. But there is not much room for flexibility in data requirements, and the preprocessing step takes time.

The streaming service could also choose ELT. This approach extracts the raw data detailed above and moves it directly into a data lake. The data lake stores the raw data, and the company transforms it on demand, which provides enhanced scalability and flexibility. This method relies on cloud infrastructure, so it would not fit an on-premises environment.

Will ELT replace ETL?

The short answer is no. While ELT has become the default for many cloud-native organizations, ETL continues to serve important purposes that ELT cannot easily replicate.

ETL remains the right choice in several modern scenarios:

- Regulated data pipelines where PII must be masked or tokenized before warehouse ingestion

- Edge computing environments where bandwidth constraints require pre-aggregation before transmission to a central warehouse

- Format normalization for sources that produce incompatible schemas requiring standardization before loading

- On-premises systems that cannot push transformation workloads to a cloud warehouse

- Complex preprocessing that would be prohibitively expensive to run in a consumption-based warehouse

Many organizations run hybrid architectures, using ELT for the majority of their pipelines while applying ETL patterns for specific regulated data attributes or bandwidth-constrained sources.

ETL, ELT, and related concepts: reverse ETL, change data capture (CDC), and streaming

As you evaluate data integration approaches, you'll encounter several adjacent concepts that relate to ETL and ELT:

Reverse ETL moves data in the opposite direction, from your data warehouse back into operational systems like customer relationship management systems (CRMs), marketing platforms, or customer support tools. For example, you might sync enriched lead scores from your warehouse back to Salesforce so sales teams can prioritize outreach. Reverse ETL extends the ETL/ELT pipeline beyond analytics into operational action.

Change data capture (CDC) captures row-level changes from source systems in real-time or near-real-time, enabling incremental ingestion rather than full extracts. CDC feeds both ETL and ELT pipelines more efficiently by processing only what changed since the last sync rather than re-extracting entire tables.
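To make the idea concrete, the sketch below replays a stream of row-level change events onto a target table. The event shape (`op`, `id`, `row`) is a hypothetical simplification. Real CDC tools emit richer records, but the apply logic follows the same insert, update, and delete pattern.

```python
# Hypothetical change events, in commit order, as a CDC tool might emit them.
CHANGES = [
    {"op": "insert", "id": 1, "row": {"name": "Ada", "plan": "basic"}},
    {"op": "insert", "id": 2, "row": {"name": "Grace", "plan": "basic"}},
    {"op": "update", "id": 1, "row": {"name": "Ada", "plan": "premium"}},
    {"op": "delete", "id": 2, "row": None},
]


def apply_changes(target: dict, changes: list) -> dict:
    """Replay row-level changes onto a target table keyed by primary key."""
    for change in changes:
        if change["op"] in ("insert", "update"):
            target[change["id"]] = change["row"]  # upsert
        elif change["op"] == "delete":
            target.pop(change["id"], None)
        else:
            raise ValueError(f"unknown operation: {change['op']}")
    return target


replica = apply_changes({}, CHANGES)
print(replica)
```

Only four small events were processed, regardless of how large the source table is. That is the efficiency gain over re-extracting full tables.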

Streaming represents a distinct pattern from batch ETL/ELT. Rather than extracting data in scheduled batches, streaming pipelines process data continuously as events occur. Streaming is appropriate when you need sub-second latency, but it adds complexity compared to batch approaches.

In practice, many teams combine CDC-style incremental ingestion with micro-batch processing (every few minutes, for example) to get "close to real time" without taking on full streaming complexity.

Best practices for ETL and ELT implementation

Whether you choose ETL or ELT, there are some best practices for implementation that can help you get the most from either method. Many organizations find that a hybrid approach works best, using ELT for most sources while applying ETL patterns for regulated data attributes or bandwidth-constrained sources.

ETL implementation best practices

- Know your data requirements. Set yourself up for success by defining data sources, quality standards, desired target format, and any system requirements.

- Implement data validation checkpoints throughout the pipeline: schema and null checks at extract, accepted value checks at transform, row count reconciliation at load, and freshness monitoring at publish.

- Document your ETL process and related transformations.

- Use incremental loading techniques to update only new or changed data to reduce processing time.

- Predefine scheduling to extract and transform data.

- Automate ETL workflows as much as possible.
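The validation checkpoints mentioned in the list above can be expressed as small, independent checks, one per pipeline stage. The sketch below is illustrative only: the required columns, accepted values, and function names are hypothetical, and a real pipeline would usually log or alert on failure rather than return a boolean.

```python
from datetime import datetime, timedelta, timezone

REQUIRED_COLUMNS = {"id", "status"}          # hypothetical schema
ACCEPTED_STATUSES = {"active", "cancelled"}  # hypothetical accepted values


def check_schema_and_nulls(rows):
    """Extract checkpoint: every row has the required, non-null columns."""
    return all(
        REQUIRED_COLUMNS.issubset(row) and all(row[c] is not None for c in REQUIRED_COLUMNS)
        for row in rows
    )


def check_accepted_values(rows):
    """Transform checkpoint: status values fall inside the accepted set."""
    return all(row["status"] in ACCEPTED_STATUSES for row in rows)


def check_row_counts(expected, loaded):
    """Load checkpoint: the destination received every row that was sent."""
    return expected == loaded


def check_freshness(last_loaded_at, max_age, now=None):
    """Publish checkpoint: the most recent load is within the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at <= max_age


rows = [{"id": 1, "status": "active"}, {"id": 2, "status": "cancelled"}]
print(check_schema_and_nulls(rows), check_accepted_values(rows), check_row_counts(2, 2))
```

Keeping each check separate makes it clear which stage failed, which shortens debugging when a pipeline breaks.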

ELT implementation best practices

- Use cloud scalability to manage large volumes of raw data and perform complex transformations.

- Transform data only on demand for extra flexibility and reduced burden on resources.

- Implement incremental processing strategies (incremental models, upserts, partitioning) to control warehouse compute costs.

- Use bulk loading to manage massive datasets.

- Carefully manage raw data storage to meet compliance and security requirements. Teams sometimes assume that because data lands in a "raw zone," security can wait until transformation. It can't. Access controls and encryption need to be in place from the moment data arrives.

- Carefully document ELT processes and transformations for reference.

- Speed up data processing by optimizing transformations in the target system.

If you're trying to cut down on fragile, hand-coded scripts (and the technical debt that comes with them), prioritize reusable transformation patterns and clear lineage. That's how teams keep ETL and ELT consistent as sources and business rules change.

These best practices help drive efficient and effective implementations of ETL and ELT. If you're interested in making data transformation a success in your organization, connect with Domo. Our drag-and-drop ETL tool makes it easy to extract data from multiple sources, transform it, and load it into Domo, no coding required. Here's how it works.