What Is AI Governance? Frameworks, Principles, and Best Practices

AI governance helps organizations manage the ethical, legal, and societal impacts of artificial intelligence through structured frameworks, clear accountability, and continuous monitoring. This article explains the core principles that guide responsible AI development, walks through the major regulatory frameworks shaping compliance requirements, and provides practical guidance for building governance programs that scale with your AI ambitions.

Key takeaways

Here are the main points to keep in mind as you read.

- AI governance provides the frameworks, policies, and practices that guide ethical, compliant, and accountable AI development and usage across an organization

- Core principles include accountability, transparency, fairness, privacy, security, and empathy, with implementation varying based on organizational context and risk tolerance

- Organizations typically progress through three governance maturity levels: sandbox, departmental, and certified, with each stage adding structure and rigor

- Global regulations including the EU AI Act, the National Institute of Standards and Technology AI Risk Management Framework (NIST AI RMF), International Organization for Standardization/International Electrotechnical Commission 42001 (ISO/IEC 42001), and regional frameworks are shaping compliance requirements and evidence standards

- Effective governance requires cross-functional collaboration, continuous monitoring, measurable metrics, and clear accountability structures with defined escalation paths

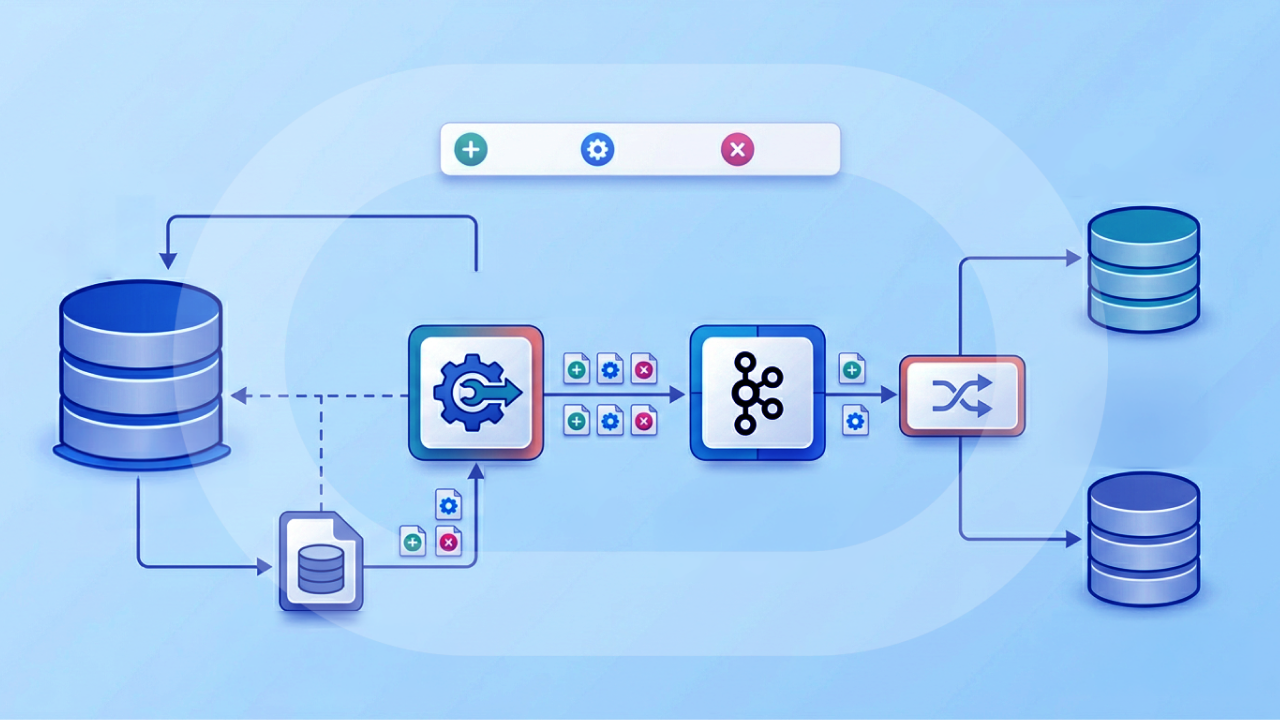

- Strong programs reduce tool sprawl by treating governance as a centralized control plane where policies are set once and enforced across data pipelines, BI, and AI agents

What is AI governance?

AI governance refers to the frameworks, policies, and practices that guide how artificial intelligence is developed and used in a responsible, ethical, and lawful way. It ensures that as AI systems become more advanced and embedded in everyday life, they operate in ways that are fair, transparent, and accountable.

At its core, AI governance helps organizations manage the ethical, legal, and societal impacts of AI and machine learning technologies. Everything from reducing bias and ensuring data privacy to complying with regulations and preparing for unexpected outcomes falls under this umbrella. As innovation speeds up, governance acts as the guardrails. It helps teams strike the right balance between experimentation and accountability.

Understanding how AI governance differs from related concepts helps clarify its scope. AI ethics establishes the values and principles that should guide AI development, such as fairness and human dignity. AI risk management focuses on identifying, assessing, and treating potential harms from AI systems. Model governance addresses the technical lifecycle controls for machine learning models, from development through retirement. AI governance sits above these as the organizational layer that establishes decision rights, accountability structures, and assurance mechanisms to ensure ethics, risk management, and model governance all work together effectively. Organizations that focus only on model governance often discover too late that they lack the cross-functional accountability structures needed when something goes wrong. And honestly, that's the part most guides skip over.

AI governance also increasingly covers AI agents and automated workflows, not just standalone models. When an agent can pull data, call tools, and trigger actions, governance has to span the full chain: data access, orchestration, human review, and the final action taken.

Core elements of AI governance

Several foundational elements make AI governance operational rather than aspirational.

The following components form the backbone of most governance programs:

- Ethical principles that shape how AI is designed and deployed, including fairness, transparency, and inclusivity

- Regulatory compliance with privacy laws, security standards, and industry-specific guidelines

- Risk management strategies to identify and respond to unintended consequences or potential harms

- Transparency and accountability mechanisms that explain how AI systems make decisions and who is responsible for them

- A governed semantic and metrics layer that ensures consistent definitions, certified key performance indicators (KPIs), and approved metric calculations across AI applications

- Tiered access controls including role-based access control (RBAC), row-level security, and identity-based authorization that persist from data sources through AI outputs

- Data governance practices ensuring information fueling AI systems is accurate, well-managed, and responsibly sourced

- Human-in-the-loop oversight for higher-risk workflows, so a person can review, approve, or stop AI-driven decisions before they execute

- Incident response plans to quickly address system failures, security issues, or ethical concerns

- Cross-functional collaboration bringing together developers, ethicists, legal experts, and business leaders

- Continuous monitoring and improvement to evaluate governance effectiveness and adjust as technology evolves

- A centralized governance control plane that reduces gaps created by multiple tools and vendors by enforcing the same policies across data pipelines, BI assets, and AI agents

AI governance is not just about avoiding harm. It provides the structure to guide innovation without letting things spin out of control.

Why AI governance matters

Imagine a world without AI governance. Not pretty. Without frameworks to manage the development and usage of AI, people could experience unethical, immoral, and discriminatory practices from these technologies. Organizations would face legal and financial consequences as a result. On a larger scale, society could see an increase in incidents of injustice and violations of human rights.

The tension between AI innovation speed and compliance requirements creates a particular challenge. AI and machine learning (ML) engineers need governed experimentation environments that do not slow deployment cycles. Line-of-business executives want to adopt AI responsibly without owning the compliance infrastructure themselves. Governance is not a constraint on innovation. It is the infrastructure that makes responsible AI adoption possible and defensible to leadership, legal teams, and regulators.

For regulated industries like healthcare, financial services, and government, the stakes get even higher. It's not only "do we comply with the EU AI Act?" It's also "do we stay aligned with requirements tied to standards and audits like the Health Insurance Portability and Accountability Act (HIPAA), the Payment Card Industry Data Security Standard (PCI DSS), Service Organization Control 2 (SOC 2) Type II, ISO 27001, and the Federal Risk and Authorization Management Program (FedRAMP)?"

Building trust through governance

Improper use of AI has already eroded trust in the technology. AI governance can help organizations rebuild that trust among employees, customers, and other stakeholders by giving people affected by the technology a better understanding of the systems, their inputs, and their outputs.

Trust operates at multiple levels. Internally, employees need confidence that AI tools augmenting their work are reliable and that they won't be held accountable for AI-generated errors outside their control. Externally, customers need assurance that AI-driven decisions affecting them (whether in lending, hiring, or service delivery) are fair and explainable.

The cost of ungoverned AI

The consequences of operating AI without governance extend beyond regulatory fines. Reputational damage hits when AI systems produce biased outcomes that become public. Legal liability increases when decisions affecting individuals can't be explained or justified. Operational disruptions occur when AI systems fail without incident response protocols in place.

Generative AI introduces additional risks that traditional governance frameworks weren't designed to address. Hallucinations, where models produce confident but incorrect outputs, can propagate into business decisions and customer communications. Prompt injection attacks can manipulate AI workflows to bypass intended controls. Data exfiltration through ungoverned large language model (LLM) integrations can expose sensitive information to external systems.

Key components of AI governance

What does governance actually look like when it's working? Not policy documents gathering dust. Real governance embeds controls into the fabric of how AI gets built and deployed.

The following elements should be present in any comprehensive governance program:

- Audit logs and end-to-end data lineage that trace how data flows from source through transformation into AI outputs, answering "who used what data to produce this result"

- Workflow-embedded certification and publishing gates that move AI assets through defined states (draft, shared, certified) rather than relying on standalone policy documents

- Controls to prevent data leakage including masking, data loss prevention (DLP) and egress controls, and sanctioned endpoint restrictions

- Model and prompt registries with versioning, ownership assignment, and approval workflows

- Risk tiering systems that apply proportionate controls based on use case sensitivity

- Observability infrastructure that captures usage patterns, model performance, and governance exceptions

- Schema change monitoring and data freshness alerts, so teams catch upstream changes that can break pipelines or silently skew AI outputs

- Guardrailed orchestration for AI agents so tool calls, data access, and action execution follow the same policies as the underlying data and BI environment
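
As a rough illustration of the certification gates and audit logs in the list above, here is a minimal Python sketch. The states (draft, shared, certified) come from the list; everything else is an assumption made for the example, including the `GovernedAsset` class, the allowed transitions, and the rule that someone other than the owner must approve certification.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Allowed moves between the asset states named above.
# Assumption: a certified asset can only go back to draft for rework.
TRANSITIONS = {
    "draft": {"shared"},
    "shared": {"certified", "draft"},
    "certified": {"draft"},
}


@dataclass
class GovernedAsset:
    """Hypothetical AI asset (model, prompt, dataset) with a publishing state."""
    name: str
    owner: str
    state: str = "draft"
    audit_log: list = field(default_factory=list)

    def promote(self, new_state: str, actor: str, approved: bool = False) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state} -> {new_state} is not allowed")
        # Assumption: certification needs explicit approval from a non-owner.
        if new_state == "certified" and (not approved or actor == self.owner):
            raise PermissionError("certification needs approval from a non-owner")
        # Every state change is recorded, so "who moved what, and when" is answerable.
        self.audit_log.append({
            "asset": self.name,
            "from": self.state,
            "to": new_state,
            "actor": actor,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.state = new_state


asset = GovernedAsset(name="churn_prompt_v3", owner="dana")
asset.promote("shared", actor="dana")
asset.promote("certified", actor="lee", approved=True)
```

The point of the sketch is that the gate lives in the workflow itself: an asset cannot skip from draft to certified, and the audit trail is a side effect of doing the work rather than a separate document.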

Data governance and AI

Data governance forms the foundation of AI governance. Data quality, accuracy, and appropriate handling directly determine the quality of AI outputs. Access controls and data quality standards must persist from source systems through transformation layers into AI agent consumption without requiring custom pipeline work for each integration.

For data engineers, this means maintaining row-level and column-level access controls across complex data-to-agent integrations. Data lineage and audit trails become the governance proof points needed to demonstrate compliance to IT leaders and regulators. When a model produces an unexpected output, the ability to trace back through the data pipeline to identify the source becomes essential for both debugging and accountability.

One way to sanity-check your approach is simple: does your governance travel with the data? If access controls or certified definitions disappear the moment data moves into a new tool or an agent workflow, you've found a governance gap worth fixing.
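
To make "governance travels with the data" concrete, here is a small Python sketch of row-level and column-level controls applied at the point of consumption. The `AccessPolicy` class, the `region` and `ssn` fields, and the sample rows are all hypothetical; a real platform would enforce this in the data layer rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessPolicy:
    """Hypothetical policy: which rows a role may see and which columns are masked."""
    allowed_regions: frozenset   # row-level filter
    masked_columns: frozenset    # column-level masking


def apply_policy(rows: list, policy: AccessPolicy) -> list:
    """Return only permitted rows, with masked columns redacted."""
    visible = []
    for row in rows:
        if row.get("region") not in policy.allowed_regions:
            continue  # row-level security: drop rows outside the role's scope
        visible.append({
            col: ("***" if col in policy.masked_columns else value)
            for col, value in row.items()
        })
    return visible


ROWS = [
    {"customer": "Acme", "region": "EU", "ssn": "123-45-6789", "revenue": 1200},
    {"customer": "Globex", "region": "US", "ssn": "987-65-4321", "revenue": 3400},
]

analyst_policy = AccessPolicy(frozenset({"EU"}), frozenset({"ssn"}))

# The same function would sit behind both the dashboard query and the agent's
# data tool, so the policy does not disappear when data moves into an agent.
agent_context = apply_policy(ROWS, analyst_policy)
```

If the agent path called a different, unfiltered function, that would be exactly the kind of governance gap the sanity check is meant to surface.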

Accountability structures

Clear accountability requires more than general statements about responsibility. Named roles. Specific decision rights. Escalation paths that people actually use.

A typical AI governance structure includes the following roles:

- AI Governance Council: Cross-functional oversight body that sets policy, resolves disputes, and approves high-risk deployments

- Model Risk Owner: Accountable for individual model performance, compliance, and lifecycle management

- Data Steward: Responsible for dataset certification, lineage documentation, and data quality standards

- Legal and Compliance representative: Ensures regulatory requirements are met and advises on risk exposure

- Product or Business Owner: Accountable for use case justification and business outcomes

In many organizations, you'll also want explicit ownership for the layer that sits between BI and AI. A BI Manager or IT Manager often owns governed metric definitions and self-service guardrails, which directly affects what AI sees and says.

The following responsibility assignment matrix (RACI) illustrates how these roles interact across key governance activities:

Escalation paths matter as much as role definitions. When a Data Steward identifies a data quality issue affecting a production model, they should know exactly who to escalate to, what information to include, and what response time to expect. Without defined escalation workflows, governance gaps persist even when roles are clearly assigned. Assigning roles without corresponding authority creates a different problem entirely: a Model Risk Owner who can flag issues but can't halt a deployment isn't really accountable. They're just a messenger.

Principles of AI governance

Principles in AI governance guide the ethical development and usage of AI technology. No universal standard exists for sourcing AI governance principles, but many organizations rely on the National Institute of Standards and Technology AI Risk Management Framework (NIST AI RMF), the Organisation for Economic Co-operation and Development (OECD) Principles on Artificial Intelligence, and the European Commission's Ethics Guidelines for Trustworthy AI.

Foundational ethical principles

These frameworks converge on several core principles that should guide AI development and deployment.

Accountability means organizations embed ethics and morals into the development and usage of AI, taking responsibility for its effects. In practice, this requires named individuals who own outcomes, not just processes. When an AI system produces harm, accountability structures determine who investigates, who remediates, and who communicates with affected parties.

Transparency requires organizations to be clear and honest about how the technology is developed, used, and particularly how teams use it to make decisions. This includes documenting training data sources, model architectures, known limitations, and intended use cases. For high-stakes decisions, transparency may require explainability mechanisms that help affected individuals understand why a particular decision was made.

Fairness demands that teams thoroughly inspect training data to avoid introducing bias into AI algorithms. Human reviewers should also check AI-driven decisions for bias. Fairness is not a one-time check but an ongoing monitoring requirement, as models can develop bias over time through data drift or feedback loops. Teams sometimes treat a clean initial bias audit as permanent certification. It isn't. Bias can emerge gradually as underlying data distributions shift.

Empathy means organizations develop and use AI technology with consideration for its potential impact on individuals and society as a whole. This principle asks developers and deployers to consider not just whether they can build something, but whether they should.

Security and privacy principles

Privacy requires organizations to adhere to data security principles and ensure that data is not misused or accessed without consent. In practice, this means implementing data minimization practices, obtaining appropriate consent for data use, and providing individuals with access to and control over their data where regulations require it.

Security demands that organizations implement strong data security measures, such as encryption, access controls, and threat detection mechanisms. For AI systems specifically, security extends to protecting model weights, preventing adversarial attacks, and securing the infrastructure that hosts AI workloads.

AI governance frameworks to know

Several established frameworks provide structure for implementing AI governance. Understanding how these frameworks relate to each other helps organizations build coherent compliance programs rather than treating each requirement in isolation.

The following table maps major frameworks to their core requirements and the evidence artifacts that demonstrate compliance:

NIST AI risk management framework

The NIST AI Risk Management Framework provides a flexible, voluntary framework organized around four core functions: Govern, Map, Measure, and Manage. Each function contains categories and subcategories that organizations can adapt to their specific context.

The Govern function establishes organizational structures, policies, and processes for AI risk management. Map identifies the context and potential impacts of AI systems. Measure assesses and tracks AI risks using appropriate metrics. Manage addresses identified risks through mitigation, transfer, or acceptance.

For organizations also pursuing ISO/IEC 42001 certification, the frameworks align in several areas. NIST's Govern function maps to ISO 42001's leadership and planning clauses. Map and Measure align with ISO 42001's risk assessment requirements. Manage corresponds to ISO 42001's risk treatment and operational controls.

Is ISO 42001 mandatory? Currently, ISO/IEC 42001 is not legally required in most jurisdictions. However, procurement teams increasingly reference it, particularly for government contracts and regulated industries. Organizations operating in the EU may find ISO 42001 certification helpful for demonstrating conformity with EU AI Act requirements, though it is not a substitute for the Act's specific compliance obligations.

International frameworks and standards

The OECD Principles on Artificial Intelligence, adopted in 2019, established the first intergovernmental standard on AI. These principles emphasize inclusive growth, human-centered values, transparency, robustness, and accountability. While not legally binding, they influence national policies and provide a common reference point for international discussions.

The European Commission's Ethics Guidelines for Trustworthy AI complement the EU AI Act by providing ethical guidance beyond legal compliance. Human agency. Technical robustness. Privacy. Transparency. Diversity. Societal wellbeing. Accountability. The guidelines touch all of it.

ISO/IEC 42001, published in 2023, provides requirements for establishing, implementing, maintaining, and continually improving an AI management system. Unlike guidance documents, ISO 42001 is certifiable, meaning organizations can undergo third-party audits to demonstrate conformity.

Levels of AI governance maturity

Organizations typically progress through distinct maturity levels as their AI governance capabilities develop. Understanding where your organization sits helps identify appropriate next steps without overinvesting in controls that don't match your current AI deployment scale.

The following table describes three common maturity levels and the characteristics of each:

Moving from one level to the next requires deliberate investment. The jump from sandbox to departmental typically requires establishing a cross-functional governance body and standardizing policies across teams. Progressing from departmental to certified? That's where it gets hard. Continuous monitoring, audit-ready documentation, potentially external certification such as ISO/IEC 42001. Most teams underestimate this second jump. The documentation burden alone can stall progress for months without dedicated resources.

How to implement AI governance

Rather than treating governance as a separate compliance exercise, effective implementation embeds controls into existing workflows at natural decision points. Map controls to each stage of the AI lifecycle.

The following lifecycle control map identifies key checkpoints and artifacts for each stage:

Building your governance team

Effective governance requires the right people in the right roles with clear decision rights. The composition of your governance team should reflect your organization's AI maturity and deployment scale.

At minimum, a governance team should include representation from data and analytics (for technical oversight), legal and compliance (for regulatory guidance), security (for risk assessment), and business stakeholders (for use case prioritization). As AI deployment scales, dedicated roles such as a Model Risk Owner or AI Ethics Officer may become necessary.

The escalation workflow should define clear triggers and response expectations. For example, when a model's drift metric exceeds the defined threshold, the governance team should notify the Model Risk Owner within 24 hours. If the team cannot resolve the issue within the defined service-level agreement (SLA), it should escalate the issue to the AI Governance Council with a documented impact assessment and remediation plan.
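
The escalation example above can be sketched as a small decision function. This is a minimal illustration, not a prescribed workflow: the 24-hour notification window comes from the example, while the five-day resolution SLA, the function name, and the action labels are assumptions you would replace with your own policy values.

```python
from datetime import datetime, timedelta, timezone

NOTIFY_WITHIN = timedelta(hours=24)   # notification window from the example above
RESOLUTION_SLA = timedelta(days=5)    # hypothetical SLA; set this per policy


def next_action(drift: float, threshold: float, detected_at: datetime,
                now: datetime, resolved: bool = False):
    """Return (action, due_by) for a drift alert on a production model."""
    if resolved or drift <= threshold:
        return ("no_action", None)
    if now - detected_at > RESOLUTION_SLA:
        # SLA missed: escalate with an impact assessment and remediation plan.
        return ("escalate_to_ai_governance_council", now)
    # Within SLA: the Model Risk Owner must be notified inside the window.
    return ("notify_model_risk_owner", detected_at + NOTIFY_WITHIN)


detected = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
action, due_by = next_action(0.31, 0.2, detected, detected + timedelta(hours=2))
```

Encoding the triggers this way makes the escalation path testable, which is one way to find out whether people can actually follow it.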

If your organization runs lots of agents across teams, consider adding an explicit admin role for agent management and approvals. That helps IT leaders stay the "trusted enabler" of AI transformation without becoming the bottleneck.

Creating governance policies

Governance policies translate principles into enforceable requirements. Effective policies are specific enough to guide behavior while flexible enough to accommodate different use cases and risk levels.

Key documentation artifacts that policies should require include the following:

- Model cards documenting intended use, training data, performance metrics, and known limitations

- Dataset datasheets describing data provenance, quality checks, and access controls

- System cards documenting end-to-end system behavior for complex AI applications

- Incident reports capturing trigger, impact, response, and resolution for governance exceptions

- Change logs tracking version history, approvers, and rationale for modifications

These artifacts serve multiple purposes. They provide transparency for stakeholders, create audit trails for compliance, and enable knowledge transfer when team members change.
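
A policy like this is easier to enforce when the artifact is structured data rather than a free-form document. The sketch below models a model card with the four fields listed above and checks it for completeness and currency. The `ModelCard` class, the 365-day review window, and the sample cards are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass
class ModelCard:
    """Hypothetical model card with the fields named in the list above."""
    model_name: str
    intended_use: str = ""
    training_data: str = ""
    performance_metrics: dict = field(default_factory=dict)
    known_limitations: list = field(default_factory=list)
    last_reviewed: Optional[date] = None

    def missing_fields(self) -> list:
        required = ["intended_use", "training_data",
                    "performance_metrics", "known_limitations"]
        return [name for name in required if not getattr(self, name)]

    def is_current(self, today: date, max_age_days: int = 365) -> bool:
        # Assumption: a card counts as current if reviewed within the last year.
        return (self.last_reviewed is not None
                and today - self.last_reviewed <= timedelta(days=max_age_days))


def adherence_rate(cards: list, today: date) -> float:
    """Percentage of cards that are both complete and current."""
    if not cards:
        return 0.0
    ok = sum(1 for c in cards if not c.missing_fields() and c.is_current(today))
    return 100.0 * ok / len(cards)


cards = [
    ModelCard("churn-v2", "Rank accounts by churn risk", "CRM events 2022-2024",
              {"auc": 0.87}, ["Not validated for new markets"], date(2025, 3, 1)),
    ModelCard("lead-score-v1", intended_use="Prioritize inbound leads"),
]
rate = adherence_rate(cards, today=date(2025, 6, 1))
```

A publishing gate could refuse to certify any model whose `missing_fields()` list is non-empty, which turns the documentation requirement into an enforced control.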

AI governance best practices

Following best practices for AI governance can help organizations increase trust in the technology and mitigate risks. These practices build on the implementation framework described above.

Collaboration and coordination remain essential. The most effective AI governance approaches involve stakeholders from across departments and areas of subject matter expertise. Bringing these stakeholders together to collaborate and coordinate efforts serves as a force multiplier, enables a transparent exchange of ideas, and brings diverse perspectives into decisions.

Transparent communication builds and maintains trust in AI. Teams should tell all stakeholders, including people affected by the technology, employees, and the community, how they use AI, how they develop it, and which data sets it relies on. This practice is particularly important if teams use the technology for automated decision-making. Consider communication channels, campaigns, and frequency.

Regulatory sandboxes allow organizations to test AI technology in a controlled environment. Organizations can test drive applications while complying with regulatory requirements, and they can address challenges and risk factors as they emerge.

Ethical guidelines and codes of conduct provide the foundation for AI development and usage. Consider organizational values as well as societal values such as transparency, accountability, and respect. These guidelines and codes of conduct can serve as a guiding framework for all actions related to AI.

Continuous monitoring and evaluation ensure AI applications do not degrade or veer from their design over time. It is crucial to evaluate data sets, potential human errors, and the introduction of bias rigorously.

Measurable governance metrics make monitoring actionable. The following key performance indicators (KPIs) and key risk indicators (KRIs) provide concrete targets for governance programs:

- Model drift: Population Stability Index (PSI) above 0.2 triggers review; above 0.25 triggers immediate remediation. These thresholds matter because drift at this level typically indicates the model is seeing data distributions meaningfully different from training, which degrades prediction accuracy.

- Bias regression: Demographic parity delta exceeding defined threshold triggers audit

- Incident rate: Number of governance exceptions per quarter, tracked against baseline

- Audit findings: Open findings by severity, with SLAs for resolution

- Policy adherence rate: Percentage of AI deployments with current, complete model cards
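
For the model drift indicator in the list above, the Population Stability Index compares the binned distribution of a feature or score at training time (expected) with what the model sees in production (actual):

PSI = Σ (actual_i − expected_i) × ln(actual_i / expected_i), summed over bins i.

The sketch below is a minimal pure-Python version. The review and remediation thresholds (0.2 and 0.25) come from the list; the ten equal-width bins, the small floor for empty bins, and the function names are assumptions.

```python
import math


def psi(expected: list, actual: list, bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_action(score: float) -> str:
    """Map a PSI score to the governance action from the thresholds above."""
    if score > 0.25:
        return "immediate_remediation"
    if score > 0.2:
        return "review"
    return "ok"


baseline = [i / 100 for i in range(100)]          # scores spread over 0 to 1
shifted = [0.5 + i / 200 for i in range(100)]     # production scores bunched in the top half
status = drift_action(psi(baseline, shifted))
```

Binning choices affect the score, so fix the bin edges from the training sample once and reuse them for every later comparison.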

To bring these best practices to life, consider developing a dashboard that represents the current state of your AI applications, health scores, and related performance alerts for when a model deviates from its purpose. In many cases, incorporating automated detection systems is useful for quickly responding to bias, drift, or performance issues.
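
As one example of automated bias detection, the demographic parity delta mentioned among the indicators above can be computed as the largest gap in positive-outcome rate between any two groups. This is a simplified sketch: the function name, the binary outcome encoding, the sample data, and the 0.1 audit threshold are assumptions, and demographic parity is only one of several fairness measures a team might track.

```python
def demographic_parity_delta(outcomes: list, groups: list) -> float:
    """Largest difference in positive-outcome rate between any two groups.

    outcomes: 1 for a positive decision (for example, approved), 0 otherwise.
    groups:   group label for each decision, aligned with outcomes.
    """
    totals, positives = {}, {}
    for outcome, group in zip(outcomes, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if outcome == 1 else 0)
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


AUDIT_THRESHOLD = 0.1  # hypothetical; the text leaves the threshold to policy

decisions = [1, 1, 0, 1, 1, 0, 0, 0]
labels = ["a", "a", "a", "a", "b", "b", "b", "b"]

delta = demographic_parity_delta(decisions, labels)   # 0.75 - 0.25 = 0.5
needs_audit = delta > AUDIT_THRESHOLD
```

Running the same check on each scoring batch, rather than once at launch, is what turns a one-time bias audit into ongoing monitoring.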

For BI and IT managers, it also helps to track semantic and metric governance directly: how many AI experiences (and dashboards) run on certified KPIs vs "homemade math," and how often schema changes trigger breakages. That's the difference between "one source of governed truth" and a weekly reconciliation ritual.

Common AI governance challenges

As organizations work toward AI governance, they will encounter challenges that may threaten the effectiveness and efficiency of their frameworks. Being aware of these challenges helps organizations prepare to address them proactively.

Complex technology creates understanding gaps. AI's opacity makes the technology difficult to understand and often intimidating for people who don't interact with it regularly. When people lack sufficient knowledge of the technology and how it's used, they may become more distrustful. This lack of understanding also makes it harder to predict the technology's potential implications.

Lack of accountability allows problems to persist. It can often be difficult to determine who owns the effects of AI applications. This lack of accountability can allow negative effects of AI to continue without being addressed. Codes of conduct, ethical guidelines, and regulations can help address this challenge.

Bias enters through multiple pathways. Bias can enter AI data sets easily, and algorithms can also produce biased outcomes. This is particularly dangerous when the technology spreads misinformation and disinformation. Bias can also affect decisions made by AI technology, leading to unfairness and injustice in society.

Innovation and compliance create tension. Developers of AI may feel hindered by regulations. Requirements and compliance standards can slow the pace of innovation. However, regulation is necessary to ensure AI functions appropriately in society.

Constant evolution outpaces policy. Keeping up with the evolution of AI technology is hard. Its effects can be unpredictable. This makes it difficult to develop policies and procedures quickly enough to address them proactively. For this reason, many AI policies and frameworks lag behind the technology and its function.

Collaboration constraints complicate coordination. Governing AI effectively requires working across multiple stakeholders, institutions, and countries. Coordinating efforts across these groups can be a logistical challenge.

Self-service analytics environments create enforcement gaps. In organizations where people across the business can access ungoverned datasets or create unvetted metrics that feed AI models, maintaining consistent governance becomes particularly difficult. BI managers and IT managers face pressure to loosen governance controls to reduce bottlenecks while simultaneously needing to ensure that AI-driven outputs are based on certified, compliant data. This organizational and tooling challenge requires teams to embed governance in workflows instead of adding it as an afterthought.

Tool sprawl creates governance blind spots. When different teams stitch together separate data tools, BI tools, and agent frameworks, governance often turns into a patchwork. IT leaders end up chasing audits across vendors, while engineers duplicate controls in every pipeline. A centralized control plane approach helps close those gaps by enforcing access policies and approvals consistently across workflows.

Governing generative AI and LLM-specific risks

Generative AI and large language models introduce governance challenges that traditional frameworks weren't designed to address. These risks require specific controls beyond general AI governance practices.

Prompt injection occurs when malicious inputs manipulate model behavior to bypass intended controls or produce harmful outputs. Mitigation requires prompt validation and sanitization, input filtering, and monitoring for anomalous prompt patterns.

Hallucinations happen when models produce confident but incorrect outputs that can propagate into business decisions and customer communications. Output grounding checks that verify generated content against authoritative sources help reduce this risk, as do confidence thresholds that flag uncertain outputs for human review.

Data exfiltration risks emerge when sensitive data leaks through ungoverned LLM integrations. Controls include DLP scanning before LLM calls, sanctioned endpoint restrictions, and monitoring for data patterns in model outputs.
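
A pre-call check along these lines might look like the sketch below, which combines a sanctioned endpoint allowlist with a simple DLP scan. The host name, the two regular expressions, and the function names are hypothetical, and pattern matching like this is far cruder than a production DLP service. No request is actually sent.

```python
import re
from urllib.parse import urlparse

SANCTIONED_HOSTS = {"llm.internal.example.com"}  # hypothetical allowlist

# Deliberately simple patterns for illustration; real DLP rules are broader.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact(text: str):
    """Replace sensitive matches with placeholders and report what was found."""
    findings = []
    for label, pattern in PATTERNS.items():
        if pattern.search(text):
            findings.append(label)
            text = pattern.sub(f"[{label.upper()}]", text)
    return text, findings


def prepare_llm_request(endpoint: str, prompt: str) -> dict:
    """Block unsanctioned endpoints, then scan and redact the prompt."""
    host = urlparse(endpoint).hostname
    if host not in SANCTIONED_HOSTS:
        raise PermissionError(f"endpoint {host!r} is not sanctioned")
    clean, findings = redact(prompt)
    return {"endpoint": endpoint, "prompt": clean, "dlp_findings": findings}


request = prepare_llm_request(
    "https://llm.internal.example.com/v1",
    "Contact jane@corp.com, SSN 123-45-6789",
)
```

Logging `dlp_findings` alongside each call also gives you the monitoring signal the controls above call for.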

Retrieval-augmented generation (RAG)-specific risks arise when systems access ungoverned document indexes. Retrieval index governance requires curated, access-controlled document sets with clear ownership and update procedures.
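
One way to picture retrieval index governance is to filter on governance metadata before any ranking happens. In this sketch, the `IndexedDoc` fields, the role names, and the sample documents are hypothetical, and naive keyword overlap stands in for the vector similarity search a real RAG system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedDoc:
    """Hypothetical index entry carrying ownership and access metadata."""
    doc_id: str
    text: str
    owner: str
    allowed_roles: frozenset
    certified: bool


def retrieve(query: str, index: list, role: str, k: int = 3) -> list:
    """Apply access and certification filters first, then rank what is left."""
    terms = set(query.lower().split())
    eligible = [d for d in index if d.certified and role in d.allowed_roles]
    scored = [(len(terms & set(d.text.lower().split())), d) for d in eligible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [doc for _, doc in ranked[:k]]


INDEX = [
    IndexedDoc("kb-1", "refund policy for enterprise customers",
               "support-ops", frozenset({"support"}), True),
    IndexedDoc("hr-7", "salary bands and refund of expenses",
               "hr", frozenset({"hr"}), True),
    IndexedDoc("kb-2", "draft refund policy changes",
               "support-ops", frozenset({"support"}), False),
]

hits = retrieve("refund policy", INDEX, role="support")
```

Filtering before ranking matters: a document the caller cannot see never reaches the model's context, so it cannot leak through a generated answer.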

For high-stakes outputs, human-in-the-loop review gates provide an additional control layer.
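
A review gate of this kind can be as simple as a routing rule. The sketch below holds every high-risk output for a person and also holds lower-risk outputs when confidence is below a threshold. The `AgentOutput` fields, the tier names, and the 0.8 threshold are assumptions, and it presumes your system exposes some usable confidence score.

```python
from dataclasses import dataclass


@dataclass
class AgentOutput:
    text: str
    confidence: float   # assumption: a score between 0 and 1 is available
    risk_tier: str      # hypothetical tiers: "low", "medium", "high"


def review_gate(output: AgentOutput, min_confidence: float = 0.8) -> str:
    """Decide whether an output is released or held for human review."""
    if output.risk_tier == "high":
        return "hold_for_human_review"   # high-stakes outputs always get a reviewer
    if output.confidence < min_confidence:
        return "hold_for_human_review"   # uncertain outputs are flagged as well
    return "release"


decision = review_gate(AgentOutput("Approve credit line increase", 0.97, "high"))
```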

AI governance regulations and compliance

As AI continues to advance and grow in adoption, governmental agencies are working to create regulations for its development and usage. These regulatory models and directives represent some of the most critical developments in AI governance.

The following table maps key regulations to implementation requirements:

Many organizations also align their AI governance program with security and privacy compliance standards that show up in customer due diligence and audits. Common examples include SOC 2 Type II, ISO 27001, HIPAA, the California Consumer Privacy Act (CCPA), PCI DSS, and FedRAMP. These are not AI laws, but they shape the evidence you'll need to prove that AI workflows handle data appropriately.

Regulations in North America

Supervision and Regulation Letter SR 11-7 is supervisory guidance on model risk management issued in 2011 by the Board of Governors of the Federal Reserve System, jointly with the Office of the Comptroller of the Currency. Under SR 11-7, banks are expected to apply firm-wide model risk management. They should also keep an inventory detailing which models are currently in use, under development, or recently retired. Furthermore, they should be able to demonstrate that these models serve their intended business purpose, are up to date, and have not drifted from their original purpose. Model documentation should be detailed enough that individuals unfamiliar with a model can comprehend its operations, limitations, and key assumptions.
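
The inventory expectation described above lends itself to a simple data structure. This sketch tracks each model's status and flags in-use models whose validation looks stale. The field names, the sample entries, and the 365-day validation window are assumptions for illustration, not requirements quoted from the guidance.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import Optional


class Status(Enum):
    IN_DEVELOPMENT = "in_development"
    IN_USE = "in_use"
    RETIRED = "retired"


@dataclass
class InventoryEntry:
    """Hypothetical inventory record for one model."""
    name: str
    owner: str
    business_purpose: str
    status: Status
    last_validated: Optional[date] = None


def needs_validation(entries: list, today: date, max_age_days: int = 365) -> list:
    """Names of in-use models never validated or validated too long ago."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        e.name for e in entries
        if e.status is Status.IN_USE
        and (e.last_validated is None or e.last_validated < cutoff)
    ]


inventory = [
    InventoryEntry("credit-score-v4", "risk", "Consumer credit decisions",
                   Status.IN_USE, date(2023, 11, 1)),
    InventoryEntry("fraud-v2", "fraud-ops", "Card fraud detection",
                   Status.IN_USE, date(2025, 2, 1)),
    InventoryEntry("credit-score-v3", "risk", "Superseded by v4", Status.RETIRED),
]
stale = needs_validation(inventory, today=date(2025, 6, 1))
```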

Canada's Directive on Automated Decision-Making is a policy instrument that guides the Canadian government in its use of AI for automated decision-making. This directive defines automated decision-making as fully or partially using algorithms and computer code to make decisions without human intervention. The directive's purpose is to ensure that the use of these systems is transparent, accountable, fair, and respectful of privacy as well as human rights.

Regulations in Europe and Asia-Pacific

The European approach to artificial intelligence, as outlined by the European Commission, centers on building trust in AI technology. Its anchor is the EU AI Act, the first comprehensive legal framework on AI worldwide, which entered into force on August 1, 2024. Supporting initiatives include the AI Continent Action Plan and the Apply AI Strategy. The European Commission has also developed guidelines for trustworthy AI development and usage, focused on fairness, accountability, transparency, and the need for human oversight. Programs such as Horizon Europe help drive the development of innovative AI technology that aligns with European values and standards.

Several countries in the Asia-Pacific region have developed frameworks for AI governance. One example is Singapore's approach to AI governance, which includes the Model AI Governance Framework and its extensions for Generative AI (2024) and Agentic AI (2026), focusing on explainability, transparency, fairness, and human-centric principles to ensure the responsible and ethical use of AI. Japan has also established guidelines around similar principles for AI research and development. Meanwhile, Australia has created an AI Ethics framework designed to guide the responsible development and use of AI technologies, emphasizing accountability, transparency, fairness, and people-centered values.

Just as AI technology continues to evolve, so must the frameworks used to govern it. Despite the challenges, investing in the development of ethics guidelines and principles is worthwhile to ensure the technology is used effectively, ethically, and responsibly.

Getting started with AI governance

You do not need to build effective AI governance from scratch or manage it as a separate compliance exercise. The most practical starting point for most organizations is identifying where governance is already embedded in their data and analytics infrastructure and extending those controls to AI workflows.

Organizations with mature data governance programs have a foundation to build on. Teams can often extend the access controls, data quality standards, and lineage tracking they already use for analytics to AI use cases. The goal is governance as a built-in capability rather than a bolt-on requirement.

For organizations earlier in their governance journey, starting with a minimal viable governance program makes sense. This might include documenting current AI use cases, assigning ownership for the highest-risk applications, establishing basic acceptable use policies, and implementing monitoring for production models. From this foundation, governance capabilities can expand as AI deployment scales.

If you're evaluating platforms to support this work, look for a setup that reduces governance friction: a centralized place to manage AI agents, enforce access policies, and keep audit trails and lineage connected from data to BI to AI outputs. That's also where features like governed metric definitions (a semantic layer), dataset certification, and human-in-the-loop approvals tend to pay off quickly.

Are you interested in seeing how big data and AI can improve your business? Discover how Domo.AI combines AI innovations with our existing BI platform for powerful analysis and meaningful business insights.

Within Domo, teams often use Agent Catalyst to centralize agent management and keep governance and security controls consistent across AI workflows, including human-in-the-loop review for higher-risk decisions. Data teams pair that with Domo BI's semantic layer for governed metrics and Magic Transformation for dataset certification, lineage audit trails, and row- and column-level access controls that persist through transformation layers feeding AI workflows. Agent Catalyst can also connect agents to governed Domo datasets, FileSets, and unstructured documents using retrieval-augmented generation (RAG), so access controls apply at the point of AI consumption.

For line-of-business teams that want structure, guided programs like AgentGuide and Executive Transformation Workshops can help you map use cases to governance requirements early, before an "oops" moment turns into an incident report.