AI Data Integration: A Beginner's Guide to Data Management

What’s worse: a well-established organization with decades' worth of data trapped in manual reports and messy spreadsheets, or a burgeoning startup that prioritizes speed at all costs, leading every department to onboard its own data collection system into a tangled, unruly web of applications?

You are welcome to pick your poison, but rest assured data experts have probably given themselves concussions from banging their heads against the walls trying to wrestle with both scenarios. Why do they put themselves through that pain? Because establishing one complete system that encompasses all of your data is the foundation for accurate and actionable business intelligence.

Luckily, AI could be the remedy you need to save you from that headache. AI is rewriting how companies connect, transform, and act on data, turning what used to take weeks into something that happens in real time.

But don’t put away the Advil just yet. According to a 2025 report from the IBM Institute for Business Value (IBV), more than half of executives surveyed said that challenges integrating AI infrastructure with their legacy systems have resulted in their businesses falling short of their goals.

What can you do to unlock the efficiencies that AI data integration promises and remove those pain points? Whether you’re a business leader exploring how to connect your disparate data sources or a new analyst navigating multiple data platforms, this guide will show you how AI can simplify the complexity of data integration to get your data working better for you.

Key takeaways

Here are the main points to keep in mind:

- AI data integration automates the process of connecting, transforming, and managing data from multiple sources, reducing manual effort and errors while enabling more timely, more confident decisions.

- Three main types exist: AI-assisted extract-transform-load (ETL) pipelines (automated mapping within traditional pipelines), AI-native integration (full pipeline orchestration), and large language model (LLM)-based unstructured integration (extracting structured data from documents and images).

- At scale, AI data integration is as much about governance as automation: you need controlled access, clear lineage, and auditability so only authorized, trusted data feeds analytics, AI models, and AI agents.

- Successful implementation requires addressing data quality foundations, choosing transparent AI tools with strong governance features, and planning for iteration rather than perfection.

What is AI data integration?

Data is everywhere. Making it useful? That is where most businesses get stuck.

Between scattered systems, messy spreadsheets, and manual reporting, it is no surprise that data integration often feels like a full-time job. For many teams, it is. But there's a shift underway. AI is rewriting how we connect, transform, and act on data. What used to take weeks now happens in real time.

At its core, data integration brings data from different sources together into a single, cohesive view. Traditionally, this meant writing scripts, maintaining pipelines, and checking for errors (lots of manual effort). AI changes that equation. The process becomes more efficient, more adaptive, and less prone to human error.

AI data integration uses technologies like machine learning (ML) and natural language processing (NLP) to:

- Automate repetitive integration tasks

- Identify data relationships and inconsistencies

- Recommend data mappings

- Monitor integration health and optimize it in real time

Here's a practical way to think about it: AI data integration isn't only about getting data into a warehouse or dashboard. It's also about getting governed, approved data into the things that depend on it (like production AI models and AI agents) without spinning up a new custom pipeline every time someone has a new idea.

Not all AI data integration works the same way, though. Understanding the spectrum helps you evaluate where your current tools fall and what capabilities you might need.

The three main types of AI data integration include:

- AI-assisted ETL: Traditional extract-transform-load pipelines enhanced with AI capabilities like automated schema mapping and quality checks. Your existing tools might already offer this. Think automated field suggestions in Fivetran, which can help with mapping, though teams may still want broader governed workflow support in a platform like Domo.

- AI-native integration: Platforms where AI orchestrates the full pipeline from source discovery through monitoring. These systems learn from your data patterns and adapt workflows without manual reconfiguration.

- LLM-based unstructured integration: Large language models that extract structured data from documents, emails, images, and other unstructured sources. Think turning a PDF invoice into database rows or parsing customer feedback into categorized insights.

Instead of spending hours wrangling data from spreadsheets, application programming interfaces (APIs), and databases, AI helps your systems do that work automatically and intelligently. Platforms like Domo AI take this further by embedding these capabilities directly into no-code/low-code data pipelines, so teams can integrate and prepare data without deep technical knowledge.

For teams dealing with scale (think hundreds of systems today and 1,000+ potential sources over time), connector coverage and automated ingestion start to matter as much as the AI features themselves.

Common challenges with traditional data integration

Before exploring how AI transforms integration, it helps to understand why traditional approaches struggle.

Traditional data integration typically involves manual scripting, rigid pipelines, and constant maintenance. When source systems change, someone has to notice, diagnose, and fix the break. A new field appears. A format shifts. An API updates. Multiply that across dozens or hundreds of data sources. You've got a recipe for perpetual firefighting.

Technical debt sneaks in here too. Every one-off integration built for a single team or project adds another thing to own, monitor, and patch. When the organization starts pushing for AI projects and agent workflows, that sprawl can turn "quick prototype" into "permanent pipeline" overnight.

Data silos and fragmented systems

Data trapped in disconnected tools creates more than technical inconvenience. It undermines decision-making at every level. When marketing pulls numbers from one system and finance pulls from another, leadership meetings become debates about whose data is right rather than discussions about what to do next.

Fragmented systems mean fragmented visibility. Sales can't see what support knows about a customer. Operations can't anticipate what sales has promised. Inconsistent reporting. Conflicting metrics. Decisions made on incomplete information.

Manual processes and resource drain

Every hour spent on manual mapping, transformation, and error correction is an hour not spent building the AI infrastructure your organization needs. Traditional integration demands constant human attention: writing transformation logic, fixing broken pipelines, reconciling mismatched records, and validating outputs.

The cost is not just time. It's the errors that slip through. Typos in formulas. Incorrect field mappings. Missed updates when source systems change. These mistakes compound, creating data quality issues that erode trust in reports and dashboards. Your most skilled data engineers end up as pipeline maintenance technicians rather than architects of your data strategy.

Scalability and legacy system limitations

As businesses grow, traditional integration approaches buckle. Adding a new data source means writing new scripts, testing new connections, and hoping nothing breaks downstream. Expanding to a new region or acquiring a company means months of integration work.

Legacy systems compound the problem. Many organizations run critical processes on older platforms that weren't designed for modern cloud architectures. Connecting these systems to AI-ready pipelines without ripping and replacing the entire infrastructure requires careful orchestration (something traditional integration handles poorly).

How AI transforms the data integration process

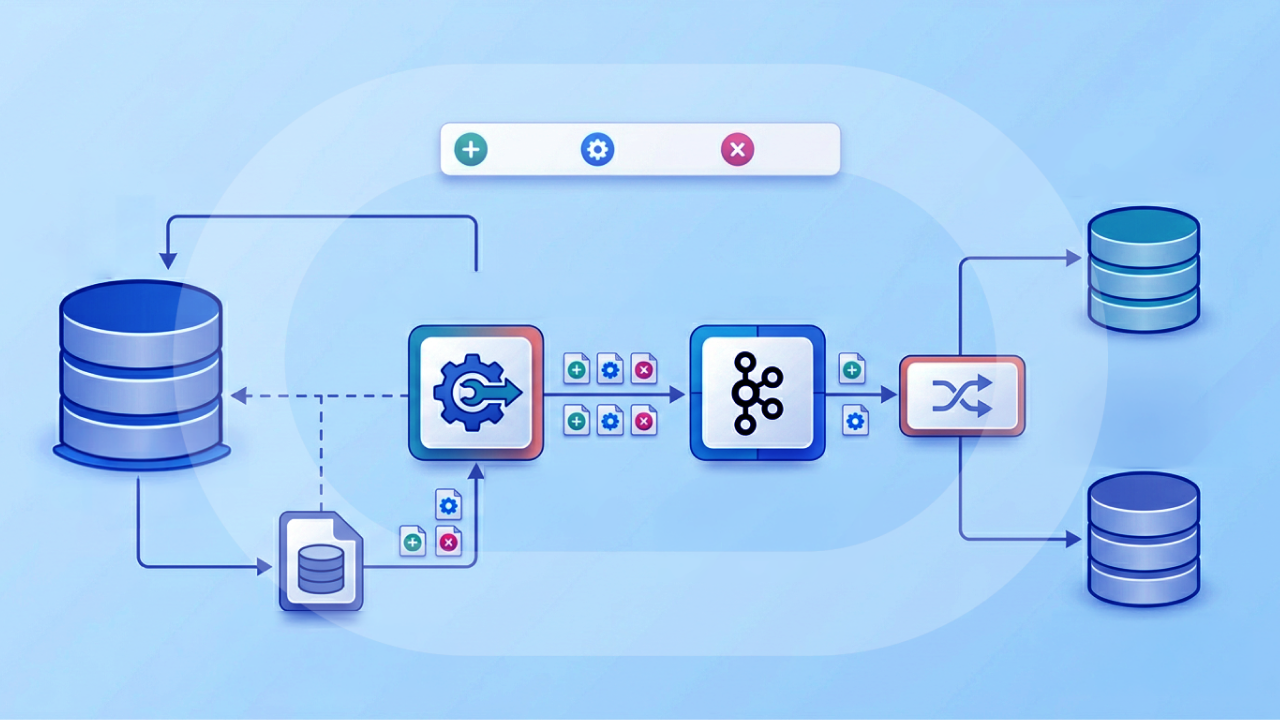

AI doesn't just speed up traditional integration. It fundamentally changes how data flows through your organization. Let's walk through the key stages of AI-enabled data integration and see where intelligence adds value at each step.

Think of this as a sequential workflow with checkpoints. At each stage, AI handles the heavy lifting while you maintain control over critical decisions.

Data discovery and source identification

Before any integration happens, AI scans your systems and identifies what data is available and where it lives across tools like Salesforce, NetSuite, Google Analytics, or even comma-separated value (CSV) files. It reads metadata, identifies column types, and surfaces relationships between systems.

The validation checkpoint here is confirming that AI has correctly identified your priority sources and their update frequencies. Missing permissions that prevent full discovery or misidentified data types in poorly documented systems are failure modes you will encounter. The output is a validated source inventory with metadata profiles for each system.

Smart mapping and schema matching

Instead of mapping every field manually, AI recommends matches between datasets, even when field names don't line up exactly. These recommendations are based on past integrations, data types, and learned patterns.

Modern AI mapping goes beyond simple name matching. ML models assign confidence scores to each recommendation. A 95 percent match on "customer_email" to "email_address" might auto-apply, while a 72 percent match on "region_code" to "territory" gets queued for human review. This confidence threshold approach prevents bad mappings from propagating while still automating the obvious matches. And honestly, accepting all AI-suggested mappings without reviewing the confidence scores is where I've seen teams introduce subtle errors that compound downstream.
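To make the confidence-threshold idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular platform scores mappings: the standard library's `difflib.SequenceMatcher` stands in for a trained model, and the field names, the `AUTO_APPLY_THRESHOLD` value, and the `suggest_mappings` helper are all hypothetical.

```python
from difflib import SequenceMatcher

# Assumed cutoff for illustration; real platforms tune this per dataset.
AUTO_APPLY_THRESHOLD = 0.95


def similarity(a: str, b: str) -> float:
    """String similarity in [0, 1]; a stand-in for an ML confidence score."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def suggest_mappings(source_fields, target_fields, threshold=AUTO_APPLY_THRESHOLD):
    """Pair each source field with its best target and route it by confidence."""
    auto_applied, needs_review = [], []
    for src in source_fields:
        best = max(target_fields, key=lambda tgt: similarity(src, tgt))
        score = similarity(src, best)
        bucket = auto_applied if score >= threshold else needs_review
        bucket.append((src, best, round(score, 2)))
    return auto_applied, needs_review


auto_applied, needs_review = suggest_mappings(
    ["email_address", "region_code"],
    ["email_address", "territory", "created_at"],
)
print("auto-applied:", auto_applied)
print("needs review:", needs_review)
```

The exact match clears the threshold and is applied automatically, while the weaker "region_code" candidate lands in the review queue. Plain string similarity cannot see that two differently named fields mean the same thing, which is where learned models and past integrations earn their keep.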

This feature is commonly found in modern integration tools that prioritize ease of use and scale, like Domo's connector framework.

Automated data transformation

Once fields are mapped, AI takes over data transformation and begins formatting. It standardizes currencies, reformats dates, removes duplicates, and even flags outliers. Over time, systems learn your preferences, enhancing workflows with every pass.

Change data capture (CDC) plays a critical role here, tracking changes at the source and propagating only what's new or modified rather than reprocessing entire datasets.
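A simple way to picture CDC is a "watermark": remember the latest change you have already synced, then pull only rows modified after it. The sketch below assumes each source row carries an `updated_at` timestamp; the `capture_changes` helper and the sample rows are hypothetical. Production CDC tools often read the database's transaction log instead, which also captures deletes that this timestamp approach misses.

```python
from datetime import datetime


def capture_changes(source_rows, last_synced_at):
    """Return rows modified after the watermark, plus the new watermark."""
    changed = [row for row in source_rows if row["updated_at"] > last_synced_at]
    new_watermark = max(
        (row["updated_at"] for row in changed), default=last_synced_at
    )
    return changed, new_watermark


# Hypothetical source table with a last-modified timestamp on every row.
source_rows = [
    {"id": 1, "status": "active", "updated_at": datetime(2025, 1, 1, 9, 0)},
    {"id": 2, "status": "churned", "updated_at": datetime(2025, 1, 2, 14, 30)},
    {"id": 3, "status": "active", "updated_at": datetime(2025, 1, 3, 8, 15)},
]

# The previous sync finished at midnight on January 2.
changed, watermark = capture_changes(source_rows, datetime(2025, 1, 2, 0, 0))
print([row["id"] for row in changed])  # rows 2 and 3 changed since the last sync
print(watermark)
```

Running the function again with the returned watermark yields nothing new, which is the point: unchanged data is never reprocessed.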

Workflow orchestration and scheduling

AI coordinates when and how your data flows. It schedules syncs, reacts to upstream changes, and alerts you when something breaks. In platforms like Domo, you can visualize this orchestration in Domo Workflows and see exactly when jobs succeed or fail.

Pipeline orchestration delivers concrete outcomes: scheduled pipeline runs that execute without intervention, automatic failure handling that retries or escalates based on error type, and automated distribution that pushes updated dashboards and alerts to the right people at the right time.
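"Retries or escalates based on error type" can be sketched in a few lines. This is a simplified illustration, not a real orchestrator: the `TransientError` and `SchemaError` classes, the `run_with_failure_handling` helper, and the two sample jobs are hypothetical, and the delay defaults to zero so the example runs instantly.

```python
import time


class TransientError(Exception):
    """Hypothetical error for temporary problems, such as a timeout."""


class SchemaError(Exception):
    """Hypothetical error for problems that a retry cannot fix."""


def run_with_failure_handling(job, max_retries=3, base_delay=0.0):
    """Retry transient failures with exponential backoff; escalate the rest."""
    for attempt in range(1, max_retries + 1):
        try:
            return {"status": "succeeded", "attempts": attempt, "result": job()}
        except TransientError:
            time.sleep(base_delay * 2 ** (attempt - 1))
        except SchemaError as exc:
            return {"status": "escalated", "attempts": attempt, "reason": str(exc)}
    return {"status": "escalated", "attempts": max_retries, "reason": "retries exhausted"}


calls = {"count": 0}


def flaky_sync():
    """Fails twice with a timeout, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("source API timed out")
    return "42 rows loaded"


def broken_sync():
    """Fails in a way that needs a person to look at it."""
    raise SchemaError("column 'region_code' no longer exists")


flaky_result = run_with_failure_handling(flaky_sync)
broken_result = run_with_failure_handling(broken_sync)
print(flaky_result)
print(broken_result)
```

The timeout is retried quietly until it succeeds on the third attempt, while the schema problem goes straight to a human because trying again would not help.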

It also matters for AI projects. When your organization starts building AI agents and production models, orchestration needs to extend to the last mile: getting approved datasets into those workflows consistently, with the right permissions and controls.

Monitoring and anomaly detection

AI watches for issues like missing fields, data drift, or pipeline breaks and alerts you in real time. For example, people can set up alerts to be notified instantly when critical thresholds are hit or when AI identifies an outlier that deserves human attention.

This monitoring represents a fundamental shift from reactive reporting to proactive intelligence. Instead of discovering stale data during a board meeting, you get notified the moment something goes wrong. Instead of manually checking that yesterday's data loaded correctly, AI validates completeness, freshness, and distribution automatically.

For AI workloads, monitoring also protects model and agent reliability. If a key source changes (like a CRM field rename) and that change quietly breaks an input feature, model accuracy can degrade without anyone noticing.

Key AI techniques powering data integration

AI data integration now spans both structured and unstructured data, and the techniques below reflect that expanded scope. Understanding these technologies helps you evaluate platforms and set realistic expectations for what AI can handle.

Machine learning for pattern recognition

Machine learning identifies relationships in your data that would take humans hours to discover. ML models analyze historical integrations to recommend field mappings, learning from corrections to improve over time. When you override a suggested mapping, the system remembers and adjusts future recommendations.

Pattern recognition also powers anomaly detection. ML establishes baselines for normal data behavior (typical row counts, value distributions, update frequencies) and flags deviations automatically. A sudden drop in daily transactions or an unusual spike in null values triggers investigation before bad data reaches your dashboards.
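Here is a minimal sketch of the baseline idea, assuming you track one number per day (row count in this case). It uses a plain z-score from Python's `statistics` module as a stand-in for a learned model; the `is_anomalous` helper, the threshold of 3, and the sample counts are assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history, todays_value, z_threshold=3.0):
    """Flag a value that sits far outside the historical baseline."""
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # A perfectly flat history means any change at all is unusual.
        return todays_value != baseline
    return abs(todays_value - baseline) / spread > z_threshold


# Hypothetical daily row counts for the past week.
daily_row_counts = [10_120, 9_980, 10_050, 10_200, 9_900, 10_075, 10_010]

print(is_anomalous(daily_row_counts, 10_090))  # an ordinary day
print(is_anomalous(daily_row_counts, 4_300))   # a sudden drop worth investigating
```

The same check applies to null rates, value distributions, or update frequencies. Real systems typically also account for seasonality, such as weekends and month-end spikes, which a single mean and standard deviation cannot capture.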

Natural language processing for data understanding

NLP enables AI to interpret context and meaning, not just structure. This matters when integrating data with inconsistent naming conventions or when you need to extract insights from text fields like customer feedback, support tickets, or product descriptions.

NLP also powers conversational interfaces that let non-technical people query data in plain language. Instead of writing structured query language (SQL), a marketing manager can ask "show me campaign performance by channel for the last quarter" and get results.

For unstructured sources, NLP combined with optical character recognition (OCR) extracts structured data from documents. An invoice PDF becomes a row in your accounts payable table.

LLM-based extraction for unstructured data

Large language models take unstructured data integration further, handling complex documents that traditional parsing cannot manage. The basic pattern works like this: ingest the document through OCR or document parsing, use an LLM with a structured output schema to extract specific fields, validate the extraction against expected formats and confidence thresholds, then load the structured result into your warehouse.

Consider invoice processing as a concrete example. A PDF arrives with varying layouts across vendors. The LLM identifies and extracts vendor name, invoice date, line item descriptions, quantities, unit prices, and totals, even when the format differs from previous invoices. Validation rules check that totals match line item sums and that dates fall within expected ranges.

The failure modes matter here. LLMs can hallucinate field values when documents are ambiguous or OCR quality degrades. Schema drift occurs when document formats change and extraction rules need updating. Successful implementations include confidence scoring that routes low-confidence extractions to human review rather than auto-accepting potentially incorrect data.
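The validation step is where hallucinated values get caught, so it is worth sketching. The example below assumes an LLM has already returned a structured result along with a confidence score; no model is called here. The dictionary shape, the `validate_invoice` helper, and the 0.90 threshold are all hypothetical.

```python
from datetime import date

# Assumed cutoff for illustration; tune it against your own review data.
CONFIDENCE_THRESHOLD = 0.90


def validate_invoice(extraction, today, max_age_days=365):
    """Check an extracted invoice and decide whether it is safe to load."""
    issues = []

    line_total = sum(
        item["quantity"] * item["unit_price"] for item in extraction["line_items"]
    )
    if abs(line_total - extraction["total"]) > 0.01:
        issues.append(
            f"total {extraction['total']} does not match line items {line_total:.2f}"
        )

    age_days = (today - extraction["invoice_date"]).days
    if not 0 <= age_days <= max_age_days:
        issues.append("invoice date outside expected range")

    if extraction["confidence"] < CONFIDENCE_THRESHOLD:
        issues.append("low extraction confidence")

    return ("load" if not issues else "human_review"), issues


# Hypothetical extraction results, shaped like a structured LLM response.
clean = {
    "vendor": "Example Supply Co.",
    "invoice_date": date(2025, 3, 1),
    "line_items": [
        {"description": "Widget", "quantity": 2, "unit_price": 19.99},
        {"description": "Shipping", "quantity": 1, "unit_price": 5.00},
    ],
    "total": 44.98,
    "confidence": 0.97,
}
suspect = dict(clean, total=144.98, confidence=0.71)

print(validate_invoice(clean, today=date(2025, 3, 15)))
print(validate_invoice(suspect, today=date(2025, 3, 15)))
```

The clean invoice loads automatically. The suspect one fails two checks and is routed to a person instead of quietly landing in your accounts payable table.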

Intelligent transformation and real-time processing

Beyond extraction, AI handles the ongoing work of keeping data clean and current. Intelligent transformation learns your formatting preferences: how you want dates displayed, which currency to standardize on, how to handle edge cases in address parsing.

Real-time processing capabilities mean transformations happen as data arrives rather than in scheduled batches.

Benefits of AI-powered data integration

When you automate your data workflows with AI, you're not just improving efficiency. You're laying the groundwork for more informed decisions, quicker reactions, and a more connected business.

Here are the key benefits organizations see when they adopt AI-driven integration tools:

Time to insights

AI delivers clean, unified data in real time, shifting your organization from scheduled, manual report pulls to always-current outputs that surface insights before someone has to ask for them.

Teams don't have to wait days or weeks for manually stitched-together reports. A sales leader can check pipeline health during a leadership meeting with confidence, using live dashboards that pull from multiple systems. The competitive advantage isn't just speed. It's the ability to act on information while it's still relevant.

Greater accuracy and consistency

Manually integrating data leaves plenty of room for human error, especially when dealing with messy formats, duplicate entries, or version conflicts. AI helps eliminate those risks by automatically detecting and correcting inconsistencies, ensuring that what you're analyzing is trustworthy.

Cleaner data leads to better forecasting, fewer rework cycles, and more confident decisions.

Reduced manual workload

Integration tasks like mapping fields, standardizing formats, or creating workflows often require hours of effort from technical teams. AI lightens the load by recommending mappings, building transformations, and monitoring data health behind the scenes.

Beyond time savings, AI eliminates the errors that manual work introduces. Typos in formulas. Incorrect field mappings. Missed updates when source systems change. This allows analysts and engineers to focus on strategic work like building AI models and designing data architecture rather than maintaining pipelines.

Scalability for growth

As businesses grow, so does their data. More customers. More platforms. More frequent updates. AI-enabled systems adapt quickly, learning from patterns and scaling workflows without having to rebuild everything manually.

Whether you're onboarding a new tool, expanding into a new region, or integrating an acquisition, AI makes the transition smoother.

Improved collaboration across teams

When data is accessible, current, and easy to understand, teams across the business can align faster. AI-powered integration centralizes information from multiple systems, reducing siloed reports and inconsistent metrics.

Marketing, sales, finance, and operations can all speak the same language.

How AI data integration reduces manual reporting

One of the most immediate benefits organizations experience is the elimination of recurring manual reporting work. The traditional reporting cycle (collect data from multiple sources, clean and reconcile it, build the report, distribute it, repeat) consumes enormous time and introduces errors at every step.

AI transforms this cycle by automating each stage. Automated ingestion via APIs and CDC keeps source data current without manual exports. Intelligent cleaning handles anomalies, duplicates, and format inconsistencies automatically. Schema matching and entity resolution unify records across systems into a single customer or product view.

The output side changes too. Dashboards refresh automatically on schedules you define or in real time as data changes. Threshold-based alerts notify stakeholders when metrics cross important boundaries. Some platforms even generate narrative summaries that explain what changed and why it matters.

What AI data integration could look like in action

To help bring these concepts to life, here are a few hypothetical scenarios that illustrate how AI-powered data integration could solve common challenges across industries.

Retail: inventory and sales insights

Imagine a regional apparel brand struggling to sync sales from in-store point-of-sale (POS) systems with online orders. The disconnect often leads to overstock in some stores and stockouts in others. With AI-powered data integration, this retailer could unify e-commerce, inventory, and marketing data into a single dashboard. The AI engine would help map SKUs across systems, predict restock needs, and alert store managers before items sell out. In a scenario like this, a 15 to 20 percent reduction in inventory waste would represent significant cost savings for a retailer operating on thin margins, where excess inventory ties up capital and stockouts mean lost sales.

Healthcare: unified patient journeys

Consider a healthcare provider with multiple locations where data lives in separate electronic health record (EHR) systems, billing platforms, and lab tools. Integrating this data manually is time-consuming and prone to error. By using AI-assisted integration, the provider could automatically consolidate patient data into unified profiles, flagging billing gaps, appointment follow-ups, or missing lab results. In this scenario, a 40 percent reduction in reconciliation time would free administrative staff to focus on patient-facing work rather than chasing down discrepancies across systems.

Marketing: campaigns with real-time feedback

Think about a mid-size business-to-business (B2B) company running campaigns across Google Ads, LinkedIn, and a customer relationship management (CRM) system like HubSpot. Performance data is siloed. By the time it's reviewed, the campaign is already over.

With AI-enabled integration, marketing teams could merge campaign spend, lead conversion, and engagement data into one real-time view. AI could flag underperforming segments, suggest timing adjustments, and even predict which channels are most likely to generate sales-qualified leads (SQLs).

Manufacturing: production forecasting

A national parts manufacturer might face inconsistent supplier lead times and fluctuating demand from retailers. Without integrated systems, it's hard to anticipate production delays or optimize procurement. AI-powered integration could combine enterprise resource planning (ERP), sales, and logistics data into a cohesive stream (learning from patterns over time). It could then surface when a shipment might be late or when excess inventory is building up, helping the business avoid unnecessary costs and stay ahead of demand.

Small business: more efficient service scheduling

Picture a fast-growing heating, ventilation, and air conditioning (HVAC) service company juggling technician schedules, customer appointments, and last-minute cancellations. By integrating scheduling software, job history, and feedback forms using AI, the owner could gain insights about when demand spikes, which areas take the most time, or how reschedules affect revenue. AI can recommend optimal daily routes or staffing levels, helping the business run leaner while keeping customer satisfaction high.

Enterprise: governed data-to-agent workflows

Now picture a large enterprise rolling out AI agents across functions. Finance wants an agent that answers close questions. Sales wants an agent that summarizes account risk. Support wants an agent that drafts responses. The catch? Each agent is only as useful as the data it can safely access.

With governed data-to-agent integration, teams can connect agents directly to approved datasets and unstructured files (like knowledge base articles or policy documents) using retrieval-augmented generation (RAG). RAG is a pattern where the agent retrieves relevant facts from your governed data and documents, then uses that context to respond.
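The retrieve-then-respond pattern is easier to see in code. The sketch below covers only the retrieval and prompt-building steps and does not call a language model. It makes two loud assumptions: simple word overlap stands in for the embedding-based search that real RAG systems typically use, and the `approved_for` field is a hypothetical way to record which agents may see which documents.

```python
def tokenize(text):
    """Lowercase a string and split it into a set of words."""
    return set(text.lower().split())


def retrieve(question, documents, agent_name, top_k=2):
    """Rank the documents this agent is approved to see by word overlap."""
    allowed = [doc for doc in documents if agent_name in doc["approved_for"]]
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc["text"])), doc) for doc in allowed]
    relevant = [pair for pair in scored if pair[0] > 0]
    ranked = sorted(relevant, key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in ranked[:top_k]]


def build_prompt(question, context_docs):
    """Assemble the text that would be sent to a language model."""
    context = "\n".join(f"- {doc['text']}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical governed content with per-agent approvals.
documents = [
    {"id": "refund-policy",
     "text": "Refunds are issued within 14 days of an approved return request",
     "approved_for": {"support_agent"}},
    {"id": "close-calendar",
     "text": "The finance close calendar locks journal entries on the third business day",
     "approved_for": {"finance_agent"}},
    {"id": "return-shipping",
     "text": "Customers pay return shipping unless the item arrived damaged",
     "approved_for": {"support_agent"}},
]

question = "How many days do refunds take after a return request"
support_docs = retrieve(question, documents, "support_agent")
print([doc["id"] for doc in support_docs])
print(build_prompt(question, support_docs))
```

Notice that the permission filter runs before any ranking. The support agent never sees the finance document, no matter how the question is phrased, which is the "governed" part of governed data-to-agent integration.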

Keep these challenges in mind

As powerful as AI is, it's not a plug-and-play silver bullet.

Data quality remains foundational

AI can't fix everything. If your source data is messy (inconsistent formats, duplicate records, missing values), those issues will still require attention. AI amplifies what you give it, which means garbage in still produces garbage out. Just faster.

Effective data quality engineering focuses on several dimensions: freshness (how current is the data), completeness (what percentage of expected fields are populated), accuracy (do values conform to expected formats and ranges), consistency (do related records match across systems), and uniqueness (are duplicates identified and resolved).
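Three of these dimensions (completeness, uniqueness, and freshness) are easy to measure directly, as the sketch below shows. Accuracy and consistency need rules specific to your business, so they are left out. The `quality_report` helper, the field names, and the one-day freshness window are assumptions for illustration.

```python
from datetime import datetime, timedelta


def quality_report(rows, required_fields, key_field, now, max_age=timedelta(days=1)):
    """Score a dataset on completeness, uniqueness, and freshness."""
    total_cells = len(rows) * len(required_fields)
    filled = sum(
        1 for row in rows for field in required_fields
        if row.get(field) not in (None, "")
    )
    keys = [row[key_field] for row in rows]
    newest = max(row["updated_at"] for row in rows)
    return {
        "completeness": filled / total_cells,   # share of required cells populated
        "uniqueness": len(set(keys)) / len(keys),  # share of distinct key values
        "is_fresh": now - newest <= max_age,    # newest record within the window
    }


# Hypothetical customer rows with a missing email, a blank country, and a duplicate ID.
rows = [
    {"customer_id": 1, "email": "a@example.com", "country": "US",
     "updated_at": datetime(2025, 1, 9, 8, 0)},
    {"customer_id": 2, "email": None, "country": "CA",
     "updated_at": datetime(2025, 1, 9, 9, 0)},
    {"customer_id": 2, "email": "b@example.com", "country": "CA",
     "updated_at": datetime(2025, 1, 10, 6, 0)},
    {"customer_id": 3, "email": "c@example.com", "country": "",
     "updated_at": datetime(2025, 1, 8, 7, 0)},
]

report = quality_report(
    rows, ["email", "country"], "customer_id", now=datetime(2025, 1, 10, 12, 0)
)
print(report)
```

Tracking scores like these per source, per day, gives you a baseline to improve against before you hand the data to any AI tooling.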

The goal is creating a golden record, a single, authoritative version of each entity (customer, product, transaction) that resolves conflicts across sources. AI helps by flagging anomalies and suggesting resolutions, but human judgment determines which source wins when records conflict.
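One common way to build a golden record is source precedence: people decide which system wins, and code applies that decision consistently while recording every disagreement. The sketch below assumes a hypothetical ordering (`SOURCE_PRIORITY`) and a hypothetical `build_golden_record` helper; it is one simple strategy among several.

```python
# Assumed precedence, decided by people: earlier sources win conflicts.
SOURCE_PRIORITY = ["crm", "billing", "support"]


def build_golden_record(records_by_source, priority=SOURCE_PRIORITY):
    """Merge one entity's records, keeping the highest-priority value per field."""
    golden, conflicts = {}, []
    for source in priority:
        for field, value in records_by_source.get(source, {}).items():
            if value in (None, ""):
                continue  # an empty value never overrides or conflicts
            if field not in golden:
                golden[field] = value
            elif golden[field] != value:
                conflicts.append((field, source, value))  # keep for human review
    return golden, conflicts


# Hypothetical records for the same customer in three systems.
records = {
    "crm": {"name": "Acme Corp", "email": "ops@acme.example", "phone": None},
    "billing": {"name": "ACME Corporation", "phone": "555-0100"},
    "support": {"email": "ops@acme.example"},
}

golden, conflicts = build_golden_record(records)
print(golden)
print(conflicts)
```

The CRM name wins, billing fills in the missing phone number, and the name mismatch is logged rather than silently discarded so someone can confirm the precedence rule is still right.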

Start by auditing your most important systems and cleaning up basic errors like naming conventions or null values before expecting AI to work magic.

Transparency and explainability in AI decisions

AI models can make integration decisions behind the scenes, but that does not mean those decisions are always clear. When an AI system maps "region_code" to "sales_territory," you need to understand why, especially when that mapping affects financial reporting or compliance.

Explainability requires concrete practices: logging all AI-driven mapping decisions with confidence scores and rationale, maintaining versioned transformation rules that can be audited and rolled back, implementing lineage tracking that shows exactly how each output field was derived from source data, and creating approval workflows for high-stakes transformations.

Choose platforms that offer this transparency and let you review or override AI-driven decisions when needed.

Avoiding over-reliance on automation

It's tempting to automate everything. But some integration choices (like how to prioritize conflicting data or which source should be authoritative) still need a human touch.

A useful framework: auto-accept AI suggestions when confidence exceeds 95 percent, the data is low-risk, and the decision is reversible. Require human review when confidence falls below 90 percent, PII is involved, regulatory implications exist, or the transformation affects business-critical metrics.
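That framework translates into a small routing function. In this sketch, the `Suggestion` class and the `route` function are hypothetical names, and "low-risk" is modeled as no PII, no regulatory implications, and no business-critical metrics. One assumption to flag: the framework does not say what happens between 90 and 95 percent confidence, so this version sends that band to human review to be safe.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    """An AI-proposed mapping or transformation awaiting a decision."""
    confidence: float
    contains_pii: bool = False
    regulated: bool = False
    business_critical: bool = False
    reversible: bool = True


def route(suggestion: Suggestion) -> str:
    """Decide whether a suggestion can be applied without a person."""
    high_stakes = (
        suggestion.contains_pii
        or suggestion.regulated
        or suggestion.business_critical
    )
    if high_stakes or suggestion.confidence < 0.90:
        return "human_review"
    if suggestion.confidence > 0.95 and suggestion.reversible:
        return "auto_accept"
    # Assumption: the unspecified 90-95 percent band defaults to review.
    return "human_review"


print(route(Suggestion(confidence=0.97)))
print(route(Suggestion(confidence=0.97, contains_pii=True)))
print(route(Suggestion(confidence=0.92)))
```

High confidence alone is never enough here: a 97 percent suggestion that touches PII still goes to a person.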

Don't lose sight of the "why" behind your data.

Security and governance requirements

Sensitive data requires safeguards that go beyond general best practices. AI-driven pipelines that move, transform, and enrich data create new exposure points that traditional security models may not address.

Specific governance mechanisms to evaluate include: personally identifiable information (PII) handling (does the platform support redaction, tokenization, or on-premise inference for sensitive fields), access controls (can you restrict who sees which data at the row and column level), audit trails (are all data movements and transformations logged for compliance review), retention policies (can you automatically purge data according to regulatory requirements), and cross-border considerations (does the platform support data residency requirements for the General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), or industry-specific regulations).
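To show what redaction and tokenization mean in practice, here is a small sketch using only Python's standard library. It is illustrative rather than compliance-grade: the `SECRET_KEY` is a placeholder that would live in a secrets manager, the email pattern is deliberately simple, and the `tokenize_value` and `redact_emails` helpers are hypothetical names.

```python
import hashlib
import hmac
import re

# Placeholder only; in practice the key comes from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"

# Deliberately simple pattern for illustration; it will miss unusual addresses.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def tokenize_value(value: str) -> str:
    """Replace a sensitive value with a stable, keyed, non-reversible token."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"tok_{digest[:16]}"


def redact_emails(text: str) -> str:
    """Mask email addresses in free text before it reaches a model or report."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


print(tokenize_value("jane.doe@example.com"))
print(redact_emails("Contact jane.doe@example.com for help"))
```

Because the same input always produces the same token, you can still join records across systems on the tokenized column without exposing the underlying value. Redaction, by contrast, throws the value away, which suits free-text fields headed for an AI model.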

If you're feeding AI models or AI agents, governance needs to cover that path too. Look for guardrails like human-in-the-loop review for sensitive actions, plus clear controls for which governed datasets an agent can reference.

Integration complexity with legacy systems

Many organizations run critical processes on systems that were not designed for modern cloud architectures. Connecting these legacy platforms to AI-ready pipelines requires careful planning.

Evaluate whether potential tools support hybrid deployment models, offer pre-built connectors for older systems, and can handle the batch-oriented data flows that legacy systems often require.

The future of AI in data integration

Looking ahead, AI will play an even bigger role in shaping how we connect and use data. These trends are already emerging in leading platforms and will become standard capabilities over the next few years.

Natural language interfaces will let non-technical people build integrations through conversation. Imagine typing, "Connect my ad campaign data to Shopify and show sales from the last 30 days." AI-powered tools will do the setup for you. No coding required. This democratizes data access and reduces the bottleneck of waiting for technical resources.

Autonomous pipelines represent the next evolution. We're entering an era where data pipelines can self-heal, adapt, and optimize themselves without manual tuning. When a source schema changes, the pipeline detects it, adjusts mappings, and alerts you only if human judgment is needed.

AI-native data contracts will help define, enforce, and evolve data agreements automatically. Instead of manually documenting expected schemas and freshness service-level agreements (SLAs), AI will monitor adherence and flag violations before they cause downstream problems.
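At its simplest, a data contract is a written expectation that code can check. The sketch below shows the non-AI core of the idea, assuming a contract that names required columns, their types, and a freshness SLA. The `CONTRACT` structure, the column names, and the `check_contract` helper are hypothetical; an AI-native version would learn and evolve these expectations rather than have you hand-write them.

```python
from datetime import datetime, timedelta

# Hypothetical contract: expected columns, their types, and a freshness SLA.
CONTRACT = {
    "required_columns": {"order_id": int, "amount": float, "placed_at": datetime},
    "max_staleness": timedelta(hours=6),
}


def check_contract(rows, contract, now):
    """Return a list of human-readable contract violations (empty means healthy)."""
    violations = []
    for index, row in enumerate(rows):
        for column, expected_type in contract["required_columns"].items():
            if column not in row:
                violations.append(f"row {index}: missing column '{column}'")
            elif not isinstance(row[column], expected_type):
                violations.append(
                    f"row {index}: '{column}' should be {expected_type.__name__}"
                )
    timestamps = [
        row["placed_at"] for row in rows if isinstance(row.get("placed_at"), datetime)
    ]
    newest = max(timestamps, default=None)
    if newest is None or now - newest > contract["max_staleness"]:
        violations.append("freshness SLA missed")
    return violations


now = datetime(2025, 1, 10, 12, 0)
fresh_rows = [{"order_id": 1001, "amount": 19.99, "placed_at": datetime(2025, 1, 10, 9, 0)}]
stale_rows = [{"order_id": 1002, "amount": "19.99", "placed_at": datetime(2025, 1, 9, 9, 0)}]

print(check_contract(fresh_rows, CONTRACT, now))
print(check_contract(stale_rows, CONTRACT, now))
```

The second batch trips two checks: the amount arrived as text instead of a number, and the newest order is more than six hours old. Catching both at the contract boundary keeps the problem out of downstream dashboards and models.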

Streaming architectures will become the default for more use cases. The shift from batch processing to real-time integration enables immediate analysis and action. For fraud detection, inventory management, and customer experience applications, sub-minute latency will be expected rather than exceptional.

Explainable and responsible AI will continue maturing. Regulations and people's expectations are driving platforms to make AI more interpretable. You'll be able to see exactly why your data is flowing a certain way.

As AI agents become more common, expect tighter integration between governed datasets, approved unstructured documents, and the orchestration layer that controls what an agent can access.

Timeline perspective: natural language interfaces and self-healing pipelines are near-term capabilities (one to two years). Fully autonomous integration agents and AI-native data contracts are mid-term developments (three to five years).

How to prepare your business for AI data integration

Even if you're not ready to implement AI-driven data integration today, there are practical steps you can take to lay the foundation.

Think of this as a readiness checklist, a sequenced approach that builds toward successful implementation.

Audit your data ecosystem

Start by mapping out your current data environment. What tools are in use across departments? Where is your most valuable data stored? Identify overlaps, gaps, and dependencies. Even a simple inventory (like a spreadsheet or visual diagram) can help clarify what you're working with and where integration could deliver the most value.

Set clear goals

Before choosing any tools or building workflows, define what you're trying to solve. Are you looking to improve reporting? Eliminate manual spreadsheet consolidation? Speed up decision-making? These goals will help you prioritize which systems to connect first and what success should look like.

Build team literacy and buy-in

AI integration tools are most powerful when people trust and understand the data they're using. Consider ways to increase data literacy across your team through training, better dashboards, or access to curated datasets. The more comfortable people are with data, the easier it will be to scale your AI initiatives later.

Get the right people in the room

AI data integration touches more roles than most projects, so it helps to align early on who owns what.

Here are a few common stakeholders to plan for:

- Data engineers: care about eliminating brittle, custom pipelines and keeping hundreds of sources flowing reliably

- Data architects: care about hybrid connectivity and standard patterns that work across legacy systems and cloud platforms

- IT and data leaders: care about centralized AI data governance, security, and compliance-ready pipelines

- AI/ML engineers: care about production-ready pipelines, guardrails, and the freedom to experiment with different models without rebuilding integrations

- Executives and line-of-business leaders: care about a single source of truth, faster decisions, and clear ROI from AI investments

Choose scalable, flexible tools

Look for platforms that support a range of data sources, offer pre-built connectors, and include AI-assisted features like automated mapping, transformation, or alerting. Key evaluation criteria include: connector coverage for your existing systems, CDC support for real-time updates, governance features like lineage and audit logs, unstructured data capabilities, transparent pricing, and strong security certifications.

If AI agents are on your roadmap, add one more criterion: can the platform connect governed datasets (and approved unstructured content) directly to agent workflows, so you don't need a custom integration cycle for every new agent?

No-code/low-code environments can also accelerate adoption across non-technical teams. Tools like Domo are built to handle both simple and complex use cases with built-in scalability.

The build-vs-buy decision matters here. Building custom integrations offers flexibility but requires ongoing maintenance. Buying a platform trades some customization for quicker deployment and reduced operational burden. Most organizations benefit from a platform approach for standard integrations, reserving custom development for truly unique requirements.

Plan for iteration, not perfection

Your first integrations won't be your last. Start with a narrow use case, test it, and expand from there. AI systems improve over time as they learn from your data and workflows. Set up feedback loops and be ready to adjust. You'll notice that the most successful teams treat integration as an ongoing process.

Getting started with AI data integration

The promise of AI data integration comes down to outcomes: faster decisions based on complete information, a single source of truth that everyone trusts, and teams freed from data wrangling to focus on work that matters.

You don't need a data science degree to benefit from AI-powered data integration. Whether you're leading a team, running a business, or just starting your data journey, AI can help you bring your data together more efficiently, more intelligently, and with less stress.

The right platform can make a difference. Choose one that simplifies complexity, connects every part of your business, and brings your data and your people closer to action.

Domo brings all your data together automatically and intelligently. From connecting disparate sources to delivering real-time insights and predictive analytics, Domo's AI-enabled platform helps you move from manual data wrangling to confident decision-making.

If you're building AI agents, Domo Agent Catalyst can link agents directly to governed Domo datasets and FileSets (stored files like documents and spreadsheets) using retrieval-augmented generation (RAG). That means you can move from "we have the data" to "the agent can use the data" without turning your backlog into an integration graveyard.

Get started today with a free trial or watch a demo to see how AI can transform your data integration strategy.