How Big Data and AI Work Together to Drive Business Insights

Big data and AI depend on each other to deliver business value. Big data supplies the massive datasets AI needs for training and improvement. AI provides the processing power to analyze those datasets at scale. Together they move organizations from looking backward at what happened to predicting what comes next. This guide covers how the relationship works across data collection, storage, modeling, and real-time insights, plus the challenges you will need to address along the way.

Key takeaways

Here are the main points to keep in mind:

- Big data provides the massive, complex datasets AI needs to learn and improve, while AI provides the processing power to analyze big data at scale

- The relationship spans the entire data lifecycle, from collection and storage through modeling, training, and real-time insights

- Combining AI and big data delivers measurable business outcomes across industries, including quicker decisions, reduced costs, and predictive capabilities

- Success requires addressing challenges like data quality, governance, and integration complexity

What is big data?

Big data refers to datasets so large and complex that traditional database tools struggle to capture, manage, and analyze them. It comes from many sources and activities, including:

- Customer engagement

- Inventory levels

- Sales transactions

- Marketing campaigns

- Employee information

- Images and videos

- Daily business operations

- Web, app, and social media activity

Once you access and understand it, big data is extremely valuable to businesses, as you can make data-driven decisions that increase efficiency and revenue. But analyzing and drawing insights from big data presents its own challenges. Big data arrives at an increasing volume and velocity, containing both structured and unstructured data. These factors make it too difficult for traditional data processing tools to handle. This is where AI technology comes in, making analytics tools powerful enough to manage the growing influx of big data.

The 5 Vs of big data

Five characteristics distinguish big data from traditional datasets:

- Volume: The sheer scale of data generated, often measured in terabytes or petabytes. Organizations collect data from transactions, sensors, social media, and countless other sources, creating datasets too large for conventional databases.

- Velocity: The speed at which data is created and needs to be processed. Real-time data streams from Internet of Things (IoT) devices, financial transactions, and social platforms require immediate analysis to remain valuable.

- Variety: The different types and formats of data, including structured data (spreadsheets, databases), semi-structured data (JavaScript Object Notation, or JSON, and Extensible Markup Language, or XML), and unstructured data (emails, videos, images, social posts).

- Veracity: The trustworthiness and accuracy of data. With data coming from multiple sources, ensuring quality and reliability becomes a significant challenge.

- Value: The business worth that can be extracted from data. Raw data has little value until it's processed, analyzed, and transformed into actionable insights.
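
To make the "variety" characteristic concrete, here is a minimal sketch that reads the same kind of record from a structured source (CSV) and two semi-structured sources (JSON and XML) and normalizes them into one shape. The sample payloads and field names (`sku`, `quantity`) are assumptions made up for illustration, not a real schema.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

# Hypothetical payloads for the same kind of record in three formats.
csv_text = "sku,quantity\nA100,3\n"
json_text = '{"sku": "B200", "quantity": 5}'
xml_text = "<item><sku>C300</sku><quantity>2</quantity></item>"


def from_csv(text):
    """Structured: rows and columns with a fixed header."""
    reader = csv.DictReader(io.StringIO(text))
    return [{"sku": row["sku"], "quantity": int(row["quantity"])} for row in reader]


def from_json(text):
    """Semi-structured: keyed fields, no fixed table layout."""
    record = json.loads(text)
    return [{"sku": record["sku"], "quantity": int(record["quantity"])}]


def from_xml(text):
    """Semi-structured: nested tags carrying the same fields."""
    root = ET.fromstring(text)
    return [{"sku": root.findtext("sku"), "quantity": int(root.findtext("quantity"))}]


# One common shape, regardless of where the record came from.
records = from_csv(csv_text) + from_json(json_text) + from_xml(xml_text)
total_quantity = sum(record["quantity"] for record in records)
print(records, total_quantity)
```

Unstructured data such as images or free text would need an extra extraction step before it could fit a shape like this, which is part of why variety is hard.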

What is AI?

Artificial intelligence (AI) refers to the computer science technologies that allow machines to perform tasks and simulate human capabilities. AI-based algorithms, natural language processing (NLP), and machine learning (ML) techniques model parts of human decision-making, enabling computers to learn from data and draw reasonable conclusions and predictions. The adoption of AI into business operations has grown dramatically, with 88 percent of organizations reporting regular AI use in at least one business function. AI has moved from experimental to essential for most enterprises.

These AI techniques apply to big data in distinct ways. Machine learning algorithms identify patterns in large datasets and improve their accuracy over time without explicit programming. Deep learning, a subset of ML, uses neural networks to process complex unstructured data like images and natural language. NLP enables machines to understand, interpret, and generate human language, making it possible to analyze text-heavy data sources like customer feedback, support tickets, and social media conversations.

Big data plays different roles depending on the type of AI application. In classic machine learning, big data serves primarily as training and validation material. In modern generative AI applications, big data also functions as retrieval context: the enterprise documents, knowledge bases, and structured data that large language models access at inference time through retrieval-augmented generation (RAG) and agent frameworks.

What is the difference between big data and AI?

While big data and AI are often discussed together, they serve fundamentally different purposes. Big data refers to the datasets themselves: the raw material characterized by volume, velocity, and variety. AI refers to the technologies that process, analyze, and learn from that data.

Think of it this way: big data is the fuel, AI is the engine, machine learning is the training process, and data science is the discipline that orchestrates all three.

The following table clarifies the distinction:

Common misconceptions about big data and AI

Several misconceptions cause confusion for people new to these topics:

- Big data and AI are the same thing: They're not. Big data is a category of data characterized by volume, velocity, and variety. AI is a set of technologies that can process and learn from data. You can have big data without AI, and AI without big data.

- AI always requires big data: AI can work effectively with smaller, well-structured datasets. However, big data generally improves model accuracy and generalization, particularly for complex problems with many variables. Teams often assume more data automatically means stronger results. Data quality and relevance matter more than sheer volume.

- Machine learning and AI are interchangeable: Machine learning is a subset of AI, not a synonym. AI encompasses a broader range of technologies including rule-based systems, expert systems, and robotics. Big data is particularly valuable for training ML models at scale, but not all AI applications rely on ML.

What is AI for big data?

AI for big data is the merger of AI technologies with data analytics. AI-powered analytics, also known as augmented analytics, uses machine learning and AI algorithms to enhance the entire analytics process. AI can analyze and interpret all types of big data to derive accurate, meaningful insights quickly.

How does AI in big data accomplish this? Teams train AI models and algorithms on datasets so they can recognize patterns and anomalies in the data and continue to improve with use. AI can identify trends and correlations and make predictions based on data that would otherwise be too time-consuming or difficult for people to handle. Being able to transform raw data into strategic decisions quickly using AI gives businesses a huge advantage over their competitors.

How AI and big data work together

Artificial intelligence and big data are interdependent, relying on one another to operate. AI requires massive amounts of data to learn and refine its techniques for improved decision-making, while big data relies on AI-powered tools for timely, accurate analysis.

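This interdependence drives a progression in analytics maturity. Organizations typically move through distinct stages as they combine big data and AI more effectively: descriptive, diagnostic, predictive, and prescriptive analytics, followed by autonomous operations.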

A retailer, for example, might start with descriptive analytics (sales dashboards showing what happened last quarter), progress to diagnostic analytics (understanding why certain products underperformed), advance to predictive analytics (forecasting demand for next season), implement prescriptive analytics (dynamic pricing recommendations), and eventually reach autonomous operations (AI-driven inventory reordering without human intervention).

The synergy between AI and big data shows up across these processes:

Collecting data

Big data is made up of enormous amounts of structured (numbers, dates, or structured text) and unstructured (images, audio, video, and large, text-based documents) data. Teams can model structured data in tables and format it easily for storage and processing, while unstructured data is more complicated to collect and organize.

Both types of data originate from a variety of sources, including customer interactions, marketing and sales efforts, social media channels, website visitors, internet-connected devices and sensors, and so on. This data is the cornerstone of AI tools, allowing them to process and learn from the information. In turn, AI applications make it easier to collect and organize all types of big data for analysis. AI-powered data collection tools can automatically categorize incoming data, extract relevant information from unstructured sources, and flag anomalies that require human attention.

For a lot of data engineers, the hard part isn't "getting data." It's getting it from everywhere (cloud apps, on-premises databases, file shares, logs, IoT streams) into one governed place without building and babysitting custom pipelines.

Storing and processing data

AI algorithms and models require ongoing access to large datasets for training. The more high-quality data AI has access to, the more accurate its findings tend to be. Too little data, or low-quality data, degrades both the accuracy and the reliability of those findings.

Big data storage technologies, like cloud-based data warehouses and data lakes, make it possible to store and process the vast quantities of raw data needed for AI applications. Data warehouses store structured, processed data optimized for analytics queries. Data lakes retain raw data in its native format until needed. Many organizations now use data lakehouses that combine the flexibility of lakes with the performance of warehouses. Cloud-based storage capacity allows for quicker data transfer and reduces input and output bottlenecks. Businesses also rely on the security, compliance, and governance features of big data platforms to define and manage what AI can access for training.

Tool sprawl can quietly wreck this stage. Every disconnected ingestion tool, warehouse, model experiment, and dashboard layer creates another place where definitions drift and access controls get fuzzy, which is a polite way of saying "governance gap."

Cleaning, transforming, and structuring data

Big data brings information together from numerous different sources, platforms, and applications, but it has to be pre-processed before AI can analyze it. This process involves cleaning and transforming data into a structure suitable for use in models and algorithms.

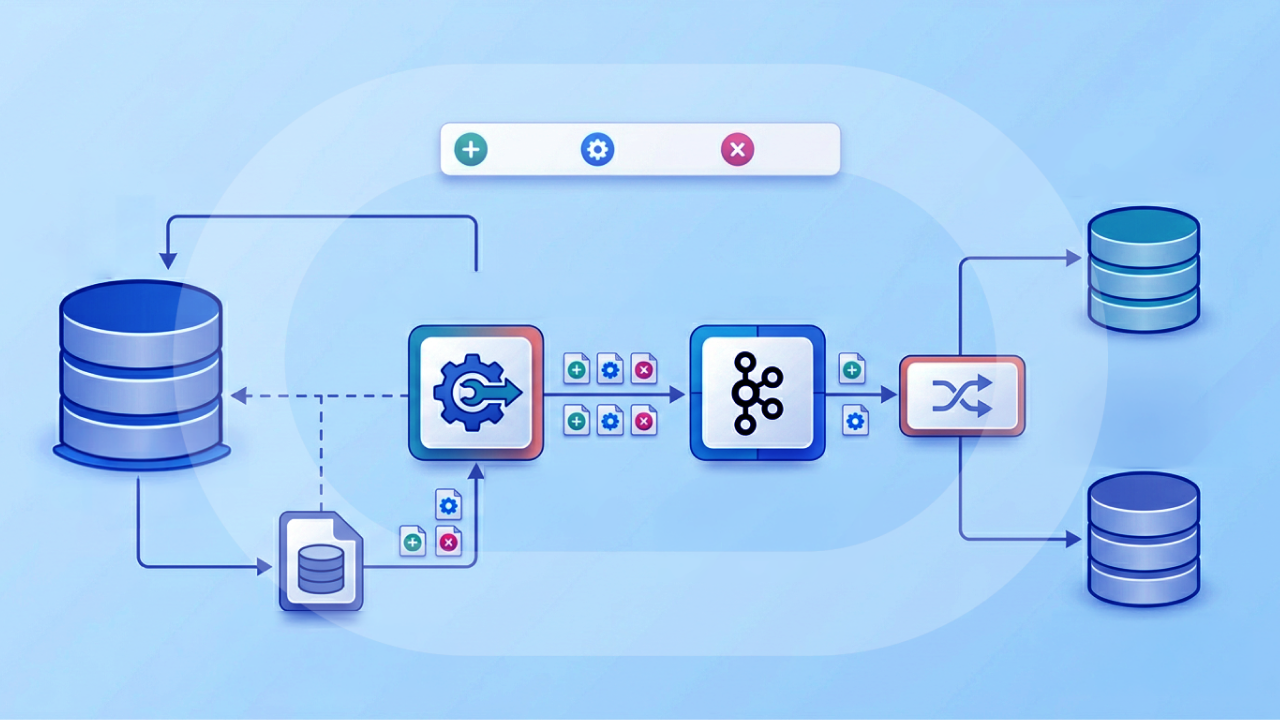

Modern extract, transform, and load (ETL) technology is typically cloud-based. It takes raw data from its original source, transforms it into a format usable for AI tools, and loads the data into a target database for AI purposes. At the same time, AI technology, such as NLP and ML, is being incorporated into the ETL process for increased efficiency.
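
The extract, transform, and load steps can be sketched in a few lines. This is a minimal illustration rather than a production pipeline: the sample CSV, the `orders` table, and the cleaning rules (drop duplicates, drop rows with no amount, normalize names) are all assumptions chosen for the example, and an in-memory SQLite database stands in for the target store.

```python
import csv
import io
import sqlite3

# Hypothetical raw export: inconsistent casing, stray whitespace, a duplicate
# row, and a missing amount. None of these values come from a real system.
RAW_CSV = """order_id,customer,amount,order_date
1001, Alice ,19.99,2024-01-05
1002,BOB,,2024-01-06
1001, Alice ,19.99,2024-01-05
1003,carol,42.50,2024-01-07
"""


def extract(raw_text):
    """Extract: parse the raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: normalize names, drop duplicates and rows missing an amount."""
    seen_ids = set()
    cleaned = []
    for row in rows:
        order_id = int(row["order_id"])
        amount_text = row["amount"].strip()
        if order_id in seen_ids or not amount_text:
            continue  # skip duplicate orders and incomplete records
        seen_ids.add(order_id)
        customer = row["customer"].strip().title()
        cleaned.append((order_id, customer, float(amount_text), row["order_date"]))
    return cleaned


def load(rows, connection):
    """Load: write the cleaned rows into the target table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL, order_date TEXT)"
    )
    connection.executemany("INSERT INTO orders VALUES (?, ?, ?, ?)", rows)
    connection.commit()


connection = sqlite3.connect(":memory:")  # stand-in for a real target database
load(transform(extract(RAW_CSV)), connection)
loaded = connection.execute(
    "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
).fetchall()
print(loaded)
```

Whether to drop or impute incomplete rows is a design choice that depends on the model being fed. Dropping is simply the shortest rule to show here.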

This is also where "governed metrics" starts to matter. If one team defines revenue one way and another team defines it another way, your dashboards disagree. And your AI will learn the argument. A semantic layer (a shared definition and calculation layer for metrics) helps keep big data analytics and AI outputs anchored to consistent definitions.
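
One way to picture a semantic layer is as a single registry where each metric is defined once and every consumer, whether a dashboard or a model feature pipeline, asks the registry instead of rewriting the formula. The sketch below is a toy version under that assumption. The `METRICS` registry, the `compute_metric` helper, and the order fields are hypothetical names invented for illustration.

```python
# Hypothetical order records; field names are illustrative only.
ORDERS = [
    {"amount": 100.0, "refunded": False, "region": "east"},
    {"amount": 250.0, "refunded": True, "region": "east"},
    {"amount": 75.0, "refunded": False, "region": "west"},
]

# One shared place where each metric is defined exactly once.
METRICS = {
    "gross_revenue": lambda rows: sum(r["amount"] for r in rows),
    "net_revenue": lambda rows: sum(r["amount"] for r in rows if not r["refunded"]),
}


def compute_metric(name, rows):
    """Look up a governed metric by name and compute it over the rows."""
    if name not in METRICS:
        raise KeyError(f"'{name}' is not a governed metric")
    return METRICS[name](rows)


# A dashboard and a model feature pipeline both go through the same definition.
dashboard_value = compute_metric("net_revenue", ORDERS)
model_feature = compute_metric("net_revenue", ORDERS)
print(dashboard_value, model_feature)
```

Because both callers resolve "net_revenue" through the same entry, they cannot silently disagree about whether refunds count.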

AI modeling and training

After teams process the data, they can use it in machine learning models. These models apply specific algorithms to big data inputs to recognize patterns or make predictions without human intervention. Some human supervision is still needed, though, to assess the validity of predictions and to provide feedback for reinforcement learning.

AI and ML models aren't useful without proper training, which is where big data comes in. Models rely on access to historical data to learn, using a specific algorithm to find patterns in data based on the target, or answer, you want the model to predict. Teams often test several algorithms during training to find the strongest result. Once a model has been trained, it can find patterns and make predictions on new, unseen data in real time.
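
Testing several algorithms usually means training each candidate on the same historical data and comparing them on data they have not seen. The sketch below shows that loop with two deliberately simple candidates: a mean baseline and a least-squares line. The data points are invented, and a real project would more likely use an ML library and many more records. The structure of the comparison is the point.

```python
from statistics import mean

# Hypothetical history: (ad_spend, units_sold) pairs, invented for illustration.
history = [(1, 12), (2, 15), (3, 19), (4, 21), (5, 26), (6, 28), (7, 33), (8, 35)]
train, holdout = history[:6], history[6:]  # hold out the newest rows for testing


def fit_mean_baseline(rows):
    """Candidate 1: always predict the average target seen in training."""
    average = mean(y for _, y in rows)
    return lambda x: average


def fit_linear(rows):
    """Candidate 2: ordinary least-squares line through the training points."""
    x_bar = mean(x for x, _ in rows)
    y_bar = mean(y for _, y in rows)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in rows) / sum(
        (x - x_bar) ** 2 for x, _ in rows
    )
    intercept = y_bar - slope * x_bar
    return lambda x: intercept + slope * x


def mean_absolute_error(model, rows):
    """Average size of the prediction error on the given rows."""
    return mean(abs(model(x) - y) for x, y in rows)


candidates = {"mean_baseline": fit_mean_baseline, "linear": fit_linear}
scores = {
    name: mean_absolute_error(fit(train), holdout) for name, fit in candidates.items()
}
best_name = min(scores, key=scores.get)  # lowest holdout error wins
print(scores, best_name)
```

Scoring on the holdout rows rather than the training rows is what keeps the comparison honest about how each candidate handles new data.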

Big data serves multiple roles in the AI lifecycle beyond initial training. It supports model validation and testing, ensuring the model generalizes to unseen data rather than memorizing training examples. In retrieval-augmented generation (RAG) systems, the system retrieves enterprise data at inference time to ground AI responses in current, relevant information. Ongoing monitoring and retraining rely on production data to detect model drift, which occurs when the statistical properties of real-world data shift away from what the model learned during training.
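
The retrieval step in RAG can be sketched without any model at all: find the most relevant enterprise document for a question, then place it in the prompt. The example below is a toy under loud assumptions. Real systems typically rank documents with embeddings and a vector index, while this sketch swaps in plain word overlap. The documents, `retrieve`, and `build_prompt` are hypothetical names for illustration.

```python
import re

# Hypothetical knowledge base entries; contents are invented for illustration.
DOCUMENTS = {
    "returns-policy": "Customers may return items within 30 days with a receipt.",
    "shipping-times": "Standard shipping takes three to five business days.",
    "loyalty-program": "Loyalty members earn one point per dollar spent.",
}


def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many question words they share (a stand-in for
    embedding similarity) and return the top matches."""
    question_words = tokenize(question)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(question_words & tokenize(item[1])),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents):
    """Ground the question in retrieved context before it reaches a model."""
    context = "\n".join(
        f"[{doc_id}] {text}" for doc_id, text in retrieve(question, documents)
    )
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


question = "How many days do customers have to return items?"
prompt = build_prompt(question, DOCUMENTS)
print(prompt)
```

The prompt would then be sent to a language model. That call is omitted here because it depends on whichever provider or framework a team uses.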

As more teams add large language models (LLMs) and AI agents into the mix, this stage also turns into an orchestration problem: which model should answer which question, what data is it allowed to see, and who signs off when the stakes are high? Many enterprises handle this with human-in-the-loop validation and AI governance guardrails so people can test, approve, and monitor AI behavior against governed big data instead of guessing what happened after the fact.

How big data quality affects AI model performance

The quality of data directly determines the quality of AI outcomes. Several common data quality failure modes can degrade model performance:

- Label noise: Inaccurate or inconsistent labels in training data cause models to learn incorrect patterns. If customer churn labels are wrong 20 percent of the time, the model's predictions will reflect that error.

- Data leakage: When information from outside the training set contaminates the model, it performs artificially well during testing but fails in production. This often happens when future information accidentally appears in historical training data.

- Class imbalance: When one outcome is significantly underrepresented in the training data, models struggle to predict rare events. A fraud detection model trained on data where only 0.1 percent of transactions are fraudulent may simply predict "not fraud" for everything.

- Missing data: Gaps in datasets cause models to learn incorrect patterns or fail to generalize. Systematic missingness, where certain types of records are more likely to have gaps, is particularly problematic.

- Data drift: When the statistical properties of production data shift away from training data over time, model accuracy degrades. Customer behavior changes. Market conditions evolve. Models trained on historical patterns become less relevant.

Addressing these issues requires ongoing data quality monitoring, not just a one-time cleaning effort before training.
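
The class imbalance example above is easy to verify with arithmetic. In this sketch, a "model" that always predicts "not fraud" is scored on a made-up set of 10,000 transactions in which 0.1 percent are fraudulent. The dataset size is an assumption chosen to make the numbers round.

```python
# Hypothetical evaluation set: 10,000 transactions, 0.1 percent fraudulent.
labels = [1] * 10 + [0] * 9990  # 1 = fraud, 0 = not fraud

# A degenerate "model" that predicts "not fraud" for every transaction.
predictions = [0] * len(labels)

correct = sum(1 for y, p in zip(labels, predictions) if y == p)
true_positives = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
actual_positives = sum(labels)

accuracy = correct / len(labels)            # share of all predictions that are right
recall = true_positives / actual_positives  # share of real fraud cases caught

print(f"accuracy={accuracy:.3f} recall={recall:.3f}")
# prints "accuracy=0.999 recall=0.000"
```

Accuracy looks excellent while the model catches no fraud at all, which is why teams working with rare events tend to track recall and precision alongside accuracy.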

Batch processing vs. real-time streaming

Most enterprise AI systems rely on two complementary approaches, batch and stream processing, to handle big data. Understanding when to use each is essential for effective implementation.

Batch processing collects data over a period and analyzes it together. This approach suits historical analysis, model training, and decisions that don't require immediate action. Monthly churn prediction, weekly demand forecasting, and quarterly customer segmentation are typical batch use cases. Batch processing is generally simpler to implement, easier to debug, and more cost-effective for large-scale analysis.

Real-time streaming processes data continuously as it arrives, enabling time-sensitive decisions. Fraud detection at transaction time, real-time product recommendations, and IoT anomaly detection require streaming architectures. These systems typically involve an event bus (like Kafka or Kinesis), a stream processor, an online feature store, and a low-latency inference service.

The choice between batch and streaming depends on the decision's time sensitivity and value. If acting on information an hour later still delivers most of the value, batch processing is usually the right choice. If delays of even seconds significantly reduce value or increase risk, streaming is necessary. And honestly, this is where teams stumble: they default to real-time streaming because it sounds more advanced, then struggle with the added complexity when batch would have served the use case just fine.
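
The difference between the two approaches shows up clearly in code. In the sketch below, the batch function waits for the full window of readings and analyzes it once, while the streaming monitor updates a running mean per event and decides immediately whether a reading looks anomalous. The sensor values, the `StreamingMonitor` class, and the threshold of 5.0 are assumptions for illustration. A real streaming system would sit behind an event bus rather than a Python list.

```python
from statistics import mean

# Hypothetical sensor readings arriving in order; values are invented.
readings = [20.1, 19.8, 20.3, 20.0, 35.0, 20.2]


def batch_average(values):
    """Batch: wait until the whole window is collected, then analyze it once."""
    return mean(values)


class StreamingMonitor:
    """Streaming: update state per event and decide immediately."""

    def __init__(self, threshold):
        self.count = 0
        self.running_mean = 0.0
        self.threshold = threshold

    def process(self, value):
        # Compare against the mean of everything seen so far, then update it.
        is_anomaly = (
            self.count > 0 and abs(value - self.running_mean) > self.threshold
        )
        self.count += 1
        self.running_mean += (value - self.running_mean) / self.count
        return is_anomaly


monitor = StreamingMonitor(threshold=5.0)
alerts = [value for value in readings if monitor.process(value)]
print(batch_average(readings), alerts)
```

The streaming path flags the 35.0 reading the moment it arrives. The batch path only sees it as a slightly higher average after the window closes, which is fine for a weekly report and not fine for fraud detection at transaction time.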

Generating real-time insights

AI tools can reveal current trends, patterns, and anomalies in big data that could otherwise go unnoticed by human analysis. Businesses use these intelligent insights to streamline their daily operations, enhance their services, adjust their product offerings, optimize their supply chain, reduce fraud or security risks, and more. AI's data-informed predictions give companies even more advantages, as they can develop strategies based on sound information rather than gut feelings.

Real-time AI applications typically operate within strict latency budgets. Fraud detection systems often need to make decisions in under 100 milliseconds to avoid delaying transactions. Personalization engines for e-commerce usually target sub-500-millisecond response times to avoid degrading user experience. Predictive maintenance systems for industrial equipment may have slightly more flexibility, but still need to alert operators within seconds of detecting anomalies.
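
A latency budget is only useful if it is measured. The sketch below times a scoring function over many calls and checks the 95th percentile against a 100-millisecond budget, mirroring the fraud detection figure above. The `score_transaction` function is a hypothetical stand-in for a real model call, and using the 95th percentile is an assumption here. Teams pick whichever percentile matches their service targets.

```python
import math
import time

LATENCY_BUDGET_MS = 100  # mirrors the fraud detection example in the text


def score_transaction(amount):
    """Hypothetical stand-in for a real model call."""
    return 1.0 if amount > 10_000 else 0.0


def measure_latencies_ms(func, inputs):
    """Time each call and return the latencies in milliseconds."""
    latencies = []
    for value in inputs:
        start = time.perf_counter()
        func(value)
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies


def percentile(values, percent):
    """Nearest-rank percentile for a whole-number percent between 1 and 100."""
    ordered = sorted(values)
    rank = math.ceil(percent * len(ordered) / 100)
    return ordered[rank - 1]


latencies = measure_latencies_ms(score_transaction, range(1, 1001))
p95 = percentile(latencies, 95)
within_budget = p95 <= LATENCY_BUDGET_MS
print(f"p95={p95:.4f} ms, within budget: {within_budget}")
```

Checking a high percentile rather than the average matters because a system can have a fast average and still blow its budget for a meaningful share of requests.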

This is also where BI and analytics leaders feel the pressure: if only a handful of technical experts can access big data and interpret AI outputs, insights pile up in a queue. AI-assisted analytics like natural language querying and AI chat can help more people explore governed data directly, without turning every question into a ticket.

Driving innovation in research and technology

Innovations in AI and big data go hand in hand. Big data relies heavily on AI methods to deliver the most value. AI depends on big data's large volumes of information, cloud-based storage, and other tech like ETL tools to learn and refine its decision-making abilities. Research and innovation in one affect the other.

Benefits of combining AI and big data

The business value of combining AI and big data manifests across three dimensions: cost reduction through automation and efficiency, revenue growth through personalization and optimization, and risk mitigation through fraud detection and predictive maintenance.

Organizations that effectively combine these technologies typically experience the following benefits:

- Shorter time to insight: AI processes data in minutes or seconds that would take human analysts days or weeks to review. This acceleration enables more responsive decision-making and shorter feedback loops.

- Improved prediction accuracy: Machine learning models trained on large, diverse datasets make more accurate predictions than traditional statistical methods or human intuition alone. Organizations report improvements in demand forecasting accuracy, customer churn prediction, and equipment failure prediction.

- Automated decision-making: Routine decisions can be automated entirely, freeing human experts to focus on complex, high-value problems. This includes everything from dynamic pricing adjustments to automated customer service responses.

- Personalization at scale: AI enables individualized experiences for millions of customers simultaneously, something impossible with manual approaches. Recommendations, content, pricing, and communications can all be tailored to individual preferences and behaviors.

- Proactive problem identification: Rather than reacting to issues after they occur, AI can identify problems before they impact customers or operations. Predictive maintenance, fraud prevention, and quality control all benefit from this shift.

- Competitive differentiation: Organizations that effectively combine AI and big data can respond more quickly to market changes, serve customers more effectively, and operate more efficiently than competitors still relying on traditional approaches.

One extra benefit that teams often overlook: when AI sits on governed metrics (and not a dozen versions of "the truth"), scaling insights to more people becomes much easier.

Challenges of implementing AI and big data

While the benefits are significant, organizations face real obstacles when combining AI and big data:

- Data quality and governance: AI models are only as good as the data they learn from. Ensuring accuracy, completeness, and consistency across massive datasets requires ongoing investment in data quality processes and governance frameworks. Organizations need clear data ownership, access controls, and quality monitoring.

- Integration complexity: Big data typically resides in multiple systems, formats, and locations. Integrating these sources into a unified pipeline that feeds AI models requires significant technical effort and ongoing maintenance.

- Skills gaps: Data scientists, ML engineers, and data engineers remain in high demand. Many organizations struggle to hire and retain the talent needed to build and maintain AI systems.

- Infrastructure costs: Storing, processing, and analyzing big data requires substantial infrastructure investment. Cloud services have reduced upfront costs, but ongoing compute and storage expenses can grow quickly.

- Explainability and trust: Complex AI models can be difficult to interpret, making it hard to explain decisions to stakeholders, regulators, or customers. This "black box" problem limits adoption in regulated industries and high-stakes decisions.

- Change management: Implementing AI often requires changes to existing processes, roles, and decision-making authority. Organizational resistance can slow or derail otherwise sound technical implementations.

Fragmentation across the big data and AI stack is a very modern problem. When ingestion, governance, experimentation, deployment, and BI live in separate tools, teams move slower and compliance work gets harder. A centralized approach that ties AI activity back to governed datasets and consistent metric definitions can reduce operational overhead and help keep guardrails in place.

The environmental impact of AI and big data

Training large AI models and operating data centers at scale consume significant energy and water resources. By one widely cited estimate, training a single large language model can emit as much carbon dioxide as five cars over their lifetimes. That comparison puts the scale of compute-intensive AI work into perspective for sustainability planning. Data centers consume an estimated one to two percent of global electricity and require millions of gallons of water daily for cooling.

Organizations can take practical steps to reduce their environmental footprint:

- Data minimization: Collect and retain only the data actually needed for analysis. Smaller datasets require less storage and processing.

- Model efficiency: Choose smaller, more efficient models when accuracy requirements allow. Techniques like quantization and pruning can reduce model size without significant performance loss.

- Training frequency: Retrain models only when drift is detected rather than on a fixed schedule. Continuous retraining wastes resources when model performance remains stable.

- Carbon-aware scheduling: Run intensive workloads during periods of lower grid carbon intensity where infrastructure supports it. Some cloud providers now offer carbon-aware scheduling options.
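
The "retrain only when drift is detected" step from the list above can be sketched as a simple gate. This example uses the population stability index (PSI), one common drift measure, to compare training data against recent production data and trigger retraining only when the score crosses a threshold. The sample values, bin edges, and the 0.2 threshold are assumptions for illustration, and teams tune these to their own data.

```python
import math

RETRAIN_THRESHOLD = 0.2  # assumed cutoff; tune for your own data


def population_stability_index(expected, actual, bin_edges):
    """PSI between two samples over shared bins; larger means more shift."""

    def bin_shares(values):
        counts = [0] * (len(bin_edges) + 1)
        for value in values:
            # A value's bin index is the number of edges at or below it.
            index = sum(1 for edge in bin_edges if value >= edge)
            counts[index] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    expected_shares = bin_shares(expected)
    actual_shares = bin_shares(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(expected_shares, actual_shares)
    )


# Hypothetical order values seen in training and in two production windows.
training = [10, 12, 15, 18, 20, 22, 25, 28, 30, 35]
stable_production = [11, 13, 16, 17, 21, 23, 26, 27, 31, 33]
shifted_production = [24, 26, 28, 30, 32, 34, 36, 38, 40, 42]
edges = [15, 25]

stable_psi = population_stability_index(training, stable_production, edges)
shifted_psi = population_stability_index(training, shifted_production, edges)

for name, psi in [("stable", stable_psi), ("shifted", shifted_psi)]:
    action = "retrain" if psi > RETRAIN_THRESHOLD else "skip retraining"
    print(f"{name}: PSI={psi:.3f} -> {action}")
```

In the stable window the gate skips a training run entirely, which is where the compute and energy savings come from.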

Use cases of AI for big data by industry

Big data and AI play significant roles in data analytics and predictive analytics by automating and speeding up the analytics process. This shortens the time from data collection to insight and can also reduce errors and costs.

Companies across all industries can benefit from AI and big data, as the combination empowers them to make informed decisions. Here are just a few of the many use cases of AI in big data:

Financial services

Financial institutions use AI to spot trends and anomalies in data, which helps them detect risks and fraud. Real-time fraud detection systems analyze transaction patterns and flag suspicious activity within milliseconds, preventing losses before they occur. Institutions can also take advantage of AI-powered chatbots for customer service, ML models for credit risk assessment, and algorithmic trading. Some institutions report fraud detection improvements of 50 percent or more after implementing AI-powered systems, a meaningful reduction in losses that often justifies the implementation investment within the first year.

Retail

AI tools predict consumer trends to optimize inventory and assist with product innovations. Demand forecasting models analyze historical sales, weather patterns, economic indicators, and social media trends to predict what customers will want and when. Many retailers also use AI-powered data to develop personalized recommendations for customers, driving higher conversion rates and average order values.

Marketing

AI helps marketers more clearly understand customer behavior and sentiment to segment audiences and deliver personalized marketing based on preferred methods, previous customer engagement, personal interests, or purchase history. Predictive models identify which customers are most likely to convert, churn, or respond to specific offers, enabling more efficient marketing spend allocation.

Healthcare

AI analyzes patient charts, imaging, and other data to assist professionals in diagnoses. Image recognition models detect anomalies in X-rays, magnetic resonance imaging (MRI), and computed tomography (CT) scans, often identifying issues that human radiologists might miss. AI can also help develop personalized treatment options and predict patient outcomes, helping clinicians make more informed decisions about care plans.

Manufacturing

Big data and AI enhance existing quality control systems to further improve product quality. Computer vision systems inspect products on assembly lines, identifying defects more quickly and more consistently than human inspectors. AI can also reduce equipment downtime by forecasting maintenance and equipment failures and predicting trends that will impact the supply chain.

IT and cybersecurity

IT professionals rely on AI in big data to monitor hardware and other tech infrastructure, predict maintenance needs, and identify cybersecurity risks to prevent attacks and downtime. Security systems analyze network traffic patterns, user behavior, and threat intelligence feeds to detect and respond to attacks in real time, often before human analysts would notice the threat.

Getting started with AI and big data

Before investing in AI tools, organizations should assess their data foundation. AI initiatives frequently stall not because of model complexity, but because the underlying data isn't ready.

Start by auditing existing data sources for completeness and quality. Understand where your data lives, who owns it, and how accessible it is. Establish data ownership and access controls before layering AI on top. Governance gaps become much harder to close once AI systems are in production.

Evaluate whether current infrastructure can support the ingestion and processing volumes that AI workloads require. Many organizations underestimate the compute and storage demands of training and running models at scale.

Consider starting with a focused use case that has clear business value and relatively clean data. Success with a smaller project builds organizational confidence and capability for larger initiatives.

A practical way to roll this out across teams

Different roles feel different parts of the big data + AI puzzle, so it helps to align on who does what early. Here's a simple way to divide the work:

- Data engineer: Prioritize automated ingestion and integration so teams stop building pipelines from scratch for every new source. Focus on repeatable patterns for structured and unstructured data, plus data quality monitoring that catches issues before models do.

- AI/ML engineer: Set up experimentation and deployment workflows that run against governed enterprise datasets. Aim for flexibility in model choice (including third-party and custom LLMs) while keeping guardrails, approvals, and monitoring in place.

- IT leader / data leader: Reduce tool sprawl and centralize security, compliance, and governance controls. Make sure real-time pipelines can support both BI and AI workloads without creating blind spots.

- BI and analytics leader: Lock in consistent definitions with a semantic layer and governed metrics so AI outputs stay aligned with how the business measures performance. Expand access with AI-assisted analytics like natural language querying while keeping governance intact.

- Line of business executive: Start with ROI-first use cases in your function (finance, marketing, sales, operations). Ask for outcomes you can measure, and favor deployments that fit into existing workflows so adoption doesn't drag.

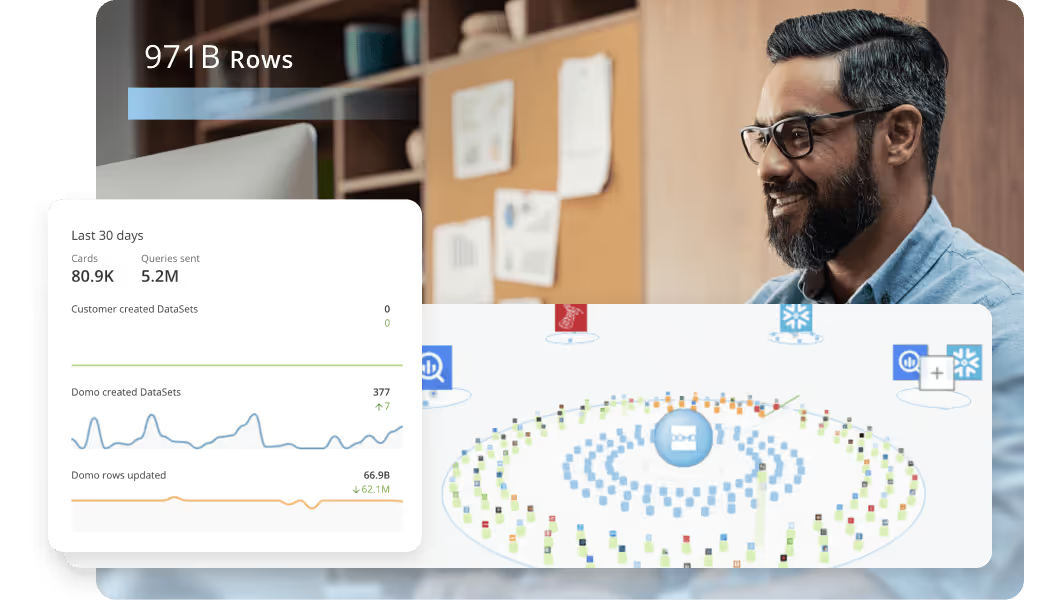

Are you interested in seeing how big data and AI can improve your business? Discover how Domo.AI combines AI innovations with our existing BI platform for powerful analysis and meaningful business insights.

If you're exploring AI agents specifically, look for approaches that connect agents directly to governed datasets and unstructured documents through RAG. That way you can automate work without creating a new maze of custom integrations to maintain.