11 Best Change Data Capture (CDC) Tools to Consider in 2026

As data volumes grow and businesses demand fresher insights, traditional batch-based data movement is no longer enough. Modern analytics, operational reporting, and AI use cases increasingly rely on near-real-time data updates to remain relevant and actionable. Delays of hours (or even minutes) can limit visibility into operational performance, customer behavior, or system health.

That’s where Change Data Capture (CDC) platforms come in. CDC tools help organizations detect and replicate data changes as they happen, delivering fresher data while reducing load on production databases.

In this guide, we’ll explain what CDC platforms are, why organizations use them, and what features to evaluate. We’ll also highlight 11 CDC tools to consider in 2026, helping data teams make more informed decisions as real-time data becomes the norm.

What is a Change Data Capture (CDC) platform?

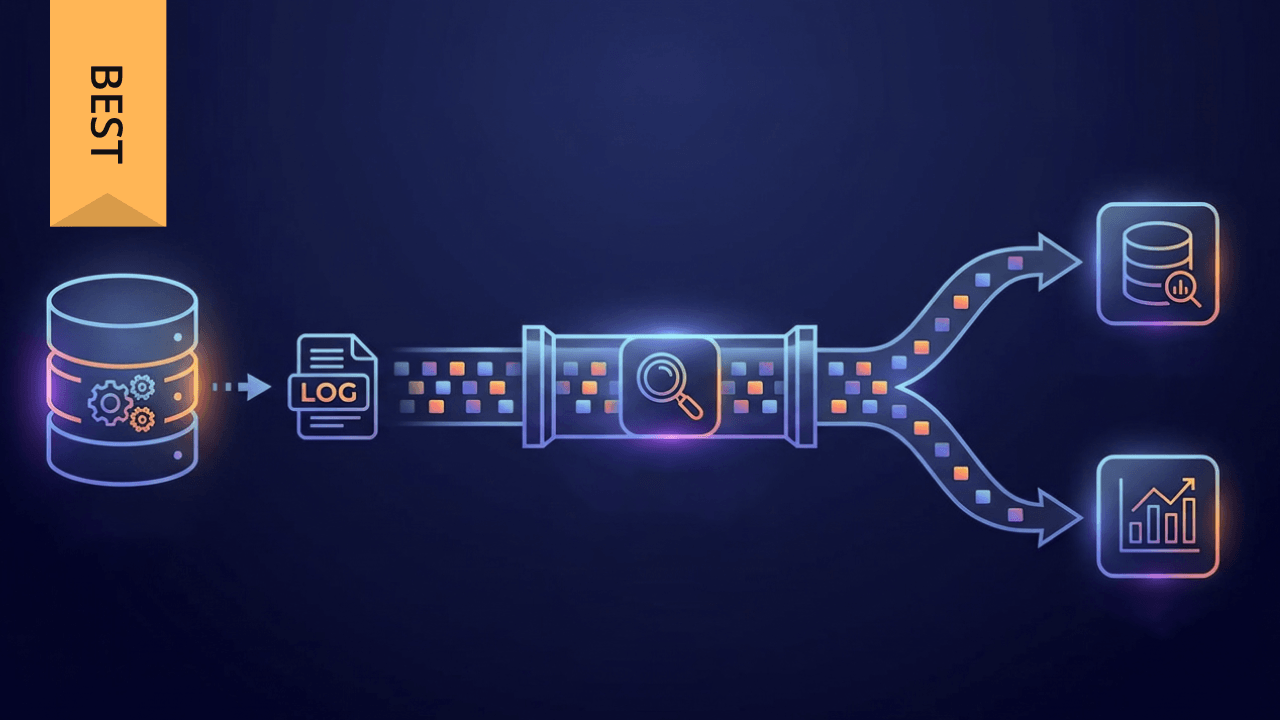

A Change Data Capture (CDC) platform is a data integration tool designed to identify and capture changes (such as inserts, updates, and deletes) in source systems and replicate those changes to downstream destinations in near real time. Rather than relying on periodic full data extracts, CDC focuses on tracking what has changed since the last update.

Instead of repeatedly extracting entire tables, CDC platforms monitor incremental changes using database logs, timestamps, or triggers. This approach significantly reduces the volume of data that must be moved and processed, while improving data freshness and system performance.

As a result, organizations can:

- Keep analytics systems continuously updated.

- Reduce load on production databases.

- Support real-time or near-real-time use cases.

CDC is commonly used to replicate data from transactional databases into data warehouses, data lakes, streaming systems, or operational applications. It plays a key role in modern data architectures, enabling event-driven workflows, continuously updated dashboards, and AI models that depend on timely, accurate data.
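
Of the three capture methods mentioned above (logs, timestamps, triggers), timestamp-based capture is the simplest to illustrate. The following is a minimal sketch, not any vendor's implementation: it assumes a hypothetical `customers` table with an `updated_at` column that the application sets on every write, and it uses an in-memory SQLite database purely for demonstration.

```python
import sqlite3


def fetch_changes(conn, last_seen):
    """Return rows modified after the high-water mark, oldest first."""
    cur = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at, id",
        (last_seen,),
    )
    return cur.fetchall()


def demo():
    # Hypothetical source table; updated_at is an integer timestamp.
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers "
        "(id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"
    )
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(1, "Ada", 100), (2, "Grace", 100)],
    )

    # First poll: everything is new relative to a watermark of 0.
    watermark = 0
    first = fetch_changes(conn, watermark)
    watermark = max(row[2] for row in first)

    # One row changes; the next poll returns only that row.
    conn.execute(
        "UPDATE customers SET name = ?, updated_at = ? WHERE id = ?",
        ("Grace H.", 105, 2),
    )
    second = fetch_changes(conn, watermark)
    return first, second
```

Calling `demo()` returns the full table on the first poll and only the modified row on the second. Timestamp polling cannot see deleted rows, and it still queries the source table, which is one reason many platforms read the database transaction log instead.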

Benefits of using a CDC platform

Why organizations adopt CDC tools

Fresher data for analytics

CDC enables near-real-time updates, ensuring dashboards, reports, and models reflect the most current data available. This allows teams to respond faster to changes in customer behavior, operational performance, or market conditions, rather than relying on stale, batch-based reporting.

Reduced system load

By capturing only incremental changes, CDC minimizes the impact on source systems compared to full table refreshes. This reduces query overhead on production databases and helps maintain application performance during peak usage periods.

Scalability

Because the work a CDC pipeline performs tracks the rate of change rather than the total size of each table, pipelines scale efficiently as data volumes grow, making them well-suited for modern cloud data architectures. As transaction rates increase, CDC allows organizations to process more data without proportionally increasing infrastructure complexity.

Operational and analytical use cases

CDC supports both analytics (BI, ML, forecasting) and operational use cases such as syncing applications, triggering workflows, or supporting event-driven architectures across systems.

Improved data reliability

Many CDC tools include built-in mechanisms for consistency, ordering, and fault tolerance, helping ensure data remains accurate, complete, and trustworthy even as pipelines grow more complex.
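
One common way to get the ordering and fault-tolerance properties described above is to apply changes in sequence order and make each apply idempotent, so that replaying events after a failure does no harm. The sketch below illustrates that idea only. The `ChangeEvent` and `Replica` types and the `seq` field are hypothetical names, standing in for whatever log position a real tool exposes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    seq: int                    # hypothetical monotonically increasing log position
    op: str                     # "insert", "update", or "delete"
    key: int                    # primary key of the affected row
    row: Optional[dict] = None  # new row image; None for deletes


class Replica:
    """In-memory target that remembers the last applied sequence number."""

    def __init__(self):
        self.rows = {}
        self.applied_seq = 0

    def apply(self, event):
        """Apply one event; return False if it was already applied."""
        if event.seq <= self.applied_seq:
            return False  # duplicate delivery or replay: safe to skip
        if event.op == "delete":
            self.rows.pop(event.key, None)
        else:
            self.rows[event.key] = dict(event.row)
        self.applied_seq = event.seq
        return True


def replay(replica, events):
    """Apply events in sequence order, regardless of arrival order."""
    for event in sorted(events, key=lambda e: e.seq):
        replica.apply(event)
    return replica.rows
```

Running `replay` twice over the same batch leaves the replica unchanged the second time, which is the property that makes retry after a crash safe.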

What to look for in a CDC platform

While CDC tools vary in approach and architecture, most buyers evaluate platforms across a common set of criteria:

Source and destination support

Look for support across popular databases (e.g., MySQL, PostgreSQL, SQL Server, Oracle) as well as modern cloud data warehouses, data lakes, and streaming platforms. Broad connectivity reduces the need for custom workarounds as your data ecosystem evolves.

Latency and throughput

CDC platforms should balance low latency with the ability to handle high transaction volumes. Some use cases require sub-second updates, while others prioritize stable throughput for large-scale workloads. Understanding these tradeoffs is essential.

Deployment flexibility

Some tools are fully managed cloud services, while others support self-hosted or hybrid deployments. Deployment flexibility allows teams to align CDC pipelines with security, compliance, and infrastructure requirements.

Schema change handling

The ability to detect, propagate, and adapt to schema changes without breaking pipelines is critical. Strong schema evolution support reduces maintenance overhead and prevents downstream failures.
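
To make the idea concrete, here is a rough sketch of one simple schema evolution policy: when an incoming change record carries a column the target table lacks, add the column and continue. It assumes a SQLite target, trusted table and column names, and new columns typed as `TEXT`. The function names are illustrative, and real platforms apply richer rules for type changes, renames, and dropped columns.

```python
import sqlite3


def target_columns(conn, table):
    """Column names currently present in the target table."""
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
    return [row[1] for row in conn.execute(f"PRAGMA table_info({table})")]


def apply_with_schema_evolution(conn, table, record):
    """Upsert a change record, adding any columns the target is missing.

    Identifiers are interpolated into SQL, so this sketch assumes they
    come from a trusted source. Returns the list of columns added.
    """
    existing = set(target_columns(conn, table))
    added = [col for col in record if col not in existing]
    for col in added:
        # Assumption: unknown columns are added as TEXT.
        conn.execute(f'ALTER TABLE {table} ADD COLUMN "{col}" TEXT')

    cols = list(record)
    col_list = ", ".join(f'"{c}"' for c in cols)
    placeholders = ", ".join("?" for _ in cols)
    conn.execute(
        f"INSERT OR REPLACE INTO {table} ({col_list}) VALUES ({placeholders})",
        [record[c] for c in cols],
    )
    return added
```

Rows written before the new column existed simply read as `NULL` for it, so downstream queries keep working while the schema grows.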

Monitoring and reliability

Strong observability, alerting, and recovery mechanisms help maintain trust in CDC pipelines. Visibility into lag, failures, and data freshness is especially important for business-critical workloads.
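
Replication lag is often measured as the gap between when a change was committed at the source and when it was applied at the destination. The sketch below shows that calculation with a simple threshold check. The function names and the 30-second and 5-minute thresholds are illustrative assumptions, not defaults from any particular tool.

```python
from datetime import datetime, timedelta, timezone


def replication_lag(source_commit_time, applied_time):
    """Time between source commit and destination apply, never negative."""
    # Clock skew between systems can make the raw difference negative.
    return max(applied_time - source_commit_time, timedelta(0))


def lag_status(
    lag,
    warn_after=timedelta(seconds=30),  # assumed warning threshold
    fail_after=timedelta(minutes=5),   # assumed critical threshold
):
    """Classify a lag value for alerting."""
    if lag >= fail_after:
        return "critical"
    if lag >= warn_after:
        return "warning"
    return "ok"


# Example: a change committed at noon UTC and applied 45 seconds later.
commit = datetime(2026, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
applied = commit + timedelta(seconds=45)
status = lag_status(replication_lag(commit, applied))
```

In practice the thresholds would be set per pipeline, since a fraud detection feed and a nightly report tolerate very different delays.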

Security and compliance

CDC platforms should support encryption, access controls, and audit logging. This is particularly important for regulated industries handling sensitive or personally identifiable data.

Ease of management

Configuration, onboarding, and ongoing maintenance should be manageable without excessive engineering effort. Automation and clear operational controls can significantly reduce long-term costs.

Integration with analytics and downstream systems

CDC delivers the most value when it connects easily to transformation, analytics, and decision-making workflows. Platforms that integrate well downstream help shorten the path from data change to business impact.

Cost transparency and resource efficiency

Understanding how a CDC platform consumes compute, storage, and network resources is important for long-term scalability. Clear pricing models and efficient resource usage help teams avoid unexpected costs as data volumes grow.

Future readiness

As data strategies evolve, CDC platforms should support new sources, destinations, and real-time use cases without major re-architecture. Flexibility and extensibility help protect investments over time.

11 best Change Data Capture platforms in 2026

Change Data Capture platforms vary widely in architecture, deployment models, and use cases. The tools below represent CDC solutions commonly evaluated by data teams in 2026, spanning cloud-native services, open-source frameworks, and enterprise platforms. Each offers a different approach to capturing and delivering incremental data changes at scale.

1. Domo

Domo offers Change Data Capture capabilities as part of its broader cloud-native data platform, enabling incremental data ingestion from databases, applications, and operational systems. CDC in Domo supports near-real-time data updates, helping organizations keep dashboards, alerts, and analytics continuously current without relying on full data refreshes.

What distinguishes Domo’s approach is how its CDC connects directly to downstream analytics, transformation, governance, and AI-driven insights within a single environment.

Data changes can be ingested, modeled, visualized, and acted upon without moving across multiple disconnected tools. This makes Domo a common choice for teams that want CDC tightly aligned with business intelligence, operational reporting, and decision automation rather than treating CDC as a standalone replication layer.

2. Fivetran

Fivetran provides managed CDC through log-based replication for many widely used relational databases. Its platform is designed to automate the extraction and delivery of incremental data changes into cloud data warehouses and analytics destinations. Fivetran emphasizes automated setup, schema change propagation, and hands-off pipeline management.

The service is commonly adopted by analytics and data engineering teams that want to minimize operational overhead while maintaining reliable access to fresh data. By handling infrastructure management and ongoing maintenance, Fivetran allows teams to focus on modeling, analytics, and downstream use cases rather than managing CDC mechanics. It is frequently evaluated in cloud-first analytics environments.

3. Debezium

Debezium is an open-source CDC platform built on top of Apache Kafka and Kafka Connect that captures row-level changes from database transaction logs. It streams these changes as events into Kafka topics, making them available for real-time processing and downstream consumption.

Debezium is often used by engineering-driven organizations building event-based or streaming architectures. Because it integrates closely with Kafka, it supports use cases such as microservices communication, real-time analytics, and custom data pipelines. Debezium is commonly deployed by teams that want deep control over CDC behavior and already operate open-source streaming infrastructure as part of their data stack.
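
Debezium change events carry an envelope with `before` and `after` row images and an `op` code (`c` for create, `u` for update, `d` for delete, `r` for a snapshot read). As a rough illustration of what a downstream consumer does with those events, the sketch below applies them to an in-memory dictionary. It assumes each row image includes a primary key column named `id` and that messages arrive as JSON strings. Both are assumptions for the example, and the Kafka consumer itself is left out.

```python
import json


def apply_change(table, message):
    """Apply one Debezium-style change event to an in-memory table.

    `table` maps primary key -> row dict. `message` is the JSON value of a
    Kafka record, or None for a tombstone record.
    """
    if message is None:
        return table  # tombstone after a delete: nothing to apply

    event = json.loads(message)
    # With schemas enabled the envelope sits under "payload"; otherwise
    # the message value is the envelope itself.
    envelope = event.get("payload", event)
    op = envelope["op"]

    if op in ("c", "r", "u"):
        row = envelope["after"]
        table[row["id"]] = row  # assumption: primary key column is "id"
    elif op == "d":
        table.pop(envelope["before"]["id"], None)
    else:
        # This sketch handles only create, read, update, and delete.
        raise ValueError(f"unsupported op: {op!r}")
    return table
```

A real consumer would also track offsets and handle retries, but the core logic of turning an event stream back into current state is this small.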

4. Qlik Replicate

Qlik Replicate is designed for real-time data replication and Change Data Capture across a wide range of source and target systems. It supports heterogeneous database environments and enables continuous data movement with minimal impact on source systems.

The platform is commonly used in hybrid and multi-cloud architectures, especially where organizations need to keep legacy systems synchronized with modern analytics platforms. Qlik Replicate is frequently evaluated by enterprises undergoing cloud migration or consolidating data from multiple operational systems into centralized analytics environments while maintaining near-real-time data availability.

5. AWS Database Migration Service (DMS)

AWS Database Migration Service supports CDC as part of ongoing database replication workflows. After initial data migration, DMS can continuously capture changes and replicate them to AWS destinations such as data warehouses, data lakes, and analytics services.

AWS DMS is commonly used by organizations operating primarily within the AWS ecosystem. It supports both homogeneous and heterogeneous database replication and is often leveraged during cloud modernization initiatives. Its CDC functionality enables teams to maintain synchronized data sets while minimizing disruption to production systems during and after migration.

6. Microsoft SQL Server Change Data Capture

SQL Server Change Data Capture is a native feature of Microsoft SQL Server that records insert, update, and delete activity on tracked tables. It is enabled for a database and then for individual tables, and it stores change data in relational change tables that can be queried or replicated downstream.

This capability is frequently used in Microsoft-centric environments where SQL Server serves as a core transactional system. SQL Server CDC is commonly paired with reporting, ETL, or data warehousing workflows to support incremental data processing. Because it is built directly into the database engine, it is often part of existing enterprise data architectures.

7. Oracle GoldenGate

Oracle GoldenGate is an enterprise-grade CDC and data replication platform designed for high availability, low latency, and real-time data movement. It supports a wide range of databases and operating environments, enabling continuous replication across distributed systems.

GoldenGate is commonly deployed in mission-critical enterprise environments where data consistency, ordering, and uptime are essential. It supports complex replication scenarios, including multi-database and cross-platform environments. Organizations often evaluate GoldenGate when building large-scale, always-on data integration architectures.

8. Talend

Talend provides Change Data Capture as part of its broader data integration and data management platform. Its CDC capabilities support log-based change capture from multiple databases and can be combined with transformation, data quality, and governance workflows.

Talend is frequently used by organizations that want CDC integrated into an end-to-end data pipeline rather than treated as a standalone capability. By combining incremental ingestion with transformation and quality controls, Talend supports use cases that require both data freshness and structured, analytics-ready outputs.

9. IBM InfoSphere Data Replication

IBM InfoSphere Data Replication offers CDC capabilities focused on reliability, consistency, and enterprise-scale data movement. It supports continuous replication for a variety of enterprise databases and platforms.

The tool is commonly used in regulated industries and large organizations where governance, auditability, and data integrity are critical. InfoSphere Data Replication is often deployed as part of broader IBM data management ecosystems, supporting long-running, business-critical replication workloads across complex environments.

10. Striim

Striim is a real-time data streaming platform that includes CDC capabilities for continuous data ingestion and processing. It captures changes from transactional systems and streams them to analytics platforms, cloud services, and messaging systems.

Striim is frequently evaluated for use cases requiring low-latency data movement and real-time processing. It supports both operational and analytical workloads, making it suitable for organizations building event-driven pipelines, streaming analytics, or continuously updated dashboards.

11. Apache NiFi

Apache NiFi is an open-source dataflow automation tool that supports CDC-style patterns through event-based ingestion and database monitoring. It provides a visual interface for designing and managing data flows across systems.

NiFi is often used in hybrid or custom data movement scenarios where teams require flexibility, routing control, and integration across diverse systems. While not a dedicated CDC tool, it is commonly evaluated for CDC-adjacent use cases in environments that prioritize customization and open-source tooling.

Together, these CDC platforms highlight the range of approaches available in 2026, helping organizations select solutions that align with their data architecture and real-time needs.

Choosing the right CDC platform in 2026

There is no one-size-fits-all CDC solution. The right platform depends on a combination of technical, operational, and organizational factors, including:

Your source systems and destinations

Different CDC tools specialize in different ecosystems. Some excel at relational databases, while others support streaming platforms, cloud warehouses, or hybrid environments. Compatibility across your current—and future—stack is essential.

Latency and freshness requirements

Not all use cases require real-time replication. Operational dashboards, fraud detection, or event-driven workflows may need near-instant updates, while analytical reporting can tolerate minutes or hours of delay. Aligning CDC latency with business needs prevents over-engineering.

Cloud, hybrid, or on-prem deployment needs

Infrastructure strategy plays a major role. Fully managed cloud services reduce maintenance overhead, while self-hosted or hybrid options offer greater control for security, compliance, or data residency requirements.

Desired level of operational control

Some teams prefer turnkey solutions that abstract away infrastructure management. Others want hands-on control over pipelines, configurations, and tuning—especially in complex or high-volume environments.

Scalability and growth expectations

As transaction volumes increase, CDC pipelines must scale without degrading performance or reliability. Evaluating how platforms handle growth helps avoid costly re-architecture later.

Integration with downstream analytics and AI

CDC delivers the most value when it feeds directly into analytics, machine learning, and operational workflows. Platforms that integrate smoothly with transformation, governance, and analytics tools shorten time to insight.

Governance, security, and compliance

Industries with strict regulatory requirements should assess encryption, access controls, audit logging, and data lineage support as part of the CDC evaluation.

Some teams prioritize managed simplicity, while others value flexibility or deep integration with streaming architectures. In many modern data stacks, CDC is just one component of a broader data platform strategy—one that must align with how organizations ingest, transform, analyze, and act on data at scale.

Final thoughts

Change Data Capture has become a foundational capability for modern data architectures. As real-time analytics, AI, and operational use cases continue to expand in 2026, CDC platforms play a critical role in keeping data fresh and reliable as pipelines scale.

By understanding how CDC tools differ and where they fit, organizations can design data pipelines that support both today’s analytics needs and tomorrow’s innovation.

Why Domo for Change Data Capture in 2026

As Change Data Capture becomes a foundational capability rather than a standalone tool, many organizations are rethinking how CDC fits into their broader data strategy. Capturing changes is only the first step—the real value comes from how quickly those changes can be governed, analyzed, and acted upon.

Domo supports CDC as part of a unified, cloud-native data platform. Incremental data changes from databases and applications can be ingested continuously and made immediately available for transformation, analytics, alerts, and AI-driven insights. By connecting CDC directly to downstream analytics and decision workflows, Domo helps teams reduce data latency while simplifying their overall data stack.

Rather than managing separate tools for CDC, transformation, visualization, and governance, organizations can centralize these capabilities in one environment. This approach enables faster time to insight, greater visibility across data pipelines, and a more scalable foundation as data volumes and real-time use cases continue to grow.

Ready to see how Change Data Capture fits into a unified data platform?

Contact Domo to learn how incremental data ingestion, real-time analytics, and AI-powered insights can work together to help your organization move faster—from data change to business impact.

