Building Data Pipelines in Python: Framework, How To Build, Examples

Python, the flexible programming language that’s been around since the late 1980s, continues to be a useful, easy-to-learn language. Its straightforward design and clarity make it user-friendly, whether you’re first starting out or have years of experience. In fact, many of the world’s leading names in technology, including Google, Amazon, OpenAI, and Quora, rely on Python for its comprehensive features and scalability.

What are data pipelines in Python?

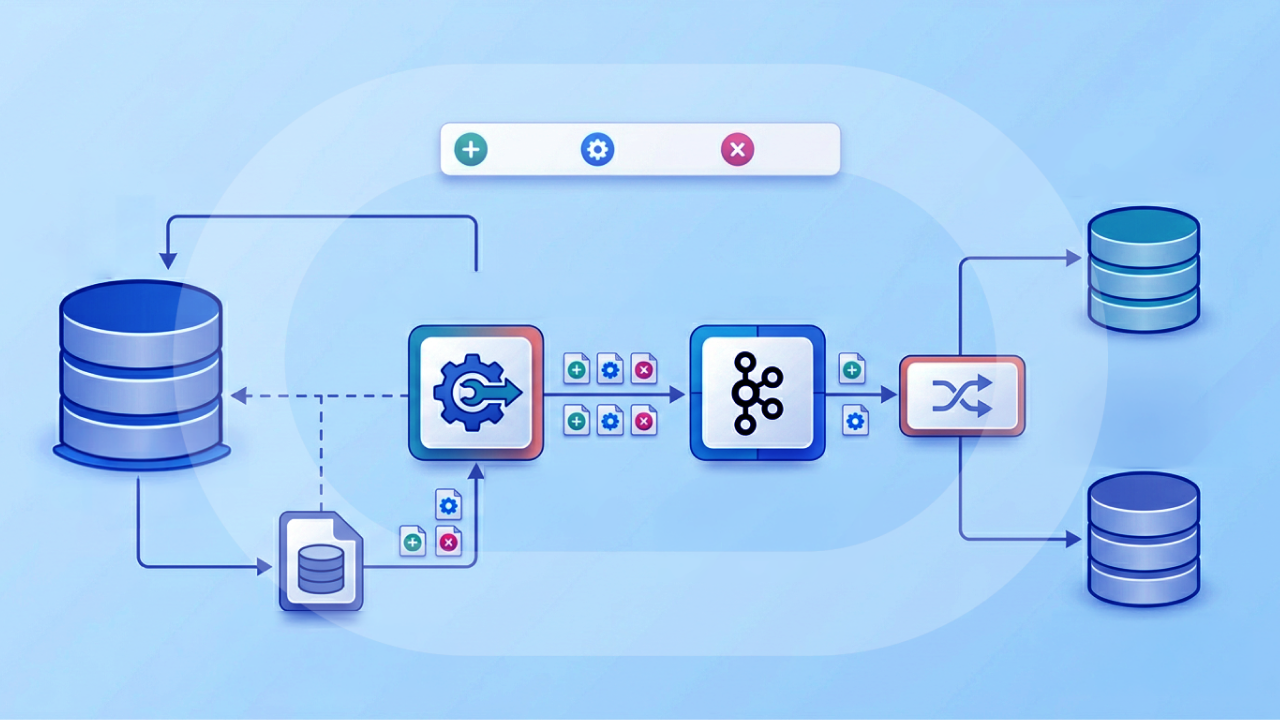

In Python, data pipelines are structured workflows designed to move, transform, and process data from one point to another in a systematic, automated way. They act as assembly lines for information: Raw data enters the pipeline, passes through a series of steps, such as cleaning, filtering, aggregating, or enriching, and emerges in a format that’s ready for analysis or storage.

These steps can be written in Python using the built-in csv module, third-party libraries like Pandas (a data analysis library), or specialized tools like Apache Airflow, Luigi, or Prefect for orchestration. The goal is to ensure that data flows efficiently and consistently from its source (whether it’s a database, API, or file) through transformation steps and into a target system like a data warehouse, dashboard, or machine learning model.

Because Python integrates easily with both data sources and storage solutions, it’s widely used for building pipelines that can handle anything from small-scale ETL (Extract, Transform, Load) processes to large-scale, distributed workflows. A well-constructed Python data pipeline not only streamlines operations but also ensures data quality, reproducibility, and scalability for analytics and decision-making.
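
To make the assembly-line idea concrete, here is a minimal sketch of an extract, transform, and load sequence using only the standard library. The sample data, the column names, and the in-memory `warehouse` list are assumptions for illustration; a real pipeline would read from and write to actual systems.

```python
import csv
import io

# Hypothetical raw input; in practice this would come from a file, API, or database.
RAW_CSV = """order_id,amount,country
1,19.99,us
2,,de
3,5.00,US
"""


def extract(text):
    """Read CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Drop rows with a missing amount and normalize types and casing."""
    cleaned = []
    for row in rows:
        if not row["amount"]:
            continue
        cleaned.append({
            "order_id": int(row["order_id"]),
            "amount": float(row["amount"]),
            "country": row["country"].upper(),
        })
    return cleaned


def load(rows, destination):
    """Append rows to an in-memory list (a stand-in for a warehouse table)."""
    destination.extend(rows)
    return len(rows)


def run_pipeline(text, destination):
    """Chain the three stages so data flows from source to destination."""
    return load(transform(extract(text)), destination)


warehouse = []
loaded = run_pipeline(RAW_CSV, warehouse)
```

Keeping each stage as its own function makes it easier to test the stages separately and to swap one out later, for example replacing `extract` with a database query.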

Benefits of building data pipelines in Python

Python data pipelines are a powerful way to manage and automate the flow of data between systems, ensuring it’s cleaned, transformed, and ready for use. In a world where organizations are inundated with massive volumes of data from multiple sources, pipelines make it possible to move data efficiently and consistently without constant manual oversight.

By using Python’s rich ecosystem of libraries and frameworks, businesses can build custom pipelines around their specific requirements, whether for analytics, reporting, or powering machine learning.

Automated workflows

Python data pipelines remove the repetitive, manual work involved in moving and preparing data. Once a pipeline is built, it can automatically handle ingestion, transformation, and output according to a set schedule or triggers. This means your team spends less time on tedious data handling and more time on analysis, innovation, and strategic decision-making.

Improved data quality

When data is processed manually, errors and inconsistencies are inevitable. Python data pipelines apply the same cleaning, validation, and formatting rules to every data set. That consistency makes the data more reliable and makes it easier to trust the results of reports, dashboards, and predictive models.

Scalability and flexibility

As your business grows, so does the amount and variety of data you handle. Python pipelines are highly adaptable, allowing you to scale from small ETL jobs to processing massive data sets across distributed systems. They can also be modified quickly to accommodate new data sources, formats, and processing logic without a complete rebuild.

Integration with multiple data sources

Python has a vast ecosystem of libraries and connectors that make it easy to pull data from diverse sources such as APIs, SQL and NoSQL databases, spreadsheets, and cloud storage. This ability to combine data from many systems into a single workflow creates a more complete and unified view of your operations.

Reproducibility

A well-defined pipeline ensures that the same steps can be executed repeatedly and, given the same inputs, produce identical results. This reproducibility is crucial for auditing, regulatory compliance, and verifying analytics or machine learning results. It also makes it simple for new team members to follow and maintain established workflows.

Faster insights

By streamlining the entire data process—from ingestion to transformation to loading—Python pipelines significantly reduce the time between receiving raw data and producing analysis-ready data sets. This speed empowers decision-makers to act faster, which can be a competitive advantage in fast-moving markets.

Cost efficiency

Automating your data workflows reduces the labor costs associated with manual handling and minimizes the impact of costly errors. Because Python is open-source and widely supported, you can also avoid high licensing fees while still making use of enterprise-grade capabilities.

How to build data pipelines in Python

Building a data pipeline in Python involves creating a streamlined process for collecting, transforming, and delivering data from various sources to a target system. While the specific tools and libraries you use may vary, the overall process typically follows a series of structured steps to ensure your data flows smoothly and reliably.

1. Define your requirements

Before writing any code, clearly outline what your pipeline should achieve. Identify your data sources, the transformations required, the target destination, and the frequency of data movement. This ensures you select the right tools and structure for your project.
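
One lightweight way to pin these decisions down is to write them as a small, validated specification before building anything. The sketch below is an assumption about how that might look; the `PipelineSpec` class, its fields, and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineSpec:
    """Requirements for one pipeline. All example values are hypothetical."""
    name: str
    sources: tuple
    destination: str
    schedule: str  # five-field cron expression, e.g. "0 2 * * *"
    transformations: tuple = ()

    def validate(self):
        """Fail early if the requirements are incomplete."""
        if not self.sources:
            raise ValueError("at least one data source is required")
        if len(self.schedule.split()) != 5:
            raise ValueError("schedule must be a 5-field cron expression")
        return self


spec = PipelineSpec(
    name="daily_sales",
    sources=("orders_db",),
    destination="warehouse.daily_sales",
    schedule="0 2 * * *",
    transformations=("deduplicate", "convert_currency"),
).validate()
```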

2. Choose your tools and libraries

Select Python libraries that match your requirements. Common choices include Pandas for data manipulation, SQLAlchemy for database connections, requests for API calls, and orchestration tools like Apache Airflow, Luigi, or Prefect for scheduling and automation.

3. Set up data ingestion

Write scripts to extract data from your chosen sources, such as APIs, databases, flat files, or cloud storage. Use connectors or APIs that support authentication, error handling, and retries to ensure data is pulled reliably.
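
As a rough illustration of retries, the sketch below wraps any fetch function in exponential backoff. The `fetch_with_retries` helper and the `flaky_source` function are hypothetical; in practice `fetch` would wrap a real API call or database query, and you would catch whichever exceptions your client library raises.

```python
import time


def fetch_with_retries(fetch, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call fetch() until it succeeds or attempts run out, backing off exponentially."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** (attempt - 1))


# Hypothetical flaky source that fails twice before returning data.
calls = {"count": 0}


def flaky_source():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary network failure")
    return [{"id": 1}, {"id": 2}]


delays = []  # record the waits instead of actually sleeping
records = fetch_with_retries(flaky_source, sleep=delays.append)
```

Passing `sleep` as a parameter is a design choice that keeps the example fast to run and easy to test; production code can rely on the default `time.sleep`.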

4. Clean and transform the data

Implement cleaning steps to remove duplicates, handle missing values, and standardize formats. Apply transformations that align the data with your target schema, business logic, or analytical requirements, using tools like Pandas or PySpark.
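
Here is a small sketch of those three cleaning steps in plain Python. It assumes rows are dicts with hypothetical `id`, `region`, and `date` fields and that dates arrive as MM/DD/YYYY. With Pandas you would typically reach for methods like `drop_duplicates` and `fillna` instead.

```python
from datetime import datetime


def clean(rows, default_region="UNKNOWN"):
    """Deduplicate on 'id', fill missing regions, and normalize dates to ISO format."""
    seen = set()
    cleaned = []
    for row in rows:
        if row["id"] in seen:
            continue  # keep only the first occurrence of each id
        seen.add(row["id"])
        region = (row.get("region") or default_region).strip().upper()
        # Assumes source dates arrive as MM/DD/YYYY; adjust the format for your data.
        date = datetime.strptime(row["date"], "%m/%d/%Y").date().isoformat()
        cleaned.append({"id": row["id"], "region": region, "date": date})
    return cleaned


raw_rows = [
    {"id": 1, "region": " emea ", "date": "01/15/2024"},
    {"id": 1, "region": "emea", "date": "01/15/2024"},
    {"id": 2, "region": None, "date": "02/03/2024"},
]
result = clean(raw_rows)
```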

5. Load the data into the destination

Send the processed data to your target system, whether that is a relational database, a data warehouse like Snowflake or BigQuery, or a cloud storage bucket. Ensure the load process is idempotent so it can run repeatedly without creating duplicates or inconsistencies.
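
One common way to get idempotency is to key each row on a natural identifier and replace it on conflict. The sketch below uses an in-memory SQLite database as a stand-in for the real destination; the `daily_sales` table and its columns are hypothetical, and warehouses such as Snowflake or BigQuery have their own merge syntax.

```python
import sqlite3


def load_daily_metrics(conn, rows):
    """Write rows keyed by day, so re-running the load never duplicates a day."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS daily_sales (day TEXT PRIMARY KEY, total REAL)"
    )
    # INSERT OR REPLACE overwrites the existing row when the primary key matches.
    conn.executemany(
        "INSERT OR REPLACE INTO daily_sales (day, total) VALUES (?, ?)",
        rows,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
batch = [("2024-01-01", 120.0), ("2024-01-02", 80.5)]
load_daily_metrics(conn, batch)
load_daily_metrics(conn, batch)  # running the same batch again changes nothing
row_count = conn.execute("SELECT COUNT(*) FROM daily_sales").fetchone()[0]
```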

6. Orchestrate and automate the pipeline

Use a scheduling tool to run the pipeline on a set schedule or in response to events. Orchestration ensures dependencies are respected and tasks run in the correct order. Tools like Airflow allow you to monitor pipeline health and troubleshoot failures quickly.
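
To show what "respecting dependencies" means, the following sketch orders tasks with the standard library's `graphlib` module (Python 3.9+). It is a toy stand-in for what an orchestrator does, not how Airflow itself is used; the task names and the `run_in_dependency_order` helper are hypothetical.

```python
from graphlib import TopologicalSorter


def run_in_dependency_order(tasks, dependencies):
    """Run each task only after all tasks it depends on have finished."""
    order = list(TopologicalSorter(dependencies).static_order())
    for name in order:
        tasks[name]()
    return order


log = []
tasks = {
    "extract": lambda: log.append("extract"),
    "transform": lambda: log.append("transform"),
    "load": lambda: log.append("load"),
}
# Maps each task to the set of tasks that must run before it.
dependencies = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}
order = run_in_dependency_order(tasks, dependencies)
```

A real orchestrator adds scheduling, retries, and a UI on top of this ordering, which is why a dedicated tool is usually worth adopting once a pipeline has more than a few steps.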

7. Monitor and maintain the pipeline

Set up logging, alerts, and dashboards to track pipeline performance, data quality, and run status. Regularly review and update the pipeline to accommodate changes in data sources, formats, or business requirements.
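
A minimal sketch of step-level logging and a basic data quality check, using the standard `logging` module. The `run_step` and `check_row_count` helpers and the log message format are assumptions for illustration. Alerts and dashboards would be built on top of log output like this.

```python
import logging
import time

logger = logging.getLogger("pipeline")


def run_step(name, func, *args):
    """Run one step, log its duration and outcome, and re-raise on failure."""
    start = time.perf_counter()
    try:
        result = func(*args)
    except Exception:
        logger.exception("step=%s status=failed", name)
        raise
    elapsed = time.perf_counter() - start
    logger.info("step=%s status=ok seconds=%.3f", name, elapsed)
    return result


def check_row_count(rows, minimum):
    """A basic data quality check: fail loudly if too few rows arrived."""
    if len(rows) < minimum:
        raise ValueError(f"expected at least {minimum} rows, got {len(rows)}")
    return rows
```

For example, `run_step("validate", check_row_count, rows, 100)` would log a failure and raise if fewer than 100 rows were extracted.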

Different frameworks for Python data pipelines

Here’s a quick tour of popular Python frameworks you can use to build, orchestrate, and scale data pipelines. They vary in focus from distributed compute to workflow orchestration to project structure, so the “best” choice depends on your team size, reliability needs, and where your bottlenecks are (compute vs coordination vs maintainability).

Apache Beam

Beam is a unified model for batch and streaming data processing. You write pipelines once in Python and execute them on multiple runners, like Google Cloud Dataflow, Apache Flink, or Apache Spark. It shines when you need windowing, exactly-once processing (where the chosen runner supports it), and portability across engines, though its learning curve is steeper than most orchestrators.

Dagster

Dagster is a modern orchestrator centered on software-defined assets (data products) with strong typing, IO managers, and built-in observability. It encourages testing and modularity, making lineage and deployments first-class. Great for teams that want maintainable, production-grade pipelines with clear contracts between steps.

Luigi

Luigi (from Spotify) is a lightweight Python framework for building batch pipelines via tasks with explicit dependencies and targets (usually files or tables). It’s simple and stable, good for on-prem or smaller setups, though it lacks some of the UI polish, scheduling features, and ecosystem breadth of newer tools.

Dask

Dask provides parallel and distributed computing in pure Python with familiar NumPy/Pandas/SciPy-like APIs. It’s ideal when your pipeline is compute-bound (e.g., large dataframe transforms) and you want to scale horizontally without rewriting code for Spark. It’s not an orchestrator; pair it with one if you want scheduling and retries.

Apache Airflow

Airflow is a battle-tested scheduler/orchestrator that represents workflows as DAGs (directed acyclic graphs) written in Python. Estuary listed it as the #1 Python ETL tool. With a huge ecosystem of operators and a mature UI, it’s often the default choice for coordinating SQL jobs, Spark tasks, and external systems. The tradeoff: verbose DAG code and a batch-first mindset (streaming requires add-ons).

Prefect

Prefect is an orchestration tool that emphasizes developer ergonomics: You write “flows” and “tasks” as normal Python, add decorators for retries/caching, and get a cloud or self-hosted control plane for observability. It’s flexible and easy to adopt, especially for teams that want less boilerplate than Airflow.

How to choose the right Python framework for your data pipeline

With so many Python frameworks for data pipelines, it’s easy to get lost in the feature lists. The best choice depends on your priorities: Are you optimizing for raw compute performance, ease of orchestration, project maintainability, or deployment flexibility? Here’s a more detailed guide to help narrow your decision.

Apache Beam

Pick Beam if you’re looking for a unified way to handle both batch and streaming data, and you want to avoid lock-in to a single execution engine (runner). Ideal for teams operating in multi-cloud or hybrid environments, or those already using backends like Dataflow or Flink.

Dagster

If data quality, lineage, and maintainability are top concerns and you want strong typing and modularity baked in, Dagster excels. Great for analytics engineering teams who treat pipelines like production software.

Luigi

Go with Luigi if you want simple, dependency-driven workflows without the complexity of heavier orchestrators. It’s perfect for small teams running batch jobs in stable, predictable environments.

Dask

Choose Dask if your biggest bottleneck is processing large data sets in Python and you want to scale Pandas/NumPy-like operations horizontally. It’s a compute framework, so you’ll likely still want an orchestrator for scheduling.

Apache Airflow

Airflow remains a solid pick when you want an industry-standard orchestrator with a huge plugin ecosystem, especially for integrating SQL jobs, Spark, and ETL processes. It’s a strong fit for organizations with mixed-tech workflows.

Prefect

If you want low-friction orchestration that feels like normal Python, Prefect is a great choice. It emphasizes flexibility, fast iteration, and an approachable learning curve while still offering retries, caching, and observability.

Real-world Python data pipeline examples

Python is a versatile language for building data pipelines, from small ETL scripts to large-scale distributed systems. Its rich ecosystem of libraries and frameworks makes it possible to connect to virtually any data source, transform data in flexible ways, and integrate with visualization or analytics platforms.

Below are some common examples of Python-based data pipelines and the kinds of problems they solve.

ETL for sales data aggregation

Tools:

- Pandas, SQLAlchemy, Airflow/Dagster for orchestration

Workflow:

- Extract: Query daily sales transactions from a PostgreSQL database using SQLAlchemy.

- Transform: Clean the data with Pandas—remove duplicates, standardize currency, convert time zones.

- Load: Insert aggregated daily metrics (total sales, average order value, top products) into a data warehouse like Snowflake or BigQuery.

Example use case: Generating daily dashboards for an e-commerce analytics team.
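
The transform step for this example might look something like the sketch below, written in plain Python so it runs without dependencies. The transaction fields (`day`, `product`, `amount`) are hypothetical, and "top product" is assumed to mean the product with the most orders. With Pandas, a `groupby` on the day column would do the same job.

```python
from collections import Counter, defaultdict


def aggregate_daily_sales(transactions):
    """Compute total sales, average order value, and top product for each day."""
    by_day = defaultdict(list)
    for tx in transactions:
        by_day[tx["day"]].append(tx)

    metrics = {}
    for day, txs in by_day.items():
        total = sum(tx["amount"] for tx in txs)
        orders_per_product = Counter(tx["product"] for tx in txs)
        metrics[day] = {
            "total_sales": round(total, 2),
            "avg_order_value": round(total / len(txs), 2),
            "top_product": orders_per_product.most_common(1)[0][0],
        }
    return metrics


transactions = [
    {"day": "2024-03-01", "product": "mug", "amount": 12.0},
    {"day": "2024-03-01", "product": "mug", "amount": 12.0},
    {"day": "2024-03-01", "product": "poster", "amount": 30.0},
    {"day": "2024-03-02", "product": "poster", "amount": 30.0},
]
daily = aggregate_daily_sales(transactions)
```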

Social media sentiment analysis

Tools:

- Tweepy (Twitter API), Dask, scikit-learn, Prefect for orchestration

Workflow:

- Extract: Pull tweets from a specific hashtag or account via the Twitter API.

- Transform: Use Dask to parallelize text preprocessing (tokenization, stopword removal).

- Analyze: Apply a sentiment analysis model with scikit-learn.

- Load: Store results in Elasticsearch for querying or in a BI tool for visualization.

Example use case: Tracking brand sentiment for marketing campaigns.

Real-time fraud detection

Tools:

- Apache Beam (running on Google Cloud Dataflow), BigQuery

Workflow:

- Extract/Stream: Ingest credit card transactions in near-real time from a Kafka topic.

- Transform: Apply Beam’s windowing to analyze transaction patterns over rolling 5-minute intervals.

- Detect: Flag anomalies based on spending thresholds or velocity checks.

- Load/Alert: Store flagged transactions in BigQuery and send alerts via a webhook to a fraud monitoring system.

Example use case: Preventing fraudulent purchases in a financial platform.
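
To illustrate the velocity check, here is a plain-Python sketch of a 5-minute sliding window per card. It is not Beam code and does not use Beam's windowing API; it only shows the underlying idea. The threshold, the tuple layout, and the function name are assumptions.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 5 * 60  # 5-minute window, as in the workflow above
MAX_TX_PER_WINDOW = 3    # hypothetical velocity threshold


def flag_velocity_anomalies(transactions):
    """Flag a transaction when its card exceeds the threshold within the window.

    Expects (timestamp_seconds, card_id) tuples sorted by timestamp.
    """
    recent = defaultdict(deque)  # card_id -> timestamps inside the current window
    flagged = []
    for ts, card in transactions:
        window = recent[card]
        # Evict timestamps that have fallen out of the 5-minute window.
        while window and ts - window[0] >= WINDOW_SECONDS:
            window.popleft()
        window.append(ts)
        if len(window) > MAX_TX_PER_WINDOW:
            flagged.append((ts, card))
    return flagged


stream = [(0, "A"), (60, "A"), (90, "B"), (120, "A"), (180, "A"), (600, "A")]
flagged = flag_velocity_anomalies(stream)
```

In this sample, card "A" makes four transactions within three minutes, so the fourth is flagged. The transaction at 600 seconds is not, because the earlier ones have aged out of the window.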

Machine learning model training pipeline

Tools:

- Kedro for structure, scikit-learn or TensorFlow, MLflow for tracking

Workflow:

- Extract: Pull labeled training data from cloud storage.

- Transform: Apply feature engineering and normalization.

- Train: Fit a machine learning model and log results with MLflow.

- Evaluate and Deploy: Save the model artifact, push to a model registry, and trigger deployment.

Example use case: Automating weekly retraining of recommendation algorithms.

Website log analysis

Tools:

- Luigi, Pandas, Matplotlib

Workflow:

- Extract: Read Apache/Nginx access logs from an S3 bucket.

- Transform: Parse logs into structured data with Pandas, filtering for status codes and paths.

- Load/Visualize: Generate plots for daily traffic, errors, and request latency.

Example use case: Monitoring website performance trends.
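
The parsing step could be sketched as follows with the standard `re` module. The pattern assumes the common log format; real Apache or Nginx configurations often use custom formats, so treat the regular expression and the sample lines as illustrative.

```python
import re
from collections import Counter

# Matches the common log format; real Apache/Nginx formats may differ.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) (?P<size>\S+)'
)


def parse_line(line):
    """Turn one access-log line into a dict, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    record = match.groupdict()
    record["status"] = int(record["status"])
    return record


def count_errors_by_path(lines):
    """Count server errors (5xx status codes) per request path."""
    errors = Counter()
    for line in lines:
        record = parse_line(line)
        if record and record["status"] >= 500:
            errors[record["path"]] += 1
    return errors


sample_lines = [
    '203.0.113.5 - - [10/Oct/2024:13:55:36 +0000] "GET /home HTTP/1.1" 200 2326',
    '203.0.113.9 - - [10/Oct/2024:13:55:40 +0000] "GET /api/cart HTTP/1.1" 502 0',
    'not a log line',
]
errors = count_errors_by_path(sample_lines)
```

The resulting records can be handed to Pandas for filtering and to Matplotlib for plotting, as the workflow describes.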

From Python pipelines to Domo dashboards

Python offers a powerful, flexible foundation for building data pipelines. Whether you’re orchestrating complex workflows, scaling compute-intensive jobs, or simply automating routine data tasks, Python is a great choice. The right framework depends on your specific needs, but every pipeline benefits from a clear path from raw data to information you can use.

That’s where Domo comes in. By connecting your Python-powered pipelines to Domo’s robust BI platform, you can transform processed data into interactive dashboards, real-time reports, and shareable insights that drive smarter decisions.

Ready to see how Domo can supercharge your Python data workflows? Explore how Domo integrates with your data pipelines.