What Is Data Pipeline Orchestration: Examples & Benefits

Modern organizations rely on a constant flow of accurate, timely information to make confident decisions. Data pipeline orchestration is what keeps that flow running smoothly by coordinating every step, from extracting raw data to delivering polished insights. It ensures tasks happen in the right order, at the right time, and with minimal errors, which becomes increasingly important as data sources multiply and workflows grow more complex.

In this article, we’ll explore what orchestration is, why it matters, and how organizations like yours are using it in real-life scenarios across different industries.

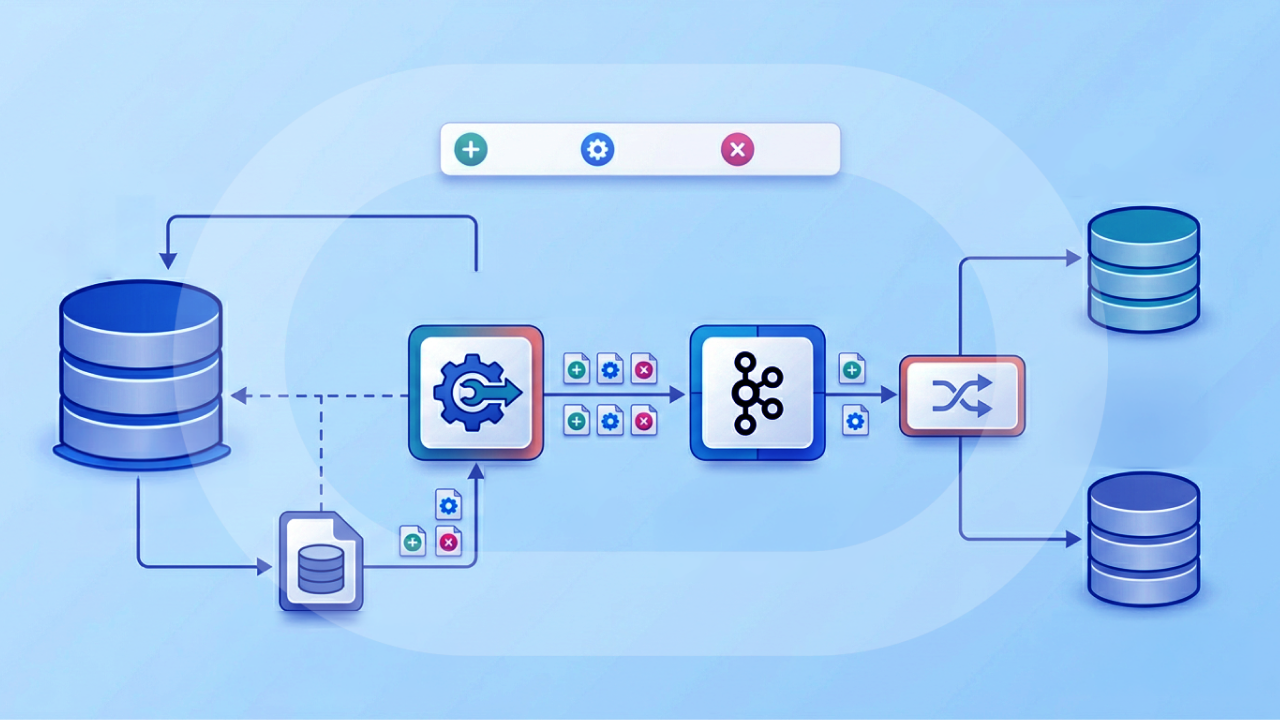

What is data pipeline orchestration?

Data pipeline orchestration ensures that all the steps in a data workflow happen in the right order, at the right time, and in the right way. Think of it as the conductor of an orchestra: each instrument (or in this case, each task in your pipeline) has its own part to play, but only with coordination do they work together to produce a polished, finely tuned performance.

In the world of data, a pipeline might have many steps: pulling data from different sources, cleaning and transforming it, running calculations, and then loading it into a dashboard or database. Orchestration tools like Apache Airflow, Dagster, or Prefect act as the “traffic controllers” for these steps. They handle scheduling (e.g., “Run every night at 2 a.m.”), manage dependencies (“Don’t start step B until step A is done”), and keep track of successes or failures. Without orchestration, you might have to manually trigger each step or write a lot of extra code just to manage timing and order. The result? Your workflows quickly become messy, inefficient, and error-prone.
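To make the idea concrete, here is a minimal sketch of what an orchestrator does under the hood, written with only the Python standard library (Python 3.9+ for `graphlib`). It is not how Airflow, Dagster, or Prefect are implemented; the task names (`extract`, `transform`, `load`) and the `run_pipeline` helper are hypothetical and exist only for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical three-step pipeline; each task is a plain function.
def extract():
    return [" 3", "4 ", "5"]

def transform(rows):
    return [int(r.strip()) for r in rows]

def load(values):
    return sum(values)

# name -> (function, names of upstream tasks whose results it receives)
TASKS = {
    "extract": (extract, []),
    "transform": (transform, ["extract"]),
    "load": (load, ["transform"]),
}

def run_pipeline(tasks):
    """Run tasks in dependency order and record a status for each one."""
    graph = {name: set(upstream) for name, (_, upstream) in tasks.items()}
    results, statuses = {}, {}
    for name in TopologicalSorter(graph).static_order():
        func, upstream = tasks[name]
        try:
            results[name] = func(*(results[u] for u in upstream))
            statuses[name] = "success"
        except Exception:
            statuses[name] = "failed"
            break  # in this linear pipeline every later step depends on this one
    return results, statuses

results, statuses = run_pipeline(TASKS)
```

Real orchestration tools add scheduling, retries, and a user interface on top of this basic loop, but the core job is the same: decide the order, run each step, and remember what happened.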

Benefits of data pipeline orchestration

Data pipeline orchestration is more than just “nice to have.” It is what keeps complex workflows running smoothly and reliably. Without orchestration, data teams often face missed deadlines, broken processes, and inconsistent results. Here are some of the main benefits and why orchestration is so important.

Automation and time savings

Orchestration automates the process of running your data pipeline, so you don’t have to manually start each step or keep track of what is next. Once the schedule and dependencies are set, the system takes care of everything, freeing up time for higher-value work.

Reliability and consistency

By controlling the order and timing of each task, orchestration ensures your data is processed the same way on every run. This reduces the risk of human error and helps ensure that downstream systems get the data they expect.

Error handling and recovery

If something goes wrong, such as a data source being unavailable, orchestration tools can alert you, retry failed steps, or skip non-critical tasks. This helps prevent small issues from growing into major delays or bad outputs.
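As a rough illustration of the retry behavior described above, the sketch below retries a failing step with exponential backoff. The function name `run_with_retries`, its parameters, and the `flaky_source` example are assumptions made for this example, not the API of any particular orchestration tool.

```python
import time

def run_with_retries(task, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call task() until it succeeds or max_attempts is reached."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # Exponential backoff: base_delay, 2 * base_delay, 4 * base_delay, ...
            sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical data source that is unavailable for the first two calls.
calls = {"count": 0}

def flaky_source():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source unavailable")
    return "rows"

# Record the delays instead of actually sleeping, so the example runs instantly.
delays = []
result = run_with_retries(flaky_source, sleep=delays.append)
```

Passing `sleep` as a parameter is a design choice that keeps the example fast and easy to test. In production you would normally leave the default in place.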

Scalability

As your data workflows grow in size and complexity, orchestration makes it possible to coordinate dozens or even hundreds of tasks across multiple systems. You can expand your pipelines without having to manually manage each moving part.

Visibility and monitoring

Most orchestration tools offer dashboards and logs that let you see exactly what is running, what succeeded, and what failed. This visibility helps with troubleshooting, reporting, and compliance, and gives you confidence that your pipelines are doing their job.

Components of data pipeline orchestration

Data pipeline orchestration involves several key components that work together to ensure data flows smoothly from source to destination. Each plays a specific role in making pipelines reliable, efficient, and adaptable to changing business requirements. Here are the main components you’ll find in most orchestration setups.

Scheduler

The scheduler determines when each part of the pipeline should run. It can trigger tasks at fixed times, in response to events, or based on the completion of other steps in the workflow.
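For the fixed-time case, the heart of a scheduler is a small calculation: given the current time, when should the job fire next? The sketch below works that out for a daily job. `next_daily_run` is a hypothetical helper, and it uses naive datetimes, so it deliberately ignores time zones and daylight saving time.

```python
from datetime import datetime, time, timedelta

def next_daily_run(now, run_at=time(2, 0)):
    """Return the next datetime at which a daily job should fire."""
    candidate = datetime.combine(now.date(), run_at)
    if candidate <= now:
        # Today's slot has already passed, so schedule tomorrow's.
        candidate += timedelta(days=1)
    return candidate

before_slot = next_daily_run(datetime(2024, 1, 1, 1, 0))
after_slot = next_daily_run(datetime(2024, 1, 31, 23, 0))
```

Production schedulers usually accept cron-style expressions and handle time zones explicitly, which this sketch leaves out.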

Task manager

The task manager handles the execution of individual steps within the pipeline. It ensures that each task runs in the correct order, has the right resources, and communicates its status back to the orchestration system.

Dependency management

Dependency management defines the relationships between tasks so that they run in the right sequence. This prevents downstream tasks from starting before upstream steps have completed successfully.
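One way to picture this rule is a runner that refuses to start a task unless everything upstream of it succeeded. The sketch below assumes Python 3.9+ (for `graphlib`). The task names and the `upstream_failed` status label are illustrative choices, not a standard.

```python
from graphlib import TopologicalSorter

def run_with_dependencies(tasks, graph):
    """tasks: name -> callable; graph: name -> set of upstream task names."""
    statuses = {}
    for name in TopologicalSorter(graph).static_order():
        # Upstream tasks always appear earlier in the order, so their
        # statuses are already recorded by the time we get here.
        if any(statuses[u] != "success" for u in graph.get(name, ())):
            statuses[name] = "upstream_failed"
            continue
        try:
            tasks[name]()
            statuses[name] = "success"
        except Exception:
            statuses[name] = "failed"
    return statuses

def broken_clean():
    raise ValueError("unexpected column")

# Hypothetical pipeline: "report" needs "clean", which needs "extract".
tasks = {
    "extract": lambda: None,
    "clean": broken_clean,
    "report": lambda: None,
    "archive": lambda: None,
}
graph = {"clean": {"extract"}, "report": {"clean"}, "archive": {"extract"}}
statuses = run_with_dependencies(tasks, graph)
```

Here `report` never starts because `clean` failed, while `archive` still runs because it only depends on `extract`. If the graph contains a circular dependency, `graphlib` raises a `CycleError` instead of producing an order.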

Monitoring and logging

Monitoring tools provide visibility into pipeline performance, showing which tasks are running, completed, or failed. Logging captures detailed information about each step, which is essential for troubleshooting and optimization.
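A simple way to get this kind of visibility is to wrap every task so that its status and duration are recorded no matter how it ends. The sketch below uses Python's built-in `logging` module. The `run_monitored` helper and the shape of the `history` records are assumptions for illustration.

```python
import logging
import time

logger = logging.getLogger("pipeline")

def run_monitored(name, task, history):
    """Run task(), then append its status and duration to history."""
    start = time.perf_counter()
    status = "failed"  # assume failure until the task returns normally
    try:
        result = task()
        status = "success"
        return result
    except Exception:
        logger.exception("task %s raised an error", name)
        raise
    finally:
        seconds = time.perf_counter() - start
        history.append({"task": name, "status": status, "seconds": seconds})
        logger.info("task=%s status=%s seconds=%.3f", name, status, seconds)

history = []
row_count = run_monitored("count_rows", lambda: 42, history)
try:
    run_monitored("bad_division", lambda: 1 / 0, history)
except ZeroDivisionError:
    pass  # the failure is already recorded in history and in the log
```

A dashboard is essentially a view over records like the ones in `history`, while the log lines carry the detail you need when troubleshooting.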

Alerting and error handling

Alerting systems notify the right people when something goes wrong, while error handling features can retry failed tasks, skip non-critical steps, or trigger backup workflows to keep processes moving.
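The difference between critical and non-critical steps can be sketched as a small failure policy: every failure raises an alert, but only a critical failure stops the run. The `run_tasks` function, the task names, and the `alert` callback below are hypothetical. In practice the callback might post to a chat channel or page an on-call engineer.

```python
def run_tasks(tasks, alert):
    """tasks: list of (name, callable, critical) tuples in run order."""
    statuses = {}
    for name, func, critical in tasks:
        try:
            func()
            statuses[name] = "success"
        except Exception as exc:
            alert(f"{name} failed: {exc}")
            if critical:
                statuses[name] = "failed"
                break  # stop the run; remaining tasks are not started
            statuses[name] = "skipped"  # non-critical: note it and continue
    return statuses

def refresh_cache():
    raise RuntimeError("cache offline")

alerts = []
statuses = run_tasks(
    [
        ("load_orders", lambda: None, True),
        ("refresh_cache", refresh_cache, False),
        ("build_report", lambda: None, True),
    ],
    alert=alerts.append,
)
```

In this run the cache refresh fails, someone is notified, and the report is still built.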

Integration connectors

Integration connectors link the pipeline to data sources, destinations, and other systems. These connectors handle the technical details of moving data in and out of databases, APIs, file systems, and cloud services.
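Connectors are easier to swap when the pipeline only depends on a small, shared interface. The sketch below shows one possible shape for that interface using `typing.Protocol`. The `Source`, `Sink`, `ListSource`, `CsvSink`, and `copy_rows` names are invented for this example and do not come from any specific tool.

```python
import csv
import io
from typing import Iterable, Protocol

class Source(Protocol):
    def read(self) -> Iterable[dict]: ...

class Sink(Protocol):
    def write(self, rows: Iterable[dict]) -> int: ...

class ListSource:
    """Stand-in for a database or API connector: serves rows from memory."""
    def __init__(self, rows):
        self.rows = rows

    def read(self):
        return iter(self.rows)

class CsvSink:
    """Writes rows as CSV to any file-like object."""
    def __init__(self, stream, fieldnames):
        self.writer = csv.DictWriter(stream, fieldnames=fieldnames)
        self.writer.writeheader()

    def write(self, rows):
        count = 0
        for row in rows:
            self.writer.writerow(row)
            count += 1
        return count

def copy_rows(source: Source, sink: Sink) -> int:
    """Move every row from source to sink and return how many were written."""
    return sink.write(source.read())

buffer = io.StringIO()
source = ListSource([{"id": 1, "amount": 9.5}, {"id": 2, "amount": 20}])
written = copy_rows(source, CsvSink(buffer, ["id", "amount"]))
```

Because `copy_rows` only relies on `read` and `write`, replacing the in-memory source with a real database connector would not require changes to the pipeline logic.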

Examples of data pipeline orchestration

From retail and finance to healthcare and manufacturing, many industries rely on carefully coordinated data workflows to keep information flowing smoothly and insights arriving on time. Orchestration tools manage complex sequences of tasks, ensuring each step runs in the right order and keeping teams informed when issues arise.

The following examples show how this coordination works in practice and the value it delivers in real-world scenarios.

E-commerce sales reporting

An online retailer uses an orchestration tool like Apache Airflow to coordinate nightly data pulls from multiple systems, including the order management platform, payment processor, and customer database. The pipeline transforms the raw data into a standardized format, loads it into a data warehouse, and updates sales dashboards by morning so teams can start the day with the previous day’s complete numbers.

Streaming fraud detection

A financial services company uses Apache Beam with Google Cloud Dataflow to orchestrate a real-time fraud detection pipeline. Incoming credit card transactions are streamed from Kafka, enriched with customer history, and scored for fraud risk. The orchestration layer ensures each processing step happens in sequence and with minimal delay, triggering alerts within seconds when suspicious activity is detected.

Marketing campaign analytics

A marketing team uses Prefect to orchestrate weekly pipelines that gather campaign performance metrics from multiple advertising platforms, CRM data from Salesforce, and website engagement data from Google Analytics. The pipeline merges, cleans, and enriches the data, then updates a Domo dashboard that stakeholders can use to evaluate campaign ROI.

Healthcare data integration

A hospital network uses Dagster to orchestrate data pipelines that pull patient records from multiple systems, apply compliance checks for HIPAA regulations, and load approved data into a secure analytics environment. Orchestration ensures that sensitive information is processed in the correct order and that compliance rules are enforced at each stage.

IoT device monitoring

A manufacturing company uses Luigi to coordinate a daily pipeline that collects sensor readings from thousands of IoT devices on the factory floor. The pipeline aggregates and analyzes the data to spot maintenance needs before equipment fails. Orchestration ensures each step, from data collection to analysis, runs in the correct order with minimal downtime.

Supply chain optimization

A global logistics company uses Airflow to orchestrate a daily pipeline that combines shipment tracking data, warehouse inventory levels, and supplier delivery schedules. The pipeline analyzes this information to identify bottlenecks, forecast demand, and optimize delivery routes. Orchestration ensures the analysis is completed before morning planning meetings so operations managers have the latest insights.

Challenges with data pipeline orchestration

Data pipeline orchestration can streamline workflows and improve reliability, but it also comes with its own set of hurdles. These challenges often become more noticeable as pipelines grow in size, complexity, and importance to the business. Understanding these obstacles is the first step to addressing them effectively.

Complex dependencies

Pipelines often involve multiple tasks that must run in a specific order. As the number of dependencies grows, coordinating them becomes more complicated and increases the risk of failures if one step is delayed or skipped. This is especially true when pipelines span multiple teams or data sources, where delays in one area can have a ripple effect across the entire workflow.

Clear documentation and dependency mapping help, but orchestration tools also need to manage these relationships actively. TechRadar has written extensively about the troubles DevOps teams face, and not understanding the complexity of their own data pipelines topped its list.

Scalability limits

A pipeline that works for small data volumes can break down when traffic spikes or the business starts handling much larger data sets. Without proper scaling strategies, this can lead to bottlenecks and missed deadlines. Teams need orchestration platforms that support horizontal scaling, distributed processing, and workload balancing so performance remains consistent as data volumes grow.
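Part of scaling is simply not running tasks one at a time when they do not depend on each other. The sketch below runs independent tasks concurrently on a thread pool while still respecting dependencies. It assumes Python 3.9+ and uses threads on one machine as a stand-in for the distributed workers a real platform would provide. The `run_parallel` function and the task names are hypothetical.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter

def run_parallel(tasks, graph, max_workers=4):
    """Run tasks concurrently; start each one once its upstream tasks finish."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    finished = []
    running = {}  # future -> task name
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Submit every task whose dependencies are all complete.
            for name in sorter.get_ready():
                running[pool.submit(tasks[name])] = name
            done, _ = wait(running, return_when=FIRST_COMPLETED)
            for future in done:
                name = running.pop(future)
                future.result()  # re-raise the error if the task failed
                finished.append(name)
                sorter.done(name)  # unblocks tasks that were waiting on this one
    return finished

# "orders" and "customers" are independent; "join" needs both.
tasks = {"orders": lambda: None, "customers": lambda: None, "join": lambda: None}
graph = {"join": {"orders", "customers"}}
finished = run_parallel(tasks, graph)
```

The two extract tasks may finish in either order, but `join` always runs last.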

Error handling and recovery

When a step fails, it can be difficult to automatically retry or resume the pipeline without manual intervention. Poor error handling can lead to bottlenecks, data loss, or missed deadlines, and in some cases, the entire pipeline may have to be restarted from scratch. Effective orchestration tools should include automated retries, alerts, and the ability to restart from the point of failure to minimize downtime.
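Restarting from the point of failure usually comes down to remembering which steps already finished. The sketch below keeps that record in an in-memory set. A real system would persist it to durable storage, and the steps would need to be safe to re-run. The function and step names are assumptions for illustration.

```python
def run_from_checkpoint(steps, completed):
    """steps: ordered list of (name, callable); completed: set of finished names."""
    for name, func in steps:
        if name in completed:
            continue  # finished in an earlier run; do not repeat it
        func()
        completed.add(name)  # record progress only after the step succeeds
    return completed

calls = []
state = {"fail_once": True}

def extract():
    calls.append("extract")

def transform():
    calls.append("transform")
    if state["fail_once"]:
        state["fail_once"] = False
        raise RuntimeError("temporary failure")

def load():
    calls.append("load")

steps = [("extract", extract), ("transform", transform), ("load", load)]
completed = set()
try:
    run_from_checkpoint(steps, completed)  # first run stops at transform
except RuntimeError:
    pass
run_from_checkpoint(steps, completed)  # second run resumes at transform
```

Note that `extract` runs only once across both attempts, which is exactly the time and compute a full restart would have wasted.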

Monitoring and visibility

Without clear, real-time insight into pipeline status, it is harder to troubleshoot issues or verify that data is flowing as expected. Teams often lack dashboards or alerts that give them confidence in their workflows. Good orchestration platforms provide visual representations of workflows, historical logs, and proactive notifications that allow teams to catch and resolve issues before they impact the business.

Integration complexity

Pipelines often span different tools, databases, and services, each with its own data formats and connection requirements. Getting all of them to work together seamlessly and maintaining those connections over time can be a major challenge. Changes in APIs, software updates, or security policies can disrupt existing integrations, so orchestration systems must be adaptable and easy to update.

Cost control

Complex orchestration setups can consume significant compute resources, especially when pipelines are running around the clock. Without careful optimization, companies can see costs rise sharply as they scale their pipelines. Effective cost management may involve optimizing task scheduling, reducing unnecessary processing, and using cloud cost monitoring tools to keep expenses in check.
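One common way to reduce unnecessary processing is to skip work when the input has not changed since the last run. The sketch below does this by comparing content hashes. The `process_if_changed` helper and the in-memory `seen` dictionary are illustrative assumptions, and a real pipeline would store the hashes somewhere durable.

```python
import hashlib

def process_if_changed(key, payload, seen, process):
    """Run process(payload) only if payload differs from the last one seen for key."""
    digest = hashlib.sha256(payload).hexdigest()
    if seen.get(key) == digest:
        return False  # identical input: skip the expensive work
    process(payload)
    seen[key] = digest
    return True

seen = {}
processed = []
first = process_if_changed("orders.csv", b"id,total\n1,10\n", seen, processed.append)
repeat = process_if_changed("orders.csv", b"id,total\n1,10\n", seen, processed.append)
changed = process_if_changed("orders.csv", b"id,total\n1,12\n", seen, processed.append)
```

Only the first and third calls do any processing, so a pipeline that runs around the clock pays for compute only when there is something new to compute.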

The future of data pipeline orchestration

The future of data pipeline orchestration is moving toward greater intelligence, flexibility, and real-time adaptability. As organizations manage larger and more complex data ecosystems, orchestration will become less about simply scheduling jobs and more about managing dynamic workflows that can adjust instantly to changing conditions.

AI-driven orchestration

Predicting and preventing failures

Artificial intelligence will play a bigger role in this shift, helping pipelines run more efficiently by predicting failures, automatically adjusting schedules, and even rerouting workflows when problems occur. This proactive approach will reduce downtime and improve overall reliability.

Dynamic scheduling and routing

Rather than relying solely on fixed schedules, future pipelines will increasingly run in real time, triggering actions the moment new data arrives or certain conditions are met. This will allow businesses to respond more quickly and work from information that is always up to date.
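Event-driven triggering can be sketched as a small publish and subscribe mechanism: pipelines register interest in an event, and they run the moment that event is published. The `EventBus` class, the `file_arrived` event name, and the handler below are hypothetical. Real platforms typically rely on message queues or cloud event services for this.

```python
from collections import defaultdict

class EventBus:
    """Minimal in-process publish/subscribe mechanism."""
    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        # Run every pipeline registered for this event as soon as it arrives.
        return [handler(payload) for handler in self._handlers.get(event_type, [])]

def ingest(event):
    return f"ingested {event['path']}"

bus = EventBus()
bus.subscribe("file_arrived", ingest)
outcomes = bus.publish("file_arrived", {"path": "orders.csv"})
ignored = bus.publish("sensor_alert", {"device": "pump-7"})
```

No clock is involved here: the ingest step runs because a file arrived, not because it is 2 a.m.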

Unified, cross-environment orchestration

Orchestration platforms will also unify management across different environments, from on-premises systems to multiple clouds and SaaS tools, providing a single control point for complex, distributed workflows.

Modular, tool-agnostic pipelines

Rather than stitching together traditional point-to-point tools, enterprises will likely favor orchestration platforms that emphasize modularity, adaptability, and tool-agnostic workflows, so pipelines can evolve as business needs grow. Forbes recently wrote about how unified data pipelines of this kind are the backbone of AI agents.

The final step: turning orchestrated data into action

Effective data pipeline orchestration ensures that the right data reaches the right place at the right time, empowering teams to make informed, timely decisions. While orchestration tools coordinate the technical flow, the value comes when that data is transformed into actionable insights.

Domo connects seamlessly to your orchestrated pipelines, bringing together data from across your organization into a single platform where it can be visualized, shared, and acted on. Whether your pipelines run in Python, across multiple clouds, or in real time, Domo helps turn that orchestrated flow into clear, impactful stories that drive results.
