Benefits

If you manage data pipelines in any production environment, you already know the anxiety of deploying changes to critical dataflows. This agent acts as your always-on safety net.

- Change impact visibility: Every dataflow modification is automatically summarized by AI before it reaches production, giving data engineers a clear, plain-language explanation of what changed and what downstream assets are affected

- Risk prevention at the gate: The governance layer evaluates proposed changes against criticality ratings and dependency chains, flagging high-risk modifications before they can break dashboards, reports, or downstream pipelines

- Faster incident diagnosis: When data issues arise, AI-powered change summaries let engineers quickly identify which recent modification caused the problem without manually diffing dataflow versions

- Natural language data exploration: Non-technical stakeholders can ask plain-language questions about differences between dataset versions, reducing the burden on data teams to investigate and explain every anomaly

- Audit trail automation: Every change, approval, and impact assessment is logged automatically, creating a complete governance record without requiring engineers to maintain manual documentation

- Reduced rollback frequency: By catching risky changes before deployment, the agent cuts down on emergency rollbacks and the production downtime they cause

Problem Addressed

Data engineering teams operating large-scale analytics environments face a persistent governance challenge: changes to critical data pipelines can have cascading effects that are difficult to predict and expensive to repair. A single modification to a dataflow transformation can break dozens of downstream dashboards, corrupt calculated metrics, or introduce silent data quality issues that go undetected for days.

The traditional approach relied on peer review of dataflow code, but reviewers lacked the tooling to quickly understand the full impact of proposed changes. Engineers spent hours tracing dependency chains manually. When issues did reach production, diagnosing the root cause meant comparing dataflow versions line by line. Meanwhile, business users noticed data discrepancies in their reports but had no way to understand what had changed or why. The organization needed a governance layer that could evaluate change risk automatically, explain modifications in plain language, and give non-technical users the ability to investigate data differences on their own.

What the Agent Does

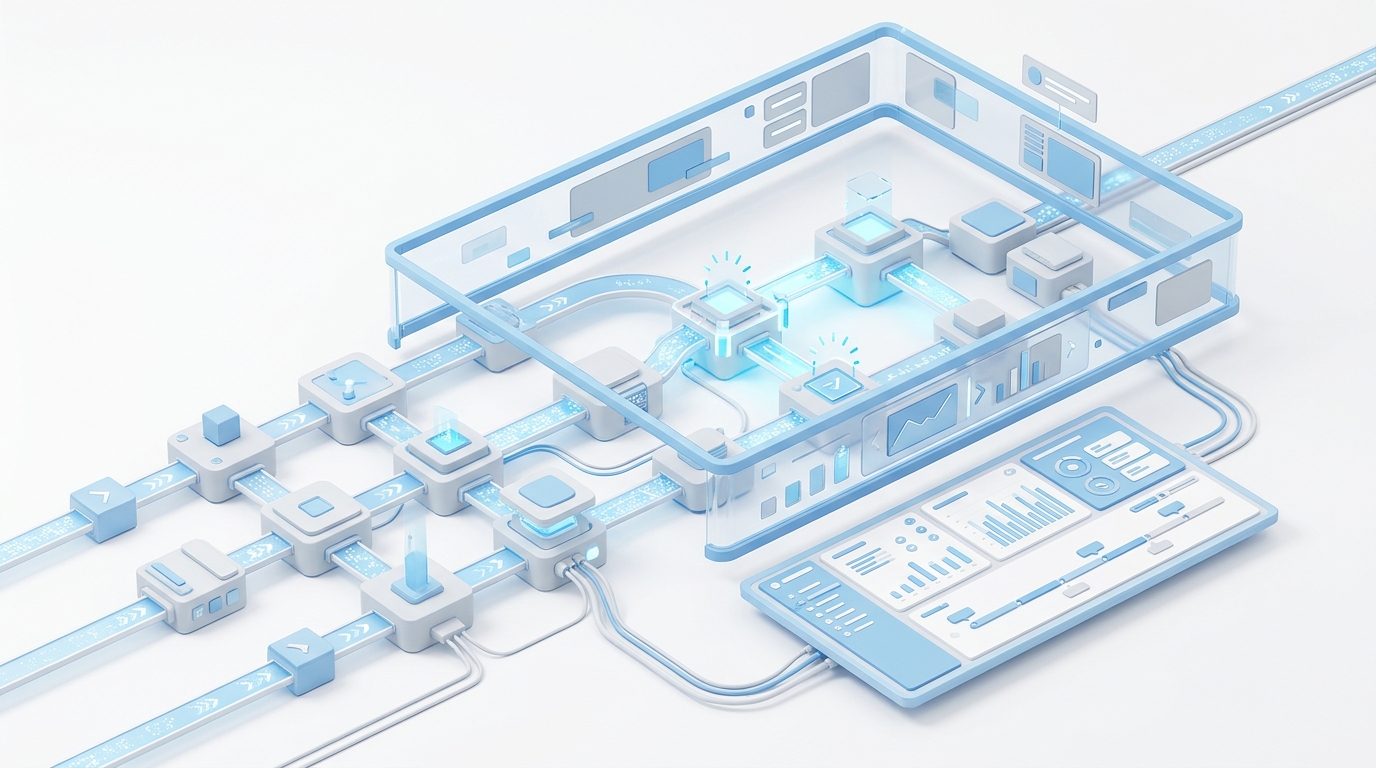

The agent provides a comprehensive governance and productivity toolkit that sits between data engineers and their production pipeline environment:

- Change detection and summarization: When a dataflow is modified, the agent compares the current and proposed versions, then generates a plain-language summary of what changed, including added or removed transformations, altered join logic, and modified filter conditions

- Dependency impact analysis: The agent maps downstream consumers of the affected dataflow (datasets, cards, alerts, and other dataflows) and scores the blast radius of the proposed change based on asset criticality and usage patterns

- Risk classification: Each proposed change is classified as low, medium, or high risk based on the criticality of affected assets, the nature of the modification, and the historical stability of the pipeline

- AI-Readiness Q&A: Users can ask natural-language questions about differences between output datasets, such as why row counts changed, which columns have new null values, or how aggregated metrics shifted between versions

- Approval workflow integration: High-risk changes are routed through configurable approval workflows, ensuring that the right stakeholders review and approve modifications before they reach production

- Historical change tracking: The agent maintains a complete history of all dataflow modifications with their AI-generated summaries, making it easy to trace when and why specific changes were made during incident investigations
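
To make the dependency impact analysis and risk classification steps above more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the agent's actual implementation: the asset names, the `DOWNSTREAM` graph, the `CRITICALITY` weights, and the score thresholds are all hypothetical, and the score uses only asset criticality, leaving out usage patterns, the nature of the modification, and historical pipeline stability.

```python
from collections import deque

# Hypothetical dependency graph: asset -> assets that consume it directly.
DOWNSTREAM = {
    "sales_dataflow": ["sales_dataset"],
    "sales_dataset": ["revenue_card", "forecast_dataflow"],
    "forecast_dataflow": ["forecast_dataset"],
    "forecast_dataset": ["forecast_card"],
}

# Hypothetical criticality weights (1 = low, 3 = mission-critical).
CRITICALITY = {
    "sales_dataset": 3,
    "revenue_card": 3,
    "forecast_dataflow": 2,
    "forecast_dataset": 2,
    "forecast_card": 1,
}


def downstream_assets(root, graph):
    """Breadth-first walk returning every asset reachable from root."""
    found = []
    visited = {root}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in visited:
                visited.add(child)
                found.append(child)
                queue.append(child)
    return found


def blast_radius(root, graph, criticality):
    """Sum criticality weights over all downstream assets (default weight 1)."""
    return sum(criticality.get(a, 1) for a in downstream_assets(root, graph))


def classify(score, medium_at=5, high_at=10):
    """Map a score to a risk tier; the thresholds are illustrative only."""
    if score >= high_at:
        return "high"
    if score >= medium_at:
        return "medium"
    return "low"


if __name__ == "__main__":
    for flow in ("sales_dataflow", "forecast_dataflow"):
        score = blast_radius(flow, DOWNSTREAM, CRITICALITY)
        print(flow, score, classify(score))
```

In this toy graph, a change to `sales_dataflow` reaches five downstream assets, while a change to `forecast_dataflow` reaches only two, so the two changes land in different risk tiers. A real deployment would pull the graph and criticality ratings from the platform's lineage metadata rather than hard-coded dictionaries.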

Standout Features

- AI-generated change summaries: Instead of reading raw dataflow diffs, engineers get concise, context-aware explanations of what each modification does and why it matters, written in language that both technical and non-technical stakeholders can understand

- Blast radius scoring: Every proposed change receives a quantified impact score based on the number and criticality of downstream dependencies, making it easy to distinguish routine updates from high-risk modifications that require extra scrutiny

- Natural language dataset comparison: The AI-Readiness layer lets users ask conversational questions about how datasets differ between versions, eliminating the need for custom SQL queries or manual data profiling to investigate anomalies

- Criticality-aware gating: The governance rules are configurable by pipeline criticality tier, so mission-critical dataflows get stricter change controls while development pipelines maintain engineering velocity

- Proactive drift detection: The agent continuously monitors output datasets for unexpected changes in row counts, schema, or value distributions, alerting engineers to silent data quality issues even when no explicit dataflow changes were made
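
The drift detection idea in the list above can be sketched as a comparison between two dataset snapshots. This is a simplified illustration, not the agent's implementation: the snapshot structure (`row_count` plus a `schema` mapping of column name to type), the `detect_drift` function, and the 10% row-count tolerance are all assumptions, and value-distribution checks are left out.

```python
def detect_drift(previous, current, row_tolerance=0.10):
    """Compare two snapshot profiles and return a list of readable findings.

    Each snapshot is a hypothetical dict of the form:
        {"row_count": int, "schema": {column_name: type_name}}
    """
    findings = []

    # Row-count check: flag relative changes larger than the tolerance.
    prev_rows, curr_rows = previous["row_count"], current["row_count"]
    if prev_rows > 0:
        change = abs(curr_rows - prev_rows) / prev_rows
        if change > row_tolerance:
            findings.append(f"row count changed by {change:.0%}")
    elif curr_rows != prev_rows:
        findings.append("row count changed from zero")

    # Schema check: removed, added, and retyped columns.
    prev_cols, curr_cols = previous["schema"], current["schema"]
    for col in sorted(set(prev_cols) - set(curr_cols)):
        findings.append(f"column removed: {col}")
    for col in sorted(set(curr_cols) - set(prev_cols)):
        findings.append(f"column added: {col}")
    for col in sorted(set(prev_cols) & set(curr_cols)):
        if prev_cols[col] != curr_cols[col]:
            findings.append(
                f"column type changed: {col} ({prev_cols[col]} -> {curr_cols[col]})"
            )
    return findings


if __name__ == "__main__":
    yesterday = {"row_count": 1000, "schema": {"id": "int", "amount": "float"}}
    today = {
        "row_count": 800,
        "schema": {"id": "int", "amount": "str", "region": "str"},
    }
    for finding in detect_drift(yesterday, today):
        print(finding)
```

Because the check compares outputs rather than dataflow definitions, it can surface a problem even when nobody edited the pipeline, for example when an upstream source silently changes a column type.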

Who This Agent Is For

This agent is designed for data teams that operate production analytics environments where pipeline stability directly impacts business decision-making.

- Data engineers responsible for maintaining and modifying production dataflows who need a faster way to assess change impact before deployment

- BI administrators managing large card and dashboard environments who need early warning when upstream pipeline changes threaten report accuracy

- Data governance teams establishing change control processes for critical data assets without creating bottlenecks that slow engineering velocity

- Analytics managers who need to quickly investigate and explain data discrepancies reported by business users

- Platform administrators responsible for maintaining data quality SLAs across hundreds of interconnected pipelines and datasets

Ideal for: Enterprise analytics teams, data platform operators, business intelligence organizations, and any environment where data pipeline changes carry production risk that requires structured governance.