Saved hundreds of hours of manual processes when forecasting game viewership with Domo's automated dataflow engine.

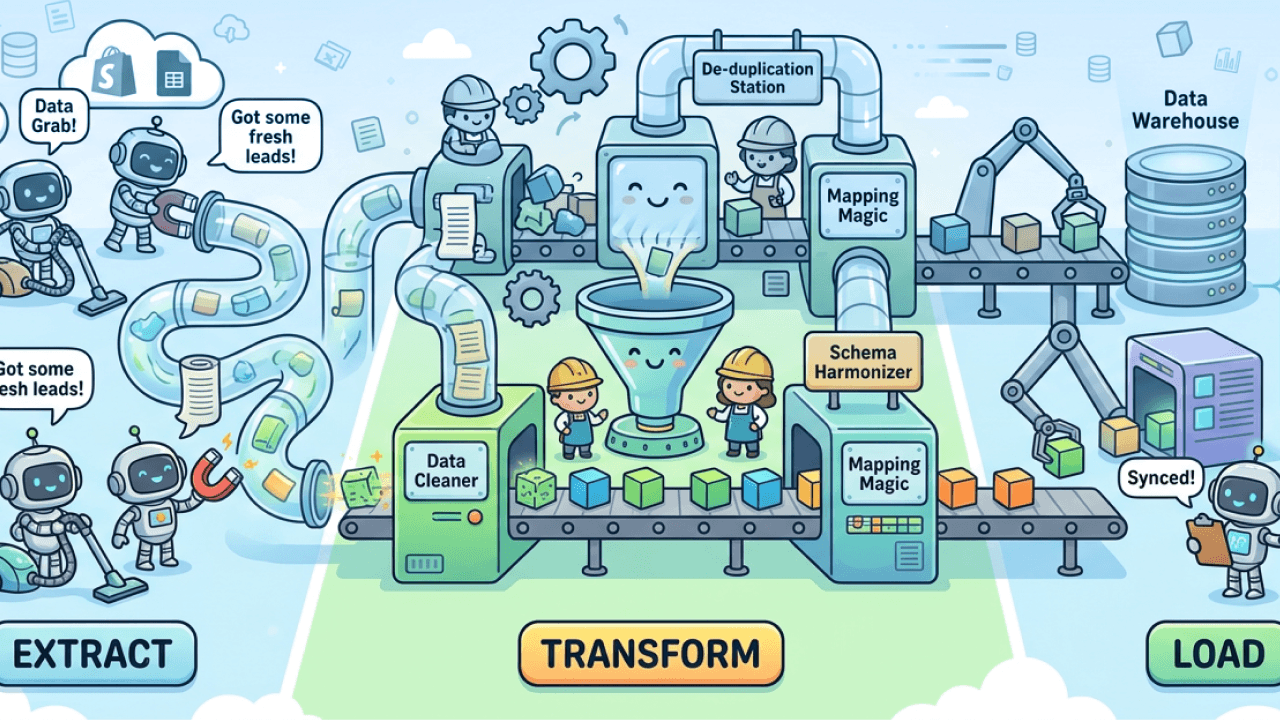

Extract, transform, and load (ETL) is a foundational process in modern data operations. With the explosion of cloud data sources, analytical workloads, and real-time insight requirements, SaaS-based ETL platforms have become the backbone of enterprise data architecture. In this article, we’ll define what a SaaS ETL platform is, explore its benefits and the key capabilities to look for, and highlight 10 of the best SaaS ETL tools to consider in 2026.

A SaaS ETL (Extract, Transform, Load) platform is a cloud-hosted service that lets organizations collect data from disparate sources, transform that data into a usable format, and load it into target systems such as data warehouses, lakes, analytics tools, or operational applications.

Unlike traditional on-premises ETL solutions, SaaS ETL tools are delivered via the cloud, reducing infrastructure overhead, accelerating deployment, and simplifying maintenance.

Key characteristics of SaaS ETL platforms include:

Organizations of all sizes use SaaS ETL tools to modernize data workflows and improve agility. Some of the most compelling benefits include:

SaaS ETL platforms eliminate the need for hardware provisioning and extensive setup, enabling teams to start extracting and transforming data quickly.

Cloud-native ETL solutions can elastically scale with data volume and complexity, supporting both batch and streaming workloads.

With infrastructure managed by the provider, internal teams can focus more on data strategy and less on system maintenance, patching, and capacity planning.

SaaS vendors frequently roll out new features, connectors, and performance improvements that keep organizations on the cutting edge without manual upgrades.

Modern SaaS ETL platforms support hundreds of prebuilt connectors to databases, APIs, applications, and cloud services, simplifying onboarding and connectivity.

Selecting the right ETL tool depends on your specific business goals, technical environment, and long-term data strategy. Below are key features and capabilities to evaluate:

A broad catalog of connectors ensures you can extract data from all essential systems, including SaaS apps, databases, files, streaming sources, and cloud storage. In 2026, most organizations operate across dozens—if not hundreds—of data sources, ranging from CRM and ERP platforms to marketing tools, financial systems, and custom applications.

A strong connector ecosystem reduces the need for custom development, accelerates onboarding of new data sources, and lowers ongoing maintenance effort.

Beyond sheer quantity, evaluate connector depth and reliability. Look for connectors that support incremental loads, change data capture (CDC), schema evolution, and API version updates.
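To make the idea of an incremental load concrete, here is a minimal sketch in Python. It assumes each source row carries an `updated_at` timestamp and that the pipeline stores a cursor (a "high-water mark") between runs. The function name `incremental_extract` and the sample rows are hypothetical and do not come from any vendor's connector.

```python
from datetime import datetime, timezone


def incremental_extract(rows, last_synced_at):
    """Return rows changed after the cursor, plus the new cursor value."""
    changed = [r for r in rows if r["updated_at"] > last_synced_at]
    # Advance the cursor to the newest change seen; keep it if nothing changed.
    new_cursor = max((r["updated_at"] for r in changed), default=last_synced_at)
    return changed, new_cursor


# Hypothetical source table with a last-modified timestamp per row.
source_rows = [
    {"id": 1, "updated_at": datetime(2026, 1, 1, tzinfo=timezone.utc)},
    {"id": 2, "updated_at": datetime(2026, 1, 3, tzinfo=timezone.utc)},
    {"id": 3, "updated_at": datetime(2026, 1, 5, tzinfo=timezone.utc)},
]

cursor = datetime(2026, 1, 2, tzinfo=timezone.utc)  # saved from the previous run
changed, cursor = incremental_extract(source_rows, cursor)
print([r["id"] for r in changed])  # [2, 3]
```

A full reload would move all three rows on every run. With the cursor, only rows 2 and 3 are moved, and an immediate second run moves nothing.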

Some platforms also provide configurable sync frequencies and real-time ingestion options, which are increasingly important for operational analytics and time-sensitive use cases. As your application stack evolves, a flexible and continuously expanding connector library ensures your ETL platform can grow with your business without becoming a bottleneck.

Look for platforms that offer both simple data preparation and advanced transformation logic, either through visual interfaces or code-based options like SQL, Python, or Spark. Not all users interact with data the same way: Business analysts may prefer drag-and-drop transformations, while data engineers often need programmatic control and reusable logic.

Modern ETL platforms increasingly support ELT patterns, where raw data is loaded first and transformed within the target warehouse for greater scalability.
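The ELT pattern can be sketched in a few lines. The example below uses Python's built-in `sqlite3` module as a stand-in for a cloud warehouse, which is an assumption made purely for illustration. The table and column names are hypothetical.

```python
import sqlite3

# In-memory SQLite database standing in for a cloud data warehouse.
conn = sqlite3.connect(":memory:")

# Load: land the raw records as-is, with every field kept as text.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1", "19.99", "us"), ("2", "5.00", "DE")],
)

# Transform: clean and type the data inside the warehouse using SQL.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           CAST(amount AS REAL)      AS amount,
           UPPER(country)            AS country
    FROM raw_orders
    """
)

rows = conn.execute(
    "SELECT order_id, amount, country FROM orders ORDER BY order_id"
).fetchall()
print(rows)  # [(1, 19.99, 'US'), (2, 5.0, 'DE')]
```

Because the raw table is preserved, the transformation can be rewritten and re-run later without extracting from the source again.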

Evaluate whether the platform supports modular transformations, version control, testing, and reusability. Advanced features like window functions, joins across large data sets, and support for semi-structured data (JSON, Parquet) are also important as data complexity grows.
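As one illustration of handling semi-structured data, the sketch below flattens a nested JSON record into dotted column names, a common step before loading into a tabular target. The `flatten` helper and the sample event are hypothetical. Real platforms may handle nesting differently, for example by keeping arrays in a separate child table.

```python
import json


def flatten(record, parent_key="", sep="."):
    """Flatten nested dicts into a single level with dotted keys."""
    flat = {}
    for key, value in record.items():
        full_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, full_key, sep))
        else:
            # Lists and scalars are kept unchanged in this simple sketch.
            flat[full_key] = value
    return flat


event = json.loads(
    '{"id": 7, "user": {"name": "Ada", "geo": {"country": "GB"}}, "tags": ["a", "b"]}'
)
flat = flatten(event)
print(flat)
# {'id': 7, 'user.name': 'Ada', 'user.geo.country': 'GB', 'tags': ['a', 'b']}
```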

Strong transformation capabilities ensure your downstream analytics, machine learning models, and operational reports are built on clean, consistent, and trustworthy data.

Consider the platform’s ability to handle large data sets, high concurrency, and complex workflows without performance bottlenecks.

As data volumes grow and more teams rely on shared pipelines, scalability becomes a critical differentiator. A scalable ETL platform should automatically adjust compute resources to meet workload demands without requiring manual intervention.

Performance also matters at the workflow level. Look for platforms that can efficiently process incremental updates, parallelize tasks, and optimize resource usage across pipelines. Support for both batch and streaming workloads is increasingly important, especially for organizations delivering near real-time dashboards or operational alerts.
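Task parallelism can be sketched with Python's standard library. In this example, `sync_source` is a hypothetical placeholder for one extract-and-load task, and the source names are made up. A managed platform would schedule such tasks for you, so treat this only as an illustration of the idea.

```python
from concurrent.futures import ThreadPoolExecutor


def sync_source(name):
    """Hypothetical stand-in for one extract-and-load task.

    Returns the source name and a pretend row count.
    """
    return name, len(name)


sources = ["crm", "erp", "billing"]

# Independent sources can be synced concurrently instead of one after another.
with ThreadPoolExecutor(max_workers=3) as pool:
    results = dict(pool.map(sync_source, sources))

print(results)  # {'crm': 3, 'erp': 3, 'billing': 7}
```

Threads suit I/O-bound work such as API calls and database reads. CPU-heavy transformations usually benefit more from pushing the work down to the warehouse or a distributed engine.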

Poor performance can delay insights, disrupt downstream systems, and erode trust in analytics. A scalable, high-performance ETL platform ensures your data pipelines remain reliable as usage expands across teams and use cases.

Extensive tools for logging, alerting, lineage, and error handling make it easier to operate pipelines at scale and maintain data reliability. As ETL workflows become more complex, visibility into pipeline health is essential for both data engineers and business stakeholders.

Effective observability includes real-time pipeline status, historical run logs, error diagnostics, and dependency tracking.

Data lineage capabilities help teams understand where data originates, how it’s transformed, and where it’s consumed—critical for troubleshooting, governance, and compliance.
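At its simplest, lineage is a dependency graph that can be walked to find every upstream input of a data set. The sketch below is a toy model with hypothetical data set names and does not show how any particular platform stores lineage.

```python
# Each data set maps to the data sets it is built from (hypothetical names).
LINEAGE = {
    "revenue_dashboard": ["orders_clean"],
    "orders_clean": ["raw_orders", "raw_customers"],
    "raw_orders": [],
    "raw_customers": [],
}


def upstream(dataset, graph):
    """Return every data set that feeds into `dataset`, directly or indirectly."""
    seen = []
    stack = list(graph.get(dataset, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.append(node)
            stack.extend(graph.get(node, []))
    return sorted(seen)


print(upstream("revenue_dashboard", LINEAGE))
# ['orders_clean', 'raw_customers', 'raw_orders']
```

When a dashboard number looks wrong, a traversal like this narrows the search to the tables that could have caused it.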

Alerting mechanisms should notify teams proactively when failures, delays, or anomalies occur, minimizing downtime and data gaps. Strong monitoring and observability reduce operational risk, improve trust in data, and enable teams to move from reactive firefighting to proactive pipeline management.
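Proactive alerting often pairs with automatic retries: transient failures are retried quietly, and a person is notified only when the pipeline gives up. Here is a minimal sketch of that behavior. The `run_with_retries` helper, the `alert` callback, and the flaky task are all hypothetical, and a real platform would route the alert to email, chat, or a paging tool.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")


def run_with_retries(task, max_attempts=3, base_delay=1.0, alert=print):
    """Run `task`, retrying with exponential backoff; alert on final failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            result = task()
            log.info("attempt %d succeeded", attempt)
            return result
        except Exception as exc:
            log.warning("attempt %d failed: %s", attempt, exc)
            if attempt == max_attempts:
                alert(f"pipeline failed after {max_attempts} attempts: {exc}")
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))


calls = {"n": 0}


def flaky_load():
    """Hypothetical load step that fails twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("warehouse unavailable")
    return "loaded"


# base_delay=0 keeps the demo instant; use a real delay in practice.
outcome = run_with_retries(flaky_load, base_delay=0)
print(outcome)  # loaded
```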

Enterprise-grade encryption, role-based access control, data masking, and compliance certifications (e.g., SOC 2, GDPR, HIPAA) are critical for regulated industries. As sensitive data moves across systems, ETL platforms play a central role in protecting information throughout the data lifecycle.

Evaluate how the platform handles data encryption in transit and at rest, credential management, and access auditing. Granular role-based access controls ensure users only interact with the data they’re authorized to see or modify.
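Role-based access and data masking can be illustrated with a small sketch. The roles, column names, and salt below are hypothetical, and hashing with a fixed salt is only one simple masking approach. Production platforms typically offer managed policies and secret storage instead.

```python
import hashlib

# Hypothetical mapping of roles to the columns each role may see.
ROLE_COLUMNS = {
    "analyst": {"order_id", "country"},
    "admin": {"order_id", "country", "email"},
}


def mask_email(email, salt="demo-salt"):
    """Replace an email with a short, deterministic, non-reversible token."""
    digest = hashlib.sha256((salt + email).encode("utf-8")).hexdigest()
    return digest[:12]


def view_for(role, row):
    """Return only the columns the given role is allowed to see."""
    allowed = ROLE_COLUMNS.get(role, set())
    return {k: v for k, v in row.items() if k in allowed}


row = {"order_id": 1, "country": "US", "email": "ada@example.com"}

print(view_for("analyst", row))  # {'order_id': 1, 'country': 'US'}
masked = dict(row, email=mask_email(row["email"]))
print(len(masked["email"]))  # 12
```

Because the token is deterministic, masked values can still be joined or counted across tables without exposing the underlying address.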

For organizations operating globally, compliance with regional regulations such as GDPR is essential, while healthcare and financial services may require HIPAA or other industry-specific certifications. A secure ETL platform not only protects data but also simplifies audits and builds confidence across compliance, security, and executive teams.

Even within SaaS, some vendors offer hybrid or dedicated deployment options for sensitive data environments.

Deployment flexibility is especially important for organizations with regulatory constraints, data residency requirements, or legacy on-premises systems. Hybrid deployment models allow data to be processed behind the firewall while still benefiting from cloud-based management and orchestration.

Dedicated or single-tenant options may appeal to enterprises with strict isolation or performance requirements. Additionally, consider how easily the platform integrates with existing cloud providers, identity systems, and networking configurations.

Flexible deployment options give organizations greater control over where and how data is processed, without sacrificing the scalability and ease of use associated with SaaS platforms.

Here’s a look at 10 SaaS ETL platforms that organizations are evaluating or adopting in 2026. These tools vary in specialization, scale, and ecosystem focus, but each is a strong contender in the modern data stack.

Overview:

Domo’s platform combines ETL capabilities with a broader data ecosystem that includes data integration, governance, analytics, and visualization. With a fully cloud-native architecture, Domo enables users to build, schedule, and monitor data pipelines that feed into dashboards and analytics applications.

Key strengths:

Why it’s a contender:

Domo is often chosen by organizations that want an all-in-one solution where data integration cleanly ties into analytics, reporting, and operational dashboards—reducing tool sprawl and accelerating insights.

Overview:

Fivetran is known for its fully managed data pipelines that automatically extract and load data into cloud data warehouses and lakes with minimal configuration. It emphasizes schema-aware connectors that adapt to source changes without manual intervention.

Key strengths:

Ideal use cases:

Teams prioritizing hands-off connectivity and reliability for their core business applications and operational data sources.

Overview:

Stitch Data is a simple, scalable ETL service that was acquired by Talend (now part of Qlik) and is widely used for rapid data replication to warehouses. It focuses on extracting and loading data, with transformation left to downstream tools or ELT processes.

Key strengths:

Ideal use cases:

Small to midsize teams onboarding data quickly into analytics environments.

Overview:

AWS Glue is a cloud-native ETL service within the Amazon Web Services ecosystem. It provides serverless data integration, cataloging, and transformation, tightly integrated with other AWS services.

Key strengths:

Ideal use cases:

Organizations heavily invested in AWS seeking a unified cloud data pipeline and cataloging experience.

Overview:

SnapLogic uses a visual, AI-assisted workflow builder to create and manage data and application integration pipelines. It supports both ETL and API-centric integration use cases.

Key strengths:

Ideal use cases:

Enterprises seeking flexible integration patterns that blend ETL with broader iPaaS requirements.

Overview:

Informatica’s cloud ETL offering is part of its broader Informatica Intelligent Cloud Services (IICS) suite. It combines data integration with master data management, data quality, and governance.

Key strengths:

Ideal use cases:

Large enterprises requiring extensive data quality, governance, and complex integration scenarios.

Overview:

Denodo provides a data virtualization platform that enables real-time integration across disparate sources without physically moving data. While different from traditional ETL, Denodo’s approach accelerates data access and analytics.

Key strengths:

Ideal use cases:

Organizations prioritizing real-time access, data abstraction, and minimizing data duplication across systems.

Overview:

Azure Data Factory (ADF) is Microsoft’s cloud ETL and data orchestration service. ADF provides a visual interface and code-based workflows to build complex pipelines across diverse data ecosystems.

Key strengths:

Ideal use cases:

Enterprises using Azure services that need scalable cloud data pipelines and orchestration.

Overview:

Oracle Data Integrator (ODI) is an enterprise data integration platform that supports high-performance bulk data movement and transformation. With both on-premises and cloud deployment options, ODI is widely used in complex enterprise environments.

Key strengths:

Ideal use cases:

Organizations entrenched in the Oracle ecosystem requiring scalable, high-throughput ETL.

Overview:

Airbyte is an open-source ETL platform that has gained popularity for its extensible connector framework and community-driven development. It supports custom connectors and flexible deployment—from self-hosted to cloud managed.

Key strengths:

Ideal use cases:

Teams that value open-source extensibility or need connectors outside traditional catalogs.

While all ten platforms above help you move and prepare data for analytics, they differ in focus areas:

Selecting the best ETL platform for your organization depends on multiple factors. Ask yourself:

Evaluating these questions in the context of the platforms above will help you narrow your choices.

Among the diverse SaaS ETL options available in 2026, Domo stands out for organizations that want a comprehensive, cloud-native platform that goes beyond data movement. While many tools specialize in extraction, replication, or transformation, Domo combines these capabilities with data warehousing, governance, visualization, and collaborative analytics in a single SaaS solution.

Domo eliminates the traditional boundaries between ETL and BI. Users can build pipelines, curate data sets, and instantly power dashboards, all within the same environment. This unification accelerates time to insight and reduces reliance on multiple point solutions.

Its scalable architecture supports both business users and data engineers, offering visual tools for rapid pipeline development as well as APIs and programmatic access for complex use cases.

With prebuilt connectors and flexible integration options, Domo enables enterprises to bring together operational data, cloud sources, files, and real-time streams—ensuring a holistic view of their business.

Enterprise-grade security, access controls, and governance features ensure that as your data grows in volume and importance, it remains protected and trustworthy.

In 2026, organizations seeking a complete cloud data platform (not just ETL) will find Domo a compelling choice that accelerates analytics while simplifying the data stack.

Contact us to see how Domo brings together data integration, transformation, governance, and analytics, so your teams spend less time managing pipelines and more time driving impact.
