MongoDB is one of the most widely adopted databases in the world, with more than 50,000 customers using it to power everything from customer-facing applications to large-scale operational systems. Its flexible, document-based model makes it easy to store and scale data—but that same flexibility creates challenges when it’s time to analyze, report on, or use that data elsewhere.

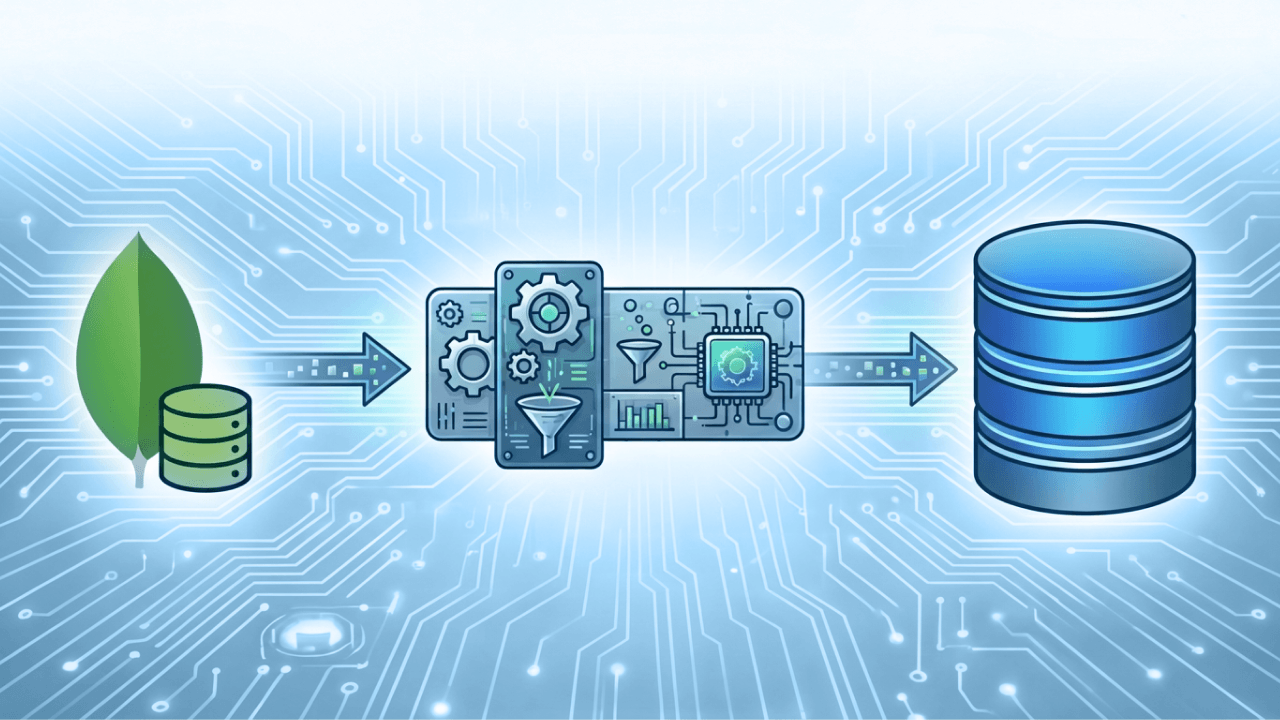

If you’re one of those MongoDB customers (or you’re considering becoming one), there’s one critical piece of the puzzle to get right: ETL. The right MongoDB ETL tool helps you move data out of MongoDB, transform it into analytics-ready formats, and load it into data warehouses, BI platforms, and machine learning pipelines.

In this blog, we’ll break down what MongoDB ETL tools are, the benefits of using them, what features to look for, and the best MongoDB ETL tools to consider in 2026 so you can choose the right solution for your data stack and business goals.

MongoDB is a popular document-oriented NoSQL database that stores data as flexible, JSON-like documents rather than rows and columns. A MongoDB ETL tool is software designed to extract data from MongoDB, transform it into a usable format, and load it into another system such as a data warehouse, analytics platform, or operational database. These tools help organizations move and prepare MongoDB data for reporting, analysis, and downstream applications without relying on custom scripts or manual processes.

Because MongoDB stores data in flexible, schema-less documents, effective data management requires tools that can handle nested structures, arrays, and changing data models. MongoDB ETL tools are built to interpret this complexity and support reliable data migration from MongoDB into relational databases, cloud data warehouses, or BI platforms.

In addition to moving data, MongoDB ETL tools perform ETL data transformation, such as flattening documents, normalizing fields, cleaning data, and mapping attributes to target schemas. This keeps MongoDB data accurate, consistent, and ready for analytics, reporting, and operational use—making ETL tools a critical component of any MongoDB data pipeline.
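To make "flattening documents" concrete, here is a minimal sketch in plain Python. The `flatten` helper and the sample `order` document are hypothetical illustrations, not part of any specific ETL product; real tools typically add type handling and naming rules on top of this idea.

```python
from __future__ import annotations

from typing import Any


def flatten(doc: dict[str, Any], parent: str = "", sep: str = ".") -> dict[str, Any]:
    """Collapse nested sub-documents into one level of dotted column names."""
    flat: dict[str, Any] = {}
    for key, value in doc.items():
        path = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict) and value:
            # Recurse into non-empty sub-documents.
            flat.update(flatten(value, path, sep))
        else:
            # Scalars, arrays, and empty sub-documents are kept as-is.
            flat[path] = value
    return flat


# Hypothetical order document, shaped like something MongoDB might store.
order = {
    "_id": "o-1",
    "customer": {"name": "Ada", "address": {"city": "Lyon"}},
    "total": 42.5,
}
row = flatten(order)
```

Each nested field becomes its own column (for example `customer.address.city`), which is the shape a relational table or warehouse expects.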

MongoDB is powerful for storing and managing flexible, document-based data—but that flexibility can make analytics, reporting, and downstream use cases more complex. A purpose-built MongoDB ETL tool helps bridge that gap by transforming raw, semi-structured data into reliable, analytics-ready assets. Below are the key benefits of using a dedicated ETL solution.

MongoDB data often lives in nested documents and arrays that aren’t immediately usable for analysis. An ETL tool flattens, normalizes, and cleans this data so teams can easily query it, build dashboards, and generate actionable insights. The result is faster answers, without requiring analysts or engineers to reshape data by hand every time a new question comes up.

A MongoDB ETL tool makes it easy to load transformed data into a centralized data warehouse, where it can be combined with data from CRMs, ERPs, marketing platforms, and other systems. This creates a single source of truth and allows organizations to analyze MongoDB data in the broader context of the business, improving consistency and trust in reporting.

Maintaining custom scripts for MongoDB extraction and transformation can be time-consuming and fragile. A dedicated ETL tool provides built-in scheduling, monitoring, and error handling, making pipelines more reliable and easier to scale as data volumes grow. This reduces operational overhead and lowers the risk of broken or incomplete data flows.

Machine learning models depend on clean, well-structured data. MongoDB ETL tools prepare data for machine learning use cases by enforcing consistent schemas, handling historical data, and checking data quality. This makes it easier for data science teams to train models, validate results, and deploy AI-driven applications with confidence.

ETL tools standardize how MongoDB data is transformed and loaded, providing consistent definitions, naming conventions, and data quality rules. This improves governance across teams and prevents discrepancies between reports, dashboards, and models that rely on MongoDB data.
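As a rough illustration of what a data quality rule can look like, the sketch below coerces each record to one agreed schema and reports what failed. The `SCHEMA` mapping, column names, and `conform` function are assumptions made up for this example.

```python
from __future__ import annotations

from datetime import datetime

# Hypothetical target schema: column name -> converter to the agreed type.
SCHEMA = {
    "order_id": str,
    "total": float,
    "created_at": datetime.fromisoformat,
}


def conform(record: dict) -> tuple[dict | None, list[str]]:
    """Coerce a record to SCHEMA; return (row, errors). row is None on failure."""
    row: dict = {}
    errors: list[str] = []
    for column, convert in SCHEMA.items():
        if record.get(column) is None:
            errors.append(f"{column}: missing")
            continue
        try:
            row[column] = convert(record[column])
        except (TypeError, ValueError):
            errors.append(f"{column}: cannot convert {record[column]!r}")
    return (row if not errors else None), errors


good, good_errors = conform(
    {"order_id": 7, "total": "19.90", "created_at": "2026-01-05T10:00:00"}
)
bad, bad_errors = conform({"order_id": "8", "total": "n/a"})
```

Rejected records come back with a list of reasons, so they can be routed to a quarantine table instead of silently skewing reports.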

By replacing custom development with configurable pipelines, MongoDB ETL tools allow teams to move faster. Analysts, data engineers, and business users can spend less time managing infrastructure and more time extracting value from data, which shortens the path from raw MongoDB documents to meaningful business outcomes.

Not all MongoDB ETL tools are created equal. Because MongoDB data is flexible, high-volume, and often used in real-time applications, the right ETL solution must go beyond basic extraction and loading. When evaluating tools, look for the following features for scalability, reliability, and long-term value.

Modern use cases often require access to real-time data, not just nightly batch jobs. A strong MongoDB ETL tool should support change data capture (CDC) or incremental syncs so new and updated records are available for analytics and downstream systems as soon as possible. This is especially important for operational dashboards and time-sensitive decision-making.
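The simplest form of incremental sync is a "watermark" on a last-modified timestamp. The sketch below runs against an in-memory list and assumes every document carries an `updated_at` field (a hypothetical convention, not something MongoDB adds for you). Against a live database the same filter would be expressed as a query, and true CDC would more likely rely on MongoDB change streams.

```python
from datetime import datetime


def incremental_extract(documents, last_synced):
    """Return documents changed after last_synced, plus the new watermark."""
    changed = [d for d in documents if d["updated_at"] > last_synced]
    # If nothing changed, keep the old watermark.
    new_watermark = max((d["updated_at"] for d in changed), default=last_synced)
    return changed, new_watermark


# Hypothetical source data; updated_at is an assumed application-level field.
docs = [
    {"_id": 1, "updated_at": datetime(2026, 1, 1)},
    {"_id": 2, "updated_at": datetime(2026, 1, 2)},
]

first_batch, watermark = incremental_extract(docs, datetime(2026, 1, 1))
second_batch, watermark_after = incremental_extract(docs, watermark)
```

Note that a timestamp watermark cannot see deleted documents, which is one reason log-based CDC is usually preferred when it is available.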

MongoDB’s nested and schema-less structure requires strong data transformation features. Look for tools that can flatten documents, handle arrays, normalize fields, apply business rules, and map data to structured schemas without extensive custom code. The more flexible and visual the transformation layer, the easier it is to adapt as data models evolve.
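Handling arrays usually means splitting one document into a parent row and several child rows. Here is a minimal sketch of that idea; the `explode` function, the `parent_id` and `position` column names, and the sample document are all hypothetical.

```python
def explode(doc, array_field, key="_id"):
    """Split a document into a parent row and one child row per array element."""
    parent = {k: v for k, v in doc.items() if k != array_field}
    children = []
    for position, item in enumerate(doc.get(array_field, [])):
        # Each child row points back to its parent and records its array index.
        child = {"parent_id": doc[key], "position": position}
        # Sub-document elements contribute their fields; scalars go in "value".
        child.update(item if isinstance(item, dict) else {"value": item})
        children.append(child)
    return parent, children


order = {
    "_id": "o-1",
    "total": 42.5,
    "items": [{"sku": "A", "qty": 2}, {"sku": "B", "qty": 1}],
}
order_row, item_rows = explode(order, "items")
```

The parent and child rows can then be loaded into two related tables, such as orders and order items, joined on `parent_id`.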

The best tools support both traditional batch processing and streaming ETL. This allows teams to handle high-velocity data flows while still supporting historical backfills and periodic loads. Streaming ETL is particularly valuable for event-driven applications, monitoring, and real-time analytics.
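One common way to serve both modes with the same loading code is micro-batching: group incoming records into small chunks, whether they come from a finite backfill or an unbounded stream. This is a sketch of the general technique under that assumption, not a description of how any particular tool works; `micro_batches` is a hypothetical helper.

```python
from itertools import islice


def micro_batches(events, size):
    """Yield lists of up to `size` events from any iterable, finite or not."""
    iterator = iter(events)
    # islice pulls at most `size` items; an empty list means the source is done.
    while batch := list(islice(iterator, size)):
        yield batch


# A finite backfill of seven records, loaded three at a time.
backfill = list(micro_batches(range(7), 3))
```

Because the function only pulls what it needs, the same loop works when `events` is a generator fed by a live stream.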

As MongoDB usage grows, ETL pipelines must scale with it. Look for tools designed to handle big data volumes efficiently, with features like parallel processing, cloud-native architecture, and performance optimization. This makes sure pipelines remain fast and reliable as data size and complexity increase.

A strong MongoDB ETL tool should easily connect with cloud data warehouses, BI platforms, and analytics tools. Native connectors reduce setup time, minimize maintenance, and deliver data in formats ready for analysis.

Production-grade ETL tools provide visibility into pipeline health through monitoring, alerts, and detailed logs. Built-in retry logic and error handling help prevent data loss and ensure consistent delivery, even when source systems change or experience disruptions.
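Retry logic is often implemented as exponential backoff: wait a little after the first failure, then longer after each subsequent one. The sketch below assumes transient failures surface as `ConnectionError`; the `with_retries` helper and the `flaky_load` stand-in are hypothetical.

```python
import time


def with_retries(task, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Run task(), retrying on ConnectionError with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except ConnectionError:
            if attempt == attempts:
                raise  # out of attempts: surface the error to monitoring
            sleep(base_delay * 2 ** (attempt - 1))


# Stand-in for a load step that fails twice before succeeding.
calls = {"count": 0}


def flaky_load():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return "loaded"


delays = []  # record the waits instead of actually sleeping
result = with_retries(flaky_load, sleep=delays.append)
```

Passing `sleep` as a parameter keeps the example fast to run and makes the backoff schedule easy to inspect.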

Consider how easy the tool is to configure, maintain, and scale over time. Low-code or no-code interfaces, reusable transformations, and clear documentation can significantly reduce the operational burden on data teams while speeding up deployment.

According to Forbes, forward-looking ETL tools will integrate with AI. Artificial intelligence can help ETL tools adapt to changes in source data and optimize data workflows. It can also enable “self-healing” pipelines that automatically fix or reroute around problems.

Beyond extracting and transforming data, a strong MongoDB ETL tool should make it easy to use the data once it’s loaded. Look for tools that connect directly with BI and analytics platforms or offer built-in visualization and analysis capabilities. This reduces the delay between data ingestion and insight, aligns transformed data with reporting needs, and helps teams validate data quality quickly. When ETL outputs are immediately usable for dashboards, ad hoc analysis, and machine learning workflows, organizations can move faster and get more ROI from their MongoDB data pipelines.

Choosing the right ETL tool for MongoDB can make or break your analytics, AI, and operational data workflows. Below are ten of the top tools in 2026—each strong in connectivity, transformation, scalability, and suitability for different use cases.

Domo is an end-to-end data platform that includes powerful ETL capabilities with native connectors for MongoDB. It allows organizations to extract, transform, and load MongoDB data into a unified platform where teams can visualize, analyze, and share insights without spinning up separate data warehouses.

Domo’s low-code transformation engine makes it easy to clean and reshape semi-structured MongoDB data into analytics-ready tables. With built-in scheduling, monitoring, and governance, Domo provides enterprise teams with reliable pipelines that support real-time data dashboards, automated workflows, and collaboration across business units.

Fivetran specializes in zero-maintenance data pipelines, and its MongoDB connector is designed to keep data synchronized with minimal engineering overhead. Once connected, Fivetran automatically handles schema changes and incremental updates, making it ideal for teams that want reliable, real-time data movement without scripting.

Fivetran integrates with major cloud data warehouses like Snowflake, BigQuery, and Redshift, and supports automated transformation via dbt. Fivetran’s approach enables analytics teams to focus on insights rather than upkeep, while also providing visibility into pipeline health and data freshness through its intuitive dashboard.

Informatica remains a leader in enterprise data integration. PowerCenter (for on-premises deployments) and Informatica Cloud (iPaaS) support MongoDB ETL alongside traditional relational sources. Their visual transformation tools are well suited to complex ETL workflows, including data cleansing, enrichment, and orchestration across hybrid environments.

Informatica excels in governance, lineage tracking, and security—important for regulated industries. With broad connector libraries and support for big data platforms, Informatica enables organizations to build scalable, governed pipelines that feed analytics, machine learning, and operational reporting systems.

Talend provides a flexible, open-source-friendly ETL platform with strong support for MongoDB. Talend Data Integration includes drag-and-drop jobs for extracting data, performing complex data transformation, and loading into targets like data warehouses or lakes. It also supports real-time streaming via Kafka and offers quality and governance components.

Talend’s modular design makes it suitable for both small teams and large enterprises, and its metadata management features help maintain data consistency as schemas evolve. The platform also integrates with cloud services and big data ecosystems.

Pentaho Data Integration (PDI) is a versatile ETL and ELT platform that works well with MongoDB, especially for organizations with multi-source pipelines. PDI’s visual designer and wide adapter library allow developers to build complex jobs that extract from MongoDB collections, apply business logic, and deliver to BI or data lake environments. Because it supports both batch and streaming ETL, PDI is suitable for real-time reporting and historical processing alike. Its analytics and reporting layers also allow teams to operationalize insights directly within the platform.

MongoDB offers built-in tools like mongoexport, mongodump, and MongoDB Atlas Data Lake with aggregation pipelines that can act as lightweight ETL mechanisms. While not as fully featured as third-party ETL platforms, these native tools are excellent for simple extraction and transformation tasks, especially in cloud-native MongoDB Atlas environments. You can embed transformation logic using MongoDB’s aggregation framework or use Atlas Triggers for near-real-time workflows. These tools are cost-effective and tightly integrated with MongoDB’s core capabilities.
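For a sense of what transformation logic in the aggregation framework looks like, here is a small pipeline written as a Python list of stages. The stage operators (`$match`, `$unwind`, `$project`, `$out`) are standard MongoDB aggregation stages; the `orders` collection, its field names, and the `orders_flat` output collection are hypothetical.

```python
# Hypothetical pipeline: one flat output row per line item of a shipped order.
pipeline = [
    # Keep only shipped orders.
    {"$match": {"status": "shipped"}},
    # Emit one document per element of the items array.
    {"$unwind": "$items"},
    # Pull nested fields up into flat, analytics-friendly columns.
    {
        "$project": {
            "_id": 0,
            "order_id": "$_id",
            "sku": "$items.sku",
            "qty": "$items.qty",
            "city": "$customer.address.city",
        }
    },
    # Write the result to another collection; $out must be the last stage.
    {"$out": "orders_flat"},
]

# With a driver such as PyMongo, this would typically be run as
# db.orders.aggregate(pipeline), where db is a connected database handle.
```

Because the work runs inside the database, this approach suits simple reshaping jobs, while cross-system joins and scheduling are better left to a dedicated ETL tool.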

Stitch, now part of the Talend family, offers a straightforward, developer-friendly ETL experience with a strong MongoDB connector. Stitch is designed for quick setup and minimal configuration, making it ideal for small to medium teams that need reliable data replication into warehouses like Snowflake or Redshift. Stitch handles incremental syncs and schema drift, and because it’s part of Talend’s ecosystem, teams can later grow into more advanced transformation and governance capabilities. Stitch strikes a balance between simplicity and scalability.

Airbyte is an open-source ELT platform that’s rapidly gaining traction for its extensibility and community-built connectors, including support for MongoDB. Airbyte allows teams to centralize extraction and loading while pairing with transformation tools like dbt.

Its modular architecture supports both on-premise and cloud deployments, and its connector framework makes it easy to add custom sources and destinations. Airbyte’s real-time CDC capabilities and visibility into pipeline performance make it attractive for teams building data stacks without vendor lock-in.

Matillion provides cloud-native ETL/ELT with a focus on simplicity and performance for modern data warehouses. Its visual job designer makes building MongoDB pipelines intuitive, while support for orchestration, environment promotion, and parameterization suits enterprise needs.

Matillion also supports real-time workflows and integrates with tools like dbt and Snowflake. With strong scheduling and logging, teams can maintain reliable pipelines that bring MongoDB data into analytics-ready schemas quickly.

Hevo Data offers a fully managed data pipeline service with drag-and-drop simplicity and near-real-time syncs from MongoDB to destinations like BigQuery, Snowflake, and Redshift. Hevo handles complex schema changes and automates ETL data transformation on the fly, allowing teams to define transformation logic without heavy engineering effort.

Real-time data updates ensure analytics teams always work with current information. Hevo’s monitoring and alerting features also give visibility into pipeline health and performance, making it a strong choice for fast-moving organizations.

As MongoDB continues to power more applications and data-driven businesses, the ability to move and transform that data effectively becomes just as important as how it’s stored. The right ETL solution makes sure MongoDB data doesn’t stay siloed, but instead becomes accessible, trusted, and ready to drive analytics, machine learning, and operational decisions.

Domo supports MongoDB ETL by providing native connectivity, flexible transformation capabilities, and an end-to-end data platform to unify MongoDB data with the rest of your business data. With built-in automation, governance, and real-time analytics, Domo helps teams move beyond merely extracting data to turn MongoDB data into usable insights without the complexity of managing separate tools.

Ready to see how Domo can simplify MongoDB ETL and speed up access to information you can build on? Explore how Domo helps teams connect, transform, and analyze MongoDB data, all in one platform.